Table of Contents

- Stage-Based Selection Criteria (What Matters at Each Phase)

- 30-Day Operating Plan

- Founder Scenarios and Recommended Execution Models

- Common Mistakes and Fast Fixes

- FAQ

Most startup teams choose a website platform under pressure. A launch deadline is close, resources are limited, and the immediate goal is simple: publish fast, start collecting demand, and move forward.

That pressure creates predictable mistakes. Founders choose tools based on homepage polish or short feature lists, then run into problems when they need reliable experiments, cleaner analytics, or faster cross-team updates.

The right platform decision should support more than day-one publishing. It should help your team ship consistently, improve conversion quality every week, and avoid expensive rebuilds when growth complexity increases.

This guide gives you a practical way to evaluate free website options in 2026 and turn that choice into a repeatable operating system. Instead of chasing tool hype, you will use stage-based criteria, implementation checklists, and decision metrics tied to real startup outcomes.


Quick Strategic Takeaways


- Evaluate platforms by operating speed and measurement reliability, not only visual templates.

- Match platform choice to your current startup stage and team composition.

- Keep architecture stable and test one high-impact variable per week.

- Use proof, pricing orientation, and CTA clarity as your first conversion levers.

- Add accessibility and mobile checks to every release gate.

- Document winning patterns so campaign quality compounds over time.

- Scale traffic only after message quality and downstream lead signals stabilize.

Why Startup Teams Struggle With Free Website Platforms

Free tools are not the problem by themselves. The problem is choosing a tool without defining the workflow it needs to support.

Most early teams treat platform selection as a one-time design choice, but startup websites are operational assets. Messaging changes, channels shift, offers evolve, and proof needs constant updates. If your platform cannot support these updates quickly, your growth loop slows down.

Three failure patterns appear across early-stage teams. First, launch speed is prioritized without planning for iteration speed. Second, teams use one generic page for all traffic sources, which weakens conversion quality. Third, experiments are run without clear tracking standards, so results cannot be trusted.

A better model starts with an operational question: can this platform support weekly improvement cycles for the next six months without forcing technical bottlenecks? If the answer is unclear, the short-term speed benefit may create long-term execution drag.

Stage-Based Selection Criteria (What Matters at Each Phase)

The best platform depends on startup stage. A pre-product team proving demand has different constraints than a team managing multiple channels and sales handoffs.

Pre-seed and validation stage

At validation stage, your priority is message-market clarity. You need to publish quickly, run simple tests, and gather clean signals from early users.

Focus on fast template deployment, easy section editing, mobile-safe defaults, and lightweight form setup. If non-technical teammates cannot update key page sections without escalation, your learning loop will be too slow.

Avoid over-indexing on advanced features that you will not use in the next 90 days. Speed of iteration is more valuable than feature breadth when your core goal is to validate positioning and offer logic.

Early traction stage

Once acquisition channels diversify, structural control becomes critical. You need channel-specific variants, clearer attribution, and a repeatable release process.

At this stage, prioritize modular page sections, reusable blocks, and easy duplication by audience segment. These capabilities reduce rework and preserve brand consistency while you adapt narratives by intent.

You should also evaluate how quickly your team can update proof, FAQs, and form logic based on real objections. This is where many free tools fail if content operations are not considered during selection.

Growth stage

When your startup has more contributors and higher traffic volume, governance matters as much as publishing speed. You need controlled update workflows, consistent quality standards, and documented experiment decisions.

Look for platform behavior that supports ownership clarity, release gating, and reliable analytics hygiene. Growth teams lose time when every update requires manual QA recovery or ad-hoc fixes.

At this stage, the strongest platform is the one that reduces coordination cost while preserving execution velocity.

Free vs Low-Cost: A Practical Decision Model

The free-versus-paid debate is often framed incorrectly. The real decision is not subscription price versus no price. The real decision is whether a platform can support your revenue workflow without hidden operational costs.

A startup can run effective campaigns on free plans when page structure is simple and team discipline is high. Problems appear when teams treat free plans as unlimited infrastructure and ignore maintenance overhead.

Use a total operating cost lens with five components:

- tool subscription cost

- team hours lost to manual workarounds

- launch delays from restricted workflows

- analytics quality loss from fragile tracking

- conversion loss from slow iteration cycles

In many cases, free plans are ideal for validation and early traction, then low-cost upgrades become rational when they remove recurring bottlenecks. This transition should be planned, not reactive.
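The five-component lens above can be turned into a rough monthly estimate. The sketch below is illustrative only: the field names, the hourly rate, and every sample figure are assumptions made for the example, not numbers from any real platform or plan.

```python
from dataclasses import dataclass


@dataclass
class OperatingCost:
    """Estimated monthly cost inputs for one platform option."""
    subscription: float      # tool subscription cost, in dollars
    workaround_hours: float  # team hours lost to manual workarounds
    delay_hours: float       # hours lost to launch delays from restricted workflows
    analytics_loss: float    # estimated dollar cost of fragile tracking
    conversion_loss: float   # estimated dollar value lost to slow iteration

    def total(self, hourly_rate: float) -> float:
        """Convert lost hours to dollars and sum all five components."""
        hours_cost = (self.workaround_hours + self.delay_hours) * hourly_rate
        return self.subscription + hours_cost + self.analytics_loss + self.conversion_loss


# Hypothetical comparison: a free plan with workarounds vs. a low-cost plan.
free_plan = OperatingCost(0, workaround_hours=10, delay_hours=4,
                          analytics_loss=150, conversion_loss=300)
paid_plan = OperatingCost(29, workaround_hours=2, delay_hours=0,
                          analytics_loss=0, conversion_loss=100)

HOURLY_RATE = 50.0  # assumed blended team rate
print(free_plan.total(HOURLY_RATE))  # 1150.0
print(paid_plan.total(HOURLY_RATE))  # 229.0
```

With these made-up inputs the "free" option is the more expensive one once lost hours are priced in. The estimates matter less than the habit of revisiting them on a schedule, so the upgrade decision is planned rather than reactive.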

The Startup Platform Scorecard

A scorecard keeps selection objective. Without one, teams overvalue visual demos and undervalue execution reliability.

Use these weighted criteria when comparing platforms:

- 25%: editing and publishing speed for non-technical users

- 20%: conversion architecture control (sections, CTAs, forms)

- 20%: mobile performance and baseline UX quality

- 15%: tracking and attribution reliability

- 10%: collaboration workflow and ownership clarity

- 10%: long-term maintainability and migration risk

Set the weights before vendor comparisons to reduce bias. Once the scorecard is complete, pick the platform that best supports your operating model, not the one with the longest feature page.
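As a minimal sketch, the weighted criteria above can be scored in a few lines. The weights come from the list; the 0-5 rating scale, the criterion keys, and the two sample platforms are assumptions for illustration.

```python
# Weights from the scorecard above; they sum to 1.0.
WEIGHTS = {
    "editing_speed": 0.25,
    "conversion_control": 0.20,
    "mobile_ux": 0.20,
    "tracking": 0.15,
    "collaboration": 0.10,
    "maintainability": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Return a weighted score from per-criterion ratings on an assumed 0-5 scale."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return round(sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS), 2)


# Hypothetical ratings for two unnamed platforms.
platform_a = {"editing_speed": 5, "conversion_control": 3, "mobile_ux": 4,
              "tracking": 3, "collaboration": 2, "maintainability": 3}
platform_b = {"editing_speed": 3, "conversion_control": 4, "mobile_ux": 4,
              "tracking": 4, "collaboration": 4, "maintainability": 4}

print(weighted_score(platform_a))  # 3.6
print(weighted_score(platform_b))  # 3.75
```

In this example, platform A edits fastest but platform B wins overall because it is stronger on tracking and collaboration. Fixing `WEIGHTS` before rating any vendor is what keeps the comparison from bending toward a favorite demo.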

Conversion Architecture for Startup Websites

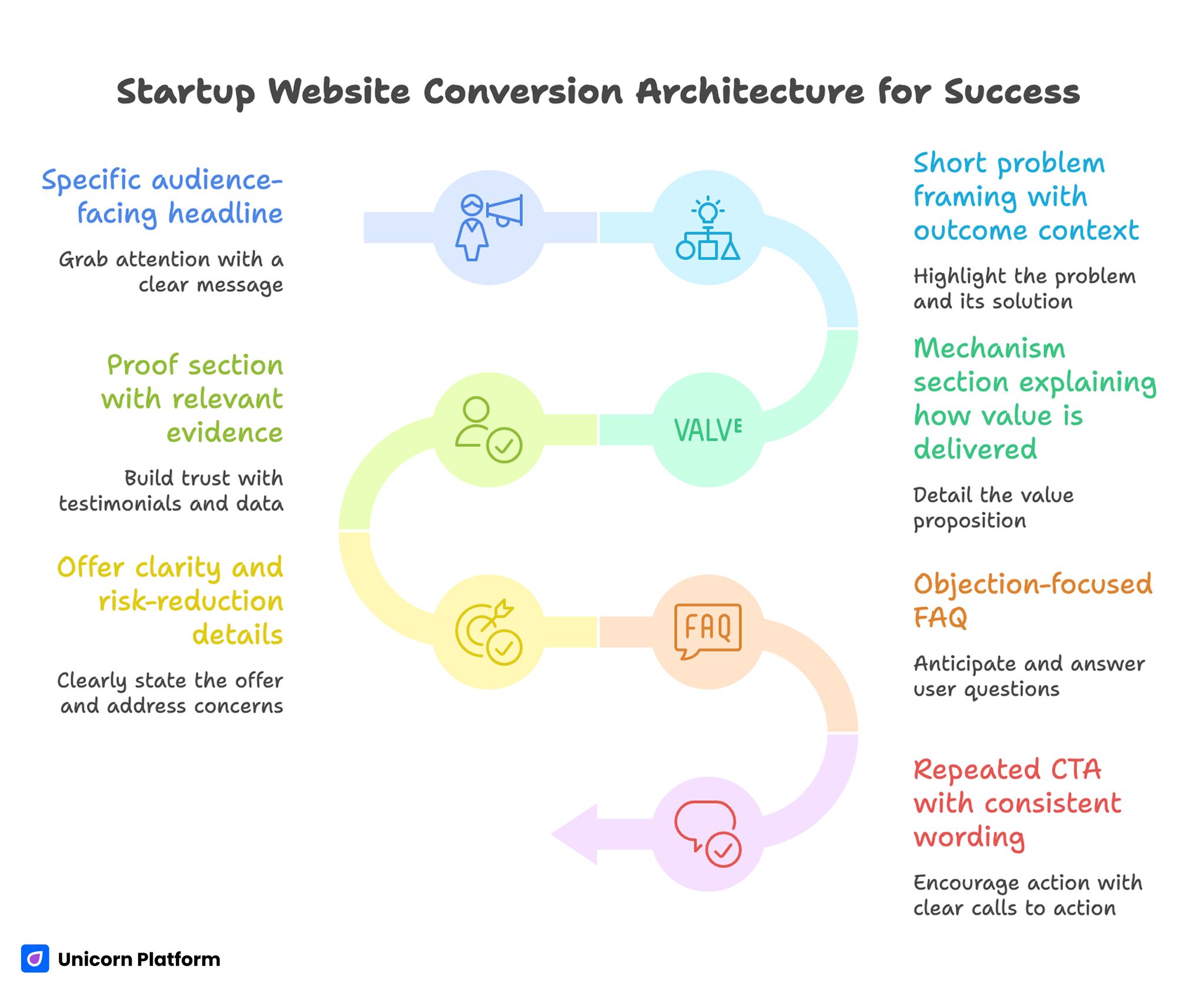

Startup Website Conversion Architecture for Success

Startup websites fail when they ask users to decide before value is clear. Strong architecture solves this by sequencing information to reduce uncertainty in the right order.

Research on landing page performance also supports this structured approach. According to HubSpot’s landing page best practices research, pages that clearly sequence value explanation, proof, and action prompts tend to convert significantly better than pages that present information without a clear decision flow.

Use a conversion-first structure for most startup campaigns:

- specific audience-facing headline

- short problem framing with outcome context

- mechanism section explaining how value is delivered

- proof section with relevant evidence

- offer clarity and risk-reduction details

- objection-focused FAQ

- repeated CTA with consistent wording

This structure is flexible across industries because it follows decision psychology, not trend aesthetics. It also creates clear test points when conversion declines.

If your team needs a clean baseline before deep optimization, this practical guide on creating free landing pages with Unicorn Platform is a useful reference for setup speed.

Message Quality Standards That Improve Qualified Leads

Copy quality has a larger effect on startup conversion than most teams expect. Generic wording can produce clicks while reducing downstream lead quality, which creates false confidence.

User behavior research supports the importance of clear and structured messaging. Studies from the Nielsen Norman Group show that people typically scan web pages in an F-shaped reading pattern, which means headlines, short sections, and clear value statements play a major role in whether visitors understand the offer quickly.

Use three message standards across every important page. First, every core claim should include mechanism, not only aspiration. Second, CTA text should describe the next step clearly. Third, each high-impact claim needs supporting evidence nearby.

For example, replace "grow faster" language with precise, operational value statements. Tell users what changes in their workflow, how quickly it changes, and what they should do next.

This approach improves conversion quality because it helps unfit users self-filter early. That protects sales time and improves handoff efficiency across marketing and product teams.

User-generated content is especially valuable here. Screenshots of real landing pages, shared publicly by founders, often reveal how vague claims lead to confusion in comments and feedback threads. When founders revise messaging based on that feedback by adding specifics, timelines, or constraints, lead quality tends to improve. This creates a fast feedback loop between audience perception and message clarity.

Proof Design That Carries the Decision

Proof is often treated as a design block, but it should be treated as a decision accelerant. Users do not need more claims. They need credible signals that reduce perceived risk.

Build proof in layers with clear jobs. Outcome proof demonstrates measurable impact. Process proof shows how results are produced. Credibility proof confirms that the team can execute reliably.

A practical proof stack can include short customer quotes with context, implementation examples, onboarding timeline clarity, and role-specific trust markers. Keep proof close to the CTA and refresh it on a fixed cadence.

When teams simplify page structure and tighten proof placement, conversion usually improves without large visual changes. This pattern is consistent across both paid and organic startup acquisition flows.

For teams tuning narrative flow and section hierarchy, this article on simple landing-page design for startups is a useful companion.

Form Strategy: Reduce Friction Without Killing Lead Quality

Short forms typically increase completion, but shorter is not always better if qualification disappears. The right model is progressive qualification across the first interaction and follow-up sequence.

First-touch forms should request only routing essentials. Useful fields include name, work email, role, and one intent or use-case selector.

Collect deeper context after confirmation, when user commitment is higher. Budget windows, technical stack details, and implementation complexity can be gathered in follow-up messages or onboarding forms.

This design balances top-funnel conversion and downstream sales quality. It also reduces abandonment caused by early friction.

Channel-Specific Variants: One System, Multiple Paths

Running all traffic into one page is a common startup shortcut. It saves time initially, but quality usually drops once paid, organic, and referral traffic arrive with different intent levels.

Create source-aware page variants that share the same design system and core proof library. Keep the structure consistent, then adjust narrative emphasis by source intent.

Cold paid traffic usually needs stronger context and clearer risk reduction early. Warm referral traffic often needs faster action pathways and concise trust confirmation.

Document variant logic before launch so changes are deliberate and comparable. Without this step, teams make random edits that are difficult to measure and maintain.

For prelaunch and demand-capture programs, this guide on effective waitlist landing pages helps map acquisition intent to post-signup behavior.

Video as a Conversion Layer, Not a Decoration Layer

Video can improve startup conversion, but only when tied to a specific decision moment. Generic explainer videos often raise watch time without improving action rates.

Use decision-mapped video placements:

- top section: short value explanation for cold traffic

- mid section: feature or workflow demo tied to a core objection

- bottom section: concise founder message near final CTA

Each video should support one user decision. Keep scripts specific and connect them to measurable outcomes.

Measure impact beyond play rate. Compare CTA click-through, form completion, and assisted conversions by traffic source.

Accessibility and Keyboard Navigation as Growth Levers

Accessibility is a conversion variable, not only a compliance task. When navigation and forms are difficult for keyboard-first users, hidden drop-off increases.

Add an accessibility release gate for every major publish. Include tab-order checks, visible focus states, descriptive labels, and clear error messaging.

These checks improve usability for all users, including those on constrained devices or in high-friction browsing conditions. Accessibility improvements often create measurable conversion gains because they reduce interaction failures.

Unicorn Platform workflows can standardize many of these defaults in reusable templates. Standardization reduces QA overhead and protects quality as page volume grows.

Analytics Hygiene: The Most Overlooked Growth System

Many startup teams run experiments with weak tracking discipline. When metrics are inconsistent, teams cannot separate signal from noise and waste effort on false conclusions.

Create a simple analytics framework before scaling tests:

- define one primary metric per page goal

- define one secondary quality metric per experiment

- standardize event naming across variants

- document traffic source taxonomy

- run tracking validation before each launch

This framework turns weekly edits into reliable learning. It also improves cross-team trust in experiment outcomes.
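One way to enforce the naming and taxonomy items in the list above is a small pre-launch check. This is a sketch under stated assumptions: the `<object>_<action>` snake_case convention and the source list are hypothetical examples, not a standard required by any analytics tool.

```python
import re

# Assumed convention: lowercase snake_case "<object>_<action>", e.g. "form_submit".
EVENT_NAME = re.compile(r"[a-z]+(_[a-z]+)+")

# Assumed traffic source taxonomy; replace with the team's documented list.
ALLOWED_SOURCES = {"paid_search", "paid_social", "organic", "referral", "email"}


def validate_event(name: str, source: str) -> list[str]:
    """Return a list of problems; an empty list means the event passes."""
    problems = []
    if not EVENT_NAME.fullmatch(name):
        problems.append(f"event name '{name}' is not snake_case <object>_<action>")
    if source not in ALLOWED_SOURCES:
        problems.append(f"source '{source}' is not in the documented taxonomy")
    return problems


print(validate_event("form_submit", "organic"))  # []
print(validate_event("FormSubmit", "facebook"))  # two problems reported
```

Running a check like this against every variant before launch catches the most common cause of untrustworthy comparisons: the same action recorded under two different names.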

If your team wants a lightweight launch-to-measure rhythm, this three-step startup site workflow is useful for aligning publishing with measurement discipline.

Internal Content System for Organic Growth

Free startup platforms can perform well in organic channels when content architecture supports decision flow. Publishing disconnected blog posts without conversion pathways rarely compounds.

Use a cluster model with clear intent levels. Top-of-funnel content should answer broad questions and route readers to focused solution pages. Mid-intent content should compare approaches and reduce practical objections. High-intent pages should provide proof, offer clarity, and direct next actions.

Internal links should support user decisions, not keyword stuffing. Keep links contextual and limit each paragraph to one deep reference when needed.

A strong cluster system increases discoverability while preserving conversion context. Over time, this model improves both traffic quality and pipeline predictability.

Security, Ownership, and Reliability for Lean Teams

Website reliability is often ignored until campaigns scale. A broken form, outdated policy, or inaccessible CTA can damage acquisition efficiency quickly.

Define ownership across critical areas: content updates, proof refreshes, tracking integrity, and release approvals. Assign one accountable person per area even in small teams.

Use a weekly reliability checklist:

- form delivery and notification checks

- CTA and redirect validation

- mobile rendering checks on key devices

- policy and pricing consistency review

- tracking event sanity check

This routine prevents avoidable conversion losses and reduces emergency fixes during campaign windows.

14-Day Startup Launch Sequence

A structured launch sequence helps teams move quickly without skipping quality controls. The objective is to publish early and learn fast while protecting baseline performance.

Day 1-3: baseline publish

Build one focused page with one clear goal, one primary CTA, and one proof section. Configure core analytics events and validate end-to-end form routing before traffic activation.

Day 4-7: clarity improvements

Update headline specificity, tighten mechanism copy, and improve CTA wording based on early behavior data. Remove sections that draw attention but do not improve decision quality.

Day 8-10: trust and objection coverage

Add context-rich proof near the CTA and publish FAQ answers to the most common friction points. Keep changes targeted so attribution remains clear.

Day 11-14: first controlled test

Run one A/B test on a high-impact variable such as hero framing or proof placement. Record hypothesis, results, and decision in a shared log.

30-Day Operating Plan

Week 1: architecture and instrumentation

Stabilize page structure, ensure event coverage for key actions, and align source tagging standards. This foundation protects data quality before experimentation volume increases.

Week 2: messaging and proof optimization

Test one messaging angle and one proof position while holding all other elements stable. Evaluate winners with both conversion and lead-quality metrics.

Week 3: form and handoff quality

Simplify first-touch forms, improve confirmation messaging, and reduce follow-up delay. This stage often improves lead-to-opportunity conversion without additional traffic.

Week 4: source-aware variant rollout

Launch one channel-specific variant and compare performance against baseline by source and device. Use results to update reusable templates for future campaigns.

60-Day Compounding Workflow

Days 1-20 should focus on removing structural friction and establishing repeatable QA. Days 21-40 should expand controlled testing and refine segment-specific messaging.

Days 41-60 should consolidate winning patterns into standard modules and document ownership rules. By this point, your team should have a clear release cadence and stronger cross-functional alignment.

The goal of 60-day execution is not maximum experimentation volume. The goal is to build a reliable operating system that improves with each cycle.

90-Day Scale Readiness Framework

Before increasing budget or campaign volume, validate that core quality signals are stable. Growth should amplify proven systems, not unresolved conversion issues.

Run a 90-day readiness review across five categories:

- message consistency by source

- proof freshness and relevance

- lead-quality stability by channel

- form reliability and response-time quality

- tracking integrity and decision confidence

If two or more categories are unstable, pause scaling and fix foundations first. This prevents expensive growth cycles built on weak conversion mechanics.
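The pause rule above is simple enough to encode directly. In this sketch the category keys are shorthand for the five review areas, and treating a category with no recorded status as unstable is an assumption added for safety.

```python
READINESS_CATEGORIES = (
    "message_consistency",
    "proof_freshness",
    "lead_quality_stability",
    "form_reliability",
    "tracking_integrity",
)


def scale_decision(stable: dict[str, bool]) -> str:
    """Apply the rule above: two or more unstable categories means pause."""
    # Assumption: a category with no recorded status counts as unstable.
    unstable = [c for c in READINESS_CATEGORIES if not stable.get(c, False)]
    if len(unstable) >= 2:
        return "pause: fix " + ", ".join(unstable)
    return "proceed"


review = {
    "message_consistency": True,
    "proof_freshness": False,
    "lead_quality_stability": True,
    "form_reliability": True,
    "tracking_integrity": False,
}
print(scale_decision(review))  # pause: fix proof_freshness, tracking_integrity
```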

Founder Scenarios and Recommended Execution Models

Choosing a platform is easier when mapped to a real operating context. Abstract feature checklists are useful, but founder teams make better decisions when they evaluate tools against weekly execution patterns.

The scenarios below are practical starting points, not rigid templates. Use them to align platform choice with team capacity, acquisition model, and decision cadence.

Scenario 1: Solo founder validating one core offer

A solo founder usually needs one focused page, one proof module, one CTA path, and one follow-up flow. The priority is learning speed, so workflow should minimize setup friction and reduce context switching between writing, publishing, and checking data.

In this model, weekly progress matters more than elaborate architecture. Ship one page version quickly, review behavior every seven days, and make one meaningful change each cycle. Fast consistency usually outperforms occasional major redesigns.

Scenario 2: Two to four person team running paid and organic channels

When multiple channels are active, one page is rarely enough. A small team should maintain a shared component system and create source-aware variants with controlled messaging differences.

Operationally, this team should assign one owner for copy updates, one for proof maintenance, and one for tracking integrity. Even light ownership boundaries prevent decision bottlenecks and reduce launch-week errors.

Scenario 3: Founder-led B2B sales with higher-consideration buyers

B2B startup funnels often require deeper proof and stronger objection handling before conversion. The website should support structured decision paths that connect value claims to real implementation context.

In this scenario, form quality matters as much as volume. Progressive qualification and precise follow-up expectations help sales teams prioritize fit and reduce time spent on low-intent inquiries.

Scenario 4: Product-led startup with freemium onboarding

Product-led funnels depend on low-friction actions, but clarity still drives activation quality. Users need to understand who the product is for, what first success looks like, and how long setup takes.

This model performs best when landing pages and onboarding messages use consistent language. A disconnect between pre-signup copy and in-product expectations can increase churn in the first week.

Migration Playbook: Moving from Free Setup to Scalable Operations

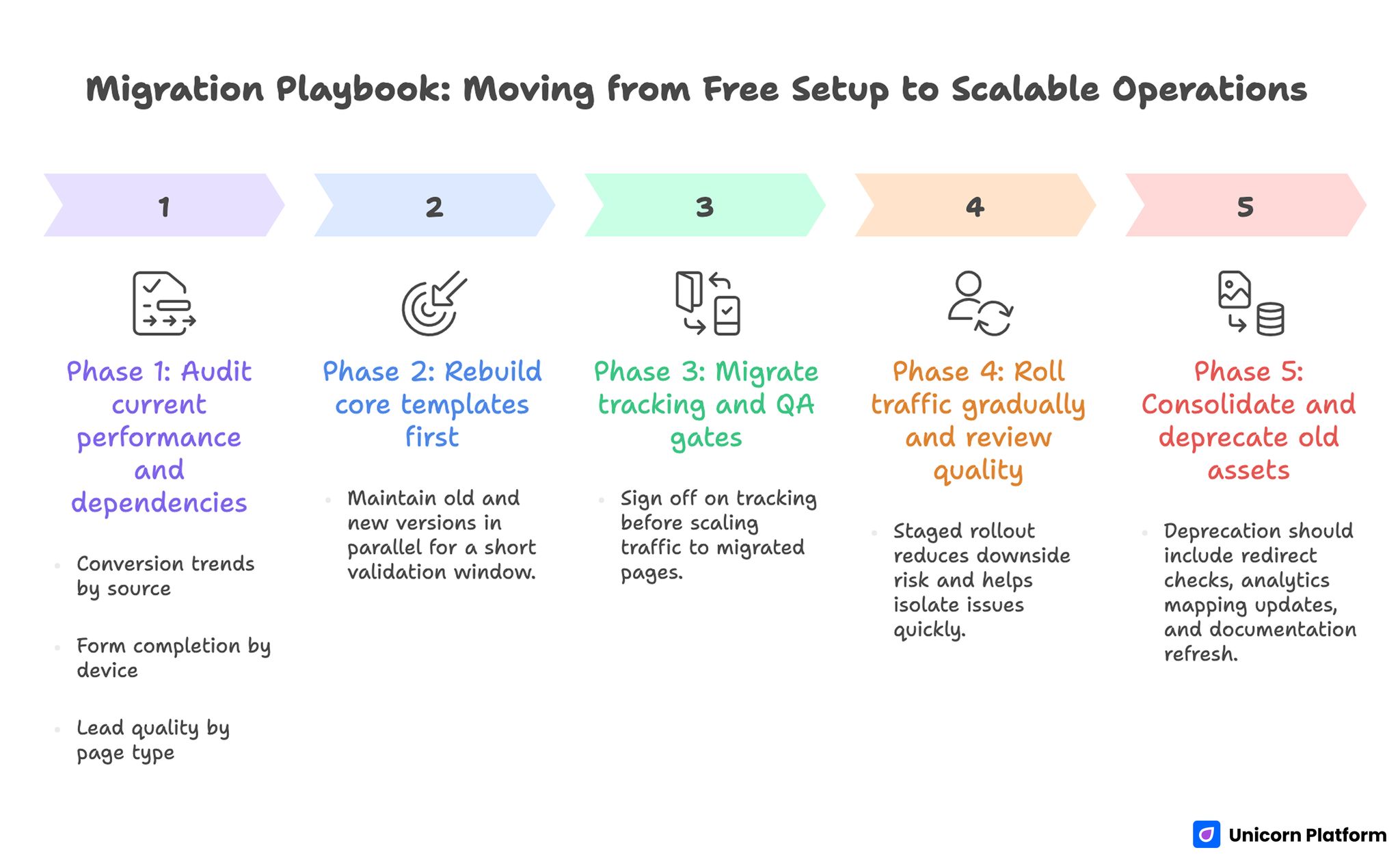


A migration does not have to be a full rebuild. Most startups can transition in controlled phases if they separate structural changes from message changes and protect tracking continuity.

The biggest migration risk is changing too many variables at once. When layout, copy, forms, and analytics all change together, teams lose attribution confidence and struggle to explain conversion movement.

Phase 1: Audit current performance and dependencies

Before moving anything, map your active pages, traffic sources, key events, and follow-up routes. Document what is already working so critical behaviors are preserved during the transition.

This audit should include conversion trends by source, form completion by device, and lead quality by page type. Clear baseline metrics are essential for measuring whether migration improves or harms performance.

Phase 2: Rebuild core templates first

Start with one primary template for your highest-impact funnel and recreate only essential elements. Avoid carrying over low-performing sections that survived due to habit rather than results.

At this point, maintain old and new versions in parallel for a short validation window. Parallel validation lets teams verify functional parity before routing full traffic.

Phase 3: Migrate tracking and QA gates

Tracking migration should be treated as a separate workstream, not a final checklist item. Recreate event definitions intentionally and test conversion paths end to end.

A practical rule is to sign off on tracking before scaling traffic to migrated pages. Clean metrics protect decision quality and prevent false optimization conclusions during the first weeks post-migration.

Phase 4: Roll traffic gradually and review quality

Move traffic in controlled increments rather than all at once. Start with one source segment, verify conversion quality and lead behavior, then expand to additional channels.

This staged rollout reduces downside risk and helps isolate issues quickly. If quality drops, teams can diagnose the exact handoff point instead of troubleshooting an entire system under pressure.

Phase 5: Consolidate and deprecate old assets

After migrated pages stabilize, archive weak or duplicate legacy assets. Keeping outdated variants active creates reporting noise and confuses future optimization cycles.

Deprecation should include redirect checks, analytics mapping updates, and documentation refresh. A clean operating environment is one of the strongest predictors of sustainable execution speed.

Weekly Operating Review Template for Startup Website Teams

High-performing teams treat website work as a recurring operating review, not a background task. A 30 to 45 minute weekly review is usually enough to maintain momentum when agenda structure is consistent.

Start with objective metrics, then move to decisions. Teams that debate copy preference before reviewing data often create random cycles and weak learning.

Step 1: Snapshot of pipeline-relevant metrics

Review conversion rate, qualified lead share, source-level performance, and time-to-publish for recent updates. Track trend direction instead of reacting to single-day spikes.

If one metric changes sharply, validate data integrity first. Instrumentation errors are common and can distort weekly decisions if not checked early.

Step 2: Experiment review and decision log

For each active test, record hypothesis, change applied, decision metric, and observed result. End each test with a keep, refine, or revert decision and assign follow-up ownership.

A disciplined decision log prevents repeated experiments with slightly different wording. Over time, this record becomes the team’s most valuable execution asset.
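A decision log can live in a spreadsheet, but a fixed record shape helps keep entries comparable. The sketch below mirrors the fields named in this step. The class name, field names, and sample entry are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class Decision(Enum):
    KEEP = "keep"
    REFINE = "refine"
    REVERT = "revert"


@dataclass
class ExperimentLogEntry:
    hypothesis: str
    change_applied: str
    decision_metric: str
    observed_result: str
    decision: Decision
    follow_up_owner: str

    def to_json(self) -> str:
        """Serialize the entry, storing the decision as its plain string value."""
        row = asdict(self)
        row["decision"] = self.decision.value
        return json.dumps(row)


# Hypothetical entry for illustration only.
entry = ExperimentLogEntry(
    hypothesis="A role-specific headline improves qualified lead share",
    change_applied="Hero headline rewritten for operations managers",
    decision_metric="qualified lead share",
    observed_result="Improved over baseline during the test week",
    decision=Decision.KEEP,
    follow_up_owner="copy owner",
)
print(entry.to_json())
```

Restricting `decision` to three values forces every test to end with an explicit outcome, which is what makes the log searchable when a similar idea comes up later.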

Step 3: Content and proof freshness check

Proof modules and FAQs degrade if they are not refreshed regularly. Use this step to identify sections where claims are still true but examples are outdated.

Refreshing proof does not require full rewrites. Small updates with relevant context are often enough to improve trust and keep pages aligned with current product reality.

Step 4: UX and accessibility quality gate

Review mobile rendering, CTA visibility, keyboard flow, and form error behavior on priority pages. These checks should be fast and consistent so quality regressions are caught before campaign impact grows.

If a regression is found, prioritize fix speed over adding new features that week. Reliability work protects acquisition efficiency more than feature expansion during unstable periods.

Step 5: Next-week execution plan

End every review with one primary experiment and one reliability task. This keeps workloads realistic and protects focus for lean teams.

Define clear owners, deadlines, and success criteria before closing the meeting. Ambiguous ownership is one of the most common causes of missed updates and repeated launch issues.

Comparison Matrix: Matching Platform Strengths to Startup Needs

Many teams ask for one universal ranking of free builders, but rankings are less useful than fit-based matching. A practical matrix compares platform strengths to the specific jobs your website must do in the next quarter.

For example, a founder validating one offer should prioritize speed, clarity, and minimal setup. A multi-channel team should prioritize variant management, consistent QA, and attribution hygiene. A sales-heavy B2B team should prioritize proof depth and qualification workflow control.

This fit-based approach reduces buyer regret because selection criteria are tied to execution demands rather than category popularity. It also makes future upgrades easier because migration triggers are defined from the start.

To operationalize this matrix, assign each startup need a priority score and map it against your scorecard categories. Select the platform with the strongest weighted fit for current objectives, then schedule a 60-day reevaluation to confirm assumptions against real performance.

Common Mistakes and Fast Fixes

Mistake 1: selecting by visual template quality alone

Template polish is useful, but it does not guarantee growth performance. Use a weighted scorecard tied to operating outcomes before choosing a platform.

Mistake 2: sending every channel to one generic page

Different traffic sources require different context depth. Keep one design system and create narrative variants by intent.

Mistake 3: publishing claims without evidence

Users delay decisions when proof is weak or far from the CTA. Add context-rich evidence near decision points and update it regularly.

Mistake 4: forcing long first-touch forms

Early friction lowers conversion and often worsens data quality. Keep first interactions short, then qualify deeper in follow-up steps.

Mistake 5: treating accessibility as optional

Keyboard flow and focus clarity are conversion fundamentals. Add accessibility checks to every release gate.

Mistake 6: running tests without measurement discipline

Unclear metrics produce false wins and weak decisions. Standardize event naming, test scope, and result logging before scaling experiments.

Mistake 7: delaying governance until the team is larger

Lack of ownership causes inconsistent updates and launch errors. Define responsibilities early, even with a small team.

Mistake 8: scaling traffic before lead quality stabilizes

High click volume can hide low-intent pipeline quality. Gate budget expansion behind stable conversion and downstream quality signals.

FAQ: Best Free Website Platforms for Startups

Are free website platforms good enough for real startup growth?

Yes, for many teams at validation and early traction stages. They work well when paired with clear process standards for messaging, testing, and QA.

When should a startup move from free to paid plans?

Upgrade when recurring bottlenecks slow execution or reduce quality, such as restricted workflows, fragile analytics, or collaboration limits.

What is the most important selection criterion for founders?

Editing and publishing speed for non-technical users, combined with reliable conversion and tracking controls.

How many page variants should we run initially?

Start with one baseline and one source-specific variant. Expand only after your measurement system is stable.

Should early pages include pricing details?

Include pricing orientation even if full tables are not needed. Users need enough context to evaluate fit with confidence.

How often should we run experiments?

One major experiment per week is a practical cadence for most startup teams. It keeps attribution cleaner and decisions easier.

Can accessibility work really improve conversions?

Yes. Better keyboard flow, clearer labels, and visible focus states reduce friction for all users, not only those with specific accessibility needs.

Which metric should we prioritize above raw conversion rate?

Lead quality by source. High conversion with low-fit leads can reduce pipeline efficiency and distort optimization decisions.

What does good governance look like in a small team?

Clear ownership for messaging, proof updates, tracking checks, and release approval. Keep it simple but explicit.

What is the biggest scaling mistake startup teams make?

Increasing traffic before message consistency and downstream quality signals are stable across at least two review cycles.

Final Takeaway

The best free website platform for startups is the one that supports fast publishing, reliable testing, and sustainable quality control. Tool choice should be evaluated by operating outcomes, not feature marketing.

With Unicorn Platform, teams can build a repeatable growth system by combining reusable page structure, clear ownership, and weekly evidence-based updates. When process quality is high, startup websites stop being static marketing pages and become dependable revenue infrastructure.

For teams that need a broader landscape view before locking workflow decisions, this comparison of free startup website builders provides useful context.