Table of Contents

- Core Decision Sequence for Stronger Lead Quality

- Common Failure Modes and Fixes

- 30-Day Execution Plan

- FAQ

Most teams can improve form submissions. Fewer teams can improve submission quality at the same time. That difference is why some acquisition programs grow pipeline reliably while others produce activity without revenue confidence.

A landing page is not just a design asset. It is a routing system that should connect the right visitor to the right next step with clear expectations. If that routing logic is weak, even strong traffic sources produce low-fit leads, slower follow-up cycles, and lower close potential.

This is where many programs break. Teams run campaigns, optimize headlines, and increase click-through rates, but they do not clarify offer fit, qualification logic, and post-submit continuity. The page converts, yet downstream teams still describe leads as unprepared or misaligned.

Strong acquisition systems treat conversion as an end-to-end decision flow. They align relevance, trust, qualification, and handoff quality in one coherent structure. When these components are built deliberately, conversion quality improves without relying on aggressive friction.

This guide provides that structure for 2026. It is designed for operators who need a repeatable model for planning, building, and improving lead capture pages in Unicorn Platform under real campaign pressure.

Key Takeaways

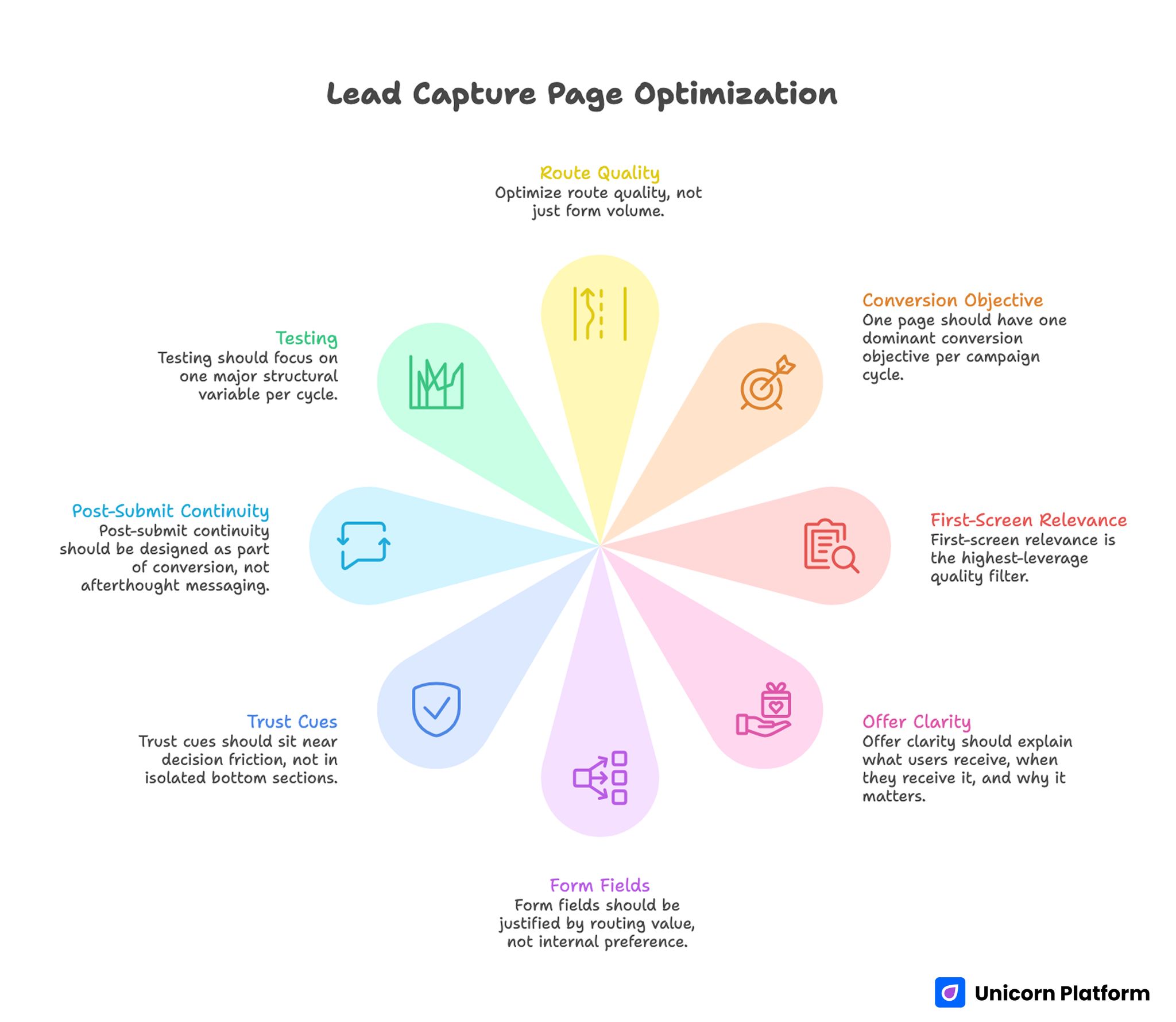

Lead Capture Page Optimization

- A high-performing lead capture page should optimize route quality, not only form volume.

- One page should have one dominant conversion objective per campaign cycle.

- First-screen relevance is the highest-leverage quality filter.

- Offer clarity should explain what users receive, when they receive it, and why it matters.

- Form fields should be justified by routing value, not internal preference.

- Trust cues should sit near decision friction, not in isolated bottom sections.

- Post-submit continuity should be designed as part of conversion, not afterthought messaging.

- Testing should focus on one major structural variable per cycle.

Why Lead Programs Underperform Even With Solid Traffic

Most underperformance starts with fit ambiguity. Visitors arrive and cannot determine quickly whether the offer is for their role, problem stage, or team context. Some low-intent users still submit, while high-intent users postpone action because they need clearer alignment.

The next issue is value blur. Many pages explain features and capabilities but never clarify the specific decision support or business outcome the visitor should expect after conversion. Without concrete value, the form appears risky because the next step remains uncertain.

Another frequent problem is trust timing. Evidence and process transparency often appear after the first major call to action. This sequence asks for commitment before uncertainty is reduced, which increases avoidable drop-off among qualified prospects.

Finally, teams optimize surface metrics while ignoring operational quality. Submission counts rise, but acceptance rates, meeting readiness, and follow-through quality stay flat or decline. Without quality-aware metrics, optimization moves in the wrong direction while dashboards still look positive.

Industry research confirms how common this gap is. According to marketing data compiled by HubSpot, many organizations report that generating high-quality leads is their biggest challenge, even when traffic and form submissions continue to grow.

What a Lead Capture Page Must Do in Practice

A useful page should do five jobs in a clear order. It should identify fit, define value, reduce risk, collect routing signal, and preserve momentum after submission. If one of these jobs is weak, the system becomes less reliable even when conversion rate appears healthy.

This is why page clarity should be treated as operational infrastructure, not campaign decoration. The page influences sales efficiency, handoff speed, and forecasting confidence because it determines what kind of intent enters the pipeline.

In practical terms, your page should answer the following before users submit:

- Is this relevant to my current problem and role?

- What exactly do I receive after I convert?

- How much effort is expected from me?

- What happens next and how quickly?

- Why should I trust this process?

When those answers are easy to find, lead quality usually improves faster than teams expect.

Core Decision Sequence for Stronger Lead Quality

Reliable conversion pages follow a predictable sequence. They do not start with form pressure. They start with orientation and confidence.

Use this five-stage sequence:

- Fit: clarify audience and use-case boundaries.

- Value: state concrete outcome and expected timeline.

- Confidence: provide relevant proof and process visibility.

- Qualification: collect minimum routing inputs for next-step quality.

- Continuity: reinforce what happens after submission.

This order mirrors how business buyers reduce risk under time constraints. Breaking the order forces users to infer missing information, which reduces confidence and increases low-quality submissions.

Studies of modern B2B decision journeys show that business buyers often do extensive independent research before engaging with vendors, which makes clear value framing and trust signals essential early in the experience.

If your team is standardizing section order across multiple funnels, this guide on high-converting landing page structure is useful for documenting section jobs and preserving consistency.

Page Blueprint: Section Roles That Prevent Conversion Drift

A conversion page becomes easier to optimize when each section has one explicit responsibility. Multi-purpose sections create ambiguity and make test results hard to interpret.

First screen: fit plus outcome

Your opening block should identify audience context and practical value in one glance. Avoid broad claims that could apply to anyone, because broad claims attract broad intent and weak routing quality.

A strong first-screen set includes a role-aware headline, a concrete outcome, and one clear primary action. Supporting copy should reduce interpretation burden, not introduce a second narrative.

Value block: define what users get next

This section should describe deliverable clarity. Explain whether the user receives an audit, benchmark, demo path, planning session, or implementation brief. Unclear deliverables create uncertainty that lowers completion quality.

When possible, include expected turnaround ranges. Timeline clarity helps users decide whether this step fits their urgency.

Trust block: resolve practical risk

Trust for acquisition pages should be scenario-relevant. Use proof that answers likely doubts for this audience: outcome credibility, process reliability, and team competence in similar contexts.

Generic praise is weak at this stage. Contextual proof with clear constraints typically performs better because it feels operational rather than promotional.

Qualification block: capture routing signal

Form logic should collect enough data to route well and follow up intelligently. It should not capture every detail your internal teams might eventually want.

For many campaigns, first-touch routing can be built from a small set of fields plus source context. Additional details can be collected later through confirmation flows or scheduled conversations.

Continuity block: reinforce next-step confidence

Conversion quality depends on what happens after submit. A clear confirmation state with timing and expectation language can improve downstream responsiveness significantly.

Users should leave the page knowing exactly what to expect and how to prepare. Ambiguous handoff copy is one of the fastest ways to lose intent after successful conversion.

Offer Architecture by Funnel Readiness

Not all visitors should receive the same action path. Some need low-friction diagnostics, some need tactical evaluation support, and some are ready for deeper conversations.

Use an offer ladder with one dominant route per campaign objective. Keep secondary routes visible but subordinate so users are not forced to choose between equal-priority actions.

A practical readiness ladder:

- Early readiness: lightweight diagnostic or benchmark output.

- Mid readiness: structured review call with clear agenda.

- Late readiness: scoped implementation planning conversation.

This structure improves routing quality because action options match decision readiness instead of forcing one uniform path.

Form Strategy: Minimum Fields, Maximum Routing Value

Form quality is rarely improved by adding many fields upfront. It improves when each field maps to a real post-submit decision.

Before adding any field, ask three questions. Does this field change routing? Does it change follow-up quality? Can it be collected later without harming outcomes? If a field changes neither routing nor follow-up quality, or if it can wait until later without harming outcomes, it likely adds first-touch friction without operational value.

For many B2B acquisition pages, first-step forms can work well with name, work email, and one high-signal qualifier such as role scope or timeline priority. Additional context is better collected after trust is confirmed.
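The three-question field test can be sketched as a small filter. This is a minimal Python sketch, not a Unicorn Platform feature: it assumes each candidate field is tagged by hand with three hypothetical flags (`changes_routing`, `changes_follow_up`, `can_defer`), and the example field names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class CandidateField:
    """A field proposed for the first-touch form (hypothetical model)."""
    name: str
    changes_routing: bool    # Does this field change which route the lead takes?
    changes_follow_up: bool  # Does it change how the first follow-up is prepared?
    can_defer: bool          # Can it be collected later without harming outcomes?


def keep_on_first_touch(field: CandidateField) -> bool:
    """Keep a field only if it carries routing or follow-up value and cannot wait."""
    has_value = field.changes_routing or field.changes_follow_up
    return has_value and not field.can_defer


def first_touch_fields(candidates: list[CandidateField]) -> list[str]:
    """Return the names of fields that pass the test, in their original order."""
    return [f.name for f in candidates if keep_on_first_touch(f)]


candidates = [
    CandidateField("name", changes_routing=False, changes_follow_up=True, can_defer=False),
    CandidateField("work_email", changes_routing=True, changes_follow_up=True, can_defer=False),
    CandidateField("timeline", changes_routing=True, changes_follow_up=True, can_defer=False),
    CandidateField("company_size", changes_routing=False, changes_follow_up=False, can_defer=True),
    CandidateField("budget_detail", changes_routing=False, changes_follow_up=True, can_defer=True),
]

print(first_touch_fields(candidates))
```

With these illustrative flags, the filter keeps `name`, `work_email`, and `timeline` and leaves the rest for progressive qualification. The value is less in the code than in forcing every field to carry an explicit justification.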

Microcopy and error-state standards

Field labels should be explicit, concise, and role-comprehensible. Helper text should explain purpose, not restate labels. Error messages should be actionable and immediate so users can recover without frustration.

Small wording improvements here often increase completion quality more than cosmetic form redesigns.

Progressive qualification workflow

Progressive qualification allows teams to keep initial friction low while still capturing useful depth. After conversion, follow-up can gather richer context through guided questions tied to explicit value.

This sequence usually produces better data quality because users provide deeper information after they understand what they receive in return.

Trust Design That Supports Commitment

Trust sections should be mapped to actual hesitation points in the flow. If users hesitate before form submission, place confidence cues near the action area, not only in deeper page sections.

Practical trust signals include contextual proof, process transparency, response standards, and clear ownership language for next steps. The most effective trust elements are usually specific and operational.

When teams need to align trust placement with broader business goals, this guide on aligning optimization with business outcomes helps prioritize confidence signals that actually affect pipeline quality.

CTA Hierarchy and Route Clarity

A page with many equal-priority actions often underperforms because visitors cannot infer preferred next steps. Clear hierarchy reduces decision load and improves routing consistency.

Use one primary CTA linked to the campaign objective. Add one secondary CTA only when it maps to a distinct readiness state. Keep tertiary routes visually subordinate and context-dependent.

CTA copy should describe outcome, not internal process. Visitors respond better when actions explain what they will get, not what your team will do internally.

Channel-Specific Variants Without Structural Chaos

Different channels bring different user expectations. Search users typically need direct relevance confirmation. Social users may require faster trust reinforcement. Partner referrals may require less education and clearer scheduling options.

The best operational model is one canonical template with controlled channel variants. Change headline angle, proof order, or CTA framing while keeping structural sequence stable. This preserves comparability in testing and prevents maintenance sprawl.

Teams that modify full structure per channel usually lose learning quality because too many variables change at once.

Mobile and Performance Standards

A large share of lead capture starts on mobile devices, including high-consideration journeys. Mobile friction can reduce quality before teams notice in aggregate reports.

Run real-device QA for readability, field interactions, keyboard behavior, submit reliability, and confirmation continuity. Desktop previews are insufficient for release decisions on high-impact pages.

Performance should prioritize meaning-first delivery. Load critical copy and action cues early, then progressively load non-critical visual assets. Fast visual rendering is less valuable than fast decision clarity.

Sales Handoff Design: Where Quality Is Realized

Marketing conversion quality is not complete until handoff is reliable. If submissions enter generic queues without context transfer, even qualified intent can decay before first response.

Define priority logic, ownership, and response windows per route type. First responders should see context signals from the form and source so follow-up can start with relevance.

Handoff consistency improves meeting quality and shortens qualification cycles. It also gives marketing cleaner feedback loops for page optimization.

Handoff checklist

- Priority tier logic based on qualification signal.

- Response time expectations by tier.

- Context summary visible in the first-touch workflow.

- Branch path for non-fit or low-readiness submissions.

A short, repeatable handoff checklist usually creates more improvement than adding new tooling without process alignment.
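The priority-tier, response-window, and context items in the checklist can be sketched as one routing function. A minimal Python sketch, assuming two hypothetical qualifiers captured on the form (`role_fit` and `timeline`) and placeholder response windows; the tier names and hour values are illustrative and should come from your own handoff agreement.

```python
from dataclasses import dataclass

# Hypothetical response windows per tier, in hours. Replace with your own standards.
RESPONSE_WINDOW_HOURS = {"priority": 4, "standard": 24, "nurture": 72}


@dataclass
class Submission:
    email: str
    source: str      # e.g. "paid_search", "partner", "organic"
    role_fit: bool   # qualifier: does the stated role match the target audience?
    timeline: str    # qualifier: "now", "this_quarter", or "later"


@dataclass
class Handoff:
    tier: str
    response_window_hours: int
    context_summary: str  # shown to the first responder in the first-touch workflow


def route(sub: Submission) -> Handoff:
    """Assign a priority tier from the form's qualification signal and attach context."""
    if not sub.role_fit:
        tier = "nurture"   # branch path for non-fit submissions
    elif sub.timeline == "now":
        tier = "priority"
    elif sub.timeline == "this_quarter":
        tier = "standard"
    else:
        tier = "nurture"   # fit, but low readiness
    summary = f"source={sub.source}; role_fit={sub.role_fit}; timeline={sub.timeline}"
    return Handoff(tier, RESPONSE_WINDOW_HOURS[tier], summary)


lead = Submission("ana@example.com", "partner", role_fit=True, timeline="now")
print(route(lead))
```

Non-fit and low-readiness submissions share one `nurture` branch here for brevity; many teams would split them into separate paths with different follow-up content.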

Measurement Model for Pipeline-Oriented Optimization

Submission counts are leading indicators, not final outcomes. Teams need a balanced metric system that covers volume, quality, and progression.

Track this minimum stack:

- Conversion rate by source and device.

- Qualified submission rate by campaign segment.

- Sales acceptance rate for first-touch leads.

- Meeting progression quality or opportunity entry.

- Time-to-first-response consistency.

- Post-submit engagement behavior.

Reviewing these metrics together prevents false wins where volume rises but fit quality declines.

Primary and guardrail metric pairing

Every test should have one primary success metric and one guardrail metric. For example, the primary metric might be qualified submissions, with sales acceptance rate as the guardrail that must stay stable. This pairing prevents local optimization that harms downstream conversion stages.
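The primary-plus-guardrail rule can be written as a keep/revert check. A minimal Python sketch, assuming both metrics are rates between 0 and 1 and a hypothetical guardrail tolerance of two percentage points; it deliberately ignores sample size and statistical significance, which a real decision should also account for.

```python
def decide(
    baseline: dict[str, float],
    variant: dict[str, float],
    primary: str,
    guardrail: str,
    max_guardrail_drop: float = 0.02,
) -> str:
    """Return "keep" if the primary metric improves and the guardrail holds, else "revert".

    max_guardrail_drop is a hypothetical tolerance in absolute rate points
    (0.02 = two percentage points). No significance testing is done here.
    """
    primary_gain = variant[primary] - baseline[primary]
    guardrail_drop = baseline[guardrail] - variant[guardrail]
    if primary_gain > 0 and guardrail_drop <= max_guardrail_drop:
        return "keep"
    return "revert"


# Illustrative numbers only.
baseline = {"qualified_submission_rate": 0.040, "sales_acceptance_rate": 0.55}
variant_a = {"qualified_submission_rate": 0.046, "sales_acceptance_rate": 0.48}
variant_b = {"qualified_submission_rate": 0.046, "sales_acceptance_rate": 0.54}

for label, candidate in [("A", variant_a), ("B", variant_b)]:
    print(label, decide(baseline, candidate, "qualified_submission_rate", "sales_acceptance_rate"))
```

Variant A raises qualified submissions but drops acceptance by seven points, so it is reverted; variant B passes both checks and is kept.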

Experiment Program: One Major Variable Per Cycle

Fast testing is useful only when outcomes are interpretable. Many teams reduce learning speed by changing headline, form, proof, and CTA hierarchy in the same release.

Use controlled cycles with one major variable and predefined rollback rules. Keep release notes concise and comparable so decisions are based on evidence, not memory.

High-value test areas:

- First-screen fit framing.

- Offer wording and deliverable clarity.

- Form length and field order.

- Trust-block placement near action zones.

- Confirmation copy and immediate next-step guidance.

Document every cycle with hypothesis, change, expected effect, observed effect, and next action. This builds reusable learning and reduces repeated mistakes.
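The five-part record can be kept as a simple typed log. A minimal Python sketch of a hypothetical schema: the fields mirror the list above, plus an assumed `cycle` id and the `variable` changed. It rejects incomplete entries and a second entry for the same cycle (one simple way to enforce the one-major-variable rule), then writes CSV that can live in a shared log.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass(frozen=True)
class CycleRecord:
    """One test cycle in the shared experiment log (hypothetical schema)."""
    cycle: str            # e.g. "2026-W07"
    variable: str         # the single major variable changed in this cycle
    hypothesis: str
    change: str
    expected_effect: str
    observed_effect: str
    next_action: str      # e.g. "keep", "revert", "iterate"


def check_log(records: list[CycleRecord]) -> None:
    """Raise ValueError if a record is incomplete or a cycle logs more than one variable."""
    seen_cycles = set()
    for record in records:
        empty = [name for name, value in asdict(record).items() if not value.strip()]
        if empty:
            raise ValueError(f"cycle {record.cycle}: missing {empty}")
        if record.cycle in seen_cycles:
            raise ValueError(f"cycle {record.cycle}: more than one major variable logged")
        seen_cycles.add(record.cycle)


def to_csv(records: list[CycleRecord]) -> str:
    """Validate records, then serialize them with one consistent header."""
    check_log(records)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(CycleRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


log = [
    CycleRecord(
        cycle="2026-W07",
        variable="first-screen fit framing",
        hypothesis="Role-specific headline filters out low-fit visitors",
        change="Headline names the target role and outcome",
        expected_effect="Acceptance rate up; submissions roughly flat",
        observed_effect="Acceptance up; submissions down slightly",
        next_action="keep",
    ),
]
print(to_csv(log))
```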

Lead-Generation Landing Pages by Offer Type

Different offers require different page emphasis. Teams often copy one template across every campaign and then wonder why quality varies by route type. The issue is not always traffic quality. It is often a mismatch between offer complexity and section depth.

A useful way to prevent mismatch is to define page models by conversion intent. Keep the same structural spine, but change depth and sequencing where needed. This keeps operations consistent while improving relevance for each offer type.

Diagnostic or audit offer pages

Diagnostic offers usually attract visitors who are aware of a problem but uncertain about the right next step. These users need clear scope language and explicit output expectations before submitting.

Your page should explain what the diagnostic covers, what it excludes, and what the recipient will receive. If the deliverable is a short scorecard, say so. If it includes prioritized recommendations, define the format and expected turnaround.

These pages perform best when trust cues highlight analytical rigor and decision usefulness, not brand volume. Visitors want to know whether the diagnostic will help them choose an action path, not whether your company is generally popular.

Demo-request pages

Demo pages work best when they frame the session outcome clearly. Generic "book a demo" messaging often underperforms because users cannot distinguish between a product tour and a relevant evaluation conversation.

High-quality demo pages clarify use-case fit, expected agenda, and likely post-demo path. This reduces no-show risk and improves readiness on both sides of the conversation.

Form strategy for demo pages should balance qualification with ease. Ask for inputs that change demo relevance or routing quality, then move deeper discovery into confirmation or pre-call workflows.

Content-download pages

Guide or report download pages can produce high volume with mixed quality if value framing is too broad. Visitors should know what practical decision support the content offers before they submit.

Strong pages describe reader fit, content depth, and implementation value. Weak pages rely on vague "ultimate guide" language that attracts curiosity without commitment.

Post-submit continuity matters here as well. If download confirmation does not align with page promise, users disengage quickly and nurturing quality drops.

Webinar or event-registration pages

Event-driven capture requires faster clarity around audience fit, timing, and expected outcomes. Visitors need enough confidence to commit schedule time, not only enough interest to click.

These pages should prioritize speaker credibility, session scope, and practical takeaways near the top. Registration friction should stay low, while expectation-setting should remain precise.

Follow-up quality is critical for event pages. Confirmation messaging should include calendar guidance, attendance expectations, and reminder cadence so registration quality translates into attendance quality.

Free-tool or calculator pages

Tool-led offers often attract intent-rich users, but only when access clarity is immediate. Visitors should know what problem the tool solves, what inputs are required, and what output is produced.

Page structure should move quickly from relevance to utility demonstration, then to access CTA. Long persuasive copy can reduce momentum when users are primarily seeking fast practical value.

When tool pages include lead capture gates, explain why gating exists and what users get immediately after submission. Transparent gating preserves trust better than hidden friction.

Pre-Launch QA Checklist for Lead-Generation Landing Pages

Teams with strong conversion quality usually do one thing consistently: they run the same short QA gates before every major release. Without that discipline, fast iteration creates silent regressions in form behavior, trust context, and tracking quality.

This checklist should be practical enough to run under pressure. It should validate the highest-risk points without becoming a heavy process that teams skip when deadlines are tight.

Message and intent checks

Confirm that first-screen copy matches campaign promise and audience stage. If ad language emphasizes operational outcomes, the opening block should do the same. Mismatch here can reduce quality before users interact with the form.

Validate that one primary conversion objective is visually dominant. Secondary actions can exist, but hierarchy should remain obvious so users are not forced into interpretation.

Form and interaction checks

Test each required field for routing value. Remove fields that do not influence next-step quality. This one decision alone often improves completion reliability and downstream data usefulness.

Check helper text and error states on real devices. Ambiguous validation messaging creates drop-off patterns that are often mistaken for traffic-quality issues.

Trust and objection checks

Ensure confidence cues are positioned near high-friction decisions. If trust elements are buried, qualified users may abandon before reaching them.

Review objection handling for practical clarity. Pages should answer likely concerns about effort, timeline, and process without forcing users into large FAQ scans.

Performance and mobile checks

Validate the first meaningful view on mobile under realistic network conditions. Critical copy and CTA elements should remain visible and readable without excessive scrolling or zooming.

Confirm that submit flow, confirmation state, and follow-up triggers work consistently across devices. Conversion quality degrades quickly when technical reliability is inconsistent during live traffic spikes.

Measurement and handoff checks

Verify analytics events at key points: form start, field validation errors, submit success, and confirmation view. Event gaps can make optimization decisions unreliable for entire cycles.
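A quick way to catch event gaps is to compare the checkpoints per session. A minimal Python sketch, assuming events are exported as simple records with a session id and an event name; the event names (`form_start`, `field_error`, `submit_success`, `confirmation_view`) are hypothetical and should match your own tracking plan.

```python
# Hypothetical event names; align them with your own tracking plan.
FUNNEL = ["form_start", "submit_success", "confirmation_view"]
ERROR_EVENT = "field_error"


def funnel_report(events: list[dict[str, str]]) -> dict:
    """Count unique sessions per checkpoint and flag likely tracking or flow gaps.

    Each event looks like {"session": "s1", "name": "form_start"}. Counting unique
    sessions keeps repeated events (e.g. several field errors) from inflating a step.
    """
    sessions: dict[str, set[str]] = {name: set() for name in FUNNEL + [ERROR_EVENT]}
    for event in events:
        if event["name"] in sessions:
            sessions[event["name"]].add(event["session"])
    counts = {name: len(ids) for name, ids in sessions.items()}

    gaps = []
    # A session should not reach a later step without recording the earlier one.
    for earlier, later in zip(FUNNEL, FUNNEL[1:]):
        orphans = sessions[later] - sessions[earlier]
        if orphans:
            gaps.append(f"{later} without {earlier}: {sorted(orphans)}")
    # A successful submit should always be followed by a confirmation view.
    unconfirmed = sessions["submit_success"] - sessions["confirmation_view"]
    if unconfirmed:
        gaps.append(f"submit_success without confirmation_view: {sorted(unconfirmed)}")

    starters = sessions["form_start"]
    # Share of form starters who hit at least one validation error.
    error_share = len(sessions[ERROR_EVENT] & starters) / len(starters) if starters else 0.0
    return {"counts": counts, "field_error_share": error_share, "gaps": gaps}


events = [
    {"session": "s1", "name": "form_start"},
    {"session": "s1", "name": "field_error"},
    {"session": "s1", "name": "submit_success"},
    {"session": "s1", "name": "confirmation_view"},
    {"session": "s2", "name": "form_start"},         # abandoned form
    {"session": "s3", "name": "submit_success"},     # form_start never fired
    {"session": "s3", "name": "confirmation_view"},
    {"session": "s4", "name": "form_start"},
    {"session": "s4", "name": "submit_success"},     # confirmation never shown
]
print(funnel_report(events)["gaps"])
```

In this sample, the report flags session `s3` (a likely missing `form_start` tag) and session `s4` (a submit that never reached the confirmation state). Left undetected, either gap could skew a whole test cycle.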

Confirm routing logic and ownership rules in handoff workflows. Submissions should land with context, priority tags, and expected response-time standards already attached.

A lightweight checklist like this protects quality without reducing iteration speed. Over time, it also creates cleaner comparative data because release quality is more stable across campaigns.

Scenario Playbooks for Common Pipeline Patterns

Campaign performance often looks confusing until teams map behavior to the right scenario. These playbooks help operators diagnose and respond without defaulting to broad redesigns.

Scenario 1: Strong form volume, weak sales acceptance

This pattern usually signals fit ambiguity. The page attracts attention but does not filter sufficiently for readiness or relevance.

A practical response is to tighten role and use-case framing in the first screen, then clarify offer output near the CTA. Keep form friction stable in the first cycle so the impact of message changes is measurable.

Scenario 2: Healthy click-through, poor form completion

When clicks are high and completions are low, friction or uncertainty near the form is often the issue. Users are willing to evaluate but not confident enough to submit.

Fix by reducing non-essential fields, improving helper text, and moving trust cues closer to action modules. Then validate mobile interactions, since completion friction is usually worse on small screens.

Scenario 3: Good submissions, weak first-response engagement

If users submit but do not engage with follow-up, page promises and the handoff experience are likely misaligned. Users may not understand what happens next or why the next step is useful.

Fix by rewriting confirmation copy and first follow-up sequence for explicit expectation clarity. Include response timing and one practical action in the first communication step.

Scenario 4: Paid channels outperform organic in quality

This often means organic pages are too broad while paid pages are message-matched. Organic traffic may be entering with mixed intent that your page does not segment clearly.

Fix by introducing clearer fit boundaries and route clarity for organic arrivals. Use the same core template but adjust first-screen framing to reflect likely organic intent clusters.

Scenario 5: Quality drops after frequent iteration cycles

Repeated edits can create structural drift when contributors optimize locally. Over time, section logic degrades and test signals become noisy.

Fix by reapplying the canonical section order, locking core modules, and requiring a passing release checklist before scaling. Structural stability improves learning speed more than constant redesign.

90-Day Scale Plan for Conversion and Quality

A 30-day cycle improves immediate bottlenecks. A 90-day plan is needed to compound results and reduce operational variance across channels.

Month 1: Stabilize core architecture

Focus on first-screen fit, offer clarity, form reliability, and confirmation continuity. Lock one canonical template and remove low-value variation points.

By the end of month one, your team should have stable baseline metrics and explicit ownership for copy, workflow, and QA decisions.

Month 2: Expand controlled source variants

Build source-specific variants from the same structural template. Change only high-impact messaging surfaces and track performance by segment with primary-plus-guardrail logic.

At this stage, documentation quality matters as much as test results. Teams should record change rationale and keep/revert decisions in one shared log.

Month 3: Operationalize governance and handoff quality

Turn successful patterns into reusable standards for future campaigns. Enforce release gates for message match, mobile reliability, and event integrity before scaling traffic.

Then harden sales handoff rules so submission context is consistently transferred. This final step is what converts page performance gains into pipeline reliability.

Common Failure Modes and Fixes

Failure Mode 1: Broad messaging attracts broad intent

Pages rely on generic value language that cannot filter for role fit. Volume may increase, but lead quality declines.

Fix by tightening audience and use-case framing in the first screen. Clarify non-fit boundaries where appropriate.

Failure Mode 2: Form-heavy qualification at first touch

Too many required fields are used to compensate for weak message clarity. Completion and mobile usability decline.

Fix by reducing first-step fields and using progressive qualification after submission.

Failure Mode 3: Trust appears too late

Proof and process clarity sit below the first major CTA. Qualified users hesitate before they see risk-reducing information.

Fix by moving relevant trust cues closer to the primary decision point.

Failure Mode 4: No clear post-submit expectation

Users submit successfully but do not know response timing or next-step format. Engagement quality drops quickly.

Fix by upgrading confirmation language and first follow-up continuity with explicit timeline context.

Failure Mode 5: Channel variants drift structurally

Each channel page evolves separately until section logic diverges and results become hard to compare.

Fix by enforcing one canonical structure and limiting channel changes to controlled message surfaces.

Failure Mode 6: Metrics track activity, not pipeline quality

Teams optimize for submission count and ignore acceptance, progression, and response quality.

Fix by implementing a quality-aware metric stack and tying test decisions to primary plus guardrail metrics.

30-Day Execution Plan

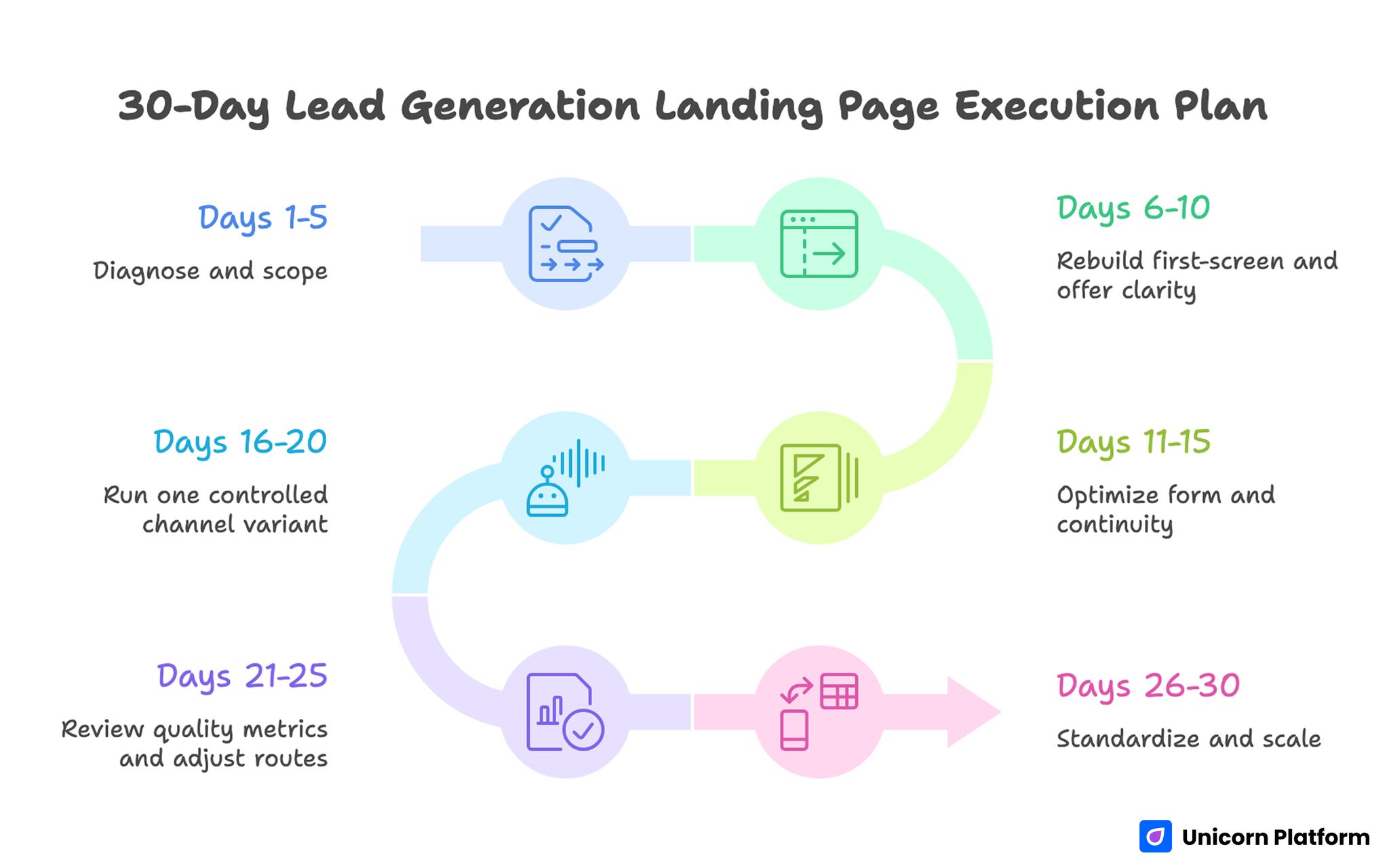

30-Day Lead Generation Landing Page Execution Plan

Days 1-5: Diagnose and scope

Audit current pages for fit clarity, offer precision, form friction, and trust placement. Define one primary metric and one guardrail metric for the next cycle.

Days 6-10: Rebuild first-screen and offer clarity

Rewrite opening sections with explicit audience and outcome language. Clarify deliverable and expected timeline in plain terms.

Days 11-15: Optimize form and continuity

Simplify first-touch fields, refine helper copy, and improve confirmation messaging. Validate full flow on real mobile devices.

Days 16-20: Run one controlled channel variant

Create one source-specific variant from the same architecture. Change only one major variable and compare against baseline.

Days 21-25: Review quality metrics and adjust routes

Evaluate submission quality, acceptance behavior, and response consistency. Promote winning blocks and retire weak variants.

Days 26-30: Standardize and scale

Update templates, QA checklists, and handoff playbooks based on evidence from the cycle. Scale only what improves both conversion and pipeline quality.

Running This System in Unicorn Platform

Unicorn Platform supports this model when teams build with reusable section modules and strict release gates. Speed is useful only when structure remains stable and decisions are measurable.

Start with one canonical lead capture template, then run controlled variants by source. Use shared checklists for copy clarity, form behavior, trust placement, and post-submit continuity before every release.

If your team is refining behavioral signal interpretation during optimization cycles, this guide on user behavior patterns for landing pages is useful for prioritizing bottlenecks that affect lead quality.

The operating principle is simple: structured clarity first, iteration speed second. When both are present, programs scale with less rework and better pipeline predictability.

FAQ: Lead-Generation Landing Pages

How many fields should a lead form include on first touch?

Use only fields that change routing or immediate follow-up quality. Most teams perform better when initial forms are short and additional context is gathered after trust is established.

Should we remove navigation from all conversion pages?

In most dedicated acquisition campaigns, reducing external exits improves focus. If navigation is necessary for trust context, keep it minimal and clearly secondary to the primary action.

What makes lead-generation landing pages perform better for B2B teams?

The strongest B2B pages combine precise fit framing, practical trust signals, and clear next-step expectations. They reduce uncertainty in business terms rather than relying on generic persuasion patterns.

How do we improve lead quality without reducing volume too much?

Tighten first-screen fit language before increasing form friction. Better relevance filtering often improves quality while preserving healthy conversion rates.

What is the best way to handle low-readiness visitors?

Offer one secondary route that matches their stage, such as a benchmark or diagnostic resource. Keep that route subordinate to the primary objective so hierarchy stays clear.

Which trust signal matters most near the CTA?

The most effective signal is the one that resolves immediate hesitation for your audience. In many B2B flows, process transparency and response expectations outperform generic testimonials.

How often should we test page changes?

Weekly or biweekly cycles work well when traffic is stable. The key is testing one major variable at a time and documenting results consistently.

How do we know if our page is attracting the wrong audience?

Watch qualified submission rate, acceptance signals, and early call quality, not only form volume. High volume with weak progression is a clear misalignment indicator.

Should we create separate pages for every traffic source?

Start with one canonical architecture and controlled message variants. Build full separate pages only when channel behavior consistently requires structural differences.

What should happen immediately after submission?

Users should receive confirmation, expected timeline, and one clear next step. This immediate clarity improves follow-through and reduces uncertainty-driven drop-off.

What is the fastest high-impact change for weak performance?

Rewrite first-screen relevance and offer clarity, then validate form friction on mobile. Those two updates often produce the fastest improvement in both conversion and quality.

Final Takeaway

Strong lead-generation landing pages are built as decision systems, not isolated design artifacts. When fit, value, trust, qualification, and continuity are aligned, teams collect fewer low-intent submissions and more pipeline-ready opportunities.

With Unicorn Platform, this system can be implemented quickly without sacrificing control. The advantage is not just faster publishing. The advantage is faster learning with clearer quality outcomes.