Table of Contents

- The 2026 Decision Framework for Choosing a Builder

- Conversion Architecture That Scales Across Segments

- 30-Day Launch Plan for AI Landing Page Programs

- FAQ

AI page builders made launch speed easy. Reliable conversion quality is still hard.

Many teams can ship five new pages in a week and still miss growth goals because generation speed hides process gaps: weak message architecture, generic claims, shallow proof, unclear CTA sequencing, and inconsistent post-launch testing. The result is predictable. Traffic arrives, engagement looks acceptable, and qualified pipeline stays below target.

The practical objective in 2026 is no longer "build pages faster." The objective is to operate a repeatable page system where every release improves clarity, trust, and conversion outcomes. That requires better tool selection and better operating discipline.

This guide explains how to do both. You will get a practical framework for selecting AI landing page builders, evaluating cost models, structuring conversion-first pages, running controlled experiments, and scaling page operations in Unicorn Platform without quality drift.

If you are still setting up your base creation workflow, this implementation guide on how to create an AI landing page is a useful starting point before moving to advanced optimization.

sbb-itb-bf47c9b

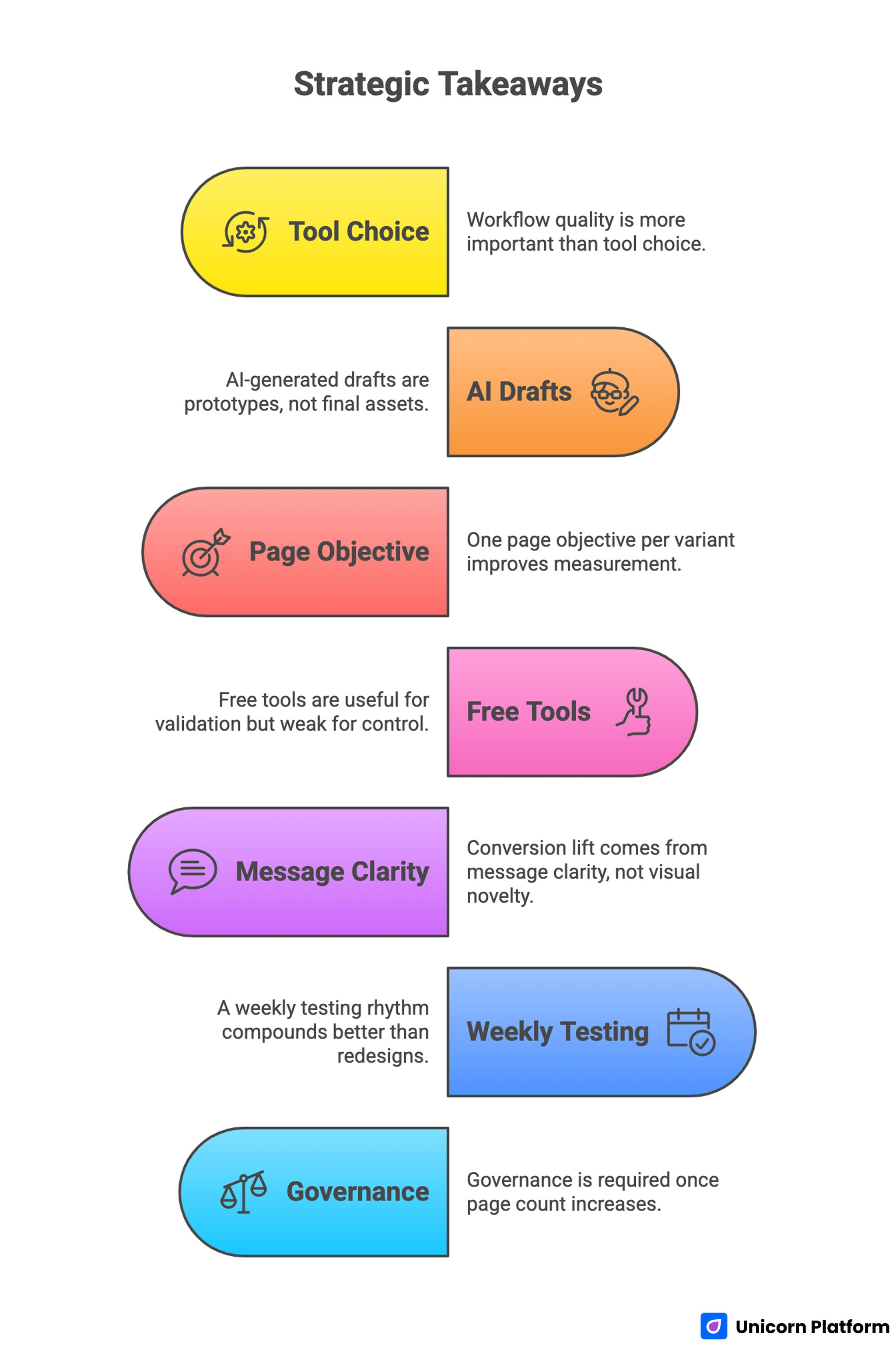

Quick Strategic Takeaways

Key Strategic Takeaways for AI Landing Page Builders

- Tool choice matters, but workflow quality matters more.

- AI-generated drafts should be treated as prototypes, not final assets.

- One page objective per variant improves measurement and decision speed.

- Free tools are useful for validation but often weak for long-term control.

- Conversion lift usually comes from message clarity, not visual novelty.

- A weekly testing rhythm compounds better than occasional redesigns.

- Governance is required once page count and contributor count increase.

Why So Many AI-Generated Pages Underperform

Underperformance usually starts with an assumption problem. Teams assume AI output quality and conversion readiness are the same thing. They are not. Output quality means readable copy and acceptable layout. Conversion readiness means the page helps a specific visitor make a specific decision with enough confidence to act.

A second issue is claim inflation. AI drafts often produce strong marketing language quickly, but those claims are rarely supported by operational detail. Visitors notice the gap. They may keep reading, but trust weakens before the CTA.

A third issue is decision overload. When pages show multiple actions with equal visual weight, users hesitate. Hesitation lowers completion rates and corrupts testing data because behavior becomes noisy.

A fourth issue appears in operations. Teams launch several variants simultaneously, change too many variables, and cannot identify which change produced which result. Without controlled experiments, iteration becomes guesswork.

The 2026 Decision Framework for Choosing a Builder

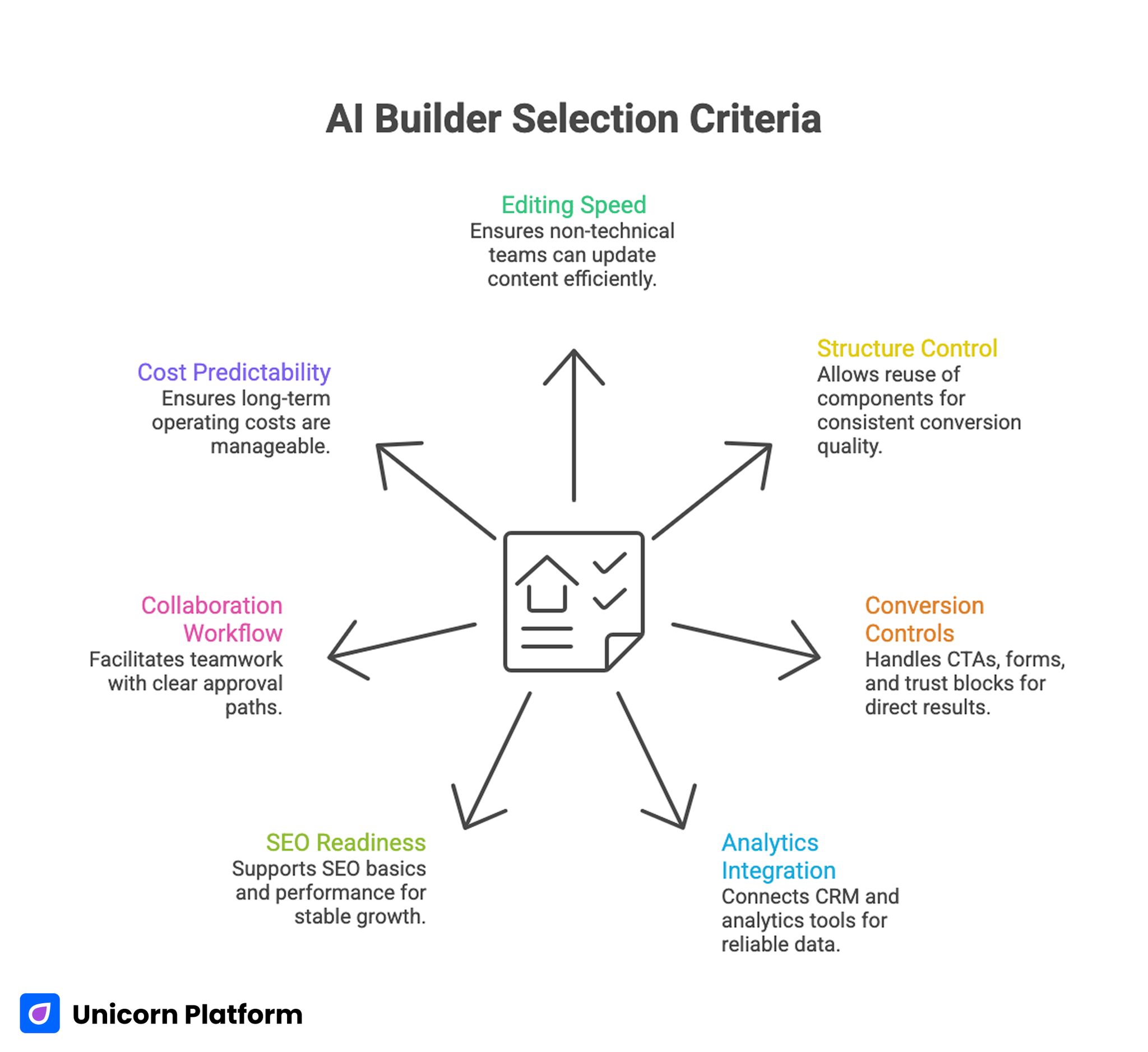

AI Builder Selection Criteria

A practical builder choice starts with a scorecard, not brand preference. The scorecard should reflect your business model, team capacity, and growth timeline.

Criterion 1: editing speed for non-technical contributors

Can product, growth, and content teams update critical sections without engineering support? If no, launch velocity will be constrained even if the platform looks powerful.

Criterion 2: structure control and component reuse

Can you reuse tested sections while adapting narrative by segment? Reusable components are one of the strongest predictors of consistent conversion quality at scale.

Criterion 3: conversion controls

Can the tool handle CTA hierarchy, form flexibility, intent-based section ordering, and trust-block placement cleanly? These features affect results more directly than decorative options.

Criterion 4: analytics and integration reliability

Can you connect CRM, analytics, attribution, and automation tools without brittle setups? Weak integration quality creates false learning and slows optimization.

Criterion 5: SEO and technical readiness

Does the platform support practical SEO basics and performance hygiene? High traffic without technical reliability produces fragile growth.

Criterion 6: collaboration workflow

Can multiple contributors work with clear approval paths and safe publishing controls? Team friction often becomes the hidden bottleneck as programs grow.

Criterion 7: 12-month cost predictability

Entry pricing is less important than long-term operating cost. A platform that looks cheap in month one can become expensive through seat expansion, integration overhead, and rework burden.

A weighted scorecard based on these criteria removes most emotional tool debates.

Builder Archetypes and When They Fit Best

Most AI landing page tools fall into a few functional archetypes. Knowing the archetype helps you choose faster and avoid mismatch.

Archetype A: prompt-first rapid generators

These platforms are strong for quick ideation and draft generation. They are useful in early-stage validation when your main goal is speed of concept testing.

Primary risk: generic output and weaker control of conversion logic unless manual editing standards are strict.

Archetype B: design-flexible builders with AI assistance

These tools combine generation support with deeper layout control. They are useful when brand standards and conversion architecture both matter.

Primary risk: teams can over-design pages and delay learning if scope discipline is weak.

Archetype C: campaign-optimization platforms

These builders focus on testing, scaling, and campaign operations. They are useful when acquisition volume is already meaningful and incremental conversion gains justify deeper optimization workflows.

Primary risk: complexity can exceed what lean teams can maintain without clear ownership.

Archetype D: CMS-ecosystem builders

These tools integrate into broader site stacks, often with strong plugin ecosystems. They are useful when existing infrastructure and content operations require compatibility.

Primary risk: integration flexibility can increase maintenance overhead if governance is weak.

The right archetype depends on operating stage, not hype.

Practical Comparison Model for Shortlisting Tools

After identifying the right archetype, run a consistent simulation across shortlisted tools. Use the same campaign scenario for each platform and score outcomes objectively.

Recommended simulation:

- Build one cold-traffic page variant.

- Build one warm-traffic comparison variant.

- Add one proof update and one CTA change.

- Connect analytics and form destination.

- Publish and validate mobile behavior.

Track completion time, editing friction, error frequency, and control depth.

Example weighting model

- editing speed: 20%

- conversion control: 20%

- integration reliability: 15%

- reusable section workflow: 15%

- SEO/performance control: 10%

- collaboration quality: 10%

- cost predictability: 10%

Use independent scoring by two contributors before group review. Independent scoring reduces group bias and reveals true disagreement areas.

Free vs Paid Paths: What Actually Changes

Free AI builders are useful for early-stage teams with uncertain positioning. They lower the cost of experimentation and can speed initial direction finding.

The limitation appears when teams move beyond simple prototypes. Paid distribution and segmented offers usually require stronger control over templates, integrations, analytics, and brand consistency than free plans provide.

A practical progression:

- validation stage: free or low-cost tools for message exploration

- stabilization stage: move to a builder with stronger conversion controls

- scaling stage: prioritize integration reliability and workflow governance

Switch too late and growth slows from tool constraints. Switch too early and resources get consumed before fit is clear.

Cost Models and Hidden Expenses

Many teams underestimate true operating cost because they focus on subscription price alone.

Real cost includes:

- platform subscription and renewals

- contributor seat growth

- integration tooling and maintenance

- content and proof refresh labor

- migration and rework risk

Hidden cost is often highest in rework. If your tool cannot reuse successful components cleanly, teams rebuild too much and lose velocity.

Build a 12-month cost projection using conservative, expected, and aggressive growth scenarios. Include estimated page volume, experiment cadence, contributor count, and integration requirements.

Conversion Architecture That Scales Across Segments

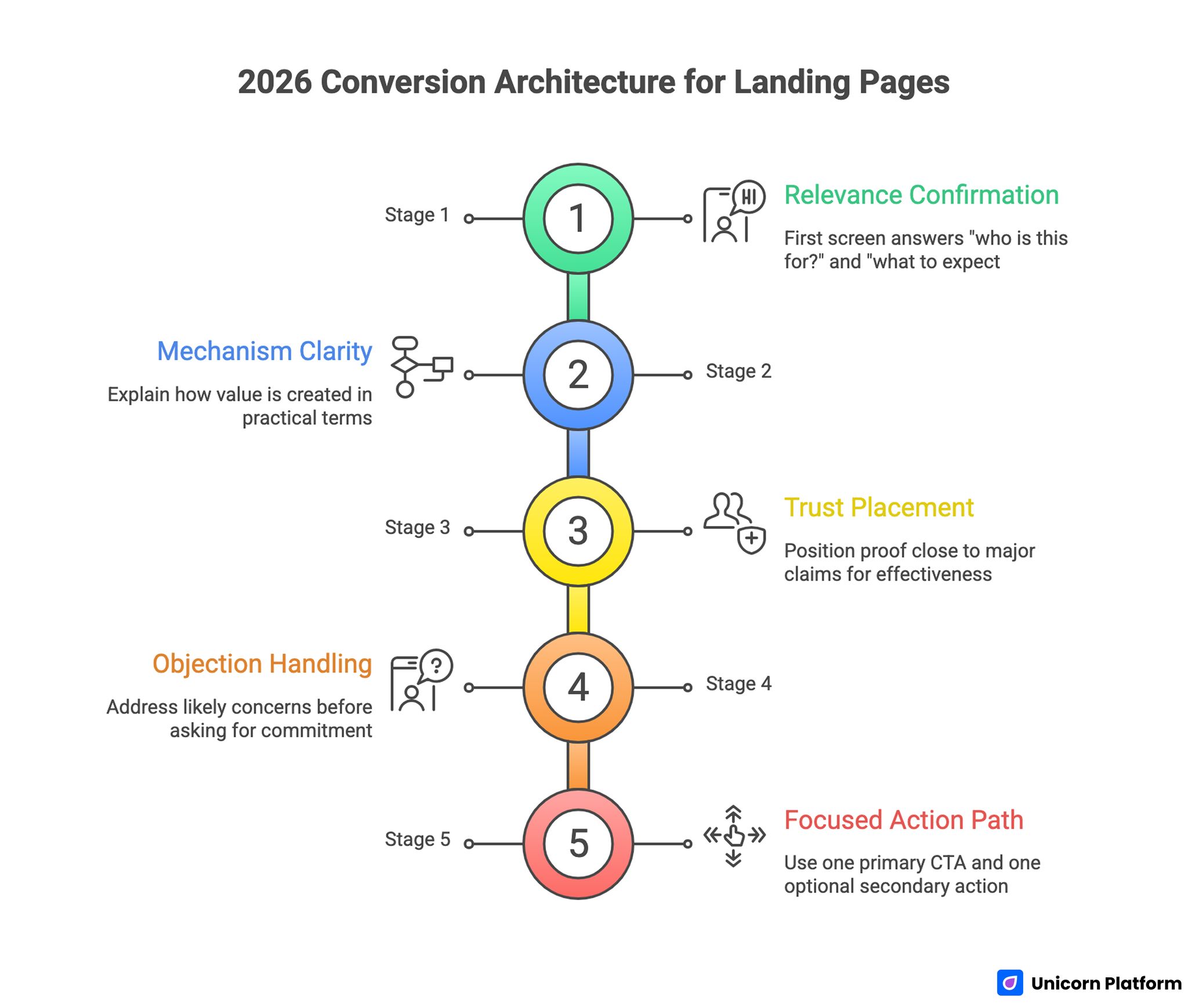

Key Stages of Landing Page Conversion Architecture

The strongest pages in 2026 still follow a clear decision flow. AI assistance changes drafting speed, not user psychology.

Stage 1: relevance confirmation

The first screen should answer who the page is for and what first result to expect. Ambiguous first screens create immediate bounce risk.

Stage 2: mechanism clarity

Explain how value is created in practical language. Avoid long feature lists before users understand workflow logic.

Stage 3: trust placement

Position proof close to major claims. Distributed proof is usually more effective than one testimonial cluster near the bottom.

Stage 4: objection handling

Address likely concerns before asking for commitment. Typical objections include fit, implementation effort, time-to-value, and pricing confidence.

Stage 5: focused action path

Use one primary CTA per variant and one optional lower-friction secondary action. This improves both user confidence and experiment clarity.

When teams keep this structure stable and test one variable at a time, learning compounds faster.

AI Drafting Standards: From Raw Output to Publish-Ready Pages

AI copy can accelerate production, but quality controls determine outcomes.

Use a strict editorial pipeline:

- Strategy pass: confirm objective, audience, and intent stage.

- Clarity pass: remove vague claims and generic language.

- Proof pass: attach evidence to key promises.

- CTA pass: verify action path and commitment transparency.

- Quality pass: readability, consistency, and technical checks.

Reject drafts that sound polished but remain non-specific. Specificity is a stronger conversion lever than style.

For teams making frequent post-launch adjustments, this guide on updating landing pages with AI prompts can support controlled revision cycles without disrupting core structure.

Prompt quality dramatically affects AI output. OpenAI’s prompt engineering guidelines recommend structured prompts with clear audience, intent, and proof placeholders to generate reliable first drafts

WordPress and CMS-Integrated Workflows

Teams in CMS ecosystems often need to balance AI drafting speed with plugin compatibility, performance constraints, and operational consistency.

A practical workflow for CMS-first teams:

- generate draft structure in AI builder layer

- map sections to CMS-compatible components

- validate performance impact from plugin stack

- run mobile and form QA before scaling traffic

Common risk: adding too many plugins to replicate native conversion features. Complexity rises, reliability drops, and iteration slows.

CMS workflows can still perform well when architecture remains lean and governance is explicit.

SEO and AI-Search Readiness for Landing Pages

Landing pages should be discoverable and decision-ready. These goals are aligned when structure is clear and message intent is specific.

Core SEO readiness includes:

- precise title and heading hierarchy

- clear internal routing by intent stage

- metadata aligned to actual page promise

- performance-safe assets and mobile quality

AI-search readiness also depends on direct answer quality. Sections that clearly answer fit, mechanism, and constraint questions are easier for retrieval systems and users to interpret.

If your current structure is broad or inconsistent, this practical conference and seminar landing-page framework is useful as a clarity benchmark for intent-first sequencing.

Aligning metadata, headings, and content with user intent is key to visibility. Moz emphasizes that combining technical SEO with clear editorial structure ensures pages are both discoverable and persuasive.

Trust Design and Responsible AI Messaging

Trust is one of the strongest conversion drivers for AI offers. Visitors need to understand both value and boundaries.

Effective trust modules include:

- capability boundaries in plain language

- process transparency for key outputs

- evidence freshness dates for metrics and proof

- clear escalation path when automation is uncertain

Trust information should appear near high-impact claims and before high-commitment CTAs.

Pages that delay trust explanations often see strong initial engagement and weak completion quality.

30-Day Launch Plan for AI Landing Page Programs

Week 1: objective lock and baseline rebuild

Set one page objective, one audience, and one primary success metric. Rebuild first-screen message and CTA path for specificity.

Week 2: mechanism and proof upgrade

Add concise process explanation, distributed trust blocks, and objection handling. Remove non-essential sections that dilute flow.

Week 3: controlled segmentation tests

Launch one cold-intent and one warm-intent variant. Test one major variable only, then monitor qualified conversion behavior.

Week 4: retain, revise, and standardize

Retain winning sections, revise underperformers, and update reusable templates. Document outcomes and next hypotheses.

This plan is realistic for lean teams and strong enough for structured growth programs.

90-Day Scale Plan

Days 1-30: stabilize baseline quality

Improve consistency across message, proof, and CTA hierarchy. Verify analytics reliability before expanding variant volume.

Days 31-60: expand by adjacent segments

Add high-overlap audience variants with minimal structural drift. Adapt only the sections that influence fit and objection logic.

Days 61-90: operationalize governance

Formalize ownership, release gates, and monthly proof-refresh cadence. Archive weak pages and protect high-performing patterns.

Scaling becomes safer when teams institutionalize what works instead of restarting every cycle.

Weekly Optimization Cadence That Compounds

A consistent weekly operating rhythm usually beats irregular large updates.

Suggested cadence:

- Monday: review signal quality by section and source.

- Tuesday: select one hypothesis with clear metric target.

- Wednesday: implement change in one page family.

- Thursday: run QA checks and mobile verification.

- Friday: document results and keep/revise/archive decision.

This rhythm creates cleaner data and more reliable decision speed.

Common Failure Patterns and Fast Corrections

Failure pattern: high CTR, low qualified actions

Correction: tighten first-screen specificity and qualification language. Traffic quality often improves when promises and constraints are explicit.

Failure pattern: many tests, little learning

Correction: limit to one major variable per cycle and standardize experiment logs.

Failure pattern: visually strong pages, weak trust

Correction: move proof and boundary language closer to major claims and final CTAs.

Failure pattern: free-tool lock-in at scale

Correction: migrate when control limits begin to hurt lead quality or iteration speed.

Failure pattern: stale proof assets

Correction: implement monthly proof refresh and metric validation ownership.

Failure pattern: inconsistent channel messaging

Correction: map ad promise to page confirmation and proof section before launch.

Team Governance for Multi-Page Programs

As page count grows, governance determines whether quality improves or decays.

Practical role map:

- positioning owner: message clarity and intent fit

- proof owner: evidence validity and freshness

- growth owner: experiment design and metric interpretation

- QA owner: release checks and technical reliability

Each release should have explicit approval and rollback accountability. Shared ownership without defined responsibility usually creates delays and errors.

Operational governance is not bureaucracy when it protects conversion reliability.

Scenario Example: Turning Fast Drafting Into Real Gains

A growth team produced frequent AI-generated pages and saw strong publication speed with inconsistent pipeline results. Their first major fix was process, not tool replacement.

They standardized first-screen specificity, distributed proof placement, and one-primary-CTA rules across all variants. They also moved from broad redesigns to weekly one-variable tests.

Within two cycles, qualified lead share improved because visitors could self-qualify faster and trust signals appeared earlier in the decision path.

The lesson was simple: speed became valuable only after structure and governance were fixed.

WordPress-Centric Implementation Path for AI Landing Pages

Many growth teams use WordPress ecosystems for core content operations and still want AI-assisted landing-page speed. That combination can work well when implementation order is controlled and plugin complexity is limited.

A practical WordPress path starts with role clarity. Decide whether WordPress is the publishing layer only or also the experimentation layer. If WordPress is doing both, you need stronger change controls because testing scripts, caching settings, and plugin behavior can interfere with clean measurement.

Start with a clean technical baseline:

- lightweight theme or framework with predictable rendering behavior

- one primary page-builder layer, not several overlapping builders

- one analytics layer with verified event naming conventions

- one form routing workflow connected to CRM destination

Once baseline is stable, map your landing-page architecture by intent stage. Cold-intent pages need fast clarity and low-friction micro-commitment paths. Comparison-intent pages need mechanism detail and structured proof. Decision-intent pages need implementation confidence and commitment clarity.

Step-by-step operational sequence for WordPress teams

Step 1: Define one campaign objective and one primary audience segment.

Avoid building generalized pages for multiple audience types in one flow. Segment-specific pages improve both conversion quality and test interpretation.

Step 2: Select one reusable section system.

Decide how hero, mechanism, proof, objection handling, and CTA modules will be reused across campaigns. Reusable sections reduce QA time and keep narrative quality stable.

Step 3: Configure performance defaults before design iteration.

Set image compression standards, script loading priorities, and cache rules before running variant tests. If performance shifts unpredictably between variants, conversion comparisons become less reliable.

Step 4: Build two variant templates from the same structure spine.

Use one outcome-first hero variant and one mechanism-first hero variant. Keep all other major variables stable so the test remains interpretable.

Step 5: Add trust boundaries as first-class page components.

Include capability limits, data-handling summary, and escalation path near key claims. Risk-sensitive users need these details before they are ready to act.

Step 6: Validate device and input behavior.

Check thumb-zone CTA access, keyboard behavior on form fields, and scroll behavior under real device conditions. Desktop-only QA is not enough for modern acquisition flows.

Step 7: Launch to controlled traffic windows.

Use stable source windows and avoid concurrent broad campaign edits while first results are collected. Early signal quality determines later decision quality.

Step 8: Archive weak variants quickly.

Underperforming variants should be retired once confidence is sufficient. Keeping too many weak pages live creates operational drag and reporting noise.

Common WordPress-specific risks and mitigations

Risk: too many plugins for overlapping functions.

Mitigation: maintain one approved plugin list with clear ownership and quarterly review.

Risk: inconsistent template behavior across contributors.

Mitigation: lock core section templates and expose only approved editable zones.

Risk: tracking fragmentation from mixed scripts.

Mitigation: maintain one event schema and one change log for tracking updates.

Risk: performance degradation after content updates.

Mitigation: include page-speed checks in publish gates, not post-release cleanup.

With this approach, WordPress teams can keep AI-assisted speed while preserving conversion reliability and operational clarity.

Pricing Models, ROI Reality, and Hidden Cost Forecasting

Pricing conversations often start and end at subscription tiers. That is rarely enough for operational decisions.

A realistic evaluation separates cost into four buckets:

- platform and seat cost

- integration and data-routing cost

- content and proof maintenance cost

- performance loss cost from tool limitations

The fourth bucket is the most important and most ignored. If your tool cannot support clean iteration and stable conversion controls, you pay through lower lead quality and slower learning, even when subscription fees look affordable.

Pricing models you will encounter most often

Subscription-based pricing is predictable for baseline planning and works well when contributor count and page volume are stable. Risk appears when seat growth is rapid or when key conversion features are locked behind high tiers.

Pay-per-use pricing can work for low-volume experimentation, but costs can climb quickly during active optimization windows with high variant turnover.

Hybrid pricing models mix subscription and usage multipliers. They can be efficient if your team has clear release discipline and avoids uncontrolled draft volume.

One-time deals may look attractive for budget-constrained teams, but long-term support quality, update cadence, and integration maturity should be evaluated carefully before relying on them for core operations.

Hidden costs that materially affect outcomes

Migration cost is often underestimated. If a tool switch becomes necessary during growth, page transfer, tracking reimplementation, and QA cycles can consume weeks.

Contributor training cost also matters. Tools with steep workflow complexity reduce effective output, especially when non-technical teammates are central to operations.

Proof maintenance cost grows with page volume. Teams that produce many segmented pages without refresh ownership quickly accumulate stale trust assets, which lowers conversion quality.

Experiment complexity cost rises when tools lack clean variant controls. Teams spend more time diagnosing test contamination and less time improving proven patterns.

Practical ROI model for decision meetings

Use a quarterly ROI worksheet with five variables:

- average time-to-publish per page variant

- qualified conversion rate by intent stage

- average cost per qualified action

- contributor hours per iteration cycle

- rework rate from failed or noisy experiments

This model turns tool choice into an operating decision tied to business outcomes, not a design preference discussion.

Tool-Selection Playbooks by Team Type

Different teams need different tool characteristics. A universal "best builder" rarely exists in practice.

Startup product team playbook

Primary objective: fast validation with clean signal quality.

Tool preference: high editing speed, simple collaboration, reliable analytics hookup, and low setup overhead.

Operational rule: prioritize one primary funnel and one adjacent segment before expanding page families. Over-segmentation too early reduces learning clarity.

Agency playbook

Primary objective: repeatable quality across many client contexts.

Tool preference: reusable component libraries, access controls, multi-project governance, and export flexibility.

Operational rule: standardize launch gates across all accounts. Variation in client vertical should affect narrative and proof, not release discipline.

E-commerce growth team playbook

Primary objective: improve paid traffic yield and post-click confidence.

Tool preference: strong conversion controls, clear product-proof modules, and straightforward integration with commerce and attribution systems.

Operational rule: test one commercial variable at a time, such as offer framing, trust placement, or checkout-path clarity.

B2B demand-gen team playbook

Primary objective: higher-quality pipeline from segmented acquisition.

Tool preference: CRM-friendly forms, role-specific variants, and trustworthy mechanism explanation components.

Operational rule: align page language with sales qualification criteria so lead routing and follow-up quality remain consistent.

Creator and media team playbook

Primary objective: convert attention into subscription and qualified demand.

Tool preference: fast campaign-page creation, simple A/B controls, and clean mobile readability.

Operational rule: keep message promise and CTA commitment aligned with audience trust stage. Over-aggressive asks can degrade long-term audience value.

Team-type playbooks shorten selection cycles because they connect tool features to real operating goals.

Pre-Launch and Post-Launch QA Framework

Reliable page programs use QA as a conversion function, not a technical afterthought.

Pre-launch QA checks

- first-screen promise matches source message

- mechanism explanation is specific and truthful

- proof appears near major claims

- CTA hierarchy is clear and non-conflicting

- form behavior is verified end to end

- mobile interaction is validated on real devices

- analytics events are firing with correct labels

Post-launch QA checks

- qualified conversion quality by source

- segment-level drop-off diagnosis by section

- proof freshness and claim validity

- CTA commitment alignment by intent stage

- performance stability under traffic changes

- weekly documentation of test outcomes and decisions

Release-gate policy

Do not scale traffic if core QA gates fail.

Fixing trust or flow issues before scale almost always yields better return than paying for more traffic into a weak page.

Do not keep weak variants live "just in case."

Archive quickly and preserve only pages that pass both quality and conversion criteria.

Do not run broad redesigns during active tests.

Stability windows are required for interpretable data and confident decisions.

This QA framework keeps page operations predictable as output volume grows.

Advanced Experiment Backlog for Mature Programs

Once core conversion architecture is stable, mature teams need a structured backlog that prioritizes high-impact experiments over low-value cosmetic changes.

A useful experiment backlog is grouped by decision stage:

- relevance stage experiments: hero specificity, audience framing, source-message alignment

- understanding stage experiments: mechanism explanation depth, visual flow sequence, terminology simplification

- trust stage experiments: proof format, claim-validation placement, boundary transparency wording

- action stage experiments: CTA framing, form friction, commitment clarity

This stage-based grouping helps teams avoid random test selection. It also makes cross-team planning easier because each proposed change is tied to a specific user decision bottleneck.

Prioritization model for backlog selection

Use a practical scoring formula:

- expected impact on qualified conversion

- confidence based on prior evidence

- implementation effort and QA complexity

- risk of contaminating active tests

Choose one high-impact, medium-effort experiment and one low-effort maintenance improvement per weekly cycle. That balance improves momentum while keeping the system stable.

Reporting model that improves decision speed

Test reports should be short and comparable. Use a fixed template:

- hypothesis and decision-stage target

- pages and segments included

- primary metric and guardrail metrics

- result confidence and interpretation

- keep/revise/archive decision

- next test recommendation

Teams that standardize reporting usually make faster and more consistent calls on weak variants. They also reduce repetitive debates because decision history is visible and structured.

Guardrails for high-volume testing

Avoid running multiple high-impact tests on the same page family in one cycle. Keep one major variable per page family to protect interpretability.

Pause backlog expansion when data quality drops. If attribution looks unstable or traffic mix changes sharply, stabilize measurement before adding new experiments.

Retire stale tests from the backlog. Any test idea older than one quarter should be re-evaluated against current user behavior and commercial priorities before execution.

Quarterly review for backlog quality

At quarter end, review test outcomes by decision stage and identify where learning density is strongest. Some teams over-invest in hero tests and under-invest in trust-sequencing or form-friction tests, which creates blind spots in optimization.

Use quarterly reviews to rebalance backlog allocation and update template standards. If one proof format repeatedly outperforms alternatives, codify it as default and move experimentation effort to the next bottleneck layer.

This backlog discipline transforms testing from ad hoc activity into a dependable operating asset that compounds over time. It also improves onboarding for new contributors, because test history and decision rationale become explicit rather than tribal. Teams with explicit experiment memory usually scale faster with fewer repeated mistakes across campaigns.

FAQ: AI Landing Page Builders in 2026

What is the main advantage of AI landing page builders?

The main advantage is faster drafting and launch velocity. Business impact appears when speed is paired with strong conversion operations and quality controls.

Are free AI builders enough for serious campaigns?

They can work for early validation. Serious campaigns usually need stronger control over integrations, testing workflows, and brand consistency.

How do we choose between many similar tools?

Use a weighted scorecard and run the same scenario test in each tool. Evaluate operational fit, not marketing claims.

Do AI-generated landing pages convert better by default?

No. They convert better only when message clarity, trust placement, and CTA sequencing are intentionally designed.

What is the best first test after launch?

Test first-screen positioning clarity against one alternative. Early relevance improvements often create the fastest conversion gains.

How many variants should we run at once?

Run only what your team can measure and maintain responsibly. Excess variants without governance reduce learning quality.

Should we optimize for traffic volume or qualified actions first?

Qualified actions first. Volume without intent fit usually creates cost and noise without durable growth.

How often should proof sections be updated?

At least monthly, or faster when major offer, metric, or product changes occur.

Can no-code platforms support long-term scale?

Yes, when section reuse, governance, and measurement standards are mature.

What is the biggest long-term mistake teams make?

Treating landing pages as one-time projects. Strong programs operate pages as evolving systems with explicit ownership and weekly learning loops.

How do we keep AI drafts from sounding generic?

Use constrained prompts, strict editorial passes, and evidence-based claim rules. Generic language should never pass final QA.

What should be in every final QA gate?

Style consistency, claim evidence checks, trust placement review, CTA clarity, mobile behavior, and analytics validation should all be mandatory before scale. Consistency wins over random redesign cycles.

Final Takeaway

AI landing page builders create an execution advantage only when teams pair generation speed with disciplined decision systems. The winning model in 2026 is clear: choose tools by operational fit, run conversion-first page architecture, and iterate with strict quality governance.

With Unicorn Platform, teams can operationalize this model effectively through reusable structures, fast controlled updates, and evidence-driven optimization loops that improve both speed and conversion quality over time.