Table of Contents

- What AI Already Does Well in No-Code Teams

- A Practical 9-Step Workflow for AI-Assisted Page Production

- 30-Day Implementation Plan

- Common Failure Patterns and Practical Fixes

- FAQ

The most common AI question in software and web teams is simple: will machines take over coding work completely? It is a compelling headline, but it is usually a poor planning framework. Teams do not fail because they guessed a trend wrong. Teams fail because they adopt tools without clear ownership, quality controls, and decision logic.

Programming is not a single task. It is a chain of responsibilities that includes discovery, architecture, implementation, validation, release, and iteration. AI changes parts of this chain quickly, but it does not remove accountability for business outcomes.

No-code environments make this more visible. Teams can publish faster than before, so strategic errors also move faster. The winning approach is not "AI versus humans." The winning approach is capability allocation: which steps AI can accelerate safely, and which steps people must continue to own.

Unicorn Platform is strongest when used with that operating mindset. Speed then becomes an advantage because it is connected to structure, governance, and measurable learning.


Quick Takeaways

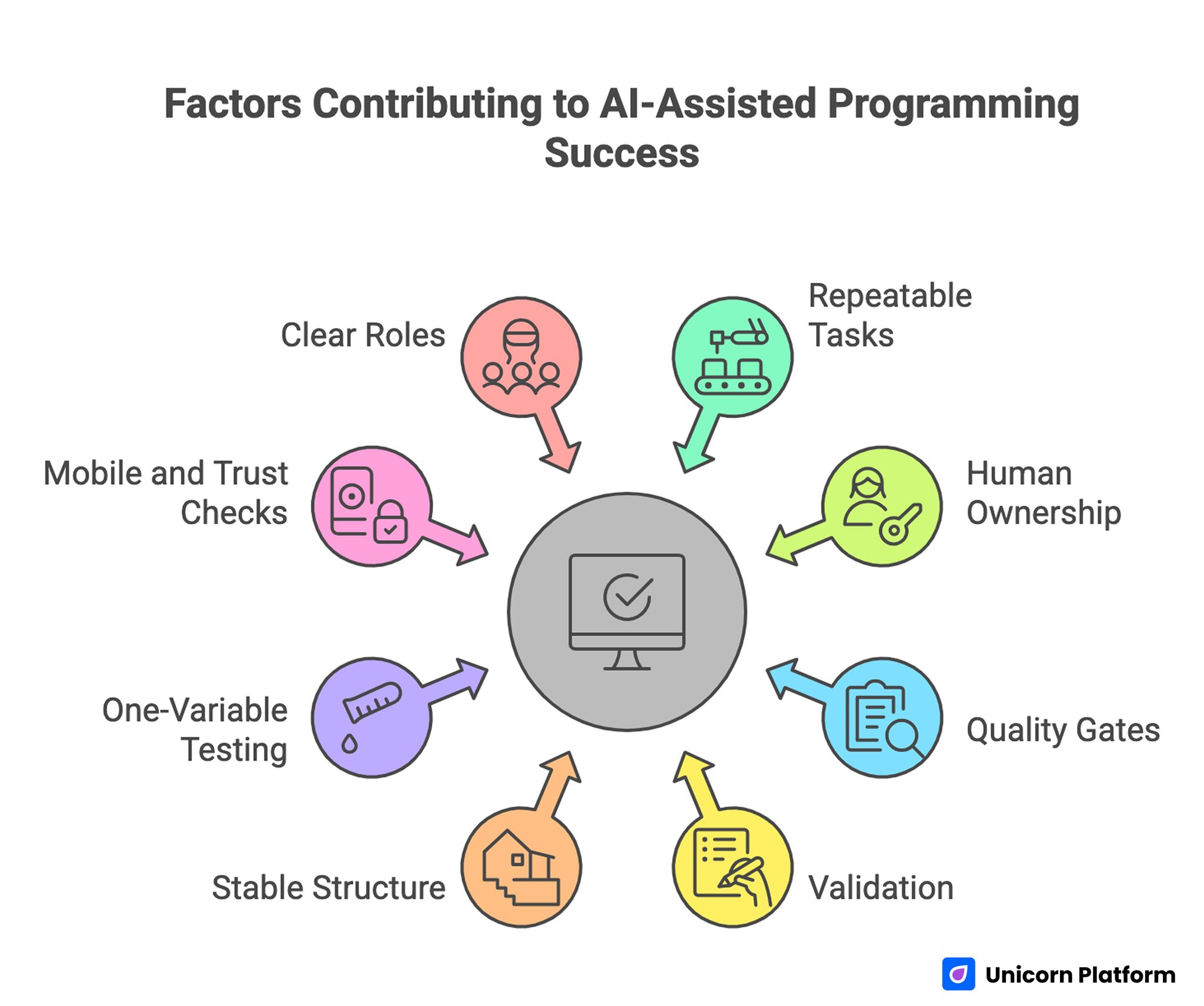

Factors Contributing to AI-Assisted Programming Success

- AI improves throughput in repeatable production tasks.

- Human ownership remains critical for strategy and final accountability.

- No-code speed creates value only when quality gates are strict.

- Draft generation is easy; trustworthy outcomes require validation.

- Page structure should stay stable while copy and proof evolve.

- One-variable testing is more useful than high-volume random edits.

- Mobile and trust checks should block releases when they fail.

- Teams need clear roles before scaling AI-assisted workflows.

Why the Debate About Programmer Replacement Is Usually Misframed

The replacement question sounds binary, but real work is not binary. A single product launch might involve user research, requirement decisions, architecture tradeoffs, copy development, landing page implementation, analytics setup, QA, and post-launch optimization. AI may assist in each stage, yet assistance is not equivalent to full ownership.

According to the World Economic Forum in its Future of Jobs Report, automation is expected to transform tasks rather than fully replace core professional roles, with humans continuing to play a central role in decision-making, creativity, and accountability.

A second framing problem is confusing output with value. AI can generate code, layouts, and copy quickly. Business value still depends on accuracy, trust, fit with user intent, and consistency across channels.

A third problem is ignoring operational risk. Fast AI-generated output can include factual mistakes, weak security assumptions, inaccessible UI decisions, and misleading claims. If teams do not run strong checks, speed amplifies risk.

A better planning question is practical: how should work be redistributed so teams can ship faster while preserving quality and trust? This framing leads to better operational choices than replacement-style debates.

What AI Already Does Well in No-Code Teams

AI is already useful in high-volume, low-ambiguity tasks. It can generate first drafts, suggest content variants, propose section alternatives, summarize feedback, and speed up repetitive formatting.

In page production workflows, AI is often strongest at ideation and early synthesis. It can produce multiple initial directions quickly, which helps teams avoid blank-page delays.

AI also helps with routine transformation work. Examples include rewriting a section for another audience, condensing long notes, or generating initial FAQ candidates from support transcripts.

These advantages are real, and teams should use them. The operational mistake is stopping at generation and skipping decision-level review.

When teams are setting up AI-assisted page workflows, the guide on how to create AI landing pages can help establish a repeatable draft-to-review path. It is most effective when paired with explicit ownership and release rules.

Where Human Ownership Remains Non-Negotiable

Even with strong AI support, several responsibilities should remain human-led because they shape risk and outcomes directly. This is where accountability should stay clear.

Positioning is one of them. AI can provide options, but deciding who the page is for and what promise it should make requires market judgment and business context.

Claim validation is another. Teams need humans to verify facts, remove overstatements, and align language with legal and trust boundaries.

Release accountability is also human-owned. Someone must decide whether a page is acceptable to publish based on product truth, user clarity, and brand risk.

Post-launch interpretation remains a human responsibility as well. AI can summarize metrics, but teams still need judgment to decide which changes are meaningful and which are noise.

Capability Allocation Framework for AI + No-Code Work

A practical way to run mixed workflows is to map each task to one of three buckets: AI-assisted, human-led, or joint review. Teams can then scale speed without losing control.

AI-assisted by default

- draft generation from structured briefs

- variant ideation for headlines and section flow

- summarization of user feedback and notes

- routine formatting and section-level rewrites

Human-led by default

- audience and positioning decisions

- claim accuracy and risk review

- trust and policy communication

- final release sign-off and accountability

Joint review tasks

- mechanism sections that explain how value is delivered

- objection handling and trust placement

- CTA wording and form friction strategy

- test hypothesis design and result interpretation

This framework keeps teams from drifting into either extreme: full automation dependence or slow manual bottlenecks. Balanced execution usually produces more reliable outcomes.
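The three buckets above can be written down as a simple lookup so that ownership is explicit rather than implied. The following is a minimal sketch, not a prescribed implementation: the task keys are hypothetical shorthand for the list items above, and the rule that unmapped tasks fall back to human-led is an assumption made here for safety.

```python
from enum import Enum


class Bucket(Enum):
    AI_ASSISTED = "ai-assisted"
    HUMAN_LED = "human-led"
    JOINT_REVIEW = "joint review"


# Task keys are illustrative shorthand for the list items in this section.
TASK_BUCKETS = {
    "draft_generation": Bucket.AI_ASSISTED,
    "variant_ideation": Bucket.AI_ASSISTED,
    "feedback_summarization": Bucket.AI_ASSISTED,
    "routine_formatting": Bucket.AI_ASSISTED,
    "positioning": Bucket.HUMAN_LED,
    "claim_review": Bucket.HUMAN_LED,
    "policy_communication": Bucket.HUMAN_LED,
    "release_signoff": Bucket.HUMAN_LED,
    "mechanism_sections": Bucket.JOINT_REVIEW,
    "objection_handling": Bucket.JOINT_REVIEW,
    "cta_and_form_strategy": Bucket.JOINT_REVIEW,
    "test_design": Bucket.JOINT_REVIEW,
}


def bucket_for(task: str) -> Bucket:
    """Return the bucket for a task.

    Assumption: a task nobody has mapped yet is treated as human-led.
    """
    return TASK_BUCKETS.get(task, Bucket.HUMAN_LED)


def needs_human_signoff(task: str) -> bool:
    """Human-led and joint-review tasks both require a person to approve."""
    return bucket_for(task) is not Bucket.AI_ASSISTED
```

Defaulting unknown tasks to human-led means a new kind of work has to be deliberately moved into the AI-assisted bucket rather than drifting there.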

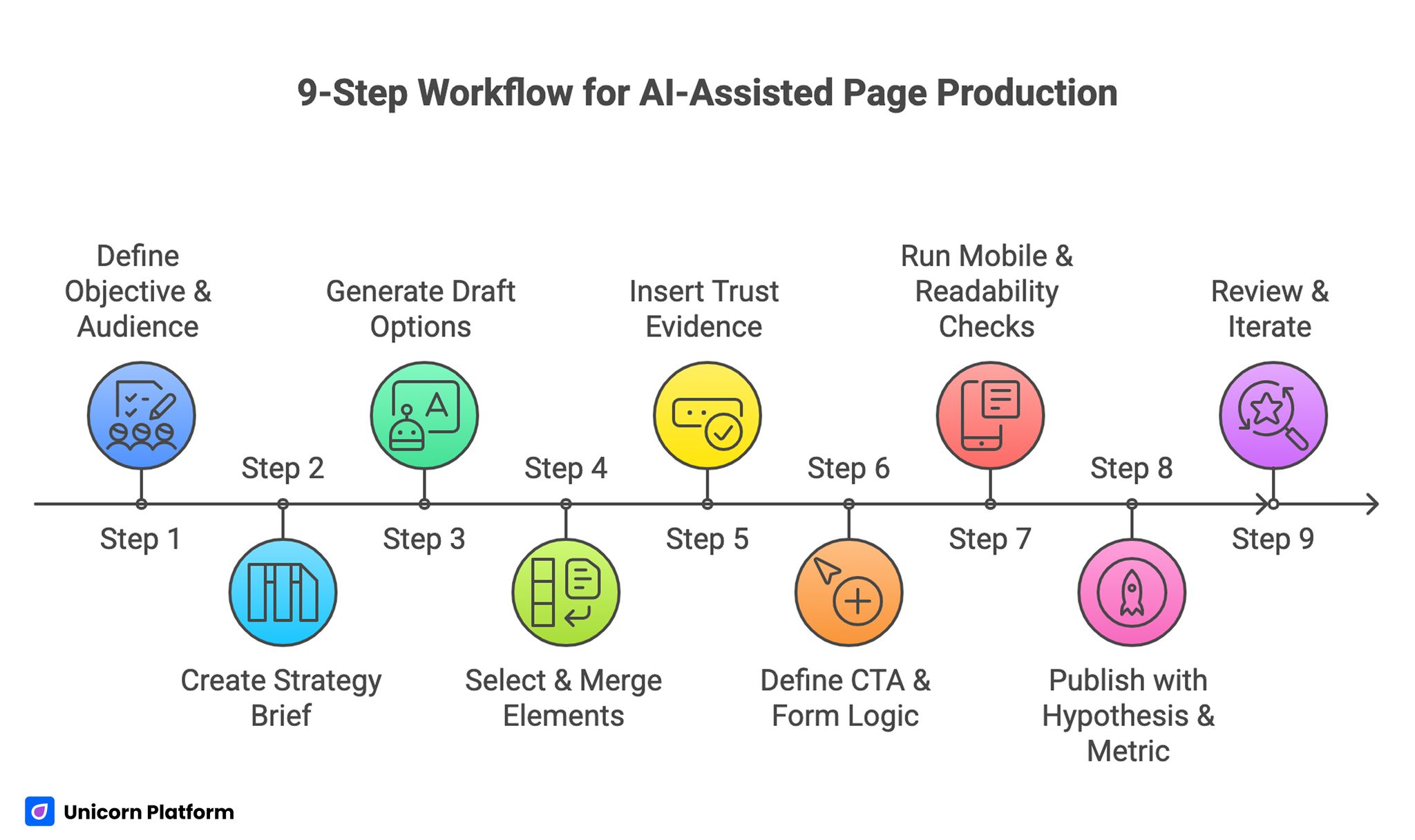

A Practical 9-Step Workflow for AI-Assisted Page Production


Fast teams benefit from one standardized sequence. The goal is to keep quality stable while reducing cycle time.

Step 1: Define objective and audience

Set one page objective and one primary segment. Mixed objectives usually create muddled messaging and weaker conversion.

Step 2: Create a short strategy brief

Document problem framing, promise, mechanism summary, risk boundaries, and desired action. This gives AI and humans the same context.

Step 3: Generate structured draft options

Use AI to produce multiple directions, but keep structural constraints explicit so outputs stay usable. Clear constraints reduce cleanup work later.

Step 4: Select and merge strongest elements

A human editor should curate options and remove generic wording. Curation quality matters more than generation volume.

Step 5: Insert trust evidence near claims

Map each major claim to one confidence cue, such as a customer quote or a supporting data point, and place it next to the claim. Avoid collecting all proof in a separate block.

Step 6: Define CTA path and form logic

Align action language with readiness level. Keep form friction low but include routing-critical questions.

Step 7: Run mobile and readability checks

Validate first-screen clarity, tap ergonomics, and content scan quality before release. Mobile behavior should be checked as a release condition.

Step 8: Publish with hypothesis and metric

Every release should include one primary metric and one guardrail metric. This keeps fast iteration tied to meaningful progress.

Step 9: Review and iterate with one major variable

Run controlled tests and keep concise release notes so learning compounds across cycles. Consistent logs improve decision speed in future launches.
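The one-major-variable rule in Step 9 can be checked mechanically before a test goes live. This is a rough sketch under stated assumptions: each page version is described as a flat dictionary, and the field names (`headline`, `cta_label`, `form_fields`) are hypothetical examples rather than fields from any specific tool.

```python
def changed_variables(control: dict, variant: dict) -> list[str]:
    """List the fields whose values differ between control and variant."""
    keys = control.keys() | variant.keys()
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def is_single_variable_test(control: dict, variant: dict) -> bool:
    """True only when exactly one field changed, so results stay attributable."""
    return len(changed_variables(control, variant)) == 1


# Hypothetical page descriptions for illustration.
control = {"headline": "Ship pages faster", "cta_label": "Book a demo", "form_fields": 4}
clean_variant = {**control, "headline": "Launch in a day"}
noisy_variant = {**control, "headline": "Launch in a day", "cta_label": "Start free"}

print(changed_variables(control, clean_variant))  # ['headline']
print(is_single_variable_test(control, noisy_variant))  # False
```

The list of changed fields can also go straight into the release notes, which keeps the test log concise.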

Teams that need stronger structure discipline during rollout can align this workflow with a step-by-step guide to a high-converting landing page structure. This helps keep section logic stable as contributor count grows.

UX and Design in the AI Era: What Changes and What Does Not

AI has changed the speed of design exploration. It has not changed the fundamentals of UX quality.

Users still need clear hierarchy, understandable flow, trust cues at decision points, and low-friction actions. If these fundamentals are weak, AI-generated polish will not rescue outcomes.

Design teams should use AI to expand options, then evaluate options against behavior and conversion logic. Novel layouts are useful only when they improve comprehension and intent progression.

Accessibility standards remain especially important. AI can produce visually appealing outputs that still fail readability, focus order, or contrast requirements. Human review must catch these gaps before release.

Trust, Risk, and Policy Language for AI-Assisted Content

AI-assisted content can increase user skepticism if claims are too broad or confidence language feels synthetic. Trust architecture should be explicit and practical.

Explain what is automated and what is human-reviewed. Users respond better when teams communicate boundaries clearly.

Place trust modules where uncertainty appears. If a section makes a speed claim, add process evidence nearby. If a section makes an outcomes claim, provide realistic proof context.

Avoid language that implies certainty where variability exists. Honest scope and limitation statements often improve qualified conversion because they reduce mismatch.

For teams building broader no-code governance around trust and quality, the guide on how to build your custom website without coding offers a useful operational reference. It can help teams turn ad hoc edits into repeatable execution.

CTA and Qualification Strategy in AI-Enhanced Funnels

AI can increase top-of-funnel volume by improving relevance and personalization. This is valuable, but higher volume is not the same as better pipeline quality.

CTA wording should describe real next steps. Generic labels create weak intent signaling and increase low-fit actions.

Form design should match the business model. If follow-up is expensive, early qualification fields are essential. If speed is critical, staged qualification can reduce friction while preserving routing quality.

Evaluate form changes with qualification outcomes, not only completion rates. Short forms can look successful while creating expensive downstream triage.

Mobile and Performance Release Gates

High-velocity teams often test desktop deeply and mobile lightly. That pattern usually harms conversion quality.

A minimum mobile gate should confirm first-screen clarity, proof visibility before deep scroll, tap comfort for key controls, and reliable form behavior under common keyboard flows. Teams that enforce this gate usually see cleaner conversion data.

Performance checks should focus on decision-critical moments, not just aggregate page load values. Slow response near CTA or form interactions often causes disproportionate drop-off.

Release gating should be binary. If a critical check fails, the page should not launch until fixed.
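A binary gate like the one described above can be expressed in a few lines. The sketch below is illustrative only: the check names paraphrase the minimum mobile gate from this section, `hero_animation_polish` is a made-up non-critical check, and how each check gets its pass or fail value is left to the team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    passed: bool
    critical: bool = True  # assumption: checks are critical unless marked otherwise


def can_release(checks: list[Check]) -> tuple[bool, list[str]]:
    """Binary gate: any failed critical check blocks the launch."""
    blockers = [c.name for c in checks if c.critical and not c.passed]
    return (not blockers, blockers)


checks = [
    Check("first_screen_clarity", passed=True),
    Check("proof_visible_before_deep_scroll", passed=True),
    Check("tap_comfort_key_controls", passed=False),
    Check("form_keyboard_flows", passed=True),
    Check("hero_animation_polish", passed=False, critical=False),
]

ok, blockers = can_release(checks)
print(ok, blockers)  # False ['tap_comfort_key_controls']
```

There is deliberately no score or weighting here. A single failed critical check returns `False`, which matches the rule that the page does not launch until the issue is fixed.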

If your team needs practical, behavior-first optimization guidance for these gates, the article on 10 user behavior tips to optimize landing pages can help you decide what to fix first based on user impact.

Measurement Model for AI-Assisted Teams

Rapid publishing creates abundant data. Without structure, that data becomes noise.

Use a four-layer measurement hierarchy:

- clarity metrics around first-screen and mechanism engagement

- interaction metrics around trust and CTA progression

- conversion metrics around qualified submissions or bookings

- business metrics around pipeline and revenue progression

Each release should include a primary metric and a guardrail. Guardrails prevent false wins, such as higher submissions with lower qualification.
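The primary-plus-guardrail rule can be made concrete with a small comparison. This is a sketch under assumptions: both metrics are treated as "higher is better", the numbers are invented for illustration, and `guardrail_tolerance` is a hypothetical parameter for how much guardrail decline a team is willing to accept.

```python
def is_real_win(
    primary_before: float,
    primary_after: float,
    guardrail_before: float,
    guardrail_after: float,
    guardrail_tolerance: float = 0.0,
) -> bool:
    """A release counts as a win only if the primary metric improved
    and the guardrail did not drop by more than the tolerance."""
    primary_improved = primary_after > primary_before
    guardrail_held = guardrail_after >= guardrail_before - guardrail_tolerance
    return primary_improved and guardrail_held


# Illustrative numbers: submissions rise from 120 to 150,
# but the qualification rate falls from 0.40 to 0.28.
print(is_real_win(120, 150, 0.40, 0.28))  # False: a false win

# Same submission lift with the qualification rate holding steady.
print(is_real_win(120, 150, 0.40, 0.41))  # True
```

The first case is the false win described above: more submissions, lower qualification.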

Weekly review cycles are usually enough for active page programs. Monthly strategic reviews can identify deeper pattern shifts and governance gaps.

Team Roles and Skill Development in 2026

The strongest teams are not replacing all traditional roles. They are reshaping roles around higher-value decisions.

Writers and marketers increasingly act as structured editors rather than blank-page creators. Designers become system maintainers and decision-flow owners, not only asset producers.

Engineers remain important in integration, reliability, and platform-level quality. No-code removes certain bottlenecks, but technical oversight remains essential for scale and resilience.

AI literacy should be developed across functions. Teams should know how to brief tools, evaluate outputs, and enforce review standards consistently.

30-Day Implementation Plan

Week 1: Map work and ownership

Define task buckets for AI-assisted, human-led, and joint-review work. Set role owners for strategy, trust, analytics, and release QA.

Week 2: Standardize structure and trust

Lock one page backbone, define trust placement rules, and align CTA hierarchy with intent stages. This makes cross-page reviews far more consistent.

Week 3: Run focused experiments

Launch one major-variable test per page family. Review both conversion and qualification outcomes.

Week 4: Consolidate and scale

Retire weak patterns, promote validated modules, and publish a short operating note for future cycles. The note should document what worked and why.

This first month should reduce execution noise and increase interpretation confidence. It should also improve onboarding speed for new contributors.

90-Day Operating Model

Month 1: Stability

Establish predictable structure, clearer trust standards, and strict release gates across priority pages. Avoid adding extra complexity before this layer is stable.

Month 2: Optimization

Test message clarity, mechanism framing, and CTA specificity with controlled variables and cleaner hypotheses. Keep each cycle scoped so interpretation remains sharp.

Month 3: Expansion

Scale high-performing modules to related pages and onboard additional contributors through documented standards. This creates sustainable velocity instead of random spikes.

By day 90, the expected signal is consistent qualified performance, not occasional spikes from random iteration. Stability is a better indicator than isolated wins.

Common Failure Patterns and Practical Fixes

Failure pattern 1: AI-generated pages sound polished but vague

The cause is usually weak strategic input. AI draft quality cannot exceed the clarity of the brief.

Fix this by strengthening audience definition, objective clarity, and claim boundaries before generation. Better briefs usually improve every downstream step.

Failure pattern 2: Submission volume rises but lead quality drops

The cause is misaligned CTA expectations and insufficient qualification cues. Users submit without understanding the next-step commitment.

Fix this by tightening CTA language and introducing one routing-critical question early. This improves both fit and follow-up efficiency.

Failure pattern 3: Teams publish more but learn less

The cause is multi-variable changes and weak release documentation. Attribution then becomes unreliable.

Fix this by limiting each cycle to one major variable and maintaining concise test logs. This raises learning quality over time.

Failure pattern 4: Trust issues increase after AI adoption

The cause is overconfident claim language and stale proof assets. Users notice these inconsistencies quickly.

Fix this by adding explicit boundaries and scheduling regular trust-content reviews. Consistent updates preserve credibility.

Failure pattern 5: Quality drops as contributor count grows

The cause is unclear ownership and inconsistent template rules. Teams then optimize in conflicting directions.

Fix this by assigning decision rights explicitly and enforcing shared review gates. Governance should be simple but non-negotiable.

FAQ: Will AI Replace Programmers?

1. Is AI going to fully replace programmers soon?

Full replacement is unlikely in practical production teams. AI is improving execution speed, while humans remain essential for strategy, accountability, and risk control.

2. What work should teams automate first?

Start with repeatable drafting, summarization, and formatting tasks. Keep strategic decisions and release approvals human-led.

3. Can no-code teams ship reliable pages without engineers?

They can ship quickly, but reliability at scale still benefits from technical oversight, especially around integrations and measurement integrity. Engineering involvement is usually lighter than before, but still important.

4. What is the biggest risk when adopting AI workflows?

The biggest risk is speed without governance. Teams publish more output but lose quality and interpretability.

5. How should teams evaluate AI-generated copy?

Evaluate against audience fit, claim accuracy, trust clarity, and action logic. Fluency alone is not a quality signal.

6. How often should trust content be reviewed?

Monthly is a practical baseline. High-volume programs may need biweekly checks.

7. What metric should be prioritized first?

Choose one primary metric tied to qualified outcomes, then protect it with a relevant guardrail metric. This reduces false positives in optimization reviews.

8. How do we avoid keyword-stuffed AI output?

Give clear editorial constraints, then run a strict post-edit pass for natural language and readability. Manual cleanup is still necessary for publish-ready quality.

9. Should every traffic source get its own variant?

Only when intent differences are material enough to justify separate messaging emphasis. Extra variants without a reason usually increase complexity.

10. What makes AI-assisted teams successful long term?

Clear ownership, stable structure, strict quality gates, and disciplined learning loops make AI speed sustainable. This combination helps teams scale without losing trust.

Final Takeaway

AI is not a direct replacement for programming and product judgment. It is a force multiplier for teams that run clear systems. No-code environments amplify this effect because production speed is already high.

With Unicorn Platform, the most effective approach is to combine AI-assisted execution with human-led strategy, trust governance, and release accountability. Teams that operate this way improve both speed and outcome quality over time.