Table of Contents

- Why SaaS Pages Underperform Even With Strong Products

- 30-Day Execution Plan

- Common Failure Modes and Fixes

- Page Archetypes by SaaS Category

- FAQ

Most SaaS organizations are not missing ideas. They are missing reliability. Teams launch new pages, run paid campaigns, watch traffic rise, and still struggle to produce consistent trial quality or sales-ready demos. When this happens repeatedly, the problem is usually not demand. The problem is page system design.

A high-performing SaaS page does more than present product benefits. It helps the right visitor recognize fit, evaluate risk, and choose the next action with confidence. If those decisions are not supported in sequence, even strong messaging and polished visuals will underperform.

This is where operational discipline matters. Instead of treating each launch as a one-off creative project, strong teams use repeatable structure, deliberate section ownership, and measurable testing cadence. In Unicorn Platform, that approach is practical because teams can iterate quickly while preserving consistency across campaigns.


Quick Takeaways

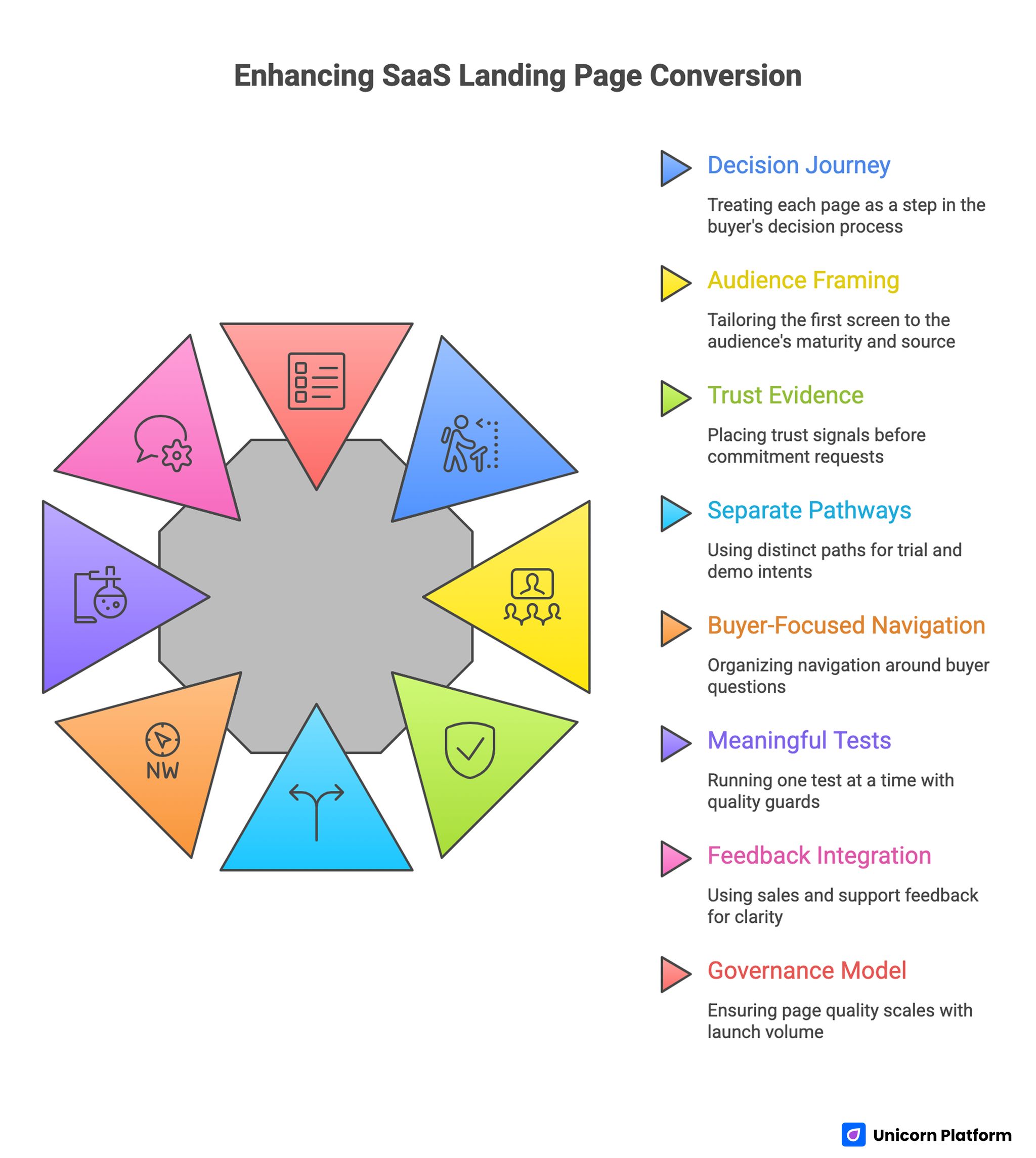

Enhancing SaaS Landing Page Conversion

- Treat each page as a decision journey, not a feature brochure.

- Match first-screen framing to audience maturity and acquisition source.

- Place trust evidence before major commitment requests.

- Use separate pathways for trial intent and demo intent.

- Keep navigation focused on buyer questions, not internal org charts.

- Run one meaningful test at a time with one quality guardrail.

- Use sales and support feedback to improve pre-conversion clarity.

- Build a governance model so page quality scales with launch volume.

Why SaaS Pages Underperform Even With Strong Products

SaaS pages often fail for structural reasons rather than copy quality alone. A page can look modern and still produce weak outcomes if information appears in the wrong order. For example, a visitor may see aspirational brand language before understanding how the product maps to their workflow. Or they may be pushed toward a demo before seeing enough confidence signals to justify effort.

Another frequent issue is audience collision. A single page attempts to speak to founders, operations managers, IT evaluators, and procurement stakeholders at once. This creates message dilution. Everyone sees parts of relevance, but no one sees a full argument tailored to their decision criteria.

The third issue is operational drift. As teams launch more campaigns, modules become inconsistent. Pricing language, trust claims, and CTA hierarchy vary across pages without clear standards. Over time, this inconsistency erodes conversion reliability even when traffic quality stays stable.

The Buyer-Decision Model for SaaS Conversion

SaaS conversion generally follows a four-stage decision model. Stage one is orientation: "Is this solution relevant to my context?" Stage two is credibility: "Can I trust this team and product?" Stage three is effort evaluation: "How hard is adoption?" Stage four is commitment: "Should I start now?"

This structured decision process is consistent with broader B2B buying behavior research. According to HubSpot, modern buyers typically interact with multiple pieces of content and evaluate several vendors before committing, which makes clear decision sequencing on landing pages essential for moving prospects toward conversion.

High-performing pages align section sequence to these decisions. Orientation comes first, then confidence, then onboarding feasibility, then action. When pages skip stages or reorder them poorly, visitors delay commitment and continue evaluation elsewhere.

This model also improves internal alignment. Product marketing can own orientation clarity. Sales can validate confidence and objection coverage. Product can verify onboarding feasibility. Growth can optimize commitment pathways. Structured ownership reduces ambiguity and speeds improvement cycles.

Defining One Primary Outcome Per Page

Every SaaS page should optimize for one primary conversion outcome. Trying to optimize simultaneously for newsletter signups, free trials, and enterprise demos usually weakens all three. The page can still include secondary routes, but one route should dominate hierarchy.

For product-led motions, the primary action is often trial or workspace creation. For sales-led motions, the primary action is qualified demo request. For hybrid motions, teams can use role-based entry paths while preserving one dominant CTA for each variant.

A clear primary outcome improves everything downstream: module prioritization, test design, and metric interpretation. Without it, teams ship changes that move surface metrics but do not improve pipeline quality.

Audience Segmentation Without Page Sprawl

Segmentation improves relevance, but uncontrolled segmentation creates maintenance chaos. A practical strategy is "stable structure, variable emphasis." Keep one core architecture and adapt only high-impact layers by audience.

The need for segmentation becomes clearer when looking at modern B2B buying dynamics. Research from Gartner shows that B2B purchase decisions often involve multiple stakeholders across departments, which means landing pages must help different evaluators quickly identify the information relevant to their role.

For early-stage companies, emphasize time-to-value and setup simplicity. For mid-market operators, emphasize workflow control, governance, and integrations. For enterprise buyers, emphasize reliability, security posture, and procurement alignment.

The structure remains consistent: fit clarity, proof, implementation pathway, and action. What changes is the order and depth of detail within those blocks. This protects QA while delivering contextual relevance.

First-Screen Architecture That Reduces Bounce

The first screen has one job: make the right visitor feel that staying is rational. This requires specific value framing, clear audience fit, and one obvious next step. If the first screen is overloaded with vague claims, hero visuals become decorative noise.

A strong first-screen formula includes a concrete outcome statement, a short support line addressing likely hesitation, and a primary CTA with action clarity. Supporting links can exist, but they should not compete equally with the main action.

For teams refining first-screen structure, this SaaS content hierarchy guide is useful for turning abstract messaging into section-level decisions.

Messaging Precision: From Claims to Operational Outcomes

SaaS buyers respond to operational clarity. "Improve efficiency" is weak because it is generic. "Reduce handoff delays by giving every project owner one live status view" is stronger because it connects promise to workflow behavior.

Every major claim on the page should answer one practical question: what changes in daily work after adoption? This framing improves both conversion and sales alignment because it sets expectations early.

Avoid inflated certainty language. Credibility is stronger when claims are precise and scoped. A trustworthy page communicates capability and constraints in a way that helps buyers self-qualify accurately.

Workflow Demonstration Strategy

Screenshots alone rarely build confidence. Buyers need context for how the product fits into real operations. A good workflow section explains where the product enters a process, what it replaces or simplifies, and what immediate outcome appears.

Keep this demonstration concise and sequential. Show a short journey from trigger to resolution: input, automation or collaboration step, and measurable result. This gives visitors a practical mental model of adoption.

For demo-focused pages, workflow sections should appear before final CTA pressure. For trial-focused pages, workflow sections can support onboarding confidence by reducing uncertainty around first actions.

Proof Architecture: Evidence by Decision Stage

Proof should be layered by stage rather than dumped into one testimonial block. Early-stage proof can include recognizable customer categories and high-level trust indicators. Mid-stage proof should address objections such as setup complexity, reliability, or team adoption.

Late-stage proof should align directly to commitment concerns: support responsiveness, implementation clarity, and risk controls. This pattern helps buyers progress naturally from curiosity to confidence.

Context matters as much as quantity. One precise proof snippet that matches buyer concerns can outperform many generic quotes. Keep proof recent, attributable, and connected to use-case context where possible.

Trust Signals Beyond Logos

Logo walls are useful but limited. Mature SaaS buyers need trust depth, especially when adoption affects team operations and data workflows. Practical trust content includes reliability practices, security posture summaries, the onboarding support model, and change-management expectations.

Place trust signals near moments of friction, not only in the footer or on enterprise pages. If a buyer hesitates at the action stage, accessible trust cues can resolve uncertainty quickly.

For sales-assisted flows, ensure trust language matches what sales and success teams can actually deliver. Mismatch between page promise and onboarding reality damages both conversion quality and retention confidence.

Trial Pathway Design for Product-Led Growth

Trial pathways should optimize for activation quality, not account volume alone. If signup is easy but first-value realization is unclear, activation rates and retention suffer.

A high-quality trial section clarifies who the trial is for, what first success looks like, and what support exists during setup. This helps users assess commitment level before signup and improves post-signup outcomes.

Reduce unnecessary entry friction but keep essential qualification context. Asking for nothing can boost raw signups while increasing low-fit users. Balanced trial gating often performs better for long-term efficiency.

Demo Pathway Design for Sales-Led Growth

Demo pathways should qualify intent without forcing users through a heavy form experience. Visitors request demos when they believe the product may solve a meaningful problem and the sales interaction will be useful.

Strong demo sections answer three concerns: what the demo covers, who should join, and what decision the demo helps make. This framing increases demo quality and improves sales acceptance.

Form design should collect only fields that materially improve routing and preparation. Extra fields that do not affect qualification should be deferred to follow-up.

Navigation Strategy as a Conversion Lever

Navigation is frequently treated as a design utility rather than a conversion mechanism. In SaaS, navigation choices influence how quickly users reach confidence-critical information.

Top navigation should prioritize buyer decisions: product fit, implementation, pricing clarity, security trust, and support expectations. It should not mirror internal team structure or content ownership boundaries.

Use progressive disclosure where needed. Keep core options visible and move deep documentation into secondary layers. This preserves decision momentum without blocking deeper evaluation.

For teams improving page-level pathing and qualification flow, this SaaS lead generation framework can help connect navigation changes to pipeline outcomes.

Objection-Handling Blocks That Increase Conversion Quality

Every high-performing SaaS page handles objections proactively. Common objections include integration complexity, migration risk, team adoption difficulty, and pricing uncertainty.

Build objection blocks around realistic concerns seen in sales calls and support conversations. Each objection should have a concise response that combines process clarity and confidence evidence.

Avoid defensive tone. Objection handling works best when it acknowledges risk plainly and explains how the team mitigates it in practice.

Pricing Communication Without Friction Spikes

Pricing confusion is a major source of conversion leakage. If buyers cannot tell which plan tier fits them, they delay action or abandon evaluation.

Pricing communication should emphasize decision clarity: who each plan is for, what capabilities unlock meaningful value, and what changes operationally at higher tiers. Complex details can remain available, but first-layer explanation must be simple.

When pricing is customized, explain why and what information shapes quotes. Ambiguity without rationale erodes trust.

Integration and Implementation Clarity

For many SaaS categories, implementation confidence is the decisive factor. Buyers may like features but hesitate because they expect disruption.

Address implementation in practical terms: expected setup path, required stakeholders, typical timeline windows, and support checkpoints. This reframes adoption from unknown risk to manageable process.

Integration content should focus on business continuity, not only technical capability lists. Buyers want to understand how existing workflows remain functional during transition.

Enterprise Evaluation Layer

Enterprise buyers require additional confidence layers that self-serve pages often omit. They need signals around governance, reliability, data practices, procurement readiness, and ongoing support accountability.

A practical enterprise layer can exist within the same core architecture. It can surface trust modules, integration depth, and implementation standards without forcing all visitors through enterprise-heavy copy.

This targeted depth improves conversion quality by helping enterprise evaluators self-identify and move to the correct pathway sooner.

Mobile Research Behavior in SaaS

Even for enterprise software, early evaluation increasingly begins on mobile devices. Buyers may discover solutions during travel, between meetings, or while triaging options quickly.

If mobile pages hide key value statements, compress trust cues, or weaken CTA visibility, consideration can drop before desktop sessions occur. Mobile quality therefore affects top-funnel efficiency more than many dashboards suggest.

Test real-device behavior before launch. Prioritize first-screen orientation, readable proof blocks, and stable action visibility across common device classes.

Performance as a Trust Signal

Slow pages do not only harm conversion mechanics. They also signal operational weakness to buyers evaluating mission-critical tools.

Prioritize performance improvements on conversion-critical templates first. Above-the-fold speed, interaction readiness, and media efficiency should meet launch gates before campaign scaling.

Track performance by template and source segment. Site-wide averages can hide severe bottlenecks in paid campaign paths.
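To illustrate how a site-wide average can hide a slow campaign path, here is a minimal Python sketch. The sample load times, the template and source names, and the 2.5-second launch gate are all hypothetical values chosen for illustration, not figures from any real measurement.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical load-time samples: (template, traffic source, seconds).
SAMPLES = [
    ("home", "organic", 1.6),
    ("home", "organic", 1.8),
    ("home", "organic", 1.4),
    ("pricing", "organic", 1.8),
    ("pricing", "organic", 2.0),
    ("pricing", "organic", 1.6),
    ("demo", "paid", 4.4),
    ("demo", "paid", 4.0),
]

LAUNCH_GATE_SECONDS = 2.5  # assumed gate for this sketch


def mean_by_segment(samples):
    """Average load time per (template, source) pair."""
    buckets = defaultdict(list)
    for template, source, seconds in samples:
        buckets[(template, source)].append(seconds)
    return {segment: mean(values) for segment, values in buckets.items()}


def segments_over_gate(samples, gate):
    """Return the segments whose average load time exceeds the gate."""
    return {
        segment: average
        for segment, average in mean_by_segment(samples).items()
        if average > gate
    }


site_average = mean(seconds for _, _, seconds in SAMPLES)
slow_segments = segments_over_gate(SAMPLES, LAUNCH_GATE_SECONDS)
```

With this sample data the site-wide average sits under the gate, while the paid demo template exceeds it. That is the kind of bottleneck a single blended number would miss.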

Experimentation System: From Opinion to Evidence

SaaS teams often run too many simultaneous changes and lose decision clarity. A stronger system limits each experiment to one meaningful variable and one primary success metric.

Pair the primary metric with one guardrail metric. For example, if demo requests increase, ensure the qualification rate does not decline. This protects pipeline quality while improving volume.

Define observation windows before launch and avoid premature calls based on early noise. Consistent methodology is critical for compounding learning.
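The primary-plus-guardrail rule can be sketched as a small decision function. This is a simplified illustration in Python: the field names, the 0.02 guardrail tolerance, and the sample counts are assumptions, and the sketch deliberately skips statistical significance testing, which a real program would still need.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    visitors: int
    demo_requests: int    # numerator of the primary metric
    qualified_demos: int  # numerator of the guardrail metric


def conversion_rate(result):
    """Primary metric: demo requests per visitor."""
    return result.demo_requests / result.visitors if result.visitors else 0.0


def qualification_rate(result):
    """Guardrail metric: qualified demos per demo request."""
    if not result.demo_requests:
        return 0.0
    return result.qualified_demos / result.demo_requests


def decide(control, variant, max_guardrail_drop=0.02):
    """Ship only if the primary metric rises and the guardrail holds.

    max_guardrail_drop is a hypothetical tolerance for this sketch.
    """
    primary_up = conversion_rate(variant) > conversion_rate(control)
    guardrail_ok = (
        qualification_rate(variant)
        >= qualification_rate(control) - max_guardrail_drop
    )
    if primary_up and guardrail_ok:
        return "ship"
    if primary_up:
        return "hold: guardrail declined"
    return "reject"


control = VariantResult(visitors=10_000, demo_requests=200, qualified_demos=100)
more_but_weaker = VariantResult(visitors=10_000, demo_requests=260, qualified_demos=104)
more_and_steady = VariantResult(visitors=10_000, demo_requests=260, qualified_demos=130)
```

In this example, `decide(control, more_but_weaker)` returns a hold because the qualification rate falls from 50% to 40%, even though demo requests rise. `decide(control, more_and_steady)` returns "ship".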

Experiment Backlog Design

A practical backlog should be organized by funnel stage and expected impact. Stage one tests improve orientation clarity. Stage two tests improve confidence and objection coverage. Stage three tests reduce action friction.

Each backlog item should include hypothesis, expected lift direction, risk level, implementation owner, and metric pair. This structure keeps prioritization objective and reduces reactive testing.

Review the backlog weekly and retire stale ideas that no longer align with current traffic mix or go-to-market priorities.
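One way to keep backlog items consistent is to give them a fixed shape. The sketch below models the fields listed above as a Python dataclass and orders items by funnel stage, then by expected impact. The 1-to-5 impact score, the sample hypotheses, and the owner names are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Stage(IntEnum):
    ORIENTATION = 1  # stage one: orientation clarity
    CONFIDENCE = 2   # stage two: confidence and objection coverage
    ACTION = 3       # stage three: action friction


@dataclass
class BacklogItem:
    hypothesis: str
    stage: Stage
    expected_lift_direction: str  # "up" or "down"
    risk_level: str               # "low", "medium", or "high"
    owner: str
    primary_metric: str
    guardrail_metric: str
    expected_impact: int          # hypothetical 1-5 score for this sketch


def prioritize(items):
    """Order by funnel stage first, then by highest expected impact."""
    return sorted(items, key=lambda item: (item.stage, -item.expected_impact))


backlog = [
    BacklogItem("Shorter demo form raises accepted demos", Stage.ACTION,
                "up", "low", "growth", "accepted_demos", "qualification_rate", 4),
    BacklogItem("Role-specific hero line improves scroll depth", Stage.ORIENTATION,
                "up", "low", "product_marketing", "scroll_depth", "bounce_rate", 3),
    BacklogItem("Outcome-led headline lowers bounce", Stage.ORIENTATION,
                "down", "medium", "product_marketing", "bounce_rate", "trial_starts", 5),
]

ordered = prioritize(backlog)
```

Sorting on a tuple keeps the ordering rule explicit and easy to change, for example if a team prefers to rank by impact across all stages.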

Metrics Tree for Pipeline-Quality Optimization

Conversion programs fail when teams track too few or too many disconnected metrics. A metrics tree solves this by linking page behavior to pipeline outcomes.

The top metric can be qualified trial volume or accepted demo volume by source. Supporting metrics include stage progression rates, activation quality, and early retention or sales progression indicators. Diagnostic metrics include section interactions, form friction, and device-specific behavior.

Use thresholds to trigger action. If a supporting metric shifts beyond its expected range, assign test owners immediately. This creates faster response without random redesign.
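Threshold-triggered action can be sketched as a simple range check. In this Python example the metric names, ranges, and owners are hypothetical placeholders; real thresholds would come from a team's own baseline data.

```python
from dataclasses import dataclass


@dataclass
class MetricThreshold:
    name: str
    low: float
    high: float
    owner: str  # who picks up the follow-up test


def out_of_range(thresholds, observed):
    """Return (metric, value, owner) for each metric outside its range.

    Metrics with no observed value are skipped rather than flagged.
    """
    alerts = []
    for threshold in thresholds:
        value = observed.get(threshold.name)
        if value is None:
            continue
        if not (threshold.low <= value <= threshold.high):
            alerts.append((threshold.name, value, threshold.owner))
    return alerts


thresholds = [
    MetricThreshold("demo_qualification_rate", 0.40, 0.70, "sales"),
    MetricThreshold("trial_activation_rate", 0.25, 0.60, "product"),
]
observed = {"demo_qualification_rate": 0.33, "trial_activation_rate": 0.31}

alerts = out_of_range(thresholds, observed)
```

Here only the demo qualification rate falls outside its range, so only the sales owner is flagged. The activation metric stays within bounds and triggers nothing.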

Attribution Hygiene for Page Decisions

Attribution confusion can distort page optimization decisions. Teams may credit page changes for outcomes primarily driven by campaign targeting or seasonal demand.

Use controlled cohorts and consistent windows when evaluating experiments. Compare like-for-like source segments and avoid mixing major channel changes with template tests in the same period.
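A short worked example shows why like-for-like comparison matters. In the Python sketch below, all visitor and conversion counts are hypothetical. The blended conversion rate rises between two periods purely because the traffic mix shifts toward organic, while the rate within each source does not change.

```python
# Hypothetical counts per traffic source: (visitors, conversions).
BEFORE = {"paid": (1000, 20), "organic": (1000, 60)}
AFTER = {"paid": (500, 10), "organic": (1500, 90)}


def blended_rate(cohort):
    """Conversion rate across all sources combined."""
    visitors = sum(v for v, _ in cohort.values())
    conversions = sum(c for _, c in cohort.values())
    return conversions / visitors


def rate_by_source(cohort):
    """Conversion rate for each source on its own."""
    return {source: c / v for source, (v, c) in cohort.items()}


def like_for_like_lift(before, after):
    """Per-source change in rate, for sources present in both periods."""
    before_rates = rate_by_source(before)
    after_rates = rate_by_source(after)
    return {
        source: after_rates[source] - before_rates[source]
        for source in before_rates
        if source in after_rates
    }


blended_change = blended_rate(AFTER) - blended_rate(BEFORE)
per_source_change = like_for_like_lift(BEFORE, AFTER)
```

The blended rate moves from 4% to 5%, yet every per-source change is zero. A team reading only the blended number could credit a template change for what is really a channel mix shift.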

Document assumptions and uncertainty explicitly. Clear attribution hygiene improves confidence in rollout decisions and protects team credibility.

Sales and Success Feedback Loop

Sales and customer success teams hear objections that analytics tools cannot capture fully. Their feedback should feed directly into page updates.

Create a weekly loop where top recurring objections are mapped to existing page sections. If an objection appears repeatedly, it belongs earlier in the decision path.

This loop improves conversion quality because it aligns pre-conversion messaging with real post-click conversations. It also reduces avoidable misalignment between marketing promises and sales delivery.

Support and Onboarding Signals as Pre-Conversion Inputs

Support logs and onboarding friction reveal expectation gaps. If users repeatedly ask questions that should have been clarified before signup, the page system is missing critical clarity.

Translate recurring support patterns into page updates. Clarify setup expectations, role responsibilities, and timeline assumptions where needed.

This process improves both conversion and retention by reducing mismatch between perceived and actual onboarding effort.

Governance Model for Cross-Functional GTM Teams

As launch frequency increases, governance becomes a conversion lever. Without clear ownership, pages drift and quality becomes inconsistent.

A practical model assigns specific lanes. Product marketing owns narrative and fit logic. Growth owns test design and measurement. Product owns workflow accuracy. Sales owns qualification feedback. Operations or QA owns final release validation.

Shared templates in Unicorn Platform make this model practical because teams can collaborate on specific blocks without breaking overall architecture.

Template Governance in Unicorn Platform

Template governance should define required sections, optional modules, and non-negotiable trust content. This ensures each new page starts from a quality baseline.

Variation should be intentional and documented. Teams can adapt hero framing, proof order, and CTA emphasis while keeping core decision sequence stable.

When templates are governed well, launch speed increases without sacrificing consistency. That is where no-code execution becomes a strategic advantage rather than a quality risk.
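Template rules like these can also be expressed as an automated pre-launch check. The following Python sketch is not a Unicorn Platform feature or API. It is a standalone illustration that assumes each page can be described as an ordered list of section identifiers, and the identifiers and trust module names are hypothetical.

```python
# Core decision sequence from the article; the identifiers are illustrative.
CORE_SEQUENCE = ["fit", "proof", "implementation", "action"]
# Hypothetical non-negotiable trust modules for this sketch.
REQUIRED_TRUST_MODULES = {"security_summary", "support_model"}


def validate_page(sections):
    """Return governance problems for an ordered list of section ids."""
    problems = []
    for name in CORE_SEQUENCE:
        if name not in sections:
            problems.append(f"missing core section: {name}")
    # Compare the order in which core sections appear with the expected order.
    present = [s for s in sections if s in CORE_SEQUENCE]
    expected = [s for s in CORE_SEQUENCE if s in sections]
    if present != expected:
        problems.append("core sections are out of sequence")
    for module in sorted(REQUIRED_TRUST_MODULES - set(sections)):
        problems.append(f"missing trust module: {module}")
    return problems


good_page = ["fit", "security_summary", "proof",
             "implementation", "support_model", "action"]
drifted_page = ["fit", "action", "proof", "security_summary"]

good_report = validate_page(good_page)
drifted_report = validate_page(drifted_page)
```

Optional modules can sit anywhere between the core sections, so the check leaves room for intentional variation while still catching drift in the core sequence.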

For CRM-heavy evaluation paths, this Salesforce landing page guide can support template decisions around trust and qualification flow.

Scenario: Mid-Market B2B SaaS Recovery

A mid-market SaaS company had rising paid traffic but flat sales-accepted demos. Analysis showed three problems: broad hero messaging, delayed trust modules, and noisy demo forms.

The team introduced a structured recovery plan in Unicorn Platform. They built one canonical template, launched role-focused variants, simplified form fields, and inserted trust cues before primary action zones.

They also implemented weekly sales-feedback mapping and a one-variable test cadence. Within two months, demo qualification improved and time-to-first-value for trial cohorts shortened.

The biggest gain came from operational consistency. Once template governance and ownership were clear, improvements became predictable.

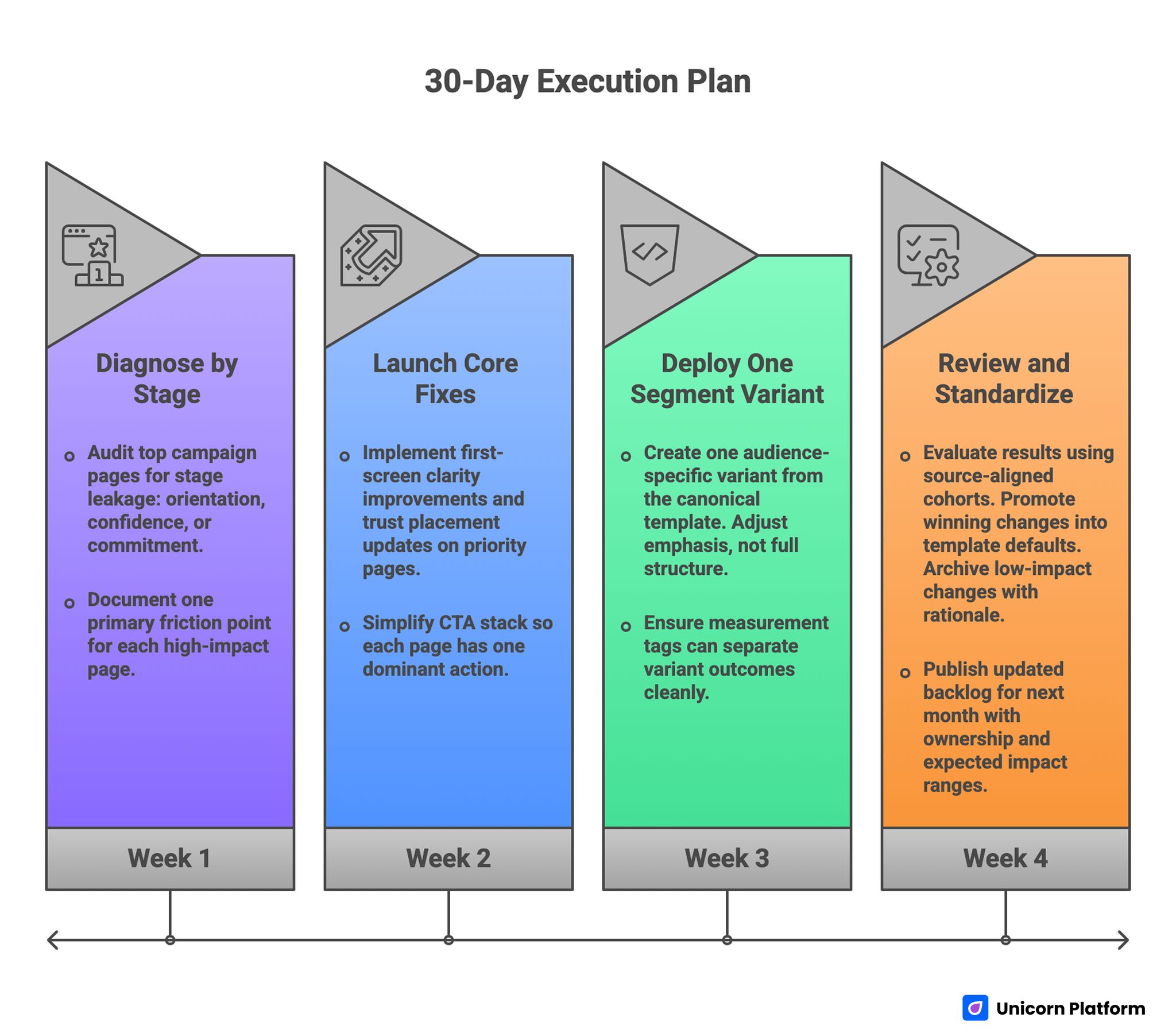

30-Day Execution Plan

30-day SaaS Landing Page Conversion Optimization Execution Plan

Week 1: Diagnose

Audit top campaign pages for stage leakage: orientation, confidence, or commitment. Document one primary friction point for each high-impact page.

Define one canonical template in Unicorn Platform with clear section roles. Lock non-negotiable trust modules and action hierarchy.

Week 2: Launch Core Fixes

Implement first-screen clarity improvements and trust placement updates on priority pages. Simplify the CTA stack so each page has one dominant action.

Run real-device mobile QA and verify performance readiness on conversion-critical templates.

Week 3: Deploy One Segment Variant

Create one audience-specific variant from the canonical template. Adjust emphasis, not full structure. Ensure measurement tags can separate variant outcomes cleanly.

Launch one controlled test with one primary metric and one quality guardrail.

Week 4: Review and Standardize

Evaluate results using source-aligned cohorts. Promote winning changes into template defaults. Archive low-impact changes with rationale.

Publish an updated backlog for next month with ownership and expected impact ranges.

90-Day Scale Plan

Month 2: Expand Controlled Variants

Add source-specific and role-specific variants while preserving template integrity. Continue one-variable test cadence and maintain weekly feedback loops with sales and support.

Focus on improvements that increase qualified conversion, not just front-end click metrics.

Month 3: Institutionalize Systems

Formalize governance artifacts: template rules, metrics tree thresholds, decision log standards, and escalation protocols. Train contributors on ownership lanes and release criteria.

At this stage, the goal is compounding reliability. Teams should be able to launch faster while improving qualification quality consistently.

Common Failure Modes and Fixes

1) One Page for Every Audience

Problem: message dilution and weak relevance. Fix: stable architecture with role-specific emphasis variants.

2) Trust Content Too Late

Problem: users hesitate before seeing confidence cues. Fix: move proof and trust modules near early decision points.

3) CTA Overload

Problem: visitors see too many equal-priority actions. Fix: one dominant action per page with controlled secondary paths.

4) Form Bloat

Problem: qualification forms collect low-value fields. Fix: keep essential routing fields only and defer extras.

5) Navigation Complexity

Problem: pathing reflects internal structure, not buyer questions. Fix: simplify top-level options to decision-critical routes.

6) Multi-Variable Testing Noise

Problem: attribution becomes unreliable. Fix: one meaningful variable per cycle, fixed metric pair.

7) Missing Ownership

Problem: inconsistent updates and quality drift. Fix: explicit section ownership plus final QA authority.

8) Metric Tunnel Vision

Problem: top-line conversion rises while quality drops. Fix: pair primary conversion with qualification guardrails.

9) Mobile Neglect

Problem: early consideration leakage on small screens. Fix: enforce mobile launch gates and real-device testing.

10) No Feedback Integration

Problem: recurring objections remain unresolved on-page. Fix: weekly sales/support signal mapping into page updates.

ICP and Jobs-to-Be-Done Workshop Framework

Many SaaS teams skip deep ICP definition because they feel they already know their customer. In practice, "we sell to product teams" or "we serve operations leaders" is too broad to guide page decisions. A landing page improves only when it is built around concrete buyer jobs, constraints, and switching triggers.

A practical workshop starts with three questions for each segment: what job is urgent right now, what current workaround is failing, and what risk makes change difficult. These answers define the emotional and operational context your page must address. If the page does not reflect these realities, visitors see information but do not feel understood.

Document jobs in action language, not abstract categories. Instead of "needs collaboration," specify "needs to reduce rework caused by late handoffs between product and delivery teams." This creates direct inputs for headline framing, workflow demonstrations, and objection handling sections.

Then define decision thresholds by segment. One segment may need proof of rapid setup within existing tools. Another may need governance confidence for cross-functional use. A third may need clear cost-to-value expectations before involving procurement. These thresholds should map to page sections in sequence.

Use this workshop output to create message maps that power variants. Each map should include primary pain, desired outcome, key objection, required proof, and preferred next action. When every page block ties back to this map, conversion quality becomes more predictable and easier to improve over time.
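A message map can be captured as a small data structure so that page blocks are checked against it. In this Python sketch the five map fields follow the list above. The `segment` label, the block names, and most of the sample values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class MessageMap:
    segment: str
    primary_pain: str
    desired_outcome: str
    key_objection: str
    required_proof: str
    preferred_next_action: str


def unmapped_blocks(page_blocks, message_map):
    """Return page blocks that do not tie back to a message-map field.

    page_blocks maps a block name to the map field that block supports.
    """
    valid = {f.name for f in fields(message_map)} - {"segment"}
    return [
        block for block, field_name in page_blocks.items()
        if field_name not in valid
    ]


product_ops = MessageMap(
    segment="product operations",
    primary_pain="rework caused by late handoffs between product and delivery teams",
    desired_outcome="one live status view for every project owner",
    key_objection="migration risk",
    required_proof="setup timeline from a comparable team",
    preferred_next_action="start trial",
)

page_blocks = {
    "hero": "primary_pain",
    "workflow_demo": "desired_outcome",
    "faq": "key_objection",
    "awards_strip": "brand_story",  # not a message-map field
}

orphans = unmapped_blocks(page_blocks, product_ops)
```

Any block returned by `unmapped_blocks` is a candidate for removal or rework, since it supports nothing the segment's map says the page must address.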

Page Archetypes by SaaS Category

Not all SaaS categories should use the same page emphasis. The conversion structure can stay consistent, but priority blocks differ by category economics and buyer behavior. Teams that ignore this tend to copy templates that worked elsewhere but fail in their own motion.

Horizontal productivity tools usually win with immediacy and fast relevance. Buyers compare multiple options quickly, so first-screen clarity and quick workflow understanding matter most. These pages should emphasize rapid adoption and low switching friction before expanding into deeper governance detail.

Vertical SaaS often needs credibility within domain-specific workflows. Buyers care about category fit, compliance expectations, and whether the product reflects real operational nuances. Pages for these products should include scenario-driven proof and domain language that signals genuine understanding.

Infrastructure and developer-adjacent products usually require stronger trust and implementation confidence. Buyers need clarity on reliability, integration depth, and migration risk. These pages should elevate architecture fit and operational continuity earlier than generic feature narratives.

AI-assisted SaaS categories add another layer: trust in output quality and control. Buyers want productivity gains, but they also need assurance about reviewability, governance, and failure boundaries. Pages should balance speed promises with practical oversight mechanisms so expectations remain credible.

The lesson is simple: choose an archetype intentionally, then adapt emphasis. Avoid mixing all archetypes in one page. Mixed logic creates confusing decision paths and weakens the argument for every segment.

Offer and Packaging Experimentation for SaaS Pages

Many SaaS teams test copy heavily but under-test offer structure. Yet offer design often has greater impact on qualified conversion because it shapes perceived risk and commitment. A page can have excellent narrative and still underperform if the entry offer does not match buyer readiness.

Start by defining the commitment model for each segment. New users may prefer low-friction exploration with a clear activation path. Mature buyers may prefer guided discovery tied to implementation planning. Offer modules should match these realities rather than forcing one route for everyone.

Useful offer experiments include trial length framing, onboarding-assist visibility, usage-limit communication, and value milestone cues. Each test should clarify what the user gets immediately and what changes after initial adoption. Ambiguity in these transitions often causes drop-off or low-quality signups.

Packaging communication should make decision boundaries explicit. If tiers are too vague, buyers delay and seek external comparison. If tiers are too dense, cognitive load increases and momentum drops. A balanced approach provides quick-fit guidance with optional depth for evaluators who need detail.

Do not evaluate offer tests only on form submissions. Track activation quality, sales acceptance, and early retention indicators to avoid adopting offers that attract low-fit volume. Margin-aware and quality-aware testing creates stronger long-term economics than surface conversion lifts.

Procurement and Security Narrative for Mid-Market and Enterprise

Enterprise-facing SaaS pages often lose momentum when they treat procurement and security as late-stage topics only. In reality, many evaluators screen for these concerns before taking any action. If confidence is absent early, high-intent visitors may never convert to pipeline.

A practical approach is progressive trust disclosure. Early sections should signal security and governance maturity without overwhelming the page. Mid sections can expand with relevant details about controls, access management, and reliability practices. Deeper technical documentation can remain available for specialist review.

Procurement clarity should also be visible. Buyers need to know whether commercial processes are predictable, whether contracts are standardized, and how legal review is typically handled. This does not require full legal detail on-page, but it does require confidence that the path is managed.

For enterprise demo routes, include expectation framing around who should attend and what the first session covers. This reduces low-fit demo requests and increases sales productivity. The page should communicate that the conversation is practical, scoped, and aligned to real adoption decisions.

When security and procurement language is integrated naturally into conversion flow, enterprise visitors progress with less friction. The goal is not to overload every visitor with technical detail. The goal is to make trust discoverable at the moment each segment needs it.

Competitive Positioning Blocks That Avoid Commodity Comparison

SaaS categories become commoditized quickly when pages rely on feature checklists alone. Buyers compare rows of similar claims and default to price or incumbency. Strong positioning blocks shift comparison from "who has more features" to "who delivers better operational outcome for this specific job."

A useful positioning pattern starts with context, not confrontation. Define the common workflow problem and its business cost. Then explain the decision principle your product embodies, such as implementation speed with governance, or flexibility without configuration debt. This reframes evaluation around value logic.

Comparison content should be selective and honest. Overly aggressive competitor framing can reduce credibility. Instead, emphasize where your approach is structurally different and why that difference matters in daily operations. Buyers trust specificity more than broad superiority claims.

Include "best-fit / not-fit" guidance where appropriate. This may seem counterintuitive, but it improves conversion quality by helping unsuitable visitors self-select out early. High-quality pipeline often improves when teams stop optimizing for indiscriminate volume.

Positioning blocks are strongest when reinforced by proof. Pair each strategic claim with evidence tied to implementation behavior, time-to-value, or operational reliability. This closes the gap between narrative and trust.

Internationalization and Regional Conversion Design

Global SaaS programs often localize language but forget to localize decision logic. Regional buyers can vary in risk tolerance, procurement expectations, and adoption pathways. A literal translation of one page rarely captures these differences.

Start with regional discovery for decision drivers, not only vocabulary. In some markets, social proof from local peers may matter most. In others, implementation support and documentation depth may dominate. Regional page variants should adapt emphasis to these realities while preserving core structure.

Pricing communication requires particular care in international contexts. Currency display, tax inclusion norms, billing-cycle expectations, and contract terms can all influence trust and conversion readiness. Clarify these early enough to avoid late-stage surprises.

Legal and privacy framing should also align with regional norms where relevant. Buyers should not need to search multiple pages to understand baseline compliance posture. Trust is stronger when confidence cues are available in localized context without overloading the primary journey.

Operationally, manage regional variants with bounded flexibility. Keep shared template standards for structure and trust modules, but allow local teams to adjust examples, proof order, and CTA language. This supports relevance without fragmenting governance.
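One way to make bounded flexibility concrete is to encode which template fields are locked and which are locally adjustable. The sketch below is illustrative only: the field names and the `build_regional_variant` helper are assumptions, and only the split between locked structure and adjustable emphasis comes from the paragraph above.

```python
# Hypothetical field names; only the locked/adjustable split comes from the text.
LOCKED_FIELDS = {"section_order", "trust_modules"}
ADJUSTABLE_FIELDS = {"examples", "proof_order", "cta_language"}


def build_regional_variant(base_template: dict, overrides: dict) -> dict:
    """Return a copy of the base template with only permitted overrides applied."""
    locked = sorted(set(overrides) & LOCKED_FIELDS)
    if locked:
        raise ValueError(f"Locked fields cannot be overridden: {locked}")
    unknown = sorted(set(overrides) - ADJUSTABLE_FIELDS)
    if unknown:
        raise ValueError(f"Unknown fields: {unknown}")
    return {**base_template, **overrides}


base = {
    "section_order": ["hero", "proof", "pricing", "cta"],
    "trust_modules": ["security_summary", "support_sla"],
    "examples": ["generic workflow example"],
    "proof_order": ["logos", "case_study"],
    "cta_language": "Start free trial",
}

# A local team reorders proof and localizes the CTA; structure stays shared.
de_variant = build_regional_variant(
    base,
    {"proof_order": ["case_study", "logos"], "cta_language": "Kostenlos testen"},
)
```

Rejecting locked overrides outright, rather than silently ignoring them, keeps governance visible: a local team learns immediately that a structural change needs a template-level decision.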

Lifecycle Journey Design: From First Click to Expansion

High-performing SaaS pages are not isolated assets. They are entry points into a broader lifecycle system. If landing experience and onboarding experience are disconnected, conversion gains can evaporate in activation and retention stages.

Map page messaging directly to first-week product experience. If the page promises rapid setup, onboarding must reinforce that promise with clear first-success steps. If the page emphasizes governance confidence, onboarding should surface controls and accountability early. Consistency across these stages reduces churn risk.

For sales-assisted motions, ensure pre-demo expectations align with demo structure and follow-up cadence. When buyers feel continuity across touchpoints, trust deepens and pipeline velocity improves. Discontinuity creates skepticism even when product quality is high.

Expansion pathways should be considered at the page-planning stage. The users who convert today may become champions for additional teams later. Pages that attract good-fit initial users with realistic expectations often produce stronger expansion potential than pages optimized only for top-of-funnel volume.

Lifecycle-aware design shifts optimization from short-term conversion spikes to durable revenue quality. That is the core advantage of system-level thinking in SaaS conversion programs.

Documentation and Operating Cadence for Scale

Optimization maturity is largely a documentation problem. Teams with weak records repeat failed tests, lose context during staffing changes, and debate decisions already proven months earlier. Teams with clear documentation compound improvements faster.

Maintain three core artifacts: a template standard doc, an experiment register, and an objection library. The template standard doc defines mandatory sections and ownership rules. The experiment register captures the hypothesis, change, metric pair, and decision for each test. The objection library tracks recurring concerns from sales and support, each mapped to the page content that answers it.
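As a rough illustration, an experiment register entry can be a small structured record rather than free-form notes. The sketch below assumes the "metric pair" means one primary metric plus one quality guardrail; the class name, field names, and sample entries are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExperimentRecord:
    hypothesis: str
    change: str
    primary_metric: str      # assumed half of the "metric pair"
    guardrail_metric: str    # assumed other half: the quality guardrail
    decision: Optional[str] = None  # None while the test is still running


def find_prior_tests(register: List[ExperimentRecord], keyword: str) -> List[ExperimentRecord]:
    """Return records whose hypothesis or change mentions the keyword."""
    needle = keyword.lower()
    return [
        r for r in register
        if needle in r.hypothesis.lower() or needle in r.change.lower()
    ]


register = [
    ExperimentRecord(
        hypothesis="Moving trust proof above the demo form raises qualified demos",
        change="Reordered proof block ahead of demo CTA",
        primary_metric="qualified_demo_rate",
        guardrail_metric="demo_acceptance_rate",
        decision="ship",
    ),
    ExperimentRecord(
        hypothesis="Shorter hero headline improves trial starts",
        change="Cut hero headline to eight words",
        primary_metric="trial_start_rate",
        guardrail_metric="activation_rate",
    ),
]

# Check for earlier tests on the same idea before proposing a new one.
earlier = find_prior_tests(register, "proof")
```

A lookup like `find_prior_tests` is what stops a team from repeating a test whose outcome is already recorded.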

Run a fixed operating cadence. Weekly: prioritize one test and one maintenance update. Monthly: review template integrity and proof freshness. Quarterly: reassess segmentation model, guardrail thresholds, and strategic risks. This cadence prevents both stagnation and chaotic over-change.

Escalation rules are equally important. Define when a decline in quality metrics triggers immediate rollback, and who has authority to execute it. Fast rollback protects pipeline quality during aggressive iteration phases.

Teams that treat documentation as a core conversion tool usually ship faster with fewer reversals. They do not rely on memory or individual heroics. They rely on systems that preserve learning and enforce quality at scale.

Content Freshness and Asset Decay Control

SaaS pages lose performance over time even when strategy remains sound. Proof becomes outdated, screenshots drift from the current product UI, and pricing or packaging references no longer match live offers. Buyers notice these mismatches quickly and read them as negative trust signals.

Build a freshness schedule with clear update frequencies by asset type. High-visibility proof snippets, hero screenshots, and pricing references should be reviewed monthly. Workflow diagrams, integration lists, and FAQ responses should be reviewed quarterly or after major release cycles. Security and compliance references should be reviewed whenever policy or certification status changes.

Use a simple decay score to prioritize updates. If an asset is old, low-specificity, or misaligned with current narrative, it gets higher urgency. Teams can then batch-refresh high-impact blocks instead of running full page rewrites.
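The article does not define a formula for the decay score, so the following is a minimal sketch under stated assumptions: age is measured against the review interval for the asset type (30 days for monthly assets, 90 for quarterly), capped at 2.0, and one point is added each for low specificity and narrative misalignment. The weights, asset type names, and sample dates are all illustrative.

```python
from datetime import date

# Review intervals from the freshness schedule: monthly vs. quarterly assets.
REVIEW_INTERVAL_DAYS = {
    "proof_snippet": 30,
    "hero_screenshot": 30,
    "pricing_reference": 30,
    "workflow_diagram": 90,
    "integration_list": 90,
    "faq_response": 90,
}


def decay_score(asset_type, last_reviewed, today, is_specific, matches_narrative):
    """Higher score means higher refresh urgency (weights are assumptions)."""
    interval = REVIEW_INTERVAL_DAYS[asset_type]
    age_ratio = (today - last_reviewed).days / interval
    score = min(age_ratio, 2.0)  # cap so age alone cannot dominate
    if not is_specific:
        score += 1.0
    if not matches_narrative:
        score += 1.0
    return score


today = date(2026, 3, 1)
# (asset_type, last_reviewed, is_specific, matches_narrative)
assets = [
    ("hero_screenshot", date(2026, 1, 1), True, True),
    ("faq_response", date(2026, 1, 15), False, True),
    ("proof_snippet", date(2026, 2, 20), True, False),
]

# Sort so the most urgent refresh candidates come first.
ranked = sorted(
    assets,
    key=lambda a: decay_score(a[0], a[1], today, a[2], a[3]),
    reverse=True,
)
```

Ranking by a score like this supports batch refreshes: the top few entries form the next maintenance batch, and the rest wait for the following cycle.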

Freshness work should tie back to conversion metrics. If a page section with outdated proof has weak progression rates, prioritize that update first. This keeps maintenance connected to business outcomes and avoids cosmetic-only refresh cycles.

The same logic applies to internal links and supporting paths. As your content ecosystem grows, ensure linked resources still align with page-stage decisions and user intent. Freshness is not just visual. It includes relevance and continuity across the whole journey.

Partner and Co-Marketing Landing Pathways

SaaS companies increasingly rely on partner channels, marketplace exposure, and co-marketing campaigns for pipeline growth. These visitors arrive with different expectations than direct paid-search or branded traffic, so using a generic destination page often reduces conversion quality.

Partner-origin visitors typically need fast trust continuity. They want to see why the partnership exists, what workflow problem the combined solution addresses, and what next step is appropriate for their role. If that continuity is missing, they treat the page as unrelated marketing and disengage.

A practical co-marketing page model includes four elements. First, context framing that connects the partner source to the buyer's job. Second, a shared-value explanation that clarifies when the combined approach is better than standalone options. Third, implementation confidence content that shows a practical rollout path. Fourth, an action pathway aligned to the partnership goal, such as a guided demo or an integration-first trial.

In Unicorn Platform, these partner pathways can reuse your core template system while swapping partner-specific narrative and proof blocks. This preserves governance and measurement quality while maintaining channel relevance.

Partner pathways also need a clean handoff model. Whether the visitor should route to direct sales, partner-assisted onboarding, or self-serve activation, that path must be explicit. Ambiguous ownership at the handoff stage creates avoidable drop-off and internal friction.

Track partner variants separately in your metrics tree. A channel can show strong click volume but weak qualified progression if narrative continuity is poor. Isolated tracking helps teams improve partner conversion quality without overcorrecting primary site templates.
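A small sketch of isolated tracking, assuming each visit is logged with a channel label and a flag for whether it reached a qualified stage. The record shape, channel names, and sample data are hypothetical.

```python
from collections import defaultdict


def progression_by_channel(visits):
    """Compute the qualified progression rate separately for each channel."""
    totals = defaultdict(lambda: {"visits": 0, "qualified": 0})
    for visit in visits:
        bucket = totals[visit["channel"]]
        bucket["visits"] += 1
        if visit["qualified"]:
            bucket["qualified"] += 1
    return {ch: t["qualified"] / t["visits"] for ch, t in totals.items()}


visits = [
    {"channel": "partner_marketplace", "qualified": False},
    {"channel": "partner_marketplace", "qualified": False},
    {"channel": "partner_marketplace", "qualified": True},
    {"channel": "partner_marketplace", "qualified": False},
    {"channel": "direct_paid_search", "qualified": True},
    {"channel": "direct_paid_search", "qualified": False},
]

rates = progression_by_channel(visits)
```

In this made-up sample the partner channel sends more visits but progresses at half the rate of direct traffic, the pattern the paragraph above warns about. A blended rate across both channels would hide it.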

Conversion Impact Forecasting for Leadership Planning

Mature optimization programs need a forecasting layer so leadership can plan investment and staffing with confidence. Without forecasts, conversion work is often treated as tactical and under-resourced despite strong ROI potential.

A useful forecast model starts with baseline qualified-conversion rates by segment and source. Then estimate plausible lift ranges for top backlog items based on historical tests and implementation complexity. This creates scenario planning rather than one-point promises.

Build three forecast bands: conservative, expected, and upside. Conservative assumes modest lift and slower adoption. Expected assumes average test success with normal rollout speed. Upside assumes higher lift on priority pages with strong execution consistency. Bands help decision-makers understand risk rather than overcommitting to optimistic projections.

Tie forecast assumptions to operational capacity. If a plan expects six major tests per month but team bandwidth supports only three, the forecast should adjust. Overstated execution assumptions are one of the biggest causes of planning errors in CRO programs.
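The banding and capacity adjustment can be sketched as a short calculation. This is a deliberately simple model, not a recommended one: it assumes lift scales linearly with the share of planned tests the team can actually run, and every number below is invented for illustration.

```python
def forecast_bands(baseline_rate, monthly_visitors, lift_by_band,
                   planned_tests, capacity_tests):
    """Project monthly qualified conversions per band, scaled by real capacity."""
    # If the plan assumes more tests than the team can run, scale lift down.
    capacity_factor = min(1.0, capacity_tests / planned_tests)
    baseline_conversions = monthly_visitors * baseline_rate
    return {
        band: baseline_conversions * (1 + lift * capacity_factor)
        for band, lift in lift_by_band.items()
    }


# Assumed relative lifts per band (illustrative values only).
lift_by_band = {"conservative": 0.02, "expected": 0.05, "upside": 0.10}

bands = forecast_bands(
    baseline_rate=0.02,      # 2% qualified conversion today
    monthly_visitors=10_000,
    lift_by_band=lift_by_band,
    planned_tests=6,         # what the plan expects per month
    capacity_tests=3,        # what team bandwidth supports
)
```

With a plan of six tests and capacity for three, each band's lift is halved before it reaches the forecast, which makes the cost of an overstated execution assumption explicit.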

Leadership reporting should include both output and quality metrics. Report not only lift estimates but also guardrail behavior, such as demo acceptance, activation quality, and early retention trends. This prevents growth planning from being disconnected from revenue quality.

Use forecasts to prioritize backlog tiers. If two tests have similar potential lift but one has lower implementation risk and clearer attribution, schedule that one first. Forecasting is most valuable when it improves sequencing decisions, not just executive slides.

Executive Dashboard Design for SaaS CRO Programs

Most executive dashboards are overloaded with indicators and still fail to guide action. A better dashboard shows only the metrics required to answer three questions: where leakage is growing, what experiments are in flight, and which decisions are pending.

A strong executive view includes stage-level trend cards, current top risks, and experiment pipeline status with confidence levels. Each card should include one sentence of decision context so leaders understand whether action is required now or later.

Separate metric movement from decision status. A metric can move while no decision is required if it remains within expected range. Conversely, small movement can require action when guardrails are breached. Dashboards should make this distinction explicit.
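The distinction can be expressed as a small rule. The sketch below assumes a higher-is-better metric with an expected range and a guardrail floor; the function name, status labels, and thresholds are illustrative.

```python
def decision_status(value, expected_range, guardrail_floor):
    """Map a metric reading to a decision status (higher-is-better metric)."""
    low, high = expected_range
    if value < guardrail_floor:
        return "action required"      # guardrail breached, even if the move is small
    if low <= value <= high:
        return "no decision needed"   # movement within the expected range
    return "review"                   # outside the range, but guardrail intact


# The metric moved but stayed inside its expected range.
within_range = decision_status(0.048, (0.045, 0.055), guardrail_floor=0.040)

# A small dip that nonetheless crosses the guardrail floor.
breach = decision_status(0.039, (0.035, 0.055), guardrail_floor=0.040)
```

Checking the guardrail first is the design choice that matters: a reading can sit inside a wide expected range and still demand action.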

Include a rollout health section. Many programs focus on test outcomes but underreport rollout consistency across templates and regions. Showing rollout progress alongside performance prevents "winning tests" from stalling in implementation.

Finally, connect dashboard cycles to governance cadence. Weekly dashboards support operating decisions. Monthly dashboards support resource and prioritization decisions. Quarterly dashboards support strategic alignment and risk posture updates. This layered cadence keeps leadership engaged without causing reactive micro-management.

Rollback Protocols for High-Velocity Testing

Fast experimentation is useful only when teams can reverse harmful changes quickly. Without clear rollback rules, a weak variant can stay live too long and damage pipeline quality before anyone takes ownership of the reversal.

Define rollback triggers before each launch. Typical triggers include a sharp decline in qualified demo rate, an unusual rise in low-fit trial volume, an abrupt increase in form abandonment, or guardrail breaches in early retention and support-contact signals. Predefined triggers reduce debate during high-pressure cycles.

Assign one rollback owner per release. This person should have authority to revert, communicate impact, and log the incident in the experiment register. Shared responsibility without clear decision authority often delays corrective action.

Use phased rollout whenever traffic volume is high. Start with partial exposure, confirm signal direction, then expand. If early signals violate thresholds, revert immediately and analyze root cause before relaunching an adjusted variant.
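Predefined triggers and phased exposure can be combined in a small check that runs at each rollout stage. The sketch below is one possible shape, not a prescribed process: the metric names, thresholds, and exposure stages are assumptions, and in practice the revert decision still sits with the named rollback owner.

```python
from dataclasses import dataclass


@dataclass
class Trigger:
    metric: str
    threshold: float
    direction: str  # "below" fires when value < threshold, "above" when value > threshold


def breached(triggers, observed):
    """Return the names of metrics whose rollback trigger has fired."""
    hits = []
    for t in triggers:
        value = observed.get(t.metric)
        if value is None:
            continue  # no reading yet for this metric
        if t.direction == "below" and value < t.threshold:
            hits.append(t.metric)
        elif t.direction == "above" and value > t.threshold:
            hits.append(t.metric)
    return hits


def next_exposure(current, stages, breaches):
    """Revert to 0.0 on any breach; otherwise advance to the next stage."""
    if breaches:
        return 0.0
    later = [s for s in stages if s > current]
    return later[0] if later else current


# Triggers are fixed before launch (illustrative thresholds).
triggers = [
    Trigger("qualified_demo_rate", 0.04, "below"),
    Trigger("form_abandonment_rate", 0.60, "above"),
]
stages = [0.10, 0.25, 0.50, 1.0]

observed = {"qualified_demo_rate": 0.03, "form_abandonment_rate": 0.55}
hits = breached(triggers, observed)
exposure = next_exposure(0.10, stages, hits)
```

Because the thresholds are written down before launch, the revert at the first stage is a mechanical outcome rather than a debate.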

A good rollback process is not a sign of failure. It is a sign of operational maturity. Teams that treat reversibility as part of normal execution move faster over time because they can test aggressively without risking prolonged quality regressions.

Pre-Launch QA Checklist

Before launch, confirm offer-message consistency, pricing clarity, trust recency, and CTA hierarchy across all visible states. Verify that confidence cues appear before major commitment requests.

Run full mobile checks on real devices for first-screen readability, action visibility, navigation clarity, and form usability. Validate performance metrics on conversion-critical templates.

Verify tracking integrity for stage-level analysis and experiment attribution. Ensure variant identifiers and source segmentation are accurate.

Confirm ownership sign-off from product marketing, growth, product, sales, and QA. Releases should not bypass this sequence.

FAQ: SaaS Landing Page Conversion System in 2026

1) Should SaaS teams use one universal landing page?

Usually no. Use one core architecture with intentional variants by role and source.

2) What is the fastest high-impact improvement?

Improve first-screen value clarity and move trust cues earlier in the flow.

3) Trial or demo as primary CTA?

Choose based on GTM motion and segment intent. Do not optimize for both equally on the same page.

4) How many tests should run at once?

Fewer is better for signal quality. One major variable per page cycle is typically optimal.

5) Which metric matters most?

Use a qualified conversion metric tied to downstream outcomes, not clicks alone.

6) How often should templates be revised?

When evidence supports a change, not on fixed creative cycles. Promote repeat winners into standards.

7) Are logos enough for trust?

No. Add implementation clarity, support expectations, and practical proof context.

8) How should navigation be optimized?

Prioritize buyer decision routes and reduce non-essential top-level complexity.

9) What breaks conversion quality over time?

Ownership drift, stale proof, and inconsistent policy or CTA logic across variants.

10) Can no-code workflows handle enterprise SaaS campaigns?

Yes, if supported by strict governance, QA gates, and disciplined testing.

11) How does mobile affect enterprise SaaS conversion?

Mobile often drives early consideration, so poor mobile clarity can suppress later desktop conversions.

12) What creates compounding gains?

Stage-based diagnosis, clean experiments, cross-team ownership, and consistent feedback loops.

Final Takeaway

SaaS landing performance improves when pages are managed as systems rather than isolated designs. The highest returns come from structured decision flow, trust-aware sequencing, clean experimentation, and governance that scales.

Unicorn Platform supports this model by combining speed with reusable structure. Keep templates stable, vary emphasis intentionally, and tie every release to quality outcomes so conversion gains compound over time.

Sustained gains come from disciplined iteration, transparent ownership, and conversion decisions grounded in buyer reality rather than internal assumptions.