Table of Contents

- Why Low-Budget Page Programs Underperform

- Form Design: Volume vs Quality Tradeoff

- 30-Day Implementation Plan

- Common Failure Modes and Practical Fixes

- FAQ

Low-budget page production is no longer a niche tactic. It is now part of mainstream growth operations for startups, agencies, solo creators, and in-house teams that need faster campaign iteration without large production overhead.

The challenge is not publishing speed. Most teams can now ship pages quickly. The challenge is keeping conversion quality high when launch cycles accelerate and multiple channels demand variants at the same time.

This is where many teams lose performance. They treat no-cost page tools as a design shortcut rather than as part of a structured conversion system. As a result, they publish more pages, but learn less from each release.

A high-performing no-cost workflow should do four things consistently: preserve message clarity, reduce buyer uncertainty, route users into the right next step, and generate interpretable data for the next optimization cycle.

Unicorn Platform can support this model effectively because teams can reuse proven sections, deploy variants quickly, and maintain one stable architecture while testing controlled message changes. Speed becomes a strategic advantage only when paired with process discipline.

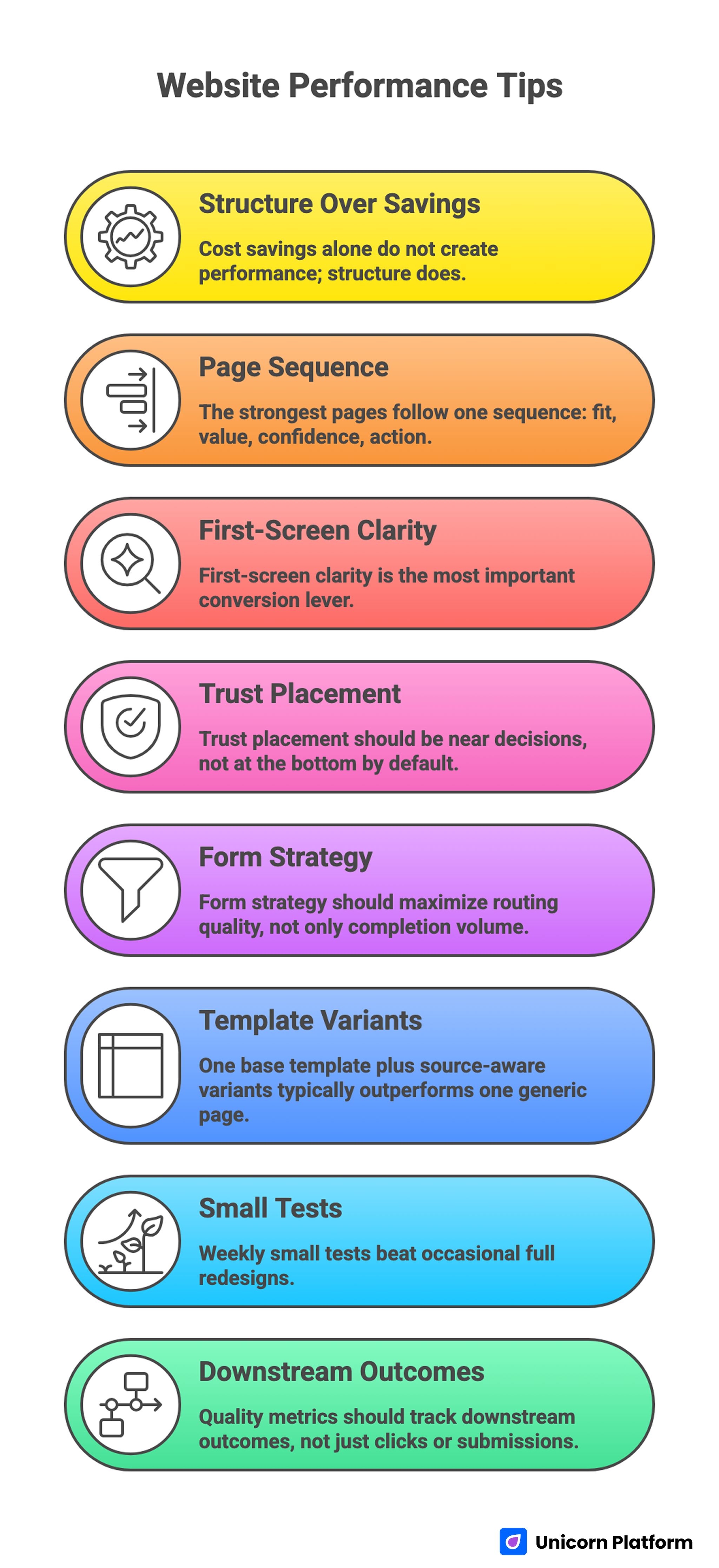

Quick Takeaways

- Cost savings alone do not create performance; structure does.

- The strongest pages follow one sequence: relevance, mechanism, proof, action.

- First-screen clarity is the most important conversion lever.

- Trust placement should be near decisions, not at the bottom by default.

- Form strategy should maximize routing quality, not only completion volume.

- One base template plus source-aware variants typically outperforms one generic page.

- Weekly small tests beat occasional full redesigns.

- Quality metrics should track downstream outcomes, not just clicks or submissions.

Why Low-Budget Page Programs Underperform

Teams that run no-cost page programs often face the same three failures. First, they publish without a stable narrative structure, so every campaign starts from zero and performance changes become hard to interpret.

Second, they over-index on visual novelty. A page may look polished, but if the opening promise is vague and trust cues are delayed, conversion confidence remains low.

Third, they skip operational rigor because the page was "quick" to launch. Mobile interaction problems, weak form logic, and inconsistent confirmation flows are treated as minor details until they materially affect pipeline quality.

Underperformance is usually not caused by tools. It is caused by absent decision frameworks and weak release governance.

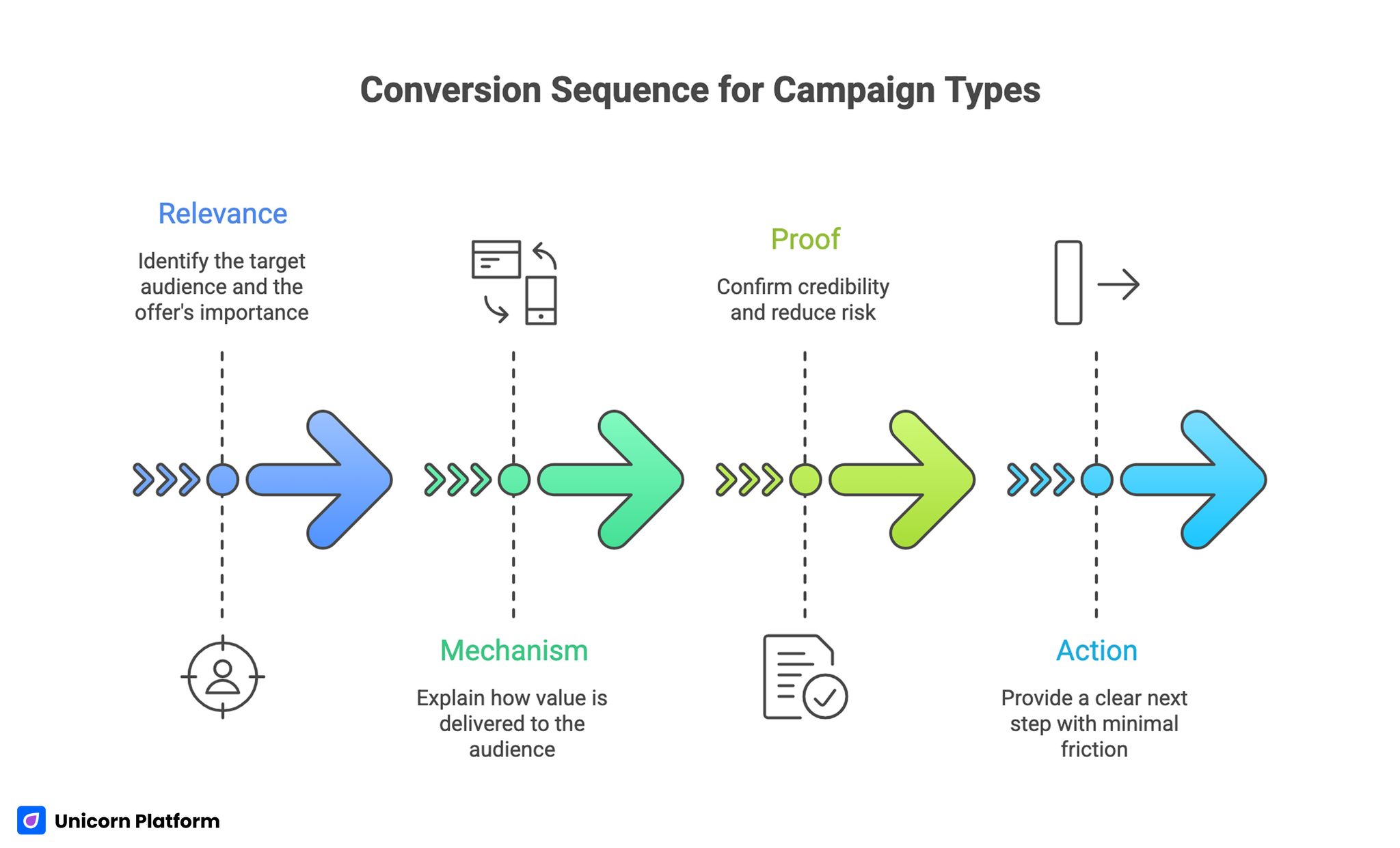

The Conversion Sequence That Works Across Campaign Types

No-cost page workflows improve significantly when teams apply a consistent decision sequence. The sequence is simple but powerful: relevance, mechanism, proof, and action.

Relevance tells visitors who the offer is for and why it matters now. Mechanism explains how value is delivered. Proof confirms credibility and reduces risk. Action provides a clear next step with minimal friction.

This sequence should be preserved regardless of page type, traffic source, or campaign urgency. Teams can adapt depth and emphasis, but the order should remain stable for cleaner measurement and faster iteration.

For teams refining foundational page architecture, this high-converting landing page structure guide is a useful baseline for section sequencing.

First-Screen Clarity: The Highest-Leverage Section

The first screen must answer three questions quickly. Who is this for? What specific outcome is offered? What should the visitor do next?

When these answers are unclear, the rest of the page loses effectiveness because visitors do not invest enough attention to reach deeper sections. No amount of lower-page polish compensates for a weak opening.

Strong first-screen copy is outcome-led and precise. Weak first-screen copy uses broad language such as "transform your growth" without showing practical direction.

A reliable opener includes one role or context signal, one concrete value statement, one credibility hint, and one primary CTA.

First-Screen QA Prompt

Before launch, ask:

- Is the target user obvious without scrolling?

- Is the value concrete and measurable?

- Is the CTA tied to that value?

- Is there at least one reason to trust the claim?

If one answer is weak, revise the first screen before touching other sections.

Audience Fit and Positioning Discipline

Many no-cost pages fail because they try to speak to everyone. Broad language may increase superficial appeal but usually reduces qualified conversion.

Fit should be explicit. Name the audience context, operating environment, and problem stage. This helps the right users self-select and gives others a clear signal that a different route may be better.

Positioning should be specific enough to filter noise, but not so narrow that it blocks adjacent high-fit users. The balance comes from outcome language tied to real use cases rather than demographic assumptions.

For early-stage teams defining audience and offer clarity, this startup landing page creation guide can help structure positioning decisions.

Mechanism Clarity: Show How the Outcome Happens

Visitors convert when they understand not only what they get, but how they get it. Mechanism clarity reduces perceived risk and improves trust in the promise.

A strong mechanism section explains process in plain language, highlights expected effort, and defines what is required from the user. This can be done with a short step flow, one visual, or a compact before/after explanation.

Mechanism depth should match traffic intent. Search users often need clear process framing. Email users may need less explanation and stronger urgency.

Mechanism uncertainty is one of the most common reasons for mid-page abandonment, especially in categories with technical or AI-driven offers.

Trust Architecture for Fast-Published Pages

No-cost pages can trigger skepticism when they look generic or over-optimized. Trust must be designed intentionally to counter that perception.

Effective trust modules include context-rich proof, transparent process notes, and clear delivery expectations. Generic praise without scenario context is usually weak.

Proof should be placed near the claim it supports. If evidence sits far below the statement, users may interpret the claim as unverified and continue with caution.

A practical trust pattern is one proof element per major claim cluster. This keeps pages compact while maintaining confidence.

Copywriting Standards for High-Quality Low-Cost Pages

Fast publishing does not require low-quality writing. It requires tighter writing standards. Each section should communicate one idea, one decision, and one next step.

Use concrete nouns, specific outcomes, and constrained promises. Avoid inflated adjectives and category clichés that sound impressive but fail to guide action.

Good copy on low-budget pages is usually shorter, sharper, and easier to validate. It favors operational clarity over persuasive theatrics.

Copy Editing Rules

- Replace broad claims with measurable outcomes.

- Keep paragraph intent singular.

- Use active verbs tied to next-step actions.

- Remove lines that do not support a conversion decision.

These rules improve both readability and conversion confidence.

CTA Strategy by Readiness Stage

Not all traffic should be pushed to the same action. CTA intensity must align with readiness level.

Cold audiences often respond better to low-friction actions. Warm audiences can handle deeper commitment requests. High-intent audiences may be ready for direct conversion routes.

The critical rule is hierarchy: one dominant CTA per variant, one optional secondary path, and no competing equal-priority actions.

This reduces cognitive load and clarifies intent signals for downstream teams.

Form Design: Volume vs Quality Tradeoff

Form strategy should be driven by routing value, not by habit. Every required field should influence what happens after submission.

When fields do not affect prioritization or personalization, they add friction without value. This hurts conversion and creates unnecessary effort for mobile users.

A strong first-step form often includes name, email, and one high-signal qualifier. Deeper context can be collected later through confirmation steps or follow-up forms.

Field Audit Checklist

- Does this field change routing decisions?

- Can this signal be captured later?

- Is mobile completion easy?

- Does the user understand why it is asked?

If answers are weak, defer the field.
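
The checklist above can be expressed as a simple decision rule. The following is a minimal sketch, assuming a hypothetical `FormField` record with one flag per checklist question. The field names and the keep/defer rule are illustrative and do not come from any form builder's API.

```python
from dataclasses import dataclass


@dataclass
class FormField:
    """Hypothetical audit record: one flag per checklist question."""
    name: str
    changes_routing: bool    # Does this field change routing decisions?
    capturable_later: bool   # Can this signal be captured later?
    easy_on_mobile: bool     # Is mobile completion easy?
    purpose_is_clear: bool   # Does the user understand why it is asked?


def audit_field(field: FormField) -> str:
    """Return 'keep' only when every answer is strong; otherwise 'defer'."""
    strong = (
        field.changes_routing
        and not field.capturable_later
        and field.easy_on_mobile
        and field.purpose_is_clear
    )
    return "keep" if strong else "defer"


# Example answers are assumptions; contact fields are treated as routing-critical.
fields = [
    FormField("email", True, False, True, True),
    FormField("company_size", True, False, True, True),
    FormField("phone", False, True, False, False),
]
first_step = [f.name for f in fields if audit_field(f) == "keep"]
deferred = [f.name for f in fields if audit_field(f) == "defer"]
```

Deferred fields are not discarded. They move to a confirmation step or follow-up form, as described above.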

Mobile-First Quality Standards

A large share of no-cost page traffic arrives via mobile. If mobile experience is weak, campaign economics degrade quickly.

Mobile quality should include readable first-screen hierarchy, visible CTA placement, comfortable tap targets, clean keyboard behavior, and fast load experience on weaker networks.

Desktop approvals are insufficient. Real-device QA is essential before traffic scale.

Teams should also track mobile quality by source because friction patterns differ across paid social, search, and email.

Speed Without Chaos: Operational Workflow

Fast execution needs process guardrails. A practical workflow for Unicorn Platform teams looks like this:

- Select one audience and one objective.

- Clone a proven section structure.

- Draft specific first-screen copy.

- Add mechanism and trust modules.

- Configure one primary CTA path.

- Run mobile and form QA.

- Launch with one explicit hypothesis.

This workflow keeps launch speed high while preserving test integrity.

AI-Assisted Drafting: Where It Helps and Where It Hurts

AI can accelerate idea generation, variant drafting, and section rewrites. It is useful for speed, but it cannot verify that the resulting claims are true.

Teams should use AI for option expansion, then apply human review for factual accuracy, proof relevance, and claim precision.

Unreviewed AI copy tends to overuse generic phrases and weak claims. This can reduce trust even if the page looks polished.

For teams integrating automation into rapid production cycles, this AI landing page workflow guide can help define safe drafting boundaries.

AI Usage Policy for Page Teams

- AI drafts options; humans approve final copy.

- AI suggests variants; humans define the test hypothesis.

- AI proposes structure; humans validate fit and proof.

- AI output never bypasses QA gates.

This policy preserves quality while retaining speed benefits.

Source-Aware Variants for Better Relevance

One generic page for every channel is rarely optimal. Search users, social users, and email users arrive with different context and trust levels.

The best approach is one base template plus source-aware messaging adjustments. Keep structure stable and adapt headline emphasis, mechanism depth, and CTA framing by source intent.

This model improves relevance without sacrificing operational control. It also produces cleaner analytics because structural variables remain consistent.
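
One way to keep structure stable while messaging varies is to store a base template and allow overrides only on messaging keys. This is a rough sketch under stated assumptions: the key names, copy strings, and override values are hypothetical placeholders, and the code does not reflect how Unicorn Platform or any other builder stores pages.

```python
# Base template: the section order is the structural constant.
BASE_TEMPLATE = {
    "sections": ["hero", "mechanism", "proof", "cta"],
    "headline": "Placeholder headline",
    "mechanism_depth": "standard",
    "cta_label": "Placeholder CTA",
}

# Only messaging keys may change per traffic source (assumed rule).
ADJUSTABLE_KEYS = {"headline", "mechanism_depth", "cta_label"}

# Hypothetical per-source adjustments.
SOURCE_OVERRIDES = {
    "search": {"mechanism_depth": "detailed"},
    "email": {"mechanism_depth": "brief", "cta_label": "Placeholder email CTA"},
}


def build_variant(source: str) -> dict:
    """Merge source overrides into the base, rejecting structural changes."""
    overrides = SOURCE_OVERRIDES.get(source, {})
    illegal = set(overrides) - ADJUSTABLE_KEYS
    if illegal:
        raise ValueError(f"structural keys cannot vary by source: {sorted(illegal)}")
    return {**BASE_TEMPLATE, **overrides, "source": source}
```

Because every variant shares the same `sections` list, differences in results can be attributed to messaging rather than to layout.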

Channel-Specific Design Patterns

Paid search variants should lead with immediate problem-solution clarity. Social variants should establish emotional relevance quickly with concise proof. Email variants should preserve message continuity from the email promise. Retargeting variants should focus on objection handling and risk reduction.

These patterns are not rigid rules, but practical defaults that reduce guesswork and speed up execution.

Variant Documentation Standards

Each variant should include:

- target channel

- hypothesis

- primary metric

- guardrail metric

- expected behavior change

This documentation improves decision quality and prevents repeated low-value tests.

Measurement Framework for Real Business Value

Top-line conversion rate alone can mislead teams. Better measurement uses layered metrics tied to downstream outcomes.

Layer one tracks page interactions such as clicks, starts, and submissions. Layer two tracks quality signals such as qualification fit and follow-up engagement. Layer three tracks commercial outcomes such as opportunity creation or purchase progression.

Each campaign needs one primary metric and one guardrail metric. Guardrails catch hidden quality declines while primary metrics drive focused optimization.

Practical Metric Pairs

- lead capture campaign: primary is qualified submissions, guardrail is low-fit rate

- product trial campaign: primary is trial starts, guardrail is activation completion

- content offer campaign: primary is engaged downloads, guardrail is early churn signal

Using these pairs makes optimization decisions more robust.
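
As an illustration of the lead capture pair, the sketch below computes the primary and guardrail values from a list of submissions. The `qualified` and `low_fit` flags are assumed to be set by whatever qualification process the team already uses; they are hypothetical field names.

```python
def lead_capture_metrics(submissions: list[dict]) -> dict:
    """Compute the primary metric and its guardrail for a lead capture page."""
    total = len(submissions)
    qualified = sum(1 for s in submissions if s["qualified"])
    low_fit = sum(1 for s in submissions if s["low_fit"])
    return {
        "primary_qualified_submissions": qualified,
        # Guard against division by zero when no submissions exist yet.
        "guardrail_low_fit_rate": low_fit / total if total else 0.0,
    }


sample = [
    {"qualified": True, "low_fit": False},
    {"qualified": True, "low_fit": False},
    {"qualified": False, "low_fit": True},
    {"qualified": False, "low_fit": False},
]
metrics = lead_capture_metrics(sample)
```

Reporting both numbers side by side makes it harder for a rise in submissions to hide a rise in low-fit leads.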

Weekly Optimization Cadence

A weekly cadence creates momentum without noise. Sporadic redesigns usually produce confusion and weak learning.

A practical cycle: Monday diagnostics, Tuesday hypothesis selection, Wednesday implementation, Thursday QA verification, Friday rollout decision.

One major variable per cycle is enough for strong insight. Faster performance growth comes from consistency, not from changing everything at once.

Monthly Freshness and Proof Maintenance

Even good pages decay over time when proof gets stale and context shifts. Freshness routines keep trust intact.

Run a monthly review on active pages. Validate proof recency, screenshot accuracy, offer relevance, and CTA alignment with current campaigns.

Quarterly, run template-level reviews to promote winners and retire weak modules. This prevents library bloat and keeps future launches efficient.

For teams using behavioral insights to prioritize updates, this user behavior optimization guide can help identify high-impact adjustments.

Governance Model for Fast Teams

Speed creates risk when ownership is unclear. A lightweight role model prevents drift.

Define four owners: messaging owner, proof owner, performance owner, and QA owner. Each owner has explicit release responsibilities.

Final publication should require QA sign-off and metric validation, even for quick experiments. This reduces silent regressions and protects learning quality.

Release Gate Checklist

- message clarity check

- proof relevance check

- form and CTA path check

- mobile interaction check

- analytics event check

If one gate fails, launch should pause until fixed.
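
The gate rule can be sketched as a small function. The gate identifiers below are hypothetical names for the five checks listed above, and a gate with no recorded result is treated as failed.

```python
RELEASE_GATES = [
    "message_clarity",
    "proof_relevance",
    "form_and_cta_path",
    "mobile_interaction",
    "analytics_events",
]


def release_decision(results: dict[str, bool]) -> tuple[str, list[str]]:
    """Return ('launch' or 'pause', failed gates); missing results count as failures."""
    failed = [gate for gate in RELEASE_GATES if not results.get(gate, False)]
    return ("launch" if not failed else "pause", failed)
```

Returning the list of failed gates alongside the decision tells the owner exactly what to fix before the next attempt.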

Scenario: Startup Team With Limited Budget

A startup launched several low-cost pages quickly and achieved strong click-through rates but weak qualified conversion. The pages looked clean but used broad promises and long first-step forms.

An audit identified three key issues: unclear audience fit, delayed proof placement, and form overload on mobile.

The team rebuilt in Unicorn Platform with one stable template, clearer first-screen positioning, compact proof near major claims, and staged qualification.

Over two months, qualified conversion improved while total traffic remained similar. Sales reported fewer low-fit conversations and faster progression for accepted leads.

Scenario: Agency Running Multi-Channel Campaigns

An agency managed multiple clients with tight timelines and used one generic page pattern across channels. Performance was inconsistent and hard to diagnose.

They adopted a source-aware variant model with strict documentation and weekly single-variable testing. Structure stayed stable while message emphasis changed by channel intent.

Within one quarter, campaign predictability improved. The agency reduced rework because winning modules were added to a shared template library.

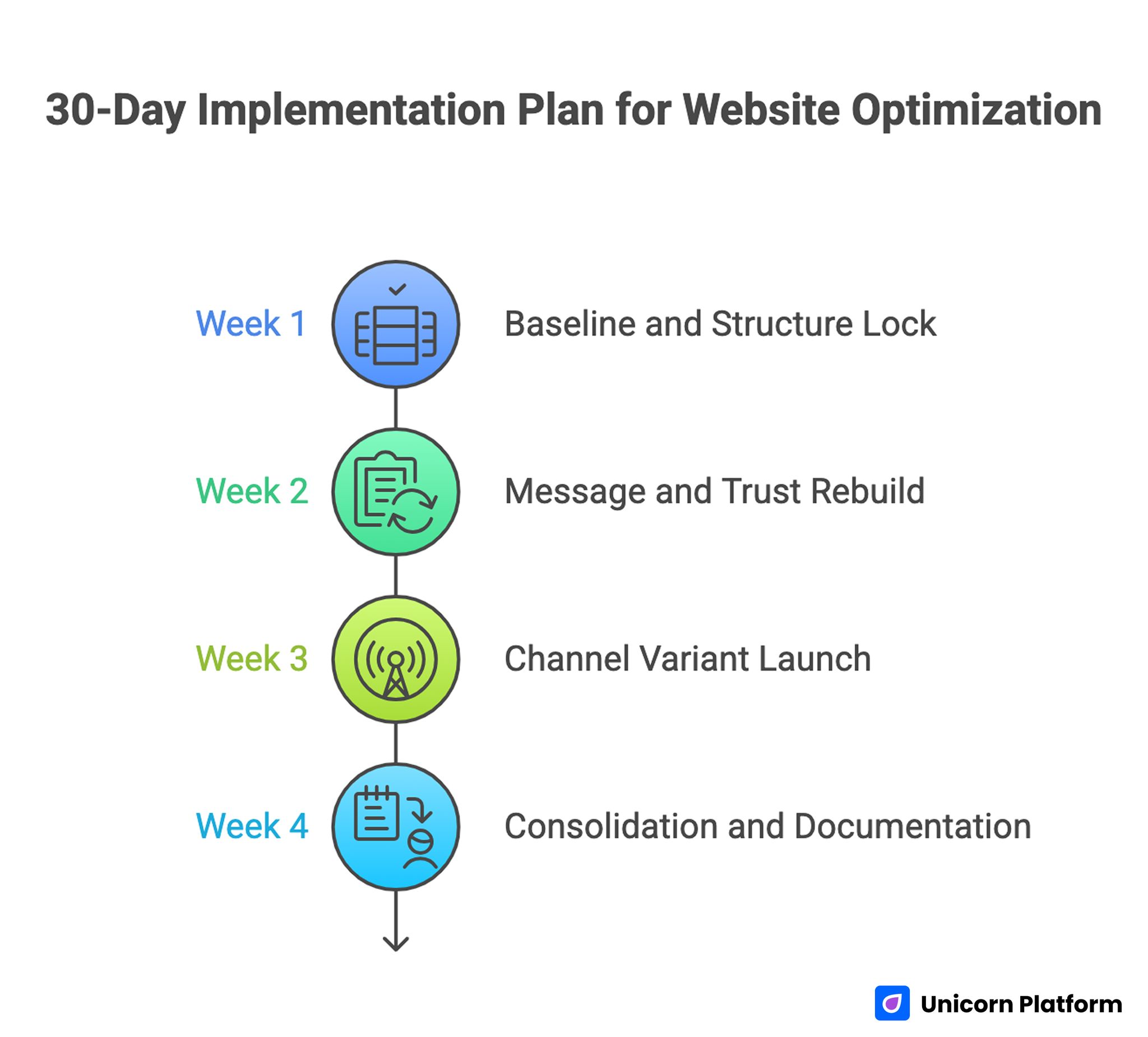

30-Day Implementation Plan

Week 1: Baseline and Structure Lock

Audit the current page against the relevance, mechanism, proof, and action sequence. Identify the highest-leak section using behavior and quality metrics.

Define one primary metric and one guardrail metric for the next cycle. Lock one base template and remove conflicting CTA paths.

Week 2: Message and Trust Rebuild

Rewrite first-screen copy with explicit audience fit and concrete value. Move trust cues near major decision points.

Simplify form fields to routing essentials and validate mobile readability.

Week 3: Channel Variant Launch

Publish one variant for the highest-volume channel with controlled message adjustments. Keep the structure unchanged for clean attribution.

Run one test with predefined success and rollback thresholds.

Week 4: Consolidation and Documentation

Promote winning blocks into the template library. Archive weak sections and document lessons in a decision log.

Assign a monthly freshness owner and schedule the next optimization cycle.

90-Day Scale Plan

Month 2: Expand by Intent Segment

Build controlled variants for cold, warm, and high-intent traffic while preserving template structure.

Develop reusable modules for hero, proof, mechanism, and CTA by readiness stage.

Month 3: Operationalize Reliability

Formalize release gates, ownership rules, and rollback triggers tied to guardrail decline.

Require decision logs for major changes so learning compounds across campaigns.

At this stage, scale should come from repeatable system quality, not design churn.

Economics of Low-Cost Page Programs

Cost reduction is usually the reason teams adopt low-budget page workflows, but cost reduction alone is not a growth strategy. The relevant question is not how little a page costs to publish. The relevant question is how much qualified value the page produces relative to effort, speed, and risk.

A cheap page can still be expensive if it sends unqualified traffic into sales workflows or if it generates misleading conversion signals that drive poor optimization choices. In contrast, a low-cost page with strong routing clarity can create high return by improving both volume and quality at the same time.

Teams should evaluate page economics using three lenses. The first is production efficiency: how quickly a page can move from brief to launch with acceptable quality. The second is conversion efficiency: how reliably the page converts intended users into the next meaningful step. The third is downstream efficiency: how well converted users progress into sales, activation, or revenue outcomes.

When these three lenses are reviewed together, teams make better decisions about where to simplify, where to invest, and where to standardize. They also avoid the common trap of over-optimizing top-of-funnel signals while degrading commercial outcomes.

Practical Cost-to-Value Signals

- Build time from brief approval to publish readiness

- Number of rework cycles required after launch

- Qualified conversion rate by source segment

- Sales acceptance or activation quality after conversion

- Support overhead caused by unclear messaging

Tracking these signals monthly helps teams understand whether speed improvements are actually increasing business efficiency.

Tool Selection Framework for No-Cost Workflows

Choosing a no-cost builder or low-cost stack should not be based only on surface features. Teams need to evaluate how well the tool supports structure consistency, collaboration, testing, and quality controls.

A practical selection framework starts with five questions. Can the tool support reusable section modules? Can it publish variants quickly without duplicating entire projects? Can it integrate with measurement and CRM workflows? Can it support role-based collaboration? Can it enforce enough constraints to reduce accidental quality drift?

Tools that score well on visual flexibility but poorly on workflow discipline often create hidden operational costs. Teams may spend less on software and more on manual fixes, duplicated QA, and recovery from inconsistent launches.

Migration decisions should also be based on transition cost. If moving to a new tool breaks template continuity, experiment history, or tracking consistency, short-term gains may be canceled by lost learning.

Selection Checklist

- Reusable section support for fast standardization

- Variant management without full page duplication

- Clear integration path for analytics and handoff systems

- Collaboration features that align with team roles

- Stable publishing and rollback capabilities

- QA-friendly editing environment

- Low friction for iterative updates under deadline pressure

The best tool is the one that preserves decision quality while reducing cycle time.

Template Library Operations

A template library is one of the highest-leverage assets in low-cost page programs. Without a library, teams recreate structure every cycle and repeat avoidable mistakes. With a library, teams launch faster and test more cleanly because page architecture is already validated.

Template libraries should be organized by objective and readiness stage, not by visual theme. For example, lead capture templates, trial activation templates, and purchase templates should each have clear conversion logic and defined section priorities.

Each template should include a baseline hero format, mechanism section options, proof module options, CTA rules, and form patterns. Teams can then adapt copy and emphasis while preserving structural integrity.

Library governance matters as much as library size. Adding too many unvalidated blocks creates noise and slows decisions. A good rule is to promote only modules that have demonstrated repeatable performance in at least two distinct campaigns.
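
The promotion rule above can be sketched as a filter over test results. The `(module, campaign, won)` tuple shape is an assumption for illustration, and what counts as a "win" is left to the team's own metric definitions.

```python
def promotable_modules(
    results: list[tuple[str, str, bool]], min_campaigns: int = 2
) -> list[str]:
    """Return modules that won in at least `min_campaigns` distinct campaigns."""
    wins: dict[str, set[str]] = {}
    for module, campaign, won in results:
        if won:
            # A set ensures repeated wins in one campaign count only once.
            wins.setdefault(module, set()).add(campaign)
    return sorted(m for m, campaigns in wins.items() if len(campaigns) >= min_campaigns)
```

Counting distinct campaigns rather than total wins prevents a module from being promoted on the strength of a single favorable context.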

Library Maintenance Cadence

- Weekly: add notes on tested blocks and observed performance

- Monthly: promote validated blocks and archive weak ones

- Quarterly: review library structure and remove outdated patterns

This cadence keeps the library practical and prevents slow drift into clutter.

Integration Architecture and Handoff Reliability

Landing page performance is tightly connected to what happens after conversion. If routing, enrichment, and follow-up are inconsistent, even high-quality page conversions can lose value quickly.

Integration architecture should define how submission data moves from the page into downstream systems. This includes CRM mapping, lead scoring inputs, response owner assignment, and notification timing.

A common failure pattern is partial integration where only contact data is passed, while intent context is lost. This forces manual triage and delays first response. Strong systems preserve context from the page so responders understand user expectations immediately.

Teams should also standardize fallback behavior. If an integration fails or delays, users should still receive confirmation and internal teams should still receive alerting. Operational resilience protects trust and prevents silent data loss.

Handoff Architecture Essentials

- Explicit field mapping from page to CRM

- Priority tier logic based on qualifier signals

- Assignment rules by region, segment, or product line

- SLA timers for first response accountability

- Alerting for integration failures and routing exceptions

When these components are clear, conversion value is preserved beyond the first form event.
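
Three of these components (field mapping, tier logic, and assignment rules) can be sketched together. Everything named below is hypothetical: the CRM field names, the tier thresholds, the region codes, and the owner queues are placeholders, not the schema of any real CRM. SLA timers and alert delivery are out of scope for this sketch.

```python
# Hypothetical mapping from page form fields to CRM field names.
FIELD_MAP = {
    "name": "FullName",
    "email": "Email",
    "team_size": "TeamSize",
    "region": "Region",
    "intent": "StatedIntent",  # context that partial integrations often drop
}

# Hypothetical assignment rules by region, with a fallback queue.
OWNER_BY_REGION = {"emea": "emea-team", "amer": "amer-team"}
DEFAULT_OWNER = "triage-queue"


def priority_tier(team_size: int) -> str:
    """Illustrative tiering from one qualifier signal; thresholds are assumptions."""
    if team_size >= 50:
        return "A"
    if team_size >= 10:
        return "B"
    return "C"


def to_crm_record(submission: dict) -> dict:
    """Map a submission to a CRM record, failing loudly if context is missing."""
    missing = [field for field in FIELD_MAP if field not in submission]
    if missing:
        # Raise so alerting can fire instead of losing data silently.
        raise ValueError(f"missing fields: {missing}")
    record = {crm_name: submission[field] for field, crm_name in FIELD_MAP.items()}
    record["PriorityTier"] = priority_tier(int(submission["team_size"]))
    record["Owner"] = OWNER_BY_REGION.get(submission["region"], DEFAULT_OWNER)
    return record
```

Keeping the mapping in one explicit table makes it easy to see when a page field, such as stated intent, has no destination downstream.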

Advanced Quality Assurance Beyond Visual Checks

Most teams run visual checks and basic form tests, but high-performing programs go further. Advanced QA includes scenario-based behavior validation, accessibility confidence checks, and performance readiness across real network conditions.

Scenario-based QA means testing user paths end to end: first visit, form completion, confirmation, follow-up interaction, and next-step continuity. This catches breaks that isolated page checks often miss.

Accessibility QA is not only a compliance issue. It also improves usability for all users, especially on mobile and in other high-friction contexts. Clear labels, keyboard compatibility, and readable contrast support conversion reliability.

Performance QA should evaluate time to meaningful value, not only full load metrics. Users should understand the offer and see a clear action path before secondary assets finish loading.

Advanced QA Sequence

- First-screen clarity validation on multiple devices

- Form and CTA interaction checks under real keyboard behavior

- Confirmation and follow-up continuity checks

- Accessibility pass for labels, contrast, and focus order

- Performance check under slower network conditions

- Tracking verification for primary and guardrail events

This sequence can be executed quickly when embedded into release templates.

Accessibility and Inclusion as Conversion Strategy

Inclusive design improves conversion by reducing unnecessary effort for more users. Teams often treat accessibility as a legal checkbox, but it is also a practical growth driver.

Pages that are easier to read, easier to navigate, and easier to complete on different devices usually convert better across broad audience sets. The gains may appear small per interaction, but they compound across campaigns.

Inclusive conversion design includes plain-language copy, clear semantic heading structure, descriptive form labels, and predictable interaction patterns. It also includes avoiding motion or visual effects that interfere with comprehension on mobile.

Accessibility improvements are especially valuable in low-cost workflows because they reduce support overhead and rework after launch.

Inclusion-Focused Design Checks

- Is critical information understandable without jargon?

- Can key actions be completed without precision tapping?

- Are form errors understandable and recoverable?

- Is the page usable with keyboard-only navigation?

- Are contrast and text sizing robust on bright mobile screens?

When these checks are integrated early, teams avoid expensive late fixes.

Decision Logging and Experimentation Maturity

Without clear decision logs, teams repeat tests, forget prior findings, and misattribute outcomes. This is common in fast publishing environments where multiple contributors make rapid changes.

A decision log should be short but complete. It should include hypothesis, change scope, expected behavior, primary metric, guardrail metric, launch date, and decision outcome. The goal is not bureaucracy. The goal is reusable learning.

Experimentation maturity improves when teams define what constitutes evidence. For example, a change should not be promoted to the main template unless it improves the primary metric without degrading guardrails over a minimum evaluation window.

This avoids the "lucky spike" problem where short-term fluctuations are mistaken for durable improvements.
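
The evidence rule can be written down so that it is applied the same way every time. This is a minimal sketch: the 14-day default window and the zero tolerance are assumptions, not recommendations from this guide, and the inputs are expected to come from the team's own reporting.

```python
def promotion_decision(
    primary_lift: float,
    guardrail_worsening: float,
    days_observed: int,
    min_days: int = 14,
    guardrail_tolerance: float = 0.0,
) -> str:
    """Apply the evidence rule to one test.

    primary_lift: change in the primary metric (positive means better).
    guardrail_worsening: how much the guardrail got worse (positive means worse).
    """
    if days_observed < min_days:
        # Too early: short-term spikes are not treated as evidence.
        return "keep testing"
    if primary_lift > 0 and guardrail_worsening <= guardrail_tolerance:
        return "promote"
    return "do not promote"
```

Checking the window first means an early spike can never produce a "promote" result, regardless of its size.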

Decision Log Template

- Test ID and objective

- Audience and channel context

- Specific page element changed

- Primary metric and guardrail metric

- Observed result and confidence notes

- Rollout, rollback, or iterate decision

Maintaining this template across campaigns compounds institutional knowledge.
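
For teams that keep the log in code or a structured store, the template could be represented roughly as follows. The class and field names are hypothetical and simply mirror the bullets above.

```python
from dataclasses import asdict, dataclass

ALLOWED_DECISIONS = {"rollout", "rollback", "iterate"}


@dataclass(frozen=True)
class DecisionLogEntry:
    """One log entry; fields mirror the decision log template."""
    test_id: str
    objective: str
    audience: str
    channel: str
    element_changed: str
    primary_metric: str
    guardrail_metric: str
    observed_result: str
    confidence_notes: str
    decision: str

    def __post_init__(self) -> None:
        # Reject entries with blank fields so the log stays complete.
        empty = [key for key, value in asdict(self).items() if not value.strip()]
        if empty:
            raise ValueError(f"empty fields: {empty}")
        if self.decision not in ALLOWED_DECISIONS:
            raise ValueError(f"decision must be one of {sorted(ALLOWED_DECISIONS)}")
```

Validating at creation time keeps incomplete entries out of the log, which is what makes later comparison across campaigns possible.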

Change Management for Multi-Owner Teams

As teams grow, more contributors touch page systems. Without change management rules, quality drifts quickly and accountability becomes unclear.

A practical model is role-based change classes. Messaging changes, structural changes, and integration changes should follow different approval paths. Structural and integration changes usually require stricter review than copy refinements.

Teams should also use release windows for high-traffic pages. Ad hoc edits during peak campaign periods increase risk and reduce debugging clarity when issues emerge.

Rollback plans should be prepared before major releases. Teams should know exactly which previous version to restore and who is responsible for the rollback decision if guardrails decline.

Change Management Rules

- Classify each change by impact level before implementation

- Require extra review for structural and integration updates

- Use planned release windows for high-volume traffic pages

- Define rollback triggers and owners before launch

- Log every high-impact change with expected outcomes

These rules preserve speed while protecting reliability.

Resource Planning and Team Capacity

Low-cost programs can fail when teams underestimate the operational load of frequent launches. Publishing quickly still requires review, QA, tracking validation, and follow-up alignment.

Capacity planning should account for both build work and maintenance work. Many teams staff for production and under-staff for iteration, which causes growing quality debt over time.

A practical planning model assigns weekly capacity slots: new builds, optimization updates, QA checks, and maintenance tasks. This prevents urgent launches from displacing essential quality work.

Teams should also track cycle time from brief to stable performance, not only brief to publish. This captures true operational efficiency.

Capacity Planning Questions

- How many high-impact launches can be supported per week?

- How much time is reserved for QA and diagnostics?

- What maintenance tasks are required for active pages?

- Which owners are currently overloaded by review responsibilities?

Answering these questions monthly helps prevent avoidable quality regression.

Risk Management for Fast Campaign Programs

Every fast campaign program needs explicit risk controls. Without them, small issues can compound into major performance losses before detection.

Risk controls should cover technical risk, messaging risk, and operational risk. Technical risk includes tracking failures and broken form routing. Messaging risk includes claim drift and expectation mismatch. Operational risk includes missed QA gates and unclear ownership.

A useful risk model combines early alerts and recovery playbooks. Early alerts detect anomalies quickly. Recovery playbooks define who acts, what is reverted, and how validation is repeated.

This model reduces panic-driven decision-making during performance dips and keeps recovery cycles short.

Essential Risk Controls

- event-level monitoring for conversion drop anomalies

- alerting on integration failures or routing delays

- scheduled checks on top revenue-impact pages

- documented recovery steps by failure type

- post-incident review template for learning capture

Risk controls are a performance multiplier, not just a safety net.
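
As one possible shape for the first control, the sketch below flags a conversion drop by comparing the current rate with a trailing baseline. The 30% relative-drop threshold is an arbitrary assumption, and a production monitor would also need to account for traffic volume and normal day-to-day variation.

```python
from statistics import mean


def conversion_drop_alert(
    baseline_rates: list[float],
    current_rate: float,
    max_relative_drop: float = 0.3,
) -> bool:
    """Return True when the current rate falls too far below the baseline mean."""
    baseline = mean(baseline_rates)
    if baseline == 0:
        # No meaningful baseline to compare against.
        return False
    relative_drop = (baseline - current_rate) / baseline
    return relative_drop > max_relative_drop
```

A check like this is only the alert half of the model; the recovery playbook still has to define who acts when it fires.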

Cross-Functional Alignment Between Marketing and Sales

Landing page quality has limited value if downstream teams define quality differently. Marketing may optimize for submissions while sales optimizes for readiness and close potential.

Alignment begins with shared definitions. Teams should agree on what counts as a qualified submission, what response SLA is expected, and what context must be captured before first outreach.

Regular alignment reviews help detect mismatches early. If sales feedback shows recurring confusion from converted users, page messaging should be adjusted quickly.

Cross-functional reviews are most effective when they are short, metric-driven, and tied to specific page decisions. This keeps collaboration focused and actionable.

Alignment Rituals

- Weekly 20-minute quality sync on top pages

- Shared review of qualification and response metrics

- Joint decision on next test priorities

- Feedback loop from sales conversations into page updates

When marketing and sales share quality ownership, conversion systems become more reliable.

Implementation Worksheet for the Next Launch Sprint

To keep execution practical, teams can run a short worksheet before every release. Start by writing one sentence for audience fit, one sentence for promised outcome, and one sentence for the exact next step. If any statement feels generic, revise before design polishing begins.

Next, map the top three expected objections and assign each objection to a section where it will be resolved. Add one proof cue beside each high-risk claim and define which metric will confirm improvement. This worksheet takes less than 20 minutes and usually prevents expensive iteration cycles caused by vague positioning or missing trust context.

Common Failure Modes and Practical Fixes

1) Generic Opening Promise

Visitors cannot tell whether the offer is relevant. Replace broad claims with role-specific outcome language.

2) Mechanism Ambiguity

Users do not understand how value is delivered. Add concise process clarity before asking for commitment.

3) Late Trust Signals

Credibility appears after decision friction rises. Move proof closer to key claims and actions.

4) Form Over-Collection

Too many first-step fields reduce completion and quality. Use staged qualification for deeper context.

5) CTA Overload

Multiple equal actions fragment intent. Keep one dominant route and one secondary fallback.

6) Mobile Neglect

Desktop approval hides phone-based friction. Require real-device QA before every major launch.

7) Channel Mismatch

One message serves all sources and underperforms. Use source-aware framing with stable structure.

8) Weak Confirmation Flow

Users convert but do not know next steps. Add immediate confirmation and clear follow-up expectations.

9) Volume-Only Reporting

Top-line conversions increase while quality declines. Use layered metrics and guardrails.

10) No Template Governance

Fast edits create inconsistency and rework. Assign owners and enforce release gates.

Pre-Launch QA Checklist

Confirm first-screen fit clarity, value specificity, and visible primary action. Verify trust cues appear before major commitment points.

Check form behavior, mobile interaction quality, and confirmation continuity on real devices.

Validate metric instrumentation for primary and guardrail measures. Require final QA approval before scaling spend.

FAQ: No-Cost Landing Page Strategy

Can no-cost page programs compete with expensive production workflows?

Yes, if they use disciplined structure, controlled testing, and strong QA. Tool cost is less important than decision quality and operational consistency.

What should teams optimize first?

Start with first-screen clarity and trust placement. These usually produce the fastest quality gains.

Should teams use one page for all channels?

Usually no. Keep one template, then adapt message emphasis by channel intent.

How short should first-step forms be?

Collect only routing-critical information. Additional context should be captured after intent is confirmed.

Is AI drafting safe for conversion pages?

It is safe when used for option generation with mandatory human review. Never publish unverified claims from automated drafts.

How often should pages be refreshed?

Run monthly freshness checks for active pages and quarterly template-level audits.

What is the biggest mobile conversion mistake?

Assuming responsive layout equals responsive conversion. Message clarity and interaction flow must also be validated.

How many variables should be tested at once?

One major variable per cycle usually gives the cleanest insights. Multi-variable tests often create attribution noise.

Which metric matters most for lead-focused pages?

Qualified conversion quality is usually the best primary metric, paired with a guardrail for low-fit submissions.

What creates compounding performance over time?

Stable template systems, clear ownership, disciplined QA, and consistent decision logging.

Final Takeaway

Low-cost page workflows become powerful when they are run as systems, not shortcuts. The highest-performing teams pair fast production with clear sequence design, trust-aware messaging, and rigorous quality gates.

Unicorn Platform can support this model effectively. Keep the structure stable, adapt message depth by intent, and optimize against downstream outcomes. That is how no-cost execution turns into durable conversion performance.