Table of Contents

- Section Blueprint for Repeatable No-Code Quality

- Builder Selection Scorecard for 2026 Teams

- Scenario Playbooks

- 30-Day Execution Plan

- FAQ

No-code adoption solved one major problem for most teams: shipping speed. What it did not solve is quality consistency. Many teams can publish pages quickly but still struggle with conversion clarity, trust design, and post-launch learning discipline.

The reason is straightforward. Removing engineering bottlenecks increases publishing capacity, but it also increases the number of people who can make structural decisions. Without clear standards, pages diverge, user journeys break, and performance becomes difficult to diagnose. Research from Nielsen Norman Group shows that inconsistent user experience and unclear structure significantly reduce usability and conversion performance, especially in fast-built digital products.

Unicorn Platform is effective because it allows rapid iteration while still supporting reusable page architecture. Speed becomes an advantage when it is paired with structure, governance, and evidence-based optimization.

Quick Takeaways

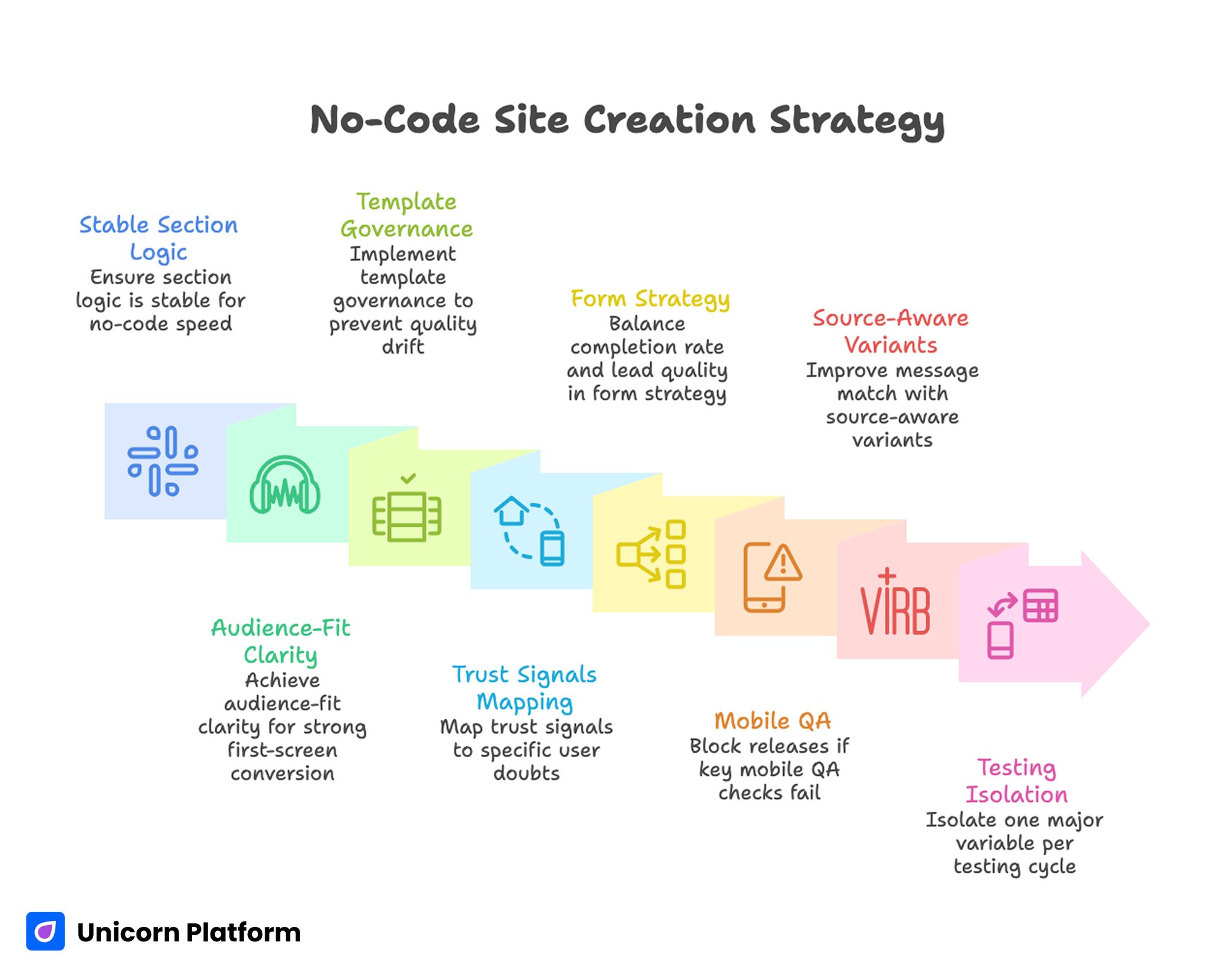

No-Code Site Creation Strategy

- No-code speed is valuable only when section logic is stable.

- Audience-fit clarity is the strongest first-screen conversion lever.

- Template governance prevents quality drift across contributors.

- Trust signals should be mapped to specific user doubts.

- Form strategy should balance completion rate and lead quality.

- Mobile QA should block releases when key checks fail.

- Source-aware variants improve message match without template chaos.

- Testing should isolate one major variable per cycle.

Why No-Code Projects Underperform Despite Fast Launches

A common failure pattern is surface-first editing. Teams adjust layout, colors, and components quickly, but the core narrative sequence remains weak. Users may find a page visually polished yet still struggle to understand value, credibility, and next steps.

A second pattern is template misuse. Teams copy one template across very different intents and only change headlines. This often creates mismatch between visitor expectations and section depth, especially when search, social, and email traffic all land on the same version.

A third pattern is weak release governance. Rapid updates are pushed without clear ownership, so no one is accountable for trust accuracy, conversion quality, or metric interpretation. Over time, this creates noisy data and inconsistent outcomes.

Start With Outcome and Audience Before Tool Selection

Teams often begin by comparing builders based on feature checklists. A better starting point is outcome definition. Clarify what the page must accomplish, which audience segment it serves, and what action quality looks like after conversion.

Outcome-first planning avoids tool-driven mistakes where advanced features are added because they exist, not because they improve decision flow. It also helps contributors evaluate edits by strategic impact rather than visual preference.

For teams onboarding quickly into drag-and-drop workflows, this simple drag-and-drop web presence guide can help align execution speed with practical launch priorities.

The Four-Layer Narrative Model for No-Code Pages

A reliable conversion sequence keeps page decisions consistent across teams. The four layers are relevance, mechanism, confidence, and action. Each layer answers a different question users need resolved before committing. Studies from CXL highlight that clear value propositions, structured messaging, and well-placed trust elements are among the strongest drivers of landing page conversion performance.

Relevance explains who the page is for and why it matters now. Mechanism explains how the outcome is produced. Confidence validates key claims with specific evidence. Action defines one clear next step aligned with readiness level.

When this model is preserved, teams can test copy and design variants safely. When the sequence breaks, conversion metrics may move unpredictably because users face cognitive gaps at decision moments.

If your team needs a deeper section-order baseline before testing variants, this high-converting page structure reference is useful for maintaining narrative clarity.

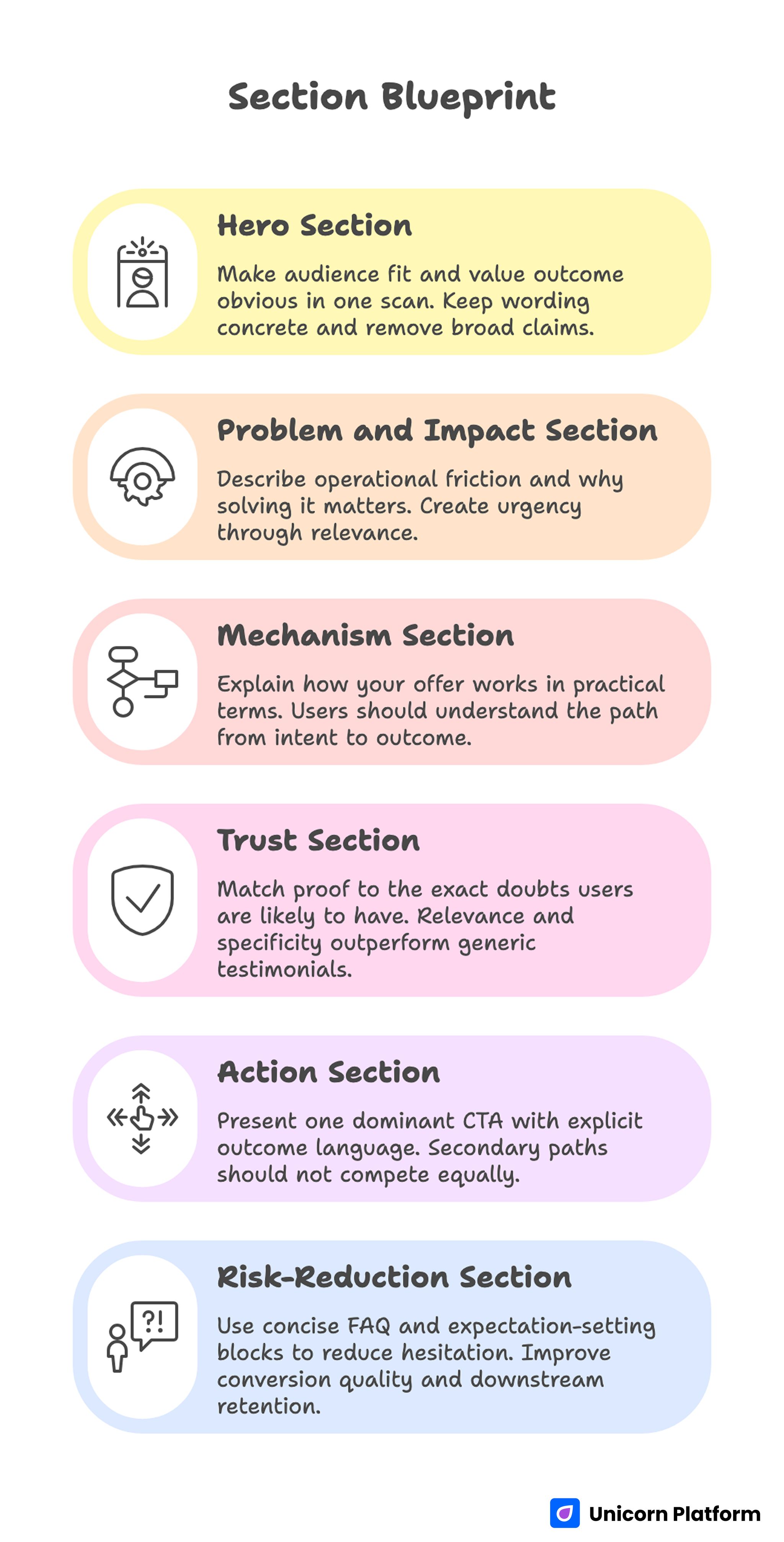

Section Blueprint for Repeatable No-Code Quality

Hero section

The hero must make audience fit and value outcome obvious in one scan. Keep wording concrete and remove broad claims that force interpretation.

Problem and impact section

Describe the operational friction users face and why solving it matters. This section should create urgency through relevance, not exaggeration.

Mechanism section

Explain how your offer works in practical terms. Users should be able to understand the path from intent to outcome without guessing.

Trust section

Match proof to the exact doubts users are likely to have at this stage. Relevance and specificity outperform high-volume generic testimonials.

Action section

Present one dominant CTA with explicit outcome language. Secondary paths can exist, but they should not compete equally with the primary action.

Risk-reduction section

Use concise FAQ and expectation-setting blocks to reduce last-mile hesitation. This often improves both conversion quality and downstream retention.

Template Governance: The Core Advantage at Scale

No-code teams scale successfully when templates encode strategic rules, not only visual components. A strong template system reduces contributor variance while preserving room for controlled experimentation.

Practical governance rules should include audience scope, required trust modules, CTA hierarchy, and mobile QA criteria. Each template family should also have an owner responsible for keeping modules accurate and current.

Template governance is not bureaucracy. It is what enables fast publishing without sacrificing reliability.

For teams that want stronger visual polish while keeping governance intact, this beautiful no-code website design guide can help balance aesthetics with conversion priorities.

Trust and Proof Placement in No-Code Environments

No-code pages often fail because proof is positioned as decoration instead of decision support. Users need evidence where uncertainty appears, not at the very end of the page.

A useful trust mapping approach pairs each high-stakes claim with one relevant proof element nearby. For implementation claims, show process evidence. For outcome claims, show context-rich results. For risk concerns, show clear boundaries and support expectations.

Refreshing proof on a fixed cadence is equally important. Outdated testimonials or stale claims can reduce trust faster than missing proof in the first place.

Form and CTA Strategy for Better Lead Quality

Fast publishing frequently leads to over-simplified forms that maximize submissions but reduce qualification quality. A better approach is staged friction: keep effort low for the first commitment, then capture the context that improves follow-up quality.

CTA language should describe the real next step. Generic verbs often create ambiguity and lower intent quality. Explicit phrasing improves user confidence and helps teams interpret funnel behavior.

Every form field should justify its presence by improving routing, relevance, or operational follow-up. If a field does not change what happens next, it likely belongs later.

Mobile and Performance as Release Gates

Mobile behavior cannot be treated as post-launch cleanup. In many growth programs, the first meaningful interaction happens on a phone, so weak mobile hierarchy can erase value before users reach the trust and action sections.

A minimum release gate should check first-screen readability, tap-target comfort, form keyboard flow, proof visibility, and load behavior under standard network conditions. These checks should be pass/fail, not optional recommendations.
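
A pass/fail gate like this can be expressed in a few lines. The sketch below is illustrative only: the check names and the `evaluate_release_gate` helper are assumptions that mirror the five items above, not part of any builder's API. A check that was never run counts as a failure, so a skipped step cannot slip through.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical check names, one per gate item listed in the text.
REQUIRED_CHECKS = (
    "first_screen_readability",
    "tap_target_comfort",
    "form_keyboard_flow",
    "proof_visibility",
    "load_behavior",
)


@dataclass(frozen=True)
class GateResult:
    passed: bool
    failures: tuple[str, ...]


def evaluate_release_gate(results: dict[str, bool]) -> GateResult:
    """Pass only if every required check is present and True."""
    # A missing check is treated the same as a failed one.
    failures = tuple(
        name for name in REQUIRED_CHECKS if not results.get(name, False)
    )
    return GateResult(passed=not failures, failures=failures)


if __name__ == "__main__":
    outcome = evaluate_release_gate(
        {"first_screen_readability": True, "tap_target_comfort": False}
    )
    print(outcome.passed, outcome.failures)
```

Returning the list of failed checks, rather than a bare boolean, gives the release owner something concrete to fix before the next attempt.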

Performance should be reviewed at decision moments, not only as aggregate load time. Delays near CTA or form interaction cause disproportionate conversion loss.

Source-Aware Variants Without Operational Chaos

One page rarely serves every channel equally well. Search visitors may need direct problem-solution framing, while social visitors often need faster relevance cues and stronger early proof.

Source-aware variants can improve fit when they preserve one stable narrative backbone. The goal is controlled adaptation, not fragmenting into unrelated page versions.

Define a variant policy in advance. Specify which sections can vary by source, which sections remain fixed, and which metrics determine whether a variant is kept or retired.
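
One way to make such a policy checkable is to store it as data and compare each variant against the control page. This is a minimal sketch under stated assumptions: the section names follow the blueprint earlier in this article, and the choice of which sections may vary is a placeholder that each team would set for itself.

```python
from __future__ import annotations

# Hypothetical policy: only these sections may differ by traffic source.
# Every other section is treated as part of the fixed narrative backbone.
VARIABLE_SECTIONS = frozenset({"hero", "problem_impact", "trust"})


def policy_violations(control: dict[str, str], variant: dict[str, str]) -> list[str]:
    """Return the sections a variant changed, added, or removed against policy.

    Both arguments map a section name to its content (or a content hash).
    """
    violations = []
    for section in sorted(set(control) | set(variant)):
        changed = control.get(section) != variant.get(section)
        if changed and section not in VARIABLE_SECTIONS:
            violations.append(section)
    return violations


if __name__ == "__main__":
    control = {"hero": "Search headline", "mechanism": "How it works", "action": "Book a demo"}
    social = {"hero": "Social headline", "mechanism": "How it works", "action": "Book a demo"}
    print(policy_violations(control, social))
```

An empty result means the variant adapted only what the policy allows. Anything else is a signal that the page is drifting into an unrelated version.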

If your team is launching first pages under tight timelines, this three-step launch workflow can help maintain discipline while rolling out early variants.

Measurement Hierarchy for No-Code Programs

Publish velocity is not a performance metric by itself. Teams need a layered model that links page updates to commercial outcomes.

A practical hierarchy includes:

- clarity signals, such as first-screen comprehension behavior

- interaction signals, such as progression through trust and CTA zones

- conversion signals, such as qualified submissions

- business signals, such as progression to revenue events

Each release should include one primary metric and at least one downstream guardrail metric. This prevents local wins that degrade broader funnel quality.
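
The primary-plus-guardrail rule can be written down as a small decision function. The sketch below is an illustration, not a statistical test: `release_decision` and its thresholds are hypothetical, and the inputs are assumed to be relative changes against baseline that the team has already judged reliable.

```python
def release_decision(
    primary_lift: float,
    guardrail_change: float,
    min_lift: float = 0.0,
    max_guardrail_drop: float = 0.0,
) -> str:
    """Classify a release from relative changes versus baseline.

    Values are fractions, so 0.05 means +5%. Thresholds are placeholders.
    """
    # The guardrail is checked first: a primary win never excuses a
    # downstream metric that fell beyond the agreed tolerance.
    if guardrail_change < -max_guardrail_drop:
        return "revert"
    if primary_lift > min_lift:
        return "keep"
    return "inconclusive"


if __name__ == "__main__":
    # Submissions rose 10%, but qualified progression fell 5%.
    print(release_decision(primary_lift=0.10, guardrail_change=-0.05))
```

Checking the guardrail before the primary metric is the design choice that blocks local wins from degrading the broader funnel.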

Builder Selection Scorecard for 2026 Teams

Many teams choose platforms with a feature checklist mindset and then discover operational gaps after launch. A stronger approach is scoring builders against the real work your team must perform for the next twelve months.

Start by weighting criteria according to business impact. For most teams, a practical weighting model covers six criteria: narrative control, reusable sections, publishing governance, integration flexibility, analytics clarity, and performance reliability.

Narrative control means more than visual freedom. You need predictable section behavior so contributors can iterate headlines, proof blocks, and CTAs without breaking page hierarchy.

Reusable section systems matter because repeated work destroys velocity at scale. If each page requires custom assembly, the team spends more time rebuilding patterns than improving outcomes.

Governance controls are critical when multiple contributors publish. Role permissions, review flow, and template ownership directly influence whether quality holds as publishing volume increases.

Integration flexibility should be judged by practical workflows, not logo counts on pricing pages. Check whether the platform supports your lead routing, CRM handoff, and campaign analytics in ways your team can maintain.

Analytics clarity is often underestimated. Teams need event visibility at decision points, especially around trust interaction, CTA clicks, and form progression, or they end up optimizing from incomplete signals.

Performance reliability should be tested under realistic conditions before rollout. Evaluate template speed after real images, embedded media, and form logic are present, not only on clean demo pages.

If you are reviewing visual direction and conversion logic at the same time, this real estate website design walkthrough shows how design choices can stay aligned with decision flow rather than aesthetics alone.

A practical way to compare options is to run one identical pilot page on each shortlisted tool. Keep the same copy structure, trust modules, CTA logic, and tracking plan, then evaluate with a fixed rubric after two weeks of traffic.
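
The weighted criteria and the fixed rubric can be combined into a simple score. The sketch below assumes a 1 to 5 rating per criterion. The weights and builder names are hypothetical placeholders, so adjust them to your own business impact before using the result.

```python
from __future__ import annotations

# Hypothetical weights that sum to 1.0; these are not recommendations.
WEIGHTS = {
    "narrative_control": 0.25,
    "reusable_sections": 0.20,
    "publishing_governance": 0.20,
    "integration_flexibility": 0.15,
    "analytics_clarity": 0.10,
    "performance_reliability": 0.10,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings into one weighted score; every criterion must be rated."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return sum(WEIGHTS[criterion] * ratings[criterion] for criterion in WEIGHTS)


def rank_builders(pilots: dict[str, dict[str, int]]) -> list[tuple[str, float]]:
    """Return (builder, score) pairs, highest score first."""
    scored = [(name, round(weighted_score(r), 2)) for name, r in pilots.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    pilots = {
        "builder_a": {criterion: 4 for criterion in WEIGHTS},
        "builder_b": {**{criterion: 3 for criterion in WEIGHTS}, "narrative_control": 5},
    }
    print(rank_builders(pilots))
```

Refusing to score a builder with a missing rating keeps the comparison honest, since an unrated criterion would otherwise count as zero by accident.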

The goal is not finding a perfect platform. The goal is selecting the environment where your team can publish fast, learn cleanly, and preserve quality as more contributors participate.

Migration Playbook: Move to No-Code Without Losing Performance

Migration projects fail when teams treat them as design refreshes instead of performance continuity programs. The safer approach is phased transition with baseline protection and controlled rollout.

Phase one is baseline capture. Before moving anything, document traffic source mix, conversion rate by page intent, top entry pages, and major funnel drop-off points so you can detect real changes after migration.

Phase two is page classification. Split pages into conversion-critical, support, and archival categories. Critical pages should move first with deeper QA, while low-impact pages can follow once the new system stabilizes.

Phase three is structure mapping. Preserve successful narrative sequence where it exists, then improve weak sections with clearer relevance, mechanism, confidence, and action blocks.

Phase four is tracking continuity. Recreate core events before launch and verify data parity between old and new page versions so reporting does not break during handoff.
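
A parity check can be as simple as comparing per-event counts exported from both versions. The sketch below assumes the counts come from comparable traffic windows, for example a short parallel run. The event names, the `parity_report` helper, and the 10% tolerance are all hypothetical.

```python
from __future__ import annotations


def parity_report(
    old_counts: dict[str, int],
    new_counts: dict[str, int],
    tolerance: float = 0.10,
) -> dict[str, str]:
    """Label each event 'ok', 'drift', or 'missing' across page versions."""
    report = {}
    for event in sorted(set(old_counts) | set(new_counts)):
        old = old_counts.get(event, 0)
        new = new_counts.get(event, 0)
        if old == 0 or new == 0:
            # An event that fires on only one side was probably not recreated.
            report[event] = "ok" if old == new else "missing"
        elif abs(new - old) / old <= tolerance:
            report[event] = "ok"
        else:
            report[event] = "drift"
    return report


if __name__ == "__main__":
    old = {"cta_click": 100, "form_submit": 40, "proof_view": 60}
    new = {"cta_click": 95, "form_submit": 20}
    print(parity_report(old, new))
```

A "missing" label usually points to a tracking gap, while "drift" may reflect either a tracking difference or a real behavior change, so it needs a manual look before the batch proceeds.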

Phase five is release gating. Run mobile checks, form flow checks, link validation, and trust proof accuracy checks before each migration batch is published.

Phase six is post-launch monitoring. Use a short daily review window in week one and a weekly rhythm after stabilization to catch regressions quickly.

A common migration mistake is replacing proven copy with generic brand messaging because the team wants a visual reset. If an old section is converting, keep its core logic and improve clarity instead of rewriting everything at once.

Another frequent issue is moving too many pages in one release wave. Smaller batches make it easier to isolate causes when performance changes and prevent high-cost debugging cycles.

Source-based traffic should also be validated after migration. Search, social, and paid visitors often react differently to layout and messaging adjustments, so monitor segment behavior rather than relying on site-wide averages.

For teams building conversion-focused campaign pages during migration, this real estate landing page guide is useful as a model for preserving message match from click to form completion.

Migration quality improves when ownership is explicit. Assign one decision owner each for structure, trust accuracy, and measurement so conflicting edits do not bypass release controls.

Successful migrations are rarely dramatic. They look operationally calm because teams protect proven logic, validate each release layer, and scale only after data consistency is confirmed.

Team Handoff Model: Keep Speed High as Contributors Increase

No-code teams usually start with one or two people and then add contributors from marketing, content, and design. Without a handoff model, this growth introduces inconsistency faster than most teams expect.

A practical handoff system uses three artifacts: a page brief, a section-level checklist, and a release note. Together they preserve intent, quality, and learning continuity across editors.

The page brief should define audience, desired action, proof requirements, and channel assumptions in plain operational language. This prevents contributors from making major structural decisions based only on visual preference.

The section checklist should be tied to decision flow. Reviewers should confirm whether each section answers the next user question rather than checking only grammar or brand tone.

Release notes should record what changed, why it changed, and which metric is expected to move. This small habit compounds into a useful internal evidence base over time.

A weekly review cadence is enough for most teams. Use one session for performance signals and one session for qualitative page walkthroughs so data and UX interpretation stay connected.

Quality usually declines when teams optimize too many pages at once with no prioritization logic. Keep a simple queue: high-traffic pages first, then high-intent pages with conversion friction, then long-tail pages.
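
The queue order above translates directly into a sort key. In this sketch the `Page` fields, the traffic threshold, and the example URLs are assumptions for illustration. What counts as "high traffic" or "high intent" is a decision each team has to make from its own data.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    url: str
    monthly_visits: int
    high_intent: bool
    has_conversion_friction: bool


def tier(page: Page, high_traffic_threshold: int = 5000) -> int:
    """Map a page to its queue tier: 0 is worked on first."""
    if page.monthly_visits >= high_traffic_threshold:
        return 0  # high-traffic pages
    if page.high_intent and page.has_conversion_friction:
        return 1  # high-intent pages with conversion friction
    return 2  # long-tail pages


def optimization_queue(pages: list[Page]) -> list[Page]:
    """Order pages by tier, then by traffic within each tier."""
    return sorted(pages, key=lambda p: (tier(p), -p.monthly_visits))


if __name__ == "__main__":
    pages = [
        Page("/blog/old-post", 300, False, False),
        Page("/pricing", 1200, True, True),
        Page("/", 20000, False, False),
    ]
    print([p.url for p in optimization_queue(pages)])
```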

When contributors need fast onboarding into repeatable setup patterns, this quick startup website creation guide can support training without forcing oversimplified templates into advanced use cases.

Disagreement is normal in cross-functional teams, so governance should define tie-break rules in advance. For example, structure decisions can be owned by conversion strategy, while visual style disputes can be resolved by design standards.

As teams mature, add quarterly template reviews to retire low-performing modules and standardize the strongest ones. That is how no-code systems stay fast without becoming fragmented libraries of inconsistent components.

Scenario Playbooks

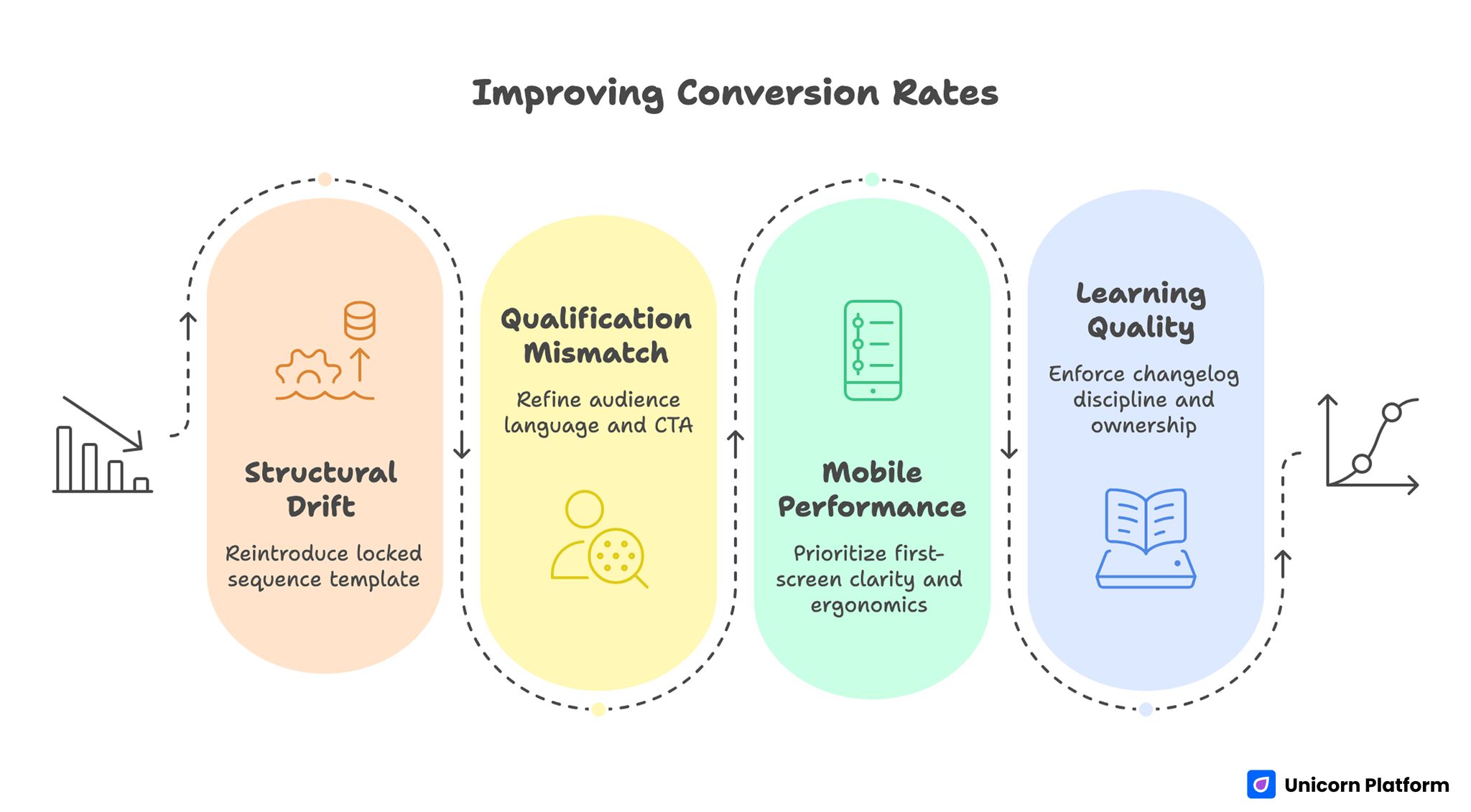

Improving Conversion Rates With Structured No-Code Page Design

Scenario 1: Fast publishing, inconsistent conversion

This usually indicates structural drift across pages. Reintroduce a locked sequence template, then test one major variable at a time to restore interpretability.

Scenario 2: High form submissions, low sales acceptance

The likely issue is qualification mismatch. Refine audience language, tighten CTA expectations, and add one routing-critical field.

Scenario 3: Good desktop performance, weak mobile outcomes

Hierarchy is likely collapsing on small screens. Prioritize first-screen clarity, proof placement, and tap-target ergonomics before further copy testing.

Scenario 4: Team velocity rises, learning quality falls

Documentation and ownership may be weak. Enforce changelog discipline and assign explicit release decision owners for strategy, trust, and analytics.

30-Day Execution Plan

Week 1: Baseline and architecture lock

Audit priority pages for sequence quality, trust placement, and CTA clarity. Lock a control template and define baseline metrics.

Week 2: Trust and action optimization

Improve proof relevance, align CTA language to readiness level, and simplify form friction where appropriate. Complete mobile and performance QA before release.

Week 3: Controlled testing

Run one major variable test per page family. Track both conversion and qualification quality to avoid false positives.

Week 4: Standardize and scale

Document outcomes, update template modules, and apply winning patterns to adjacent pages. Retire weak variants to reduce operational noise.

90-Day Operating Model

Month two should focus on reliability. Expand validated modules across related pages while maintaining strict governance rules.

Month three should institutionalize knowledge. Build an internal library of proven headline patterns, trust modules, and CTA variants by intent stage and source channel.

At ninety days, success should be assessed by the consistency of qualified outcomes, not only by the number of published pages.

FAQ: No-Code Site Creation

Is no-code enough for professional websites?

Yes, when teams apply strong structural and quality standards. Tool capability is rarely the limiting factor; process discipline is.

How many templates should a team maintain?

Maintain only the set needed for distinct intent categories. Too many templates increase governance overhead and reduce learning transfer.

What should we test first on underperforming pages?

Start with first-screen audience fit and CTA clarity. These changes often produce larger quality shifts than cosmetic design edits.

Should pages include navigation menus?

For conversion-focused pages, limited navigation usually performs better. If navigation is present, it should not compete with the primary action.

How can we improve lead quality without hurting conversion?

Use more specific positioning and clearer expectation-setting language. Better self-qualification often improves engagement while keeping conversion rates healthy.

How often should trust proof be refreshed?

A monthly review cadence is a practical default. Refresh sooner when offers, pricing, or audience concerns change materially.

What causes most no-code quality drift?

Uncontrolled edits from multiple contributors without shared rules. Template governance plus clear ownership usually resolves this quickly.

Should every traffic source have its own variant?

Not always. Create variants only when intent differences are strong enough to justify separate messaging emphasis.

Which metrics matter beyond conversion rate?

Track qualified submissions, follow-up progression quality, and downstream revenue-related outcomes. These reveal whether page performance is commercially useful.

Can small teams run this system effectively?

Yes, if roles are explicit and review cadence is consistent. One person can hold multiple roles, but responsibilities should remain clear.

Final Takeaway

No-code execution is most powerful when it runs on a clear operating system: stable narrative sequence, disciplined template governance, strict release QA, and evidence-based iteration.

With Unicorn Platform, teams can launch quickly without surrendering quality. The long-term advantage appears when each release improves both speed and the reliability of business outcomes.