Table of Contents

- The Four-Role Content Model

- Common Failure Patterns and Fixes

- 30-Day Execution Plan

- Scenario Playbooks

- FAQ

Most startups no longer ask whether they should publish content. They ask why output is growing while qualified pipeline stays inconsistent. Teams publish blog posts, short videos, social clips, newsletters, and landing pages, yet results often feel noisy instead of compounding.

The root issue is usually not effort. It is architecture. Without clear role definition for each format, shared messaging across channels, and outcome-level measurement, content becomes activity rather than leverage. Teams then optimize production speed while missing strategic fit.

A stronger approach treats content as an operating system. Each format has a job. Each job connects to a funnel stage. Each stage has measurable quality signals. Once this structure is in place, publishing velocity becomes useful because it feeds a learning loop instead of a random output loop.

This guide breaks down how to design that system in practice. It focuses on startup and SaaS teams that need reliable growth outcomes, not just more impressions.

Key Takeaways

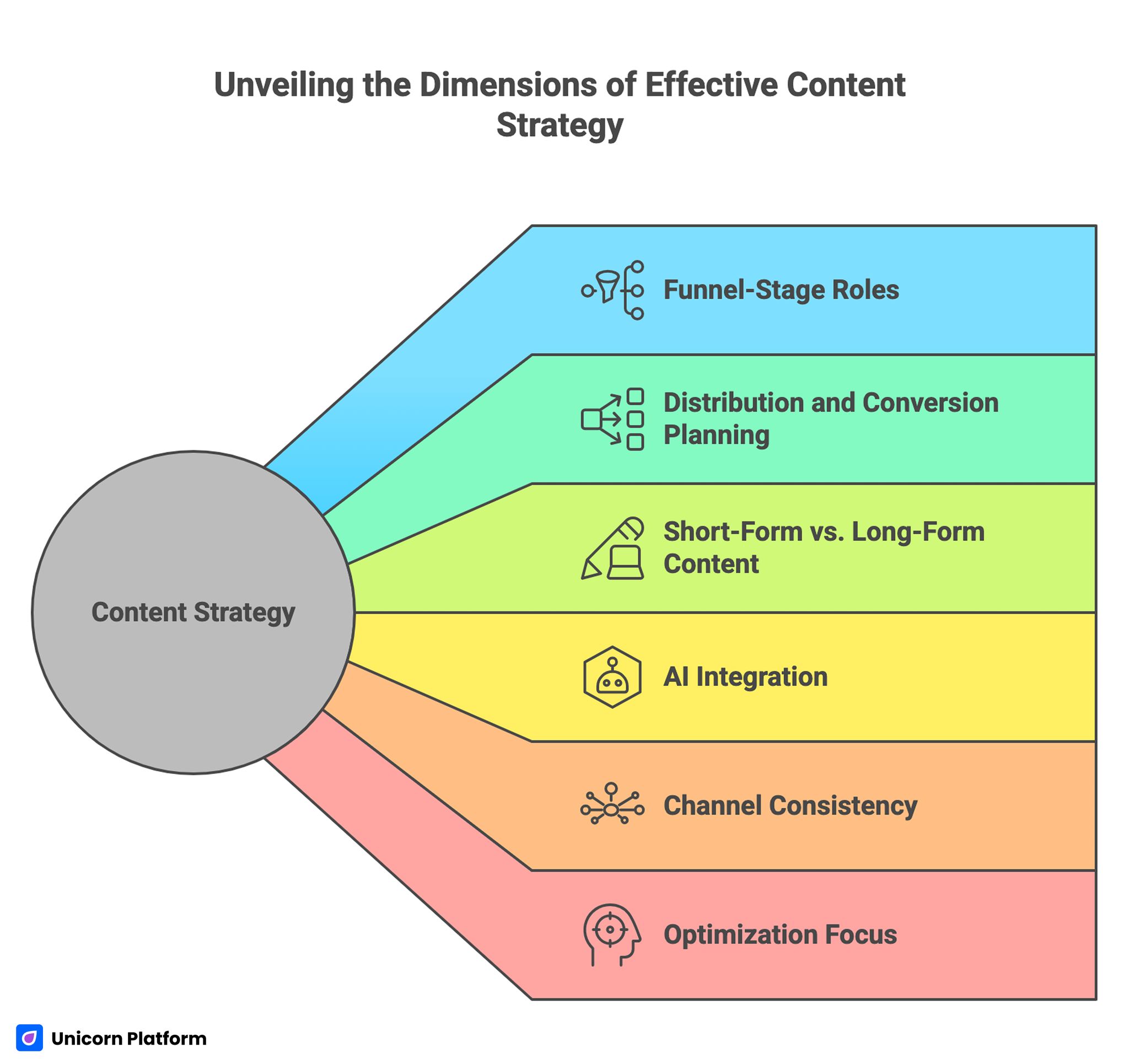

Unveiling the Dimensions of Effective Content Strategy

- Content works best when each format has one explicit funnel-stage role.

- Distribution and conversion pathways should be planned before production begins.

- Short-form output is useful, but long-form depth still drives trust and search durability.

- AI can accelerate execution when governance standards are defined in advance.

- Channel consistency matters more than channel volume.

- Monthly optimization should be tied to qualified outcomes, not vanity traffic spikes.

Why Content Programs Underperform

Most underperforming programs share the same structural flaws. The first flaw is role confusion. Teams create a piece because a topic is trending, then decide afterward what business job that piece should do. This puts production ahead of strategy, which is the reverse of the order the two should follow.

The second flaw is metric mismatch. Performance reviews prioritize views, clicks, and watch time while ignoring activation quality, lead qualification, or assisted conversion paths. When teams optimize for attention without intent quality, content growth can mask business stagnation.

The third flaw is channel drift. Blog messaging says one thing, short video messaging says another, and landing-page copy says something else entirely. Users who encounter multiple touchpoints then see inconsistency instead of confidence.

The fourth flaw is weak iteration discipline. Too many changes happen at once, and results are interpreted without clean hypotheses. Teams keep shipping, but learning does not accumulate.

The Four-Role Content Model

A practical startup content system can be built around four roles. These roles are not platform-specific. They are decision-stage specific.

Role 1: Discoverability Assets

These pieces attract new users by answering broad questions and matching early search or social intent. Their goal is relevance discovery, not immediate conversion.

Examples include intent-led SEO explainers, educational videos, and introductory comparison pieces. Success signals include session quality, topic relevance, and pathway progression into deeper assets.

Role 2: Consideration Assets

These assets reduce uncertainty. They help users compare options, understand tradeoffs, and evaluate fit.

Examples include framework articles, implementation walkthroughs, category comparisons, and case-oriented explainers. Success signals include deeper session behavior, assisted demo starts, and return-visit quality.

Role 3: Conversion Assets

These pages support action. They clarify offer boundaries, expected outcomes, and next steps so users can move with confidence.

Examples include campaign landing pages, signup pages, reservation pages, and high-intent service pages. Success signals include qualified conversions, activation quality, and lower pre-conversion support friction.

Role 4: Retention and Expansion Assets

These assets protect growth after initial conversion. They improve adoption quality and support long-term trust.

Examples include onboarding education, usage guides, feature deep dives, and outcome-focused update content. Success signals include activation depth, retention indicators, and expansion readiness.

When teams map each piece to one of these roles before production starts, editorial decisions become clearer and results become easier to diagnose. This also makes cross-functional planning faster because each asset has an explicit job.
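As a minimal sketch of what mapping each piece to a role could look like in practice, the Python below tags every asset with one of the four roles and surfaces anything that was never assigned a job. The `Role` and `Asset` names, the helper functions, and the sample inventory are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Role(Enum):
    DISCOVERABILITY = "discoverability"
    CONSIDERATION = "consideration"
    CONVERSION = "conversion"
    RETENTION = "retention_and_expansion"


@dataclass
class Asset:
    title: str
    role: Optional[Role] = None  # None means no role was assigned before production


def unmapped(assets):
    """Return titles of assets that have no role assigned."""
    return [a.title for a in assets if a.role is None]


def count_by_role(assets):
    """Count assets per role so gaps in the mix are visible."""
    counts = {role: 0 for role in Role}
    for a in assets:
        if a.role is not None:
            counts[a.role] += 1
    return counts


# Hypothetical inventory for illustration only.
inventory = [
    Asset("What is usage-based pricing?", Role.DISCOVERABILITY),
    Asset("Pricing model comparison", Role.CONSIDERATION),
    Asset("Trending topic reaction post"),
    Asset("Onboarding checklist", Role.RETENTION),
]

print(unmapped(inventory))                        # assets with no defined job
print(count_by_role(inventory)[Role.CONVERSION])  # how many conversion assets exist
```

In this sample, the trending-topic post has no role and the inventory contains no conversion asset, which are the two kinds of gap a role audit is meant to expose.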

Channel Strategy: One Message Core, Multiple Expressions

Startups often confuse multi-channel presence with strategic breadth. True breadth is message consistency across channel formats, not publishing frequency alone.

A practical method is to define one message core per campaign: audience context, core problem, outcome claim, and supporting proof. That core then gets translated into format-specific expressions.

A long-form article can provide depth and structure. A short video can deliver narrative clarity and emotional hook. A landing page can provide action readiness. The wording adapts, but the logic stays consistent.

This approach reduces contradiction between channels and improves user trust during multi-touch journeys. Over time, that consistency improves conversion quality across repeated visits.

Planning Content Backward From Conversion Paths

Production planning should start from desired outcomes, then map backward to supporting content. If a team starts with format ideas instead of outcome pathways, assets often miss business intent.

A useful planning sequence is listed below. Treat it as a pre-production gate before any asset enters drafting.

- Define target audience and decision stage.

- Define desired action and quality threshold.

- Identify objections that block action.

- Select format mix by role and channel fit.

- Define distribution plan and internal-link pathways.

- Set one primary metric and one guardrail metric.

This structure turns publishing into an experiment system rather than a volume contest. It also makes monthly performance reviews more actionable because intent is documented in advance.
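One way to enforce the planning sequence above as a pre-production gate is to treat the brief as a structured record and block drafting until every field is filled. The sketch below is an assumption about how a team might encode it. The `Brief` field names are hypothetical and loosely mirror the six steps, with the last step split into its two metrics.

```python
from dataclasses import dataclass, fields


@dataclass
class Brief:
    audience_and_stage: str = ""
    desired_action: str = ""
    objections: str = ""
    format_mix: str = ""
    distribution_plan: str = ""
    primary_metric: str = ""
    guardrail_metric: str = ""


def missing_fields(brief):
    """Return the names of brief fields that are still empty."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]


def passes_gate(brief):
    """A brief may enter drafting only when every field is filled in."""
    return not missing_fields(brief)


# Hypothetical brief for illustration only; the guardrail metric is left out.
draft = Brief(
    audience_and_stage="Ops leads at seed-stage SaaS, consideration stage",
    desired_action="Start a demo request",
    objections="Unclear migration effort",
    format_mix="Long-form article plus short video",
    distribution_plan="Newsletter, internal links from two explainers",
    primary_metric="Assisted demo starts",
)

print(passes_gate(draft))
print(missing_fields(draft))
```

Here the gate fails because no guardrail metric was defined, so the brief would go back to planning rather than into drafting.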

Video as a Trust Accelerator, Not a Standalone Strategy

Video can compress understanding faster than text in many contexts, especially when users need to evaluate workflows, outcomes, or product experience. Yet video underperforms when it is disconnected from supporting assets.

A strong startup video system should define role by stage: awareness clips for discovery, explanation videos for consideration, and action-oriented assets for conversion support. Each video should route users into the next logical step.

For teams operationalizing this channel, this video strategy guide for startups is useful for structuring production priorities around measurable outcomes. It helps teams avoid spending effort on clips that do not support a defined next step.

Video quality standards should include message clarity, context fit, and conversion pathway continuity. High view counts with weak downstream behavior usually signal role mismatch, not distribution failure.

AI-Assisted Production With Human Governance

AI can materially improve production speed across ideation, outlining, repurposing, and first-draft generation. The risk appears when speed is adopted without governance. That usually produces inconsistent quality, weak factual precision, and brand drift.

A practical governance model separates automation tasks from human-required tasks. Automation can handle draft scaffolding, variant generation, and metadata support. Human reviewers should own factual integrity, strategic fit, and trust-sensitive phrasing.

Teams implementing this model can use this AI marketing operations reference to align tooling choices with business outcomes instead of novelty. The strongest gains usually come from workflow design, not from adding more tools.

Define mandatory review checkpoints before publish. At minimum, every asset should pass accuracy review, audience-intent review, and conversion-alignment review.

SEO-Led Topic Selection Without Keyword Forcing

Search-driven planning still matters, but modern performance depends on intent fit and depth quality more than exact-match repetition. Strong SEO planning is about understanding what users are trying to decide and delivering clearer answers than alternatives.

A practical search engine results page (SERP) workflow includes the steps below. Running these checks before writing reduces topic guesswork and improves relevance.

- Identify the dominant intent class.

- Map content depth expectations.

- Detect missing subtopics in current coverage.

- Align format type to decision stage.

- Build internal pathways toward action assets.

For teams running this process at scale, this data-driven SEO planning guide provides a strong structure for topic prioritization and gap analysis. It is especially useful when editorial demand exceeds production capacity.

The goal is not to publish every possible topic. The goal is to publish the right cluster in the right sequence, then improve it with behavior evidence.

Integrating SEO, Video, and Conversion Pages

Many startup programs treat these channels as separate workstreams. The result is fragmented experiences and weaker cumulative impact.

A stronger model links each discoverability asset to one consideration asset and one conversion-ready destination. Video and article content should both feed toward action pages with clear context transitions.

This integration improves user progression and makes measurement clearer. Teams can evaluate path-level behavior instead of isolated channel metrics.

When users see consistent narrative across formats, trust increases and conversion friction drops. This creates a stronger assisted-conversion path even when users do not convert in the first session.
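The linking rule described in this section (each discoverability asset connects to one consideration asset and one conversion-ready destination) can be checked mechanically. The sketch below assumes a simple, hypothetical link map kept by the team. The asset names, the `roles` and `links` dictionaries, and the `pathway_gaps` helper are illustrative and not part of any specific tool.

```python
# Hypothetical role assignments for a small cluster.
roles = {
    "seo-explainer": "discoverability",
    "intro-video": "discoverability",
    "comparison-guide": "consideration",
    "demo-signup": "conversion",
}

# Hypothetical link map: each asset lists the assets it links to.
links = {
    "seo-explainer": ["comparison-guide", "demo-signup"],
    "intro-video": ["comparison-guide"],
}


def pathway_gaps(roles, links):
    """For each discoverability asset, list the linked roles it is missing."""
    gaps = {}
    for asset, role in roles.items():
        if role != "discoverability":
            continue
        linked_roles = {roles[t] for t in links.get(asset, []) if t in roles}
        missing = [r for r in ("consideration", "conversion") if r not in linked_roles]
        if missing:
            gaps[asset] = missing
    return gaps


print(pathway_gaps(roles, links))
```

In this sample the intro video links to a consideration asset but has no conversion-ready destination, so it is reported as a pathway gap.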

Editorial Workflow for Cross-Functional Teams

High-performing programs rely on workflow discipline more than creative bursts. A simple but strict process usually beats complex tooling with weak ownership.

A practical workflow includes the stages below. Keep each stage lightweight but explicit so quality does not drift during fast cycles.

- Brief creation with intent and metric definition.

- Outline and format selection by role.

- Draft production with source and claim checks.

- Editorial review for clarity and consistency.

- QA review for conversion and readability.

- Launch with tracked hypothesis.

- Post-launch review with keep/stop/test decisions.

Clear ownership is essential. Assign owners for strategy, production, distribution, analytics, and final QA. Ambiguous ownership slows decisions and reduces accountability.

Measurement Framework for Content Quality

Performance reviews should answer one question first: did this content improve qualified business outcomes at its intended stage? Starting with this question prevents teams from overvaluing cosmetic performance metrics.

A balanced measurement stack might include the indicators below. Together they provide a clearer picture than traffic or engagement metrics alone.

- Discoverability: qualified organic sessions and pathway progression.

- Consideration: depth behavior and assisted conversion influence.

- Conversion: qualified action rate and activation quality.

- Retention: adoption behavior and repeat engagement quality.

This stage-aware model helps teams avoid misleading conclusions from surface metrics. It also highlights where in the funnel content is creating or losing momentum.

Guardrail Metrics Matter

Every primary metric should have a guardrail. For example, if signup rate rises while activation quality falls, the page may be overpromising. Guardrails prevent teams from scaling the wrong wins.
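A rough sketch of how a primary metric and its guardrail might be read together is shown below. The `review` function, its decision labels, and the 5% tolerance for guardrail decline are assumptions chosen for illustration, and the numbers in the example are made up.

```python
def review(primary_before, primary_after, guardrail_before, guardrail_after,
           max_guardrail_drop=0.05):
    """Classify a change using one primary metric and one guardrail metric.

    All metric values are rates between 0 and 1. max_guardrail_drop is the
    largest relative decline in the guardrail that is tolerated (an assumed
    threshold, not a recommended value).
    """
    if guardrail_before <= 0:
        raise ValueError("guardrail_before must be positive")

    primary_improved = primary_after > primary_before
    guardrail_change = (guardrail_after - guardrail_before) / guardrail_before
    guardrail_held = guardrail_change >= -max_guardrail_drop

    if primary_improved and guardrail_held:
        return "keep"
    if primary_improved:
        return "investigate"  # the win may come from overpromising
    return "stop"


# Signup rate rises from 4.0% to 5.2% while activation falls from 60% to 45%.
print(review(0.040, 0.052, 0.60, 0.45))

# Same signup gain with activation roughly stable (60% to 59%).
print(review(0.040, 0.052, 0.60, 0.59))
```

The first case matches the example in the text: the primary metric improved, but the guardrail fell by a quarter, so the change is flagged rather than scaled.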

Segment Before You Decide

Read results by source, audience cohort, and intent stage. A change that works for high-intent search traffic may fail for social traffic with lower readiness.
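To illustrate why segmenting matters, the sketch below compares a blended conversion rate with per-source rates. The rows are invented sample data, and the `rate_by_segment` helper is a hypothetical name. A real review would pull these figures from the team's analytics export.

```python
from collections import defaultdict

# Hypothetical rows: (traffic source, visitors, qualified conversions).
rows = [
    ("organic_search", 400, 32),
    ("organic_search", 600, 48),
    ("social", 1500, 15),
    ("social", 500, 5),
]


def rate_by_segment(rows):
    """Aggregate visitors and conversions per segment, then compute the rate."""
    totals = defaultdict(lambda: [0, 0])
    for segment, visitors, conversions in rows:
        totals[segment][0] += visitors
        totals[segment][1] += conversions
    return {seg: conv / vis for seg, (vis, conv) in totals.items() if vis > 0}


blended = sum(r[2] for r in rows) / sum(r[1] for r in rows)
by_segment = rate_by_segment(rows)

print(f"blended: {blended:.3f}")
for segment, rate in by_segment.items():
    print(f"{segment}: {rate:.3f}")
```

With this sample data the blended rate is about 3.3%, while search traffic converts at 8% and social traffic at 1%, so a decision made on the blended number would misread both segments.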

Common Failure Patterns and Fixes

Failure Pattern 1: Publishing by calendar pressure

Fix: prioritize by strategic role and measurable hypothesis, not by empty calendar slots. This shifts production from activity management to outcome management.

Failure Pattern 2: Repeating similar angles across formats

Fix: define the unique job of each asset before drafting. Role clarity usually reduces rewrite cycles and improves narrative coherence.

Failure Pattern 3: Heavy top-funnel output, weak conversion pathways

Fix: connect every discoverability asset to a clear next-step destination. Without progression pathways, top-funnel performance rarely compounds.

Failure Pattern 4: AI drafts shipped with minimal review

Fix: enforce mandatory human checkpoints for factual and trust-sensitive content. This preserves quality while still benefiting from production speed.

Failure Pattern 5: Content decisions based on one dashboard metric

Fix: use stage-specific primary metrics and guardrails in every review cycle. Guardrails protect quality when primary metrics temporarily improve for the wrong reasons.

Failure Pattern 6: Channel teams operating in isolation

Fix: define one campaign message core and enforce cross-channel consistency. Users should recognize the same promise regardless of entry channel.

30-Day Execution Plan

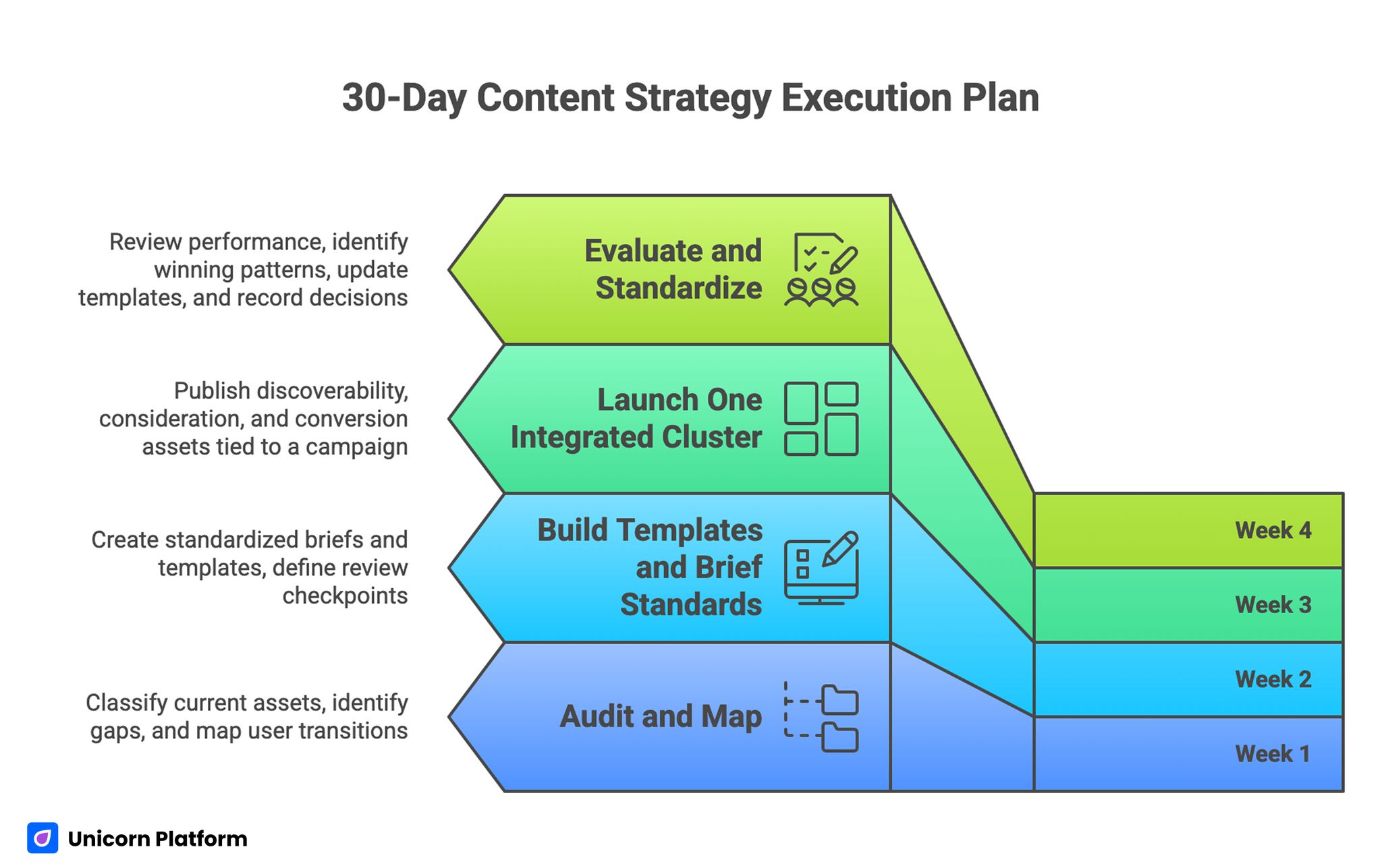

30-Day Content Strategy Execution Plan

Week 1: Audit and Map

Classify current assets by role, intent stage, and channel. Identify gaps where users lack clear transitions from discovery to action.

Week 2: Build Templates and Brief Standards

Create standardized briefs and template structures for each role. Define review checkpoints and ownership for quality control.

Week 3: Launch One Integrated Cluster

Publish one discoverability asset, one consideration asset, and one conversion asset tied to the same campaign objective. Connect them with clear internal pathways.

Week 4: Evaluate and Standardize

Review path-level performance, identify one winning pattern, and update default templates. Record one keep, one stop, and one next test decision.

Repeat monthly so improvements compound with every cycle. Consistent review cadence usually outperforms occasional large resets.

Scenario Playbooks

Scenario 1: High Publishing Volume, Low Pipeline Impact

A startup team publishes weekly across blog and social, yet sales-qualified outcomes stay flat. Review shows assets are mostly top-funnel and weakly connected to decision-stage content. Building a role-based cluster with clearer transitions typically improves sales-qualified outcomes without increasing total output.

Scenario 2: Strong Video Reach, Weak Conversion

A team gets high watch metrics but limited downstream action. The main issue is missing bridge assets and unclear CTA continuity. Pairing video topics with aligned consideration pages and action-ready destinations often improves trust progression and conversion quality.

Scenario 3: Fast AI-Assisted Output, Inconsistent Quality

A content team scales production using automation, but performance is unstable and messaging drifts across channels. Adding governance checkpoints and brief standards usually restores consistency while preserving speed gains.

FAQ: Modern Content Strategy in 2026

1. How many formats should a startup use at once?

Start with a small mix that covers discovery, consideration, and conversion. Scale only after the first mix produces repeatable quality outcomes.

2. Should every piece of content include a CTA?

Yes, but CTA intensity should match user readiness. Early-stage assets can use low-friction progression actions.

3. Is long-form content still worth investing in?

Yes. Long-form assets still drive trust, search durability, and decision support when they are practical and well structured.

4. How do we avoid channel inconsistency?

Define a message core for each campaign and adapt tone by format without changing core claims and proof logic. This preserves credibility while allowing creative flexibility by channel.

5. Where should AI fit in the workflow?

Use AI for speed tasks and keep humans on strategic judgment, factual precision, and trust-sensitive language. That division of labor keeps output fast and dependable.

6. What is the best first metric to track?

Track the stage-specific metric tied to your campaign goal, then add one guardrail to protect quality. This helps teams avoid scaling gains that hurt downstream performance.

7. How often should content plans be revised?

Run monthly performance reviews and quarterly strategic resets based on path-level outcomes. This cadence balances execution speed with strategic stability.

8. What causes most content waste?

Publishing assets that are not mapped to a role, stage, and measurable next-step pathway is the most common source of wasted effort. Mapping before production makes each asset more relevant and easier to reuse.

9. Can one team handle SEO, video, and conversion content together?

Yes, with clear ownership and shared workflow standards. Alignment is more important than team size.

10. What should we test first?

Test first-screen relevance and pathway clarity between formats before testing aesthetic variations. Messaging and navigation clarity usually create larger gains than visual refinements in early cycles.

Final Takeaway

Strong content programs are built, not improvised. When teams define role-specific formats, enforce channel consistency, use AI with governance, and evaluate outcomes by stage quality, content becomes a compounding growth system.

The competitive edge comes from discipline: clear intent, clear pathways, clear measurement, and continuous iteration based on real user behavior. Teams that hold this discipline consistently tend to outperform teams with higher output but weaker operating structure.