Table of Contents

- Coming-Soon Page Architecture That Converts

- 30-Day Operating Plan

- Common Mistakes and Fast Fixes

- FAQ

When startups struggle at launch, the cause is usually not that the product is invisible. More often, the early audience is weakly qualified, poorly informed, or disconnected from the promise made on the prelaunch page.

That is why prelaunch should be treated as the first growth stage, not a temporary marketing task. The goal is not only to collect signups. The goal is to identify people who will advocate, activate, and pull others into your launch cycle.

The founders who win this stage build a clear system: one focused page, one meaningful incentive, one follow-up sequence, and one weekly testing rhythm. They optimize for early promoter quality instead of vanity list size.

This guide explains how to run that system with practical constraints and measurable outcomes. It focuses on process quality so your launch week starts with qualified demand instead of noisy list volume.


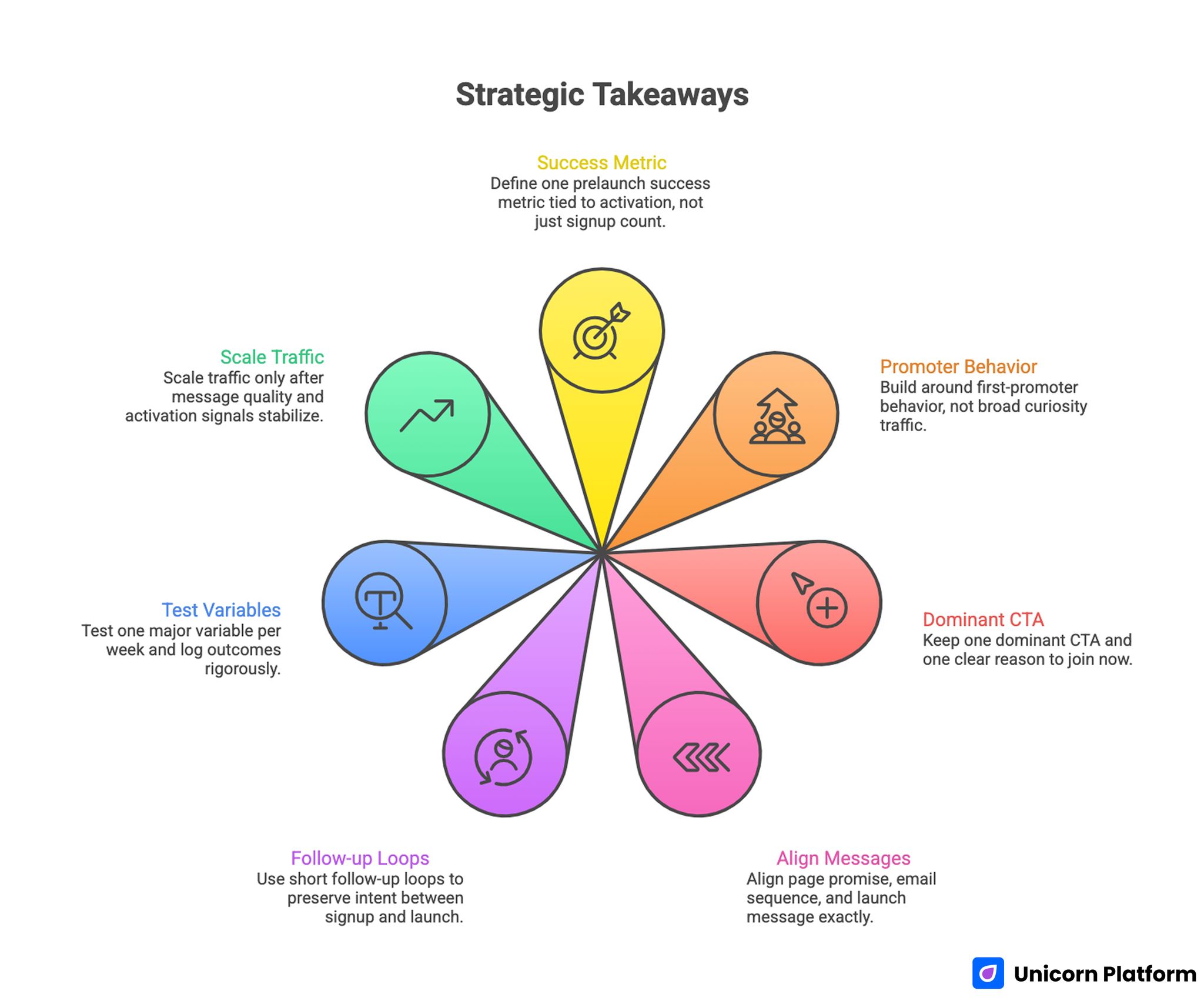

Quick Strategic Takeaways

Strategic Takeaways for Achieving First-Promoter Growth in Startup Prelaunch

- Define one prelaunch success metric tied to activation, not just signup count.

- Build around first-promoter behavior, not broad curiosity traffic.

- Keep one dominant CTA and one clear reason to join now.

- Align page promise, email sequence, and launch message exactly.

- Use short follow-up loops to preserve intent between signup and launch.

- Test one major variable per week and log outcomes rigorously.

- Scale traffic only after message quality and activation signals stabilize.

Who Your First Promoters Actually Are

First promoters are not only early signups. They are users who understand the promise quickly, believe it is relevant, and take at least one downstream action beyond form completion.

In practice, this means they open updates, reply with useful feedback, refer peers, or show launch-day readiness. These behaviors are better indicators of demand quality than raw list volume.

A high-volume waitlist with weak promoter behavior creates launch noise. A smaller list with strong promoter behavior creates launch momentum.

Prelaunch Funnel Design for Promoter Quality

Strong prelaunch funnels should be mapped before page copy is written. This prevents disconnected execution where page, incentive, and follow-up messages pull in different directions.

A practical funnel map includes the full handoff sequence from first click to launch-day action. This map keeps teams honest about where intent is being lost.

- traffic source intent

- first-screen promise

- signup action and incentive

- first follow-up message

- second follow-up milestone

- launch-day activation path

If one step is unclear, quality drops at scale. For example, a compelling page with vague follow-up often creates high signup rates and low activation rates.

Message-Market Fit Check Before Scaling

Many teams increase traffic too early. They see acceptable signup volume and assume the message is validated. Real validation happens when signups show promoter behavior in the first 7 to 14 days.

Run a simple message-fit check before scaling budget. The point is to confirm understanding quality, not only conversion percentage.

- Can users restate your value proposition clearly?

- Do follow-up replies reflect the problem you claim to solve?

- Is referral behavior present without aggressive prompting?

- Are activation-intent signals stable across sources?

If these signals are weak, improve message clarity first. Traffic amplification should come after fit validation, not before.
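The last question in the check, whether activation-intent signals are stable across sources, can be made concrete by comparing per-source rates. This is a minimal sketch: what counts as an "intent" signal (a reply, a readiness click) and the `MAX_SPREAD` tolerance are assumptions you would set from your own data.

```python
# Hypothetical per-source counts: total signups and signups that showed
# an activation-intent signal (for example, a reply or a readiness click).
SOURCE_SIGNALS = {
    "founder_community": {"signups": 120, "intent": 48},
    "newsletter": {"signups": 200, "intent": 70},
    "paid_social": {"signups": 300, "intent": 30},
}

MAX_SPREAD = 0.15  # assumed tolerance between best and worst source


def intent_rates(signals):
    """Share of signups per source that showed an intent signal."""
    return {
        src: d["intent"] / d["signups"]
        for src, d in signals.items()
        if d["signups"]
    }


def signals_stable(signals, max_spread=MAX_SPREAD):
    """True when the gap between the best and worst source stays within max_spread."""
    rates = intent_rates(signals)
    if not rates:
        return False
    return max(rates.values()) - min(rates.values()) <= max_spread
```

Here community and newsletter traffic sit at 40% and 35%, while paid social sits at 10%, so the check fails. That gap suggests paid visitors are reading a different promise, which is a message-clarity problem to fix before adding budget.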

Coming-Soon Page Architecture That Converts

A high-performing prelaunch page should move users through one predictable decision flow. It should establish relevance, reduce risk, and make the next action obvious within the first screen.

Use this architecture as a stable default before running experiments. Structural consistency makes your test results easier to interpret.

- specific audience-facing headline

- concise problem and outcome frame

- credibility or progress proof

- one low-friction signup action

- incentive explanation in plain language

- expectation block for timeline and updates

- FAQ for common objections
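If your pages are assembled from a template, the default architecture above can be encoded as a spec and checked before each experiment, so structural drift does not contaminate test results. The section labels and the `check_page` helper below are hypothetical; the check enforces the listed order and exactly one signup CTA.

```python
from dataclasses import dataclass


@dataclass
class Section:
    kind: str  # hypothetical section label, e.g. "headline"
    has_cta: bool = False


# Assumed labels mirroring the default architecture, in order.
REQUIRED_ORDER = ["headline", "problem_outcome", "proof", "signup_form",
                  "incentive", "expectations", "faq"]

DEFAULT_PAGE = [Section(k, has_cta=(k == "signup_form")) for k in REQUIRED_ORDER]


def check_page(sections):
    """Return structural problems; an empty list means the page matches the default."""
    problems = []
    kinds = [s.kind for s in sections]
    missing = [k for k in REQUIRED_ORDER if k not in kinds]
    if missing:
        problems.append(f"missing sections: {missing}")
    elif [k for k in kinds if k in REQUIRED_ORDER] != REQUIRED_ORDER:
        problems.append("sections out of default order")
    cta_count = sum(s.has_cta for s in sections)
    if cta_count != 1:
        problems.append(f"expected exactly 1 CTA, found {cta_count}")
    return problems
```

Adding an exit popup with its own CTA, for example, would return `["expected exactly 1 CTA, found 2"]`.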

For teams refining this structure, this guide to effective waitlist landing pages is useful for improving conversion logic without adding clutter.

Incentive Design That Attracts Better Promoters

The right incentive filters audience quality. The wrong incentive inflates your list with low-intent signups.

High-quality incentive models include early feature access, priority onboarding, founding-user pricing, and referral-linked queue movement. Each model should be matched to your product type and user motivation.

Avoid incentives that attract opportunistic signups disconnected from product value. Discount-only framing can inflate top-funnel volume while reducing downstream activation quality.
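Referral-linked queue movement is the most mechanical of these incentive models. A minimal sketch, assuming each verified referral moves a subscriber up a fixed number of spots: counting only verified referrals (for example, referred users who confirmed their email) is one way to avoid rewarding the opportunistic signups described above. `SPOTS_PER_REFERRAL` and `queue_position` are hypothetical names and values.

```python
SPOTS_PER_REFERRAL = 10  # hypothetical jump size per verified referral


def queue_position(signup_rank, verified_referrals, spots_per_referral=SPOTS_PER_REFERRAL):
    """Move a subscriber up the waitlist per verified referral, never above position 1."""
    return max(1, signup_rank - verified_referrals * spots_per_referral)
```

For example, `queue_position(250, 3)` gives 220, and `queue_position(15, 5)` stops at 1. This simplified version lets several subscribers share a position; a production queue would need tie-breaking, such as signup time.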

Form Friction Strategy for Better Conversion

Many prelaunch pages ask for too much information too early. That increases abandonment and often lowers trust, because users do not yet understand the product's value.

A practical first-touch form usually needs email plus one optional qualifier. Additional details should be collected after users receive context and proof through follow-up messages.
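In code, an "email plus one optional qualifier" form reduces to a small validator that requires only the email and ignores extra fields. This is a sketch under assumptions: the loose regex is not full email validation (a confirmation email does that job), and the `role` qualifier and its options are hypothetical.

```python
import re

# Deliberately loose email check; real verification happens via a confirmation email.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical qualifier options; pick one question that predicts fit for your product.
ROLE_OPTIONS = {"founder", "marketer", "developer", "other"}


def validate_signup(form):
    """Require only email; accept one optional qualifier and drop any other fields."""
    errors = []
    email = form.get("email", "").strip()
    if not EMAIL_PATTERN.match(email):
        errors.append("email")
    role = form.get("role") or None  # treat a blank answer as "not provided"
    if role is not None and role not in ROLE_OPTIONS:
        errors.append("role")
    return {"email": email, "role": role}, errors
```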

If signup quality looks weak, do not default to adding many required fields. First test promise clarity and incentive relevance. Form complexity is rarely the first fix for message mismatch.

Follow-Up Sequence That Preserves Intent

Signup is the start of demand management, not the end of conversion. Teams that treat signup as a finish line usually see launch-day drop-off.

Use a short, structured sequence. Each email should have one clear objective and one measurable action.

- Welcome and expectation reset

- Progress update tied to one concrete milestone

- Action prompt linked to referral or launch readiness

Each message should repeat core value language from the landing page. Consistency across page and email improves trust and increases activation reliability.
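The three-email sequence and the consistency rule can be written down together so the rule is checked, not just remembered. In the sketch below, the send days, metric names, email bodies, and the `CORE_PROMISE` phrase are all hypothetical, and a verbatim substring match is a deliberately crude stand-in for a human review of message consistency.

```python
from dataclasses import dataclass

CORE_PROMISE = "launch page in a day"  # hypothetical value phrase from the page hero


@dataclass
class FollowUp:
    send_day: int       # days after signup (assumed timing)
    objective: str
    action_metric: str  # the one measurable action for this email
    body: str


SEQUENCE = [
    FollowUp(0, "welcome and expectation reset", "reply_rate",
             "Thanks for joining. We are building a way to get a launch page in a day; "
             "expect an update every two weeks."),
    FollowUp(7, "progress update on one milestone", "update_click_rate",
             "Milestone reached: templates are done, one step closer to a launch page in a day."),
    FollowUp(14, "referral or launch-readiness prompt", "referral_rate",
             "Know someone who needs a launch page in a day? Share your invite link."),
]


def off_message(sequence, promise=CORE_PROMISE):
    """Return send days of emails that do not repeat the core promise."""
    return [m.send_day for m in sequence if promise.lower() not in m.body.lower()]
```

An empty result means every email repeats the page promise; a generic "Big news coming soon!" email added on day 21 would be flagged.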

When teams prioritize this communication layer, launch-day engagement improves even without increasing signup volume. Better sequencing also reduces support load because expectations are set earlier.

Weekly Test Cadence for Early Growth

Prelaunch performance improves fastest under controlled testing. Random edits create noise and weak decision quality.

Useful weekly experiments include headline angle, CTA phrasing, incentive framing, proof placement, and form field count. Keep one major variable per cycle and document metric impact clearly.

A practical test log should include hypothesis, change, primary metric, secondary quality metric, and keep-or-revert decision. This turns weekly experiments into reusable operating knowledge.
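A test log entry with those five fields, plus a keep-or-revert rule, might look like the sketch below. The rule shown (keep only if the primary metric improves and the quality metric does not fall past a tolerance) is one reasonable assumption, not the only valid policy, and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TestLogEntry:
    week: int
    hypothesis: str
    change: str
    primary_metric: str
    primary_before: float
    primary_after: float
    quality_metric: str
    quality_before: float
    quality_after: float

    def decision(self, max_quality_drop=0.0):
        """Keep only if the primary metric improved and quality held within tolerance."""
        primary_up = self.primary_after > self.primary_before
        quality_ok = self.quality_after >= self.quality_before - max_quality_drop
        return "keep" if primary_up and quality_ok else "revert"


entry = TestLogEntry(
    week=2,
    hypothesis="An outcome-led headline increases qualified signups",
    change="Headline: feature list -> one concrete outcome",
    primary_metric="signup_rate", primary_before=0.18, primary_after=0.22,
    quality_metric="first_followup_click_rate", quality_before=0.30, quality_after=0.24,
)
```

Here `entry.decision()` returns `"revert"`: signups rose, but follow-up clicks fell, which is the kind of win that inflates the list without improving promoter quality.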

For teams that need to ship faster between tests, this launch-your-site-in-3-steps workflow can help reduce production delay while keeping page quality controlled.

SEO During Prelaunch Without Diluting Focus

Prelaunch SEO works when supporting content answers real user questions and routes readers to one clear next action. It fails when teams publish broad content without conversion pathways.

A practical prelaunch SEO model includes use-case pages, objection-focused FAQ pages, and comparison pages tied to the main waitlist flow. Each support page should contain one context-appropriate action route.

For founders balancing tool choice and execution speed, this free website platform comparison for startups can help decide stack fit before scaling content production.

Quality Metrics That Matter More Than List Size

Raw signup totals can be misleading. High list volume with low engagement often indicates weak fit or unclear expectations.

Track these quality metrics across source, segment, and week-over-week movement. Trend direction often matters more than any single-day result.

- qualified signup ratio by source

- first follow-up open and click trend

- reply quality and objection patterns

- referral participation rate

- launch-day activation readiness
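As an example of tracking by source and week, the first metric (qualified signup ratio) and its week-over-week movement take only a few lines. How a signup becomes "qualified" is your own definition; the rows below are hypothetical.

```python
# Hypothetical weekly rows: (week, source, signups, qualified_signups)
ROWS = [
    (1, "community", 80, 40),
    (1, "paid_social", 200, 40),
    (2, "community", 90, 50),
    (2, "paid_social", 300, 45),
]


def qualified_ratio_by_source(rows):
    """Map (source, week) -> qualified signups / signups."""
    return {
        (source, week): (qualified / signups if signups else 0.0)
        for week, source, signups, qualified in rows
    }


def week_over_week(rows, week):
    """Change in qualified ratio per source between week - 1 and week."""
    ratios = qualified_ratio_by_source(rows)
    sources = {source for _, source, _, _ in rows}
    return {
        s: ratios[(s, week)] - ratios[(s, week - 1)]
        for s in sources
        if (s, week) in ratios and (s, week - 1) in ratios
    }
```

In this sample, paid social signups grow by half from week 1 to week 2 while its qualified ratio falls from 20% to 15%, and community traffic improves. That is the "high volume, weaker quality" signal that raw totals hide.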

Quality metrics reveal whether demand is compounding or decaying. They also help teams decide when to scale paid traffic versus when to refine message-market fit.

Channel-Specific Acquisition Plans Before Launch

Prelaunch acquisition performs better when message format matches channel behavior. A founder community click and a paid social click rarely arrive with the same intent depth, so routing both into identical copy often weakens results.

Use one core promise across channels, then adjust framing for the audience context. The objective is consistency of value, not identical wording everywhere.

Community and founder channels

Community traffic from Slack groups, niche forums, and founder circles usually responds to specificity and transparency. Users want to know what is being built, for whom, and why this release is worth their attention now.

For these channels, lead with problem clarity and proof of progress rather than polished hype. A concise build timeline, transparent beta criteria, and direct founder voice usually outperform generic launch language.

Partner and newsletter channels

Partner placements and newsletter mentions can create sharp volume spikes, but quality depends on message match between the partner narrative and your landing page promise. If readers click expecting one outcome and land on broader copy, promoter quality drops quickly.

Create dedicated variants for high-impact partner placements with mirrored language in the hero and CTA. This keeps cognitive friction low and protects conversion quality during short high-volume windows.

Paid tests with intent filters

Paid prelaunch campaigns should begin with small-budget intent-filter tests, not broad scaling. Focus on learning which audience segments produce the strongest follow-up behavior after signup.

Pair each ad set with one intent hypothesis and evaluate by downstream quality signals, not only cost per lead. This keeps acquisition decisions tied to launch readiness rather than vanity efficiency metrics.
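A worked comparison shows why cost per lead can mislead. The sketch ranks ad sets by spend per signup that showed follow-up engagement, rather than by spend per signup. The ad-set names and numbers are hypothetical, and "engaged after signup" stands in for whichever downstream quality signal you choose.

```python
# Hypothetical small-budget test results per ad set.
AD_SETS = {
    "broad_interest": {"spend": 300.0, "leads": 150, "engaged_after_signup": 15},
    "niche_job_title": {"spend": 300.0, "leads": 60, "engaged_after_signup": 24},
}


def cost_per_lead(d):
    return d["spend"] / d["leads"]


def cost_per_engaged(d):
    """Spend divided by signups that showed follow-up behavior."""
    engaged = d["engaged_after_signup"]
    return d["spend"] / engaged if engaged else float("inf")


def rank_by_quality(ad_sets):
    """Cheapest engaged signup first."""
    return sorted(ad_sets, key=lambda name: cost_per_engaged(ad_sets[name]))
```

The broad set wins on cost per lead ($2.00 vs $5.00) but loses on cost per engaged signup ($20.00 vs $12.50), so the niche set is the better candidate for more budget.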

Promoter Scoring Model for Launch Readiness

A lightweight promoter scoring model helps teams decide whether launch demand is strong enough to scale. Without a score, teams often overreact to raw signup growth and miss weak activation indicators.

Use a simple 100-point framework with weighted signals. Start with default weights, then recalibrate once your data quality improves.

- 30 points: message comprehension quality in replies

- 25 points: follow-up engagement consistency

- 20 points: referral or share behavior

- 15 points: launch-intent actions such as calendar requests or onboarding steps

- 10 points: low objection severity and frequency (fewer, milder objections earn more points)

Review scores weekly by traffic source and by cohort. A stable or improving score usually indicates real demand quality, while a declining score signals expectation mismatch that should be fixed before budget expansion.
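The weighted framework translates directly into a function, assuming each signal has already been normalized to a 0.0 to 1.0 scale (for example, the share of replies that restate the value proposition correctly). The signal names are hypothetical; the weights are the defaults listed above, and objections are expressed as `low_objection` so that a higher value is always better.

```python
WEIGHTS = {
    "comprehension": 30,
    "engagement": 25,
    "referral": 20,
    "launch_intent": 15,
    "low_objection": 10,  # 1.0 = few, mild objections; 0.0 = frequent, severe ones
}


def promoter_score(signals, weights=WEIGHTS):
    """Weighted 0-100 score from signals normalized to 0.0-1.0."""
    total = 0.0
    for name, weight in weights.items():
        value = min(max(signals.get(name, 0.0), 0.0), 1.0)  # clamp to [0, 1]
        total += weight * value
    return round(total, 1)


example = {
    "comprehension": 0.8,
    "engagement": 0.6,
    "referral": 0.3,
    "launch_intent": 0.4,
    "low_objection": 0.5,
}
```

`promoter_score(example)` gives 56.0 (24 + 15 + 6 + 6 + 5). Computing it separately per source and cohort supports the weekly review.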

A practical decision rule is to scale only when promoter score trends remain stable for two consecutive weeks. If score drops while signups rise, pause volume growth and fix promise clarity first.
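The decision rule can also be made explicit. This sketch interprets "stable" as no week-over-week decline larger than an assumed tolerance across the two most recent changes, which requires three weekly scores; the tolerance value and return labels are hypothetical.

```python
def scaling_decision(weekly_scores, weekly_signups, tolerance=2.0):
    """Apply the two-week rule to weekly promoter scores and signup counts."""
    if len(weekly_scores) < 3 or len(weekly_signups) < 2:
        return "collect more data"
    last_change = weekly_scores[-1] - weekly_scores[-2]
    prev_change = weekly_scores[-2] - weekly_scores[-3]
    signups_rising = weekly_signups[-1] > weekly_signups[-2]
    # Score falling while signups rise: stop adding volume and fix the promise.
    if last_change < -tolerance and signups_rising:
        return "pause volume growth"
    # Two consecutive weeks without a meaningful drop: safe to scale.
    if last_change >= -tolerance and prev_change >= -tolerance:
        return "scale"
    return "hold"
```

For example, scores of 60, 61, 62 return `"scale"`, while scores of 62, 63, 55 with signups jumping from 120 to 200 return `"pause volume growth"`.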

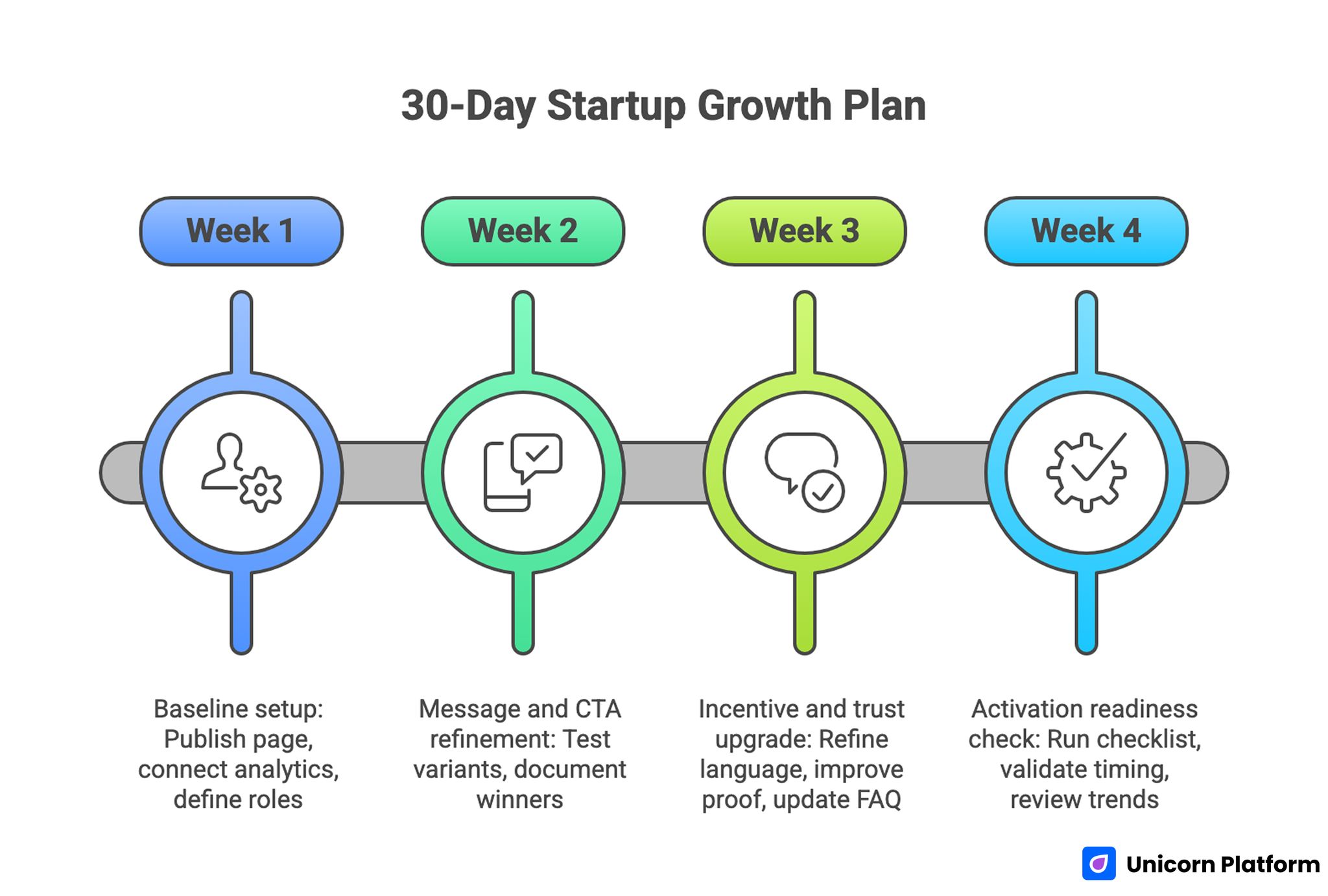

30-Day Operating Plan

30-Day Startup Growth Plan

Week 1: baseline setup

Publish one focused page, connect analytics and lead tagging, and ensure the first follow-up message matches the page promise. Define owner roles for page updates and follow-up messaging so execution does not stall.
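Lead tagging can be as simple as copying campaign parameters from the landing URL onto each signup record. This sketch assumes your links carry the common UTM parameters (`utm_source`, `utm_medium`, `utm_campaign`) and uses only Python's standard `urllib.parse`; `tag_lead` and the `"(none)"` placeholder are hypothetical.

```python
from urllib.parse import parse_qs, urlparse

UTM_KEYS = ("utm_source", "utm_medium", "utm_campaign")


def tag_lead(email, landing_url):
    """Attach UTM tags from the landing URL to a new lead record."""
    params = parse_qs(urlparse(landing_url).query)
    tags = {key: params.get(key, ["(none)"])[0] for key in UTM_KEYS}
    return {"email": email, **tags}
```

Tagging every lead at signup is what makes the later per-source quality metrics possible; an explicit `"(none)"` makes untagged traffic visible instead of silently merging it into one bucket.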

Week 2: message and CTA refinement

Test one headline variant and one CTA variant, then keep only the winning pattern tied to quality signals. Document why the winner performed better so the logic can be reused in later campaigns.

Week 3: incentive and trust upgrade

Refine incentive language, improve progress proof visibility, and update the FAQ using real objections from waitlist replies. At this stage, remove weak sections that create doubt without adding clarity.

Week 4: activation readiness check

Run the launch-week checklist, validate follow-up sequence timing, and review promoter-quality trends before scaling traffic. Finalize escalation paths for support questions so response quality stays high during traffic peaks.

This cadence keeps execution realistic for lean teams while preserving testing discipline. It also reduces context switching by giving each week a clear operational focus.

90-Day Scale Plan

Month one should stabilize conversion architecture and baseline communication consistency. Month two should introduce source-specific variants with controlled messaging differences. Month three should formalize governance for experiment review, proof refresh, and launch-readiness scoring.

The 90-day horizon also gives teams enough time to identify weak audience segments and reallocate effort. This prevents long-term growth plans from being anchored to low-quality top-funnel channels.

Scale should follow signal stability, not calendar pressure. Teams that scale early with weak promoter quality often face expensive correction cycles after launch.

Cross-functional alignment is essential. Product, growth, and support teams should agree on promise language, rollout expectations, and launch support constraints before expanding acquisition.

Launch-Week Readiness Checklist

The week before launch is where quality usually slips. Teams increase traffic while unresolved clarity and routing issues remain in place.

Use this checklist before scaling traffic. Treat it as a release gate instead of a suggestion list.

- confirm consistency between ad preview, page hero, and welcome email

- validate form routing and source tagging

- verify thank-you page and first follow-up timing

- update FAQ with top current objections

- run one full mobile readability and CTA visibility pass
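Treating the checklist as a release gate means one unchecked item blocks scaling. A minimal sketch, assuming each owner records a pass/fail result per item; the item wording mirrors the list above, and `release_gate` is a hypothetical helper.

```python
CHECKLIST = [
    "ad preview, page hero, and welcome email use the same promise",
    "form routing and source tagging validated",
    "thank-you page and first follow-up timing verified",
    "FAQ updated with top current objections",
    "mobile readability and CTA visibility pass completed",
]


def release_gate(results):
    """Return (passed, blocking_items); any item not marked True blocks traffic scaling."""
    blocking = [item for item in CHECKLIST if not results.get(item, False)]
    return (not blocking, blocking)
```

Missing results count as failures by design, so a forgotten check blocks the release instead of passing silently.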

A readiness gate like this prevents avoidable failures and improves activation confidence. It creates a shared definition of launch quality across marketing, product, and support.

For teams building this from a free-stack baseline, this startup-site launch framework with free makers is useful for structuring launch operations without heavy technical overhead.

Common Mistakes and Fast Fixes

Mistake 1: broad promise with weak specificity

Fix by naming one audience, one problem, and one near-term outcome in the first screen. This immediately improves relevance and reduces confusion-driven bounce.

Mistake 2: urgency language without credibility

Fix by pairing urgency with concrete progress proof and transparent timeline context. Credibility turns urgency from pressure into confidence.

Mistake 3: traffic scaling before fit validation

Fix by using promoter-quality metrics to gate budget expansion. Quality thresholds reduce expensive post-launch correction cycles.

Mistake 4: long forms on first touch

Fix by reducing required fields and collecting deeper context later. Ask only for information that directly improves the next step.

Mistake 5: disconnected page and email messaging

Fix by aligning follow-up copy with the exact value promise on-page. Message consistency is one of the fastest ways to improve trust.

Mistake 6: no experiment documentation

Fix by logging every major test with quality metrics and decision outcomes. Documentation quality determines whether your wins are repeatable.

FAQ: How to Build First-Promoter Growth Before Launch

What is the difference between an early signup and a first promoter?

An early signup completes a form. A first promoter shows downstream behavior such as referral, feedback, or activation readiness.

Should we optimize for waitlist size first?

No. Optimize for quality first. Large low-intent lists usually underperform at launch and create misleading confidence.

What is the minimum viable prelaunch page?

A clear promise, one trust signal, one CTA, and one expectation block are enough to start testing. You can add depth later after real objections appear.

How often should we update prelaunch pages?

Weekly updates are usually enough if each change is measured and documented. Faster changes without measurement usually produce noisy outcomes.

Is a referral incentive always necessary?

Not always. Use referrals when network effects matter. For some products, early access clarity converts better than incentive complexity.

What signal tells us to scale traffic?

Stable conversion plus stable promoter-quality behavior across at least two weeks is a good scaling signal. Scaling before this window is usually riskier than it looks.

How many variants should we run early?

Start with one baseline and one source-specific variant. Too many variants early often produce noisy data and weak conclusions.

Can free tools handle serious prelaunch campaigns?

Yes, if process quality is high. Clear architecture and disciplined testing matter more than tool price for early-stage teams.

What is the most common launch-week mistake?

Scaling traffic before message consistency and routing quality are fully verified. The mistake often looks like growth until activation results arrive.

How do we protect quality as the team grows?

Use one shared template, fixed QA gates, and a documented weekly review process. Shared standards make growth quality less dependent on individual contributors.

Final Takeaway

Prelaunch is not a waiting period. It is your first measurable growth cycle where positioning, demand quality, and activation discipline are established.

Start with first-promoter quality, not signup vanity. Build one clear page, one clear incentive, and one clear follow-up system, then improve through weekly controlled tests.

With Unicorn Platform, startup teams can run this process quickly through reusable structures, rapid updates, and practical iteration without heavy technical bottlenecks. The teams that treat prelaunch as an operating system, not a page draft, usually arrive at launch with stronger momentum and clearer learning.