Table of Contents

- Why SEO Evidence Often Fails to Produce Business Movement

- On-Page Structure for Human and AI Retrieval

- 30-60-90 Day Rollout Plan

- Common Failure Patterns and Fixes

- FAQ

Most SEO programs do not fail because teams lack tools. They fail because evidence does not become action quickly enough. Query data is exported, dashboards are updated, and reports are shared, yet page quality and commercial outcomes barely move.

The gap is operational. Analysis lives in one workflow while publishing, technical fixes, and conversion updates live in separate workflows. By the time decisions are approved, search behavior has already shifted and content plans are stale.

An effective SEO model in 2026 has to behave like an operating system. It should convert signals into prioritized tasks, convert tasks into page changes, and convert page changes into measurable business outcomes on a fixed cadence.

When teams need a tighter way to connect optimization work to pipeline goals, the framework in "Aligning Website Optimization with Your Business Goals" is useful as a planning baseline.


Quick Takeaways

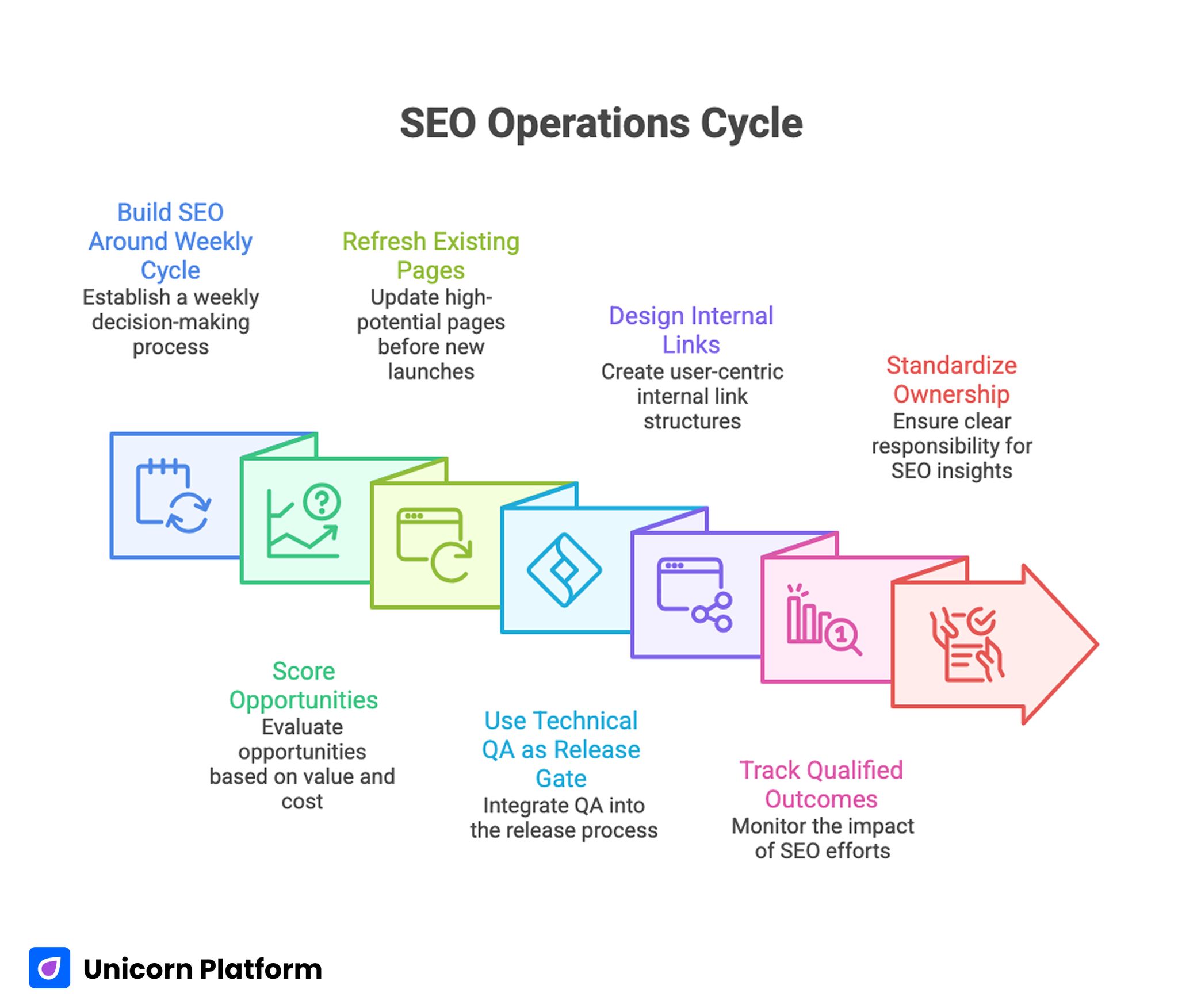

SEO Operations Cycle

- Build SEO around a weekly decision cycle, not monthly reporting cycles.

- Score opportunities by intent value, expected impact, and implementation cost.

- Refresh high-potential existing pages before launching large net-new batches.

- Use technical QA as a release gate, not as a quarterly cleanup task.

- Design internal links as user decision paths, not only crawl pathways.

- Track qualified outcomes, not only ranking movement.

- Standardize ownership so insights do not expire before execution.

Why SEO Evidence Often Fails to Produce Business Movement

Many organizations still run SEO as a sequence of disconnected activities. Analysts produce opportunity lists, writers create content from broad briefs, and developers fix issues when they have capacity. This structure creates delay and weak accountability.

Weak handoff is usually the first bottleneck. A keyword or query insight enters a spreadsheet, but nobody is explicitly responsible for turning that insight into page architecture, proof requirements, internal linking, and call-to-action placement. The insight stays interesting but not actionable.

A second bottleneck is measurement bias. Teams celebrate visibility growth without testing whether the traffic quality improved. If intent quality is weak, ranking gains can consume resources while contributing little to pipeline or revenue progression.

Treat Search Results as a Live Demand Map

Search results reveal active market language, intent maturity, and expected content depth. Reviewing high-performing pages by structure and decision flow helps teams understand what users are currently rewarding with clicks and time.

Focus on patterns that affect user decisions: opening clarity, section sequencing, trust placement, implementation depth, and answer completeness. These patterns matter more than superficial style details.

Pattern analysis should end with explicit execution choices. For every target page, decide what to keep, what to deepen, and what to replace. A pattern log without implementation decisions becomes a research archive, not a growth lever.

Build Query Clusters Around User Tasks

Isolated keyword planning creates cannibalization and fragmented user journeys. Cluster planning works better because it groups queries by user task and buying stage.

A practical cluster model includes four task types: learn, compare, validate, and decide. Each cluster gets one primary page role and one secondary support role. This prevents multiple pages from competing for the same intent while missing adjacent questions.
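
The one-primary-page rule can be checked mechanically. The sketch below is illustrative only: the `(url, cluster, task, role)` tuple layout and the function name `find_primary_conflicts` are assumptions, not part of any tool. It reports clusters where more than one page claims the primary role.

```python
from collections import defaultdict

# The four task types named above.
TASK_TYPES = {"learn", "compare", "validate", "decide"}

def find_primary_conflicts(pages):
    """Return {cluster: [urls]} for clusters with more than one primary page.

    `pages` is an iterable of (url, cluster, task, role) tuples, where
    role is "primary" or "support" (a hypothetical inventory format).
    """
    primaries = defaultdict(list)
    for url, cluster, task, role in pages:
        if task not in TASK_TYPES:
            raise ValueError(f"unknown task type: {task!r}")
        if role == "primary":
            primaries[cluster].append(url)
    # Only clusters with competing primary pages are a cannibalization risk.
    return {c: urls for c, urls in primaries.items() if len(urls) > 1}

inventory = [
    ("/pricing-guide", "pricing", "compare", "primary"),
    ("/pricing-faq", "pricing", "validate", "support"),
    ("/pricing-explained", "pricing", "learn", "primary"),
    ("/migration-checklist", "migration", "decide", "primary"),
]
conflicts = find_primary_conflicts(inventory)
```

Here `conflicts` contains only the `pricing` cluster, because two of its pages are marked primary.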

When building clusters, include operational terms that real buyers use during evaluation. Product features alone are usually too narrow. Queries related to setup effort, migration risk, governance, pricing logic, and implementation timelines often carry stronger intent quality.

Opportunity Scoring for Priority Decisions

A useful scoring model combines three variables: demand potential, business proximity, and delivery effort. Demand potential estimates reachable visibility. Business proximity estimates how close the query is to a valuable action. Delivery effort estimates time and complexity for high-quality execution.

Do not rank opportunities only by search volume. High volume with weak intent can create expensive content programs that look successful in traffic reports and underperform in commercial terms.

Simple scoring logic is enough if applied consistently. A weighted 1-5 score for each variable can produce a defensible queue and reduce subjective prioritization debates.
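
As a minimal sketch of such a model, the example below scores each opportunity on the three variables and sorts the queue. The weights are hypothetical, since no specific values are prescribed here, and delivery effort is inverted so that cheaper work ranks higher.

```python
from dataclasses import dataclass

# Hypothetical weights; tune them to your own priorities.
WEIGHTS = {"demand": 0.3, "proximity": 0.5, "effort": 0.2}

@dataclass
class Opportunity:
    name: str
    demand: int     # demand potential, 1-5
    proximity: int  # business proximity, 1-5
    effort: int     # delivery effort, 1-5 (5 = most work)

def score(opp: Opportunity) -> float:
    """Weighted score in the 1-5 range; higher means do it sooner."""
    for value in (opp.demand, opp.proximity, opp.effort):
        if not 1 <= value <= 5:
            raise ValueError("each variable must be scored from 1 to 5")
    ease = 6 - opp.effort  # invert effort so low effort scores high
    total = (WEIGHTS["demand"] * opp.demand
             + WEIGHTS["proximity"] * opp.proximity
             + WEIGHTS["effort"] * ease)
    return round(total, 2)

def build_queue(opportunities):
    """Return opportunities sorted from highest to lowest score."""
    return sorted(opportunities, key=score, reverse=True)

queue = build_queue([
    Opportunity("high-volume guide", demand=5, proximity=2, effort=4),
    Opportunity("migration checklist", demand=3, proximity=5, effort=2),
])
```

With these assumed weights, the lower-volume migration checklist ranks ahead of the high-volume guide because it sits closer to a valuable action and costs less to deliver.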

Editorial Briefs That Drive Execution Quality

Most SEO briefs fail because they define topic and target terms but not decision requirements. Strong briefs specify what a page must help a reader decide and what evidence is required to support that decision.

A reliable brief template includes intent statement, audience context, required sections, evidence expectations, internal link targets, and conversion path assumptions. This structure makes writing and QA faster because the decision logic is explicit.

Teams that manage many contributors can reduce quality variance by enforcing one brief format per intent class. Educational pages, comparison pages, and decision-stage pages should not share identical brief depth.

Update Existing Assets Before Mass Publishing New Ones

Many teams overproduce net-new pages while proven assets decay. Existing pages often have historical authority and link equity that can be reactivated with focused updates.

Prioritize refresh candidates by three signals: ranking decay, intent mismatch, and proof staleness. A page that still attracts visibility but fails to convert often needs narrative and evidence updates before any technical overhaul.

In high-velocity workflows, incremental updates often outperform large rewrites. Improving first-screen relevance, section order, and FAQ depth can lift performance faster than rebuilding the entire page from zero.

Technical QA as a Mandatory Release Gate

Technical quality remains foundational for both discovery and conversion. Slow rendering, unstable layout, weak mobile interaction, and crawl/index errors can cancel editorial gains.

Technical QA needs to be a release requirement for every major update. Gate checks should include indexing status, canonical consistency, internal link integrity, Core Web Vitals trend, and mobile form usability.
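
One way to enforce the gate is a small function that blocks release unless every check passes. This is a sketch under assumptions: the check names mirror the list above, and the pass/fail results are expected to come from whatever audit tooling the team already uses.

```python
# Check names mirror the gate list above; the identifiers are illustrative.
REQUIRED_CHECKS = (
    "indexing_status",
    "canonical_consistency",
    "internal_link_integrity",
    "core_web_vitals_trend",
    "mobile_form_usability",
)

def release_gate(results: dict) -> tuple:
    """Return (approved, failed_checks) for one page update.

    `results` maps check name to True (pass) or False (fail).
    A check that was never run counts as failed, so skipped
    checks block the release instead of slipping through.
    """
    failed = [name for name in REQUIRED_CHECKS if not results.get(name, False)]
    return (not failed, failed)

approved, failed = release_gate({
    "indexing_status": True,
    "canonical_consistency": True,
    "internal_link_integrity": False,
    "core_web_vitals_trend": True,
})
```

In this example the update is not approved: one check failed and `mobile_form_usability` was never run.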

If the team needs a repeatable checklist model, the process in "How to Audit Your Website Like an SEO Pro" is a strong operational reference.

Internal Linking as Decision Architecture

Internal links are more than ranking signals. They guide readers from question discovery to confident action. Poor link pathways leave users at informational dead ends.

Design links by intent progression. Educational content should lead to deeper comparison or implementation pages when readers signal higher readiness. Comparison pages should route users toward practical decision resources and clear next actions.

Anchor text quality matters. Specific anchors that describe decision value tend to perform better than generic phrases because users understand why the next click is relevant.
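
A quick audit can surface generic anchors for rewriting. The sketch below assumes links are available as `(anchor_text, url)` pairs; the list of generic phrases is a hypothetical starting point, not an exhaustive standard.

```python
# Hypothetical list of generic phrases; extend it for your own site.
GENERIC_ANCHORS = {"click here", "read more", "learn more", "here", "more", "this page"}

def flag_generic_anchors(links):
    """Return the (anchor_text, url) pairs whose anchor is a generic phrase."""
    flagged = []
    for anchor, url in links:
        # Normalize case, whitespace, and trailing punctuation before matching.
        normalized = " ".join(anchor.lower().split()).strip(".!:")
        if normalized in GENERIC_ANCHORS:
            flagged.append((anchor, url))
    return flagged

links = [
    ("Click here!", "/pricing"),
    ("Compare migration effort by plan", "/migration-comparison"),
    ("Read  more", "/setup-guide"),
]
to_rewrite = flag_generic_anchors(links)
```

Only the first and third links are flagged. The second already tells the reader what decision the next page supports.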

On-Page Structure for Human and AI Retrieval

On-page optimization in 2026 requires dual clarity. Human readers need fast comprehension and credible answers. AI retrieval systems need explicit, well-structured information that resolves intent without ambiguity.

Use clear headings, concise section goals, and direct answers before elaboration. Dense prose without navigable structure reduces both user completion and retrieval utility.

Entity coverage should be practical rather than forced. Include terms and concepts that naturally appear in real decision workflows, then support them with examples and implementation detail.

Proof Design and Trust Sequencing

Trust content should appear where uncertainty appears. If a section makes an operational claim, nearby evidence should show how that claim was achieved and under what conditions.

Proof without context underperforms. Numbers are stronger when paired with time window, use case, and implementation conditions. Narrative examples are stronger when they include constraints and tradeoffs, not only outcomes.

For decision-stage pages, trust sequencing should move from relevance proof to implementation proof to risk reduction proof. This mirrors how buyers evaluate new solutions.

Content Calendar Design From Performance Signals

Calendar planning should not be separated from performance data. Use historical winners and decaying assets to define cadence and workload mix.

A three-part monthly split is often enough: refresh work, expansion work, and net-new content. Refresh work protects existing equity. Expansion work deepens high-performing clusters. Net-new work captures fresh opportunities.

Calendar commitments should include expected business effect, not only publication date. This keeps content velocity tied to strategic purpose.

Measurement Stack: Leading and Lagging Signals

Ranking movement is a leading signal, not a final outcome. Robust measurement includes visibility metrics, engagement metrics, and commercial metrics in one view.

Visibility metrics include impression trend, position trend, and page-level query diversity. Engagement metrics include depth of interaction, return behavior, and assisted navigation patterns. Commercial metrics include qualified submissions, meeting quality, and influenced pipeline movement.

Cross-functional review is essential. Marketing, product, and revenue teams should interpret the same scorecard to avoid metric silos and contradictory optimization decisions.

Operating Cadence for Continuous Improvement

Execution quality improves when cadence is predictable. Weekly cycles are usually the right unit for signal-to-action loops.

A simple weekly loop works well: review one cluster, ship one high-impact update, validate one measurable behavior change, and document one decision for the next sprint. This cadence prevents analysis backlog and keeps learning compounding.

Monthly reviews should focus on structural adjustments rather than tactical edits. Quarterly reviews should reassess cluster priorities against business strategy.

Governance and Role Ownership

SEO programs drift when ownership is vague. Assign explicit owners for insight generation, editorial execution, technical validation, and final QA.

Owner clarity should include decision rights. If nobody can approve a page update quickly, evidence loses value while waiting for consensus. Clear decision rights reduce cycle time and improve accountability.

Documentation must be lightweight and durable. Every major update should record hypothesis, change scope, observed outcome, and next step. This creates operational memory and prevents repeated mistakes.
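
A record this small fits in a single structured log line. The sketch below assumes a JSON-lines log; the `UpdateRecord` class and its field names are illustrative and simply mirror the four items above.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class UpdateRecord:
    page: str
    hypothesis: str
    change_scope: str
    observed_outcome: str = ""  # filled in after the review window
    next_step: str = ""

def to_log_line(record: UpdateRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)

record = UpdateRecord(
    page="/pricing-guide",
    hypothesis="Moving proof above the fold increases qualified submissions",
    change_scope="First-screen copy and one case study block",
)
line = to_log_line(record)
```

Appending one line per major update keeps the log easy to search without adding a separate documentation tool.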

Workflow Design for Unicorn Platform Teams

Unicorn Platform allows rapid publishing, which is a strategic advantage only when structure is disciplined. Fast releases without standardized QA usually increase content inconsistency.

A reliable platform workflow includes intent-class templates, release checklists, and post-launch review gates. Templates accelerate production while preserving section quality and conversion logic.

When content planning needs sharper format selection, the guidance in "What Is Content Marketing Nowadays and What Types Work the Best" can help map each cluster to suitable asset types.

Experimental Design for SEO and Conversion Alignment

Testing should isolate one friction source per cycle. Large multi-variable updates make attribution unreliable and slow down learning.

Good test candidates include first-screen framing, trust placement, FAQ depth, and internal link pathways. Each test should have a clear expected behavior change and a predefined success condition.

Test outcomes should inform future brief standards. When a pattern works repeatedly, it should become default guidance, not remain a one-time experiment.

Localization and Multi-Market Scaling

Global growth programs require more than translation. Different regions express intent differently and evaluate trust with different expectations.

Local cluster planning should include regional terminology, local proof references, and market-specific conversion assumptions. Technical localization quality remains essential, including URL structure, locale mapping, and internal linking consistency.

Localization workflows should preserve the same operating discipline as the core program: clear ownership, repeatable QA, and revenue-linked measurement.

AI Usage Policy for Editorial Quality

AI can accelerate analysis and draft generation, but quality declines when outputs bypass editorial review. The highest risk is semantic blandness: pages look complete while adding little decision value.

Set explicit AI guardrails. Drafts must pass claim verification, intent-fit review, structural clarity checks, and duplication scans before publication.

Human reviewers should focus on differentiating detail, evidence precision, and commercial relevance. These are the areas where automation alone is weakest.

30-60-90 Day Rollout Plan

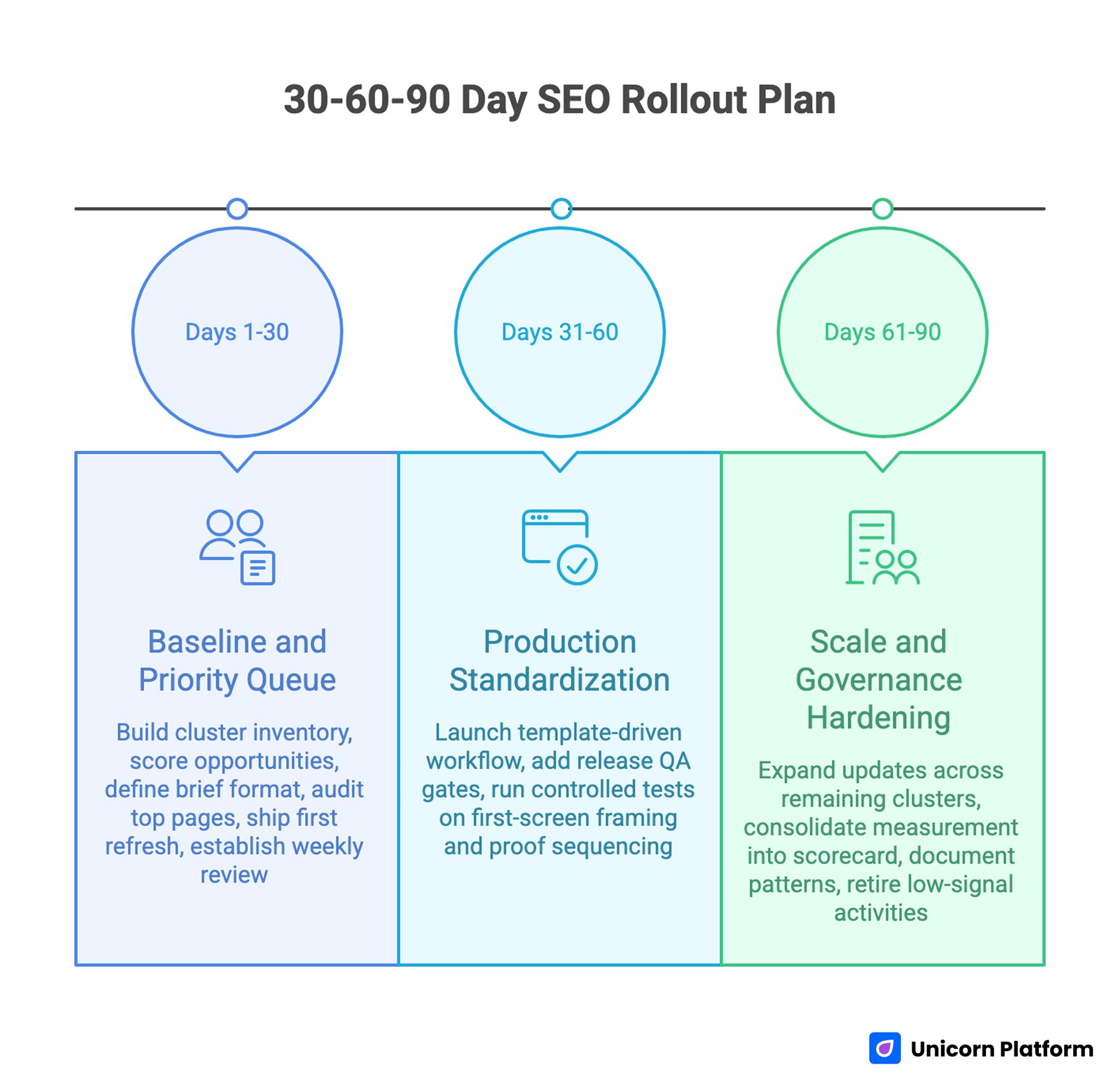

SEO Rollout Plan

Days 1-30: Baseline and Priority Queue

Build a cluster inventory, score opportunities, and define one standardized brief format for each intent class. Audit top pages for technical blockers and intent mismatch.

Ship the first high-impact refresh set and establish a weekly review ritual with clear owner assignments.

Days 31-60: Production Standardization

Launch a template-driven workflow in Unicorn Platform for key page types. Add release QA gates for technical health, internal links, and decision clarity.

Run controlled tests on first-screen framing and proof sequencing for top-priority pages.

Days 61-90: Scale and Governance Hardening

Expand updates across remaining high-value clusters using validated patterns. Consolidate measurement into one cross-functional scorecard.

Document working patterns and retire low-signal activities. By this stage, the system should prioritize quality of decisions over volume of output.

Common Failure Patterns and Fixes

Failure: Reporting Without Action Queue

Teams collect data but cannot name the next three updates. Fix this by converting every review into an owner-tagged priority list with due dates.

Failure: Keyword-Led Pages With Weak Intent Fit

Pages target terms but miss user decision needs. Fix this by rewriting briefs around task completion and evidence requirements.

Failure: Technical Debt Hidden by Traffic Growth

Visibility improves while UX and index quality degrade. Fix this by enforcing technical release gates on each major update.

Failure: Internal Links Without Journey Logic

Links exist but do not move users toward decisions. Fix this by mapping links to intent progression and validating anchor specificity.

Failure: Excess Net-New Production

Publishing pace is high while proven pages decay. Fix this with mandatory refresh allocation and maintenance scoring.

Failure: Fragmented Ownership

Insights, writing, and QA are handled by disconnected teams. Fix this with explicit role definitions and a shared weekly decision cadence.

Executive Scorecard and Decision Triggers

Leadership reviews are most effective when they focus on choices, not just status. A strong SEO scorecard should help leaders decide where to invest, what to pause, and which risks require immediate action.

A practical monthly scorecard can include three layers. The first layer tracks visibility and coverage, such as cluster-level impressions, position trend, and page-level demand concentration. The second layer tracks behavior quality, such as meaningful engagement, scroll completion for strategic sections, and decision-path link usage. The third layer tracks business signals, such as qualified lead influence, meeting readiness, and pipeline contribution by cluster.

Decision triggers should be predefined. For example, if a high-value cluster loses visibility for two consecutive cycles, the team should run a focused root-cause review before shipping net-new content in that area. If visibility rises while qualified outcomes decline, the team should prioritize intent and conversion diagnostics rather than additional ranking tactics.
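
The two example triggers can be written as explicit rules so they fire the same way every cycle. This is a sketch under assumptions: each list holds one value per review cycle, oldest first, and "loses visibility for two consecutive cycles" is read as two cycle-over-cycle declines in a row.

```python
def decision_triggers(visibility, qualified):
    """Return the actions triggered for one cluster.

    `visibility` and `qualified` are per-cycle values, oldest first
    (for example impressions and qualified submissions).
    """
    actions = []
    # Two consecutive declines need three data points to detect.
    if len(visibility) >= 3 and visibility[-1] < visibility[-2] < visibility[-3]:
        actions.append("root_cause_review")
    # Visibility up while qualified outcomes fall in the latest cycle.
    if (len(visibility) >= 2 and len(qualified) >= 2
            and visibility[-1] > visibility[-2]
            and qualified[-1] < qualified[-2]):
        actions.append("intent_and_conversion_diagnostics")
    return actions

declining = decision_triggers([1200, 1100, 950], [30, 29, 28])
mismatch = decision_triggers([900, 1400], [25, 18])
```

The first call triggers a root-cause review and the second triggers intent and conversion diagnostics. A cluster with stable numbers returns an empty list.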

Budget allocation becomes more defensible when tied to these triggers. Resources can move toward refresh programs, technical debt reduction, or deeper proof content based on observed impact, not internal preference. This keeps SEO investment aligned with revenue priorities during both growth periods and constrained quarters.

Scorecards should also include execution health indicators. If release lead time grows or QA failure rate increases, performance issues are likely to appear later even when current rankings look stable. Operational lag is often an early warning signal for future visibility and conversion decline.

FAQ: Evidence-Led SEO Operations in 2026

1) How often should clusters be re-evaluated?

A monthly pass is practical for most teams, with deeper quarterly reviews for strategy changes. High-volatility markets may require faster checks.

2) Should we prioritize new pages or refreshes?

Start with high-potential refreshes when existing pages already have visibility and links. Add net-new pages where intent coverage is missing.

3) What metric should guide priority first?

Use a combined view: business proximity plus reachable demand. Pure volume metrics usually create weak prioritization.

4) How large should a weekly update batch be?

Keep scope small enough for clean attribution. One meaningful update per priority cluster is often better than many shallow edits.

5) Can AI replace editorial review in this workflow?

No. AI accelerates drafts and analysis, but human review is required for evidence quality, differentiation, and strategic judgment.

6) What is the biggest mistake in internal linking?

Treating links as technical artifacts instead of decision guidance. Links should help readers move to the next useful action.

7) How do we reduce briefing inconsistency across writers?

Standardize brief templates by intent class and require explicit decision objectives in every brief.

8) When should a page be fully rebuilt?

Rebuild when intent, structure, and proof layers are all misaligned and incremental edits cannot restore clarity.

9) What makes a technical audit actionable?

Actionable audits assign owners, priority, and release impact for each issue instead of listing defects without execution context.

10) How do we prove SEO contributes to revenue?

Track qualified outcomes by page cluster and compare changes before and after specific updates. Tie reporting to pipeline indicators, not only rankings.
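
A before-and-after comparison by cluster can be as simple as the sketch below. It assumes qualified outcomes have already been counted per cluster for two equal-length windows around an update; the function name and data shape are illustrative. A change like this shows correlation with the update, not proof of causation, so it works best alongside the single-variable tests described earlier.

```python
def outcome_change(before, after):
    """Percent change in qualified outcomes per cluster.

    `before` and `after` map cluster name to a count of qualified
    outcomes. Clusters with no baseline return None, because a
    percent change from zero is undefined.
    """
    changes = {}
    for cluster in sorted(set(before) | set(after)):
        base = before.get(cluster, 0)
        current = after.get(cluster, 0)
        if base == 0:
            changes[cluster] = None
        else:
            changes[cluster] = round((current - base) / base * 100, 1)
    return changes

report = outcome_change(
    before={"pricing": 20, "setup": 10},
    after={"pricing": 25, "setup": 8, "migration": 3},
)
```

In this example the `pricing` cluster is up 25 percent, `setup` is down 20 percent, and `migration` has no baseline to compare against.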

Final Takeaway

Evidence-led SEO in 2026 is a systems problem, not a keyword problem. Teams that convert search signals into fast, high-quality page decisions will outperform teams that only publish more content.

With disciplined cadence, clear ownership, and strict QA in Unicorn Platform, SEO becomes a repeatable growth engine that improves both visibility and measurable business results over time.
