Table of Contents

- What to Evaluate Before Choosing an AI Builder

- Conversion Architecture That Works Across Segments

- 30-Day Execution Plan

- Common Mistakes and Fast Corrections

- FAQ

AI-assisted site creation has made publishing faster than ever, but speed alone has not solved growth problems. Many teams can produce pages quickly and still struggle with weak lead quality, low activation, or inconsistent brand trust.

The root issue is usually not tool capability but operating discipline. Teams focus on generation speed and skip message clarity, proof structure, and post-launch iteration systems.

A good builder helps you draft quickly. A high-performing workflow helps you decide clearly, publish intentionally, and improve continuously.

This guide explains how to do exactly that in 2026. It covers tool-selection criteria, no-code versus custom tradeoffs, practical launch architecture, weekly testing operations, and governance standards that keep page quality stable at scale.

If you are evaluating assistant-led page creation as part of your stack, this practical review of ChatGPT website builder workflows is useful for understanding where AI drafting helps and where human editing remains essential.


Quick Strategic Takeaways

Strategic Takeaways for Website Building

- Builder choice matters less than workflow quality after launch.

- First-screen clarity and proof placement influence conversion more than visual novelty.

- No-code paths are strongest when scope and ownership are explicit.

- One page objective per variant improves measurement and iteration speed.

- Free tools can validate direction but often limit long-term control.

- Small weekly improvements usually outperform occasional full redesigns.

- Page systems should be run like products, not one-time projects.

Why Fast Page Creation Still Fails in Practice

Most low-performing page programs follow the same pattern. Teams publish a polished layout, then assume traffic and conversion will align automatically. When performance underdelivers, they change multiple elements at once and lose diagnostic clarity.

Another failure pattern is template dependency. Teams use default structures without mapping content to user intent stages. The result is broad messaging that attracts clicks but fails to produce qualified actions.

A third issue is ownership ambiguity. If nobody owns conversion logic, trust language, and proof freshness, pages decay quickly even when design quality remains high.

Strong teams avoid these pitfalls by creating explicit operating rules before launch, then iterating with disciplined measurement.

What to Evaluate Before Choosing an AI Builder

Tool selection should be based on operational fit, not marketing claims. A practical scorecard prevents expensive switching later.

1) Editing speed for non-technical teams

Can marketing, product, or founder teams update critical sections quickly without waiting for engineering cycles? Fast editing is essential when campaigns or offers change weekly.

2) Structure control and reusable blocks

Can your team reuse tested sections while adjusting page narrative by audience? Reusability improves consistency and reduces rework.

3) Conversion component quality

Does the platform make CTA hierarchy, form design, and trust placement straightforward? Conversion operations should feel native, not bolted on.

4) SEO and metadata control

Basic SEO controls should be simple and reliable: title tags, heading structure, internal links, and performance hygiene.

5) Integration and analytics readiness

Can you connect analytics, CRM, and automation tools without fragile workarounds? Clean data flow is required for informed iteration.

6) Cost predictability at scale

Entry price is less important than renewal and growth-stage cost behavior. Hidden scaling costs can erase early savings.

7) Team workflow support

Can multiple contributors work with clear responsibility boundaries? Collaboration quality affects output consistency more than UI polish.

Free vs Paid AI Builder Paths

Free options can be useful in early validation, especially for teams testing new offers with limited budget. They are effective for directional learning when expectations are realistic.

The tradeoff appears when programs move beyond initial tests. Free plans often restrict branding control, integration depth, advanced SEO settings, and conversion-focused customization.

A practical decision rule: stay free during concept testing, and move to paid when low control starts reducing lead quality, conversion confidence, or iteration speed.

Budget discipline still matters. Paid tools are not automatically better unless your team has the process maturity to use their additional control effectively.

No-Code vs Custom Build: A Practical Decision Framework

The right path depends on business stage and risk tolerance, not ideology.

When no-code usually wins

- You need fast launch and fast feedback loops.

- Page performance depends more on messaging quality than bespoke interactions.

- Team bandwidth is limited and priorities change frequently.

When custom work is justified

- Your experience requires unique interactions with strict behavior logic.

- Compliance or security constraints demand deeper control.

- Competitive differentiation depends on custom front-end mechanics.

Hybrid model for most growth teams

Start no-code to validate narrative and conversion mechanics quickly, then invest in custom depth for proven paths that justify engineering effort.

This sequence usually reduces wasted effort and improves learning velocity.

Conversion Architecture That Works Across Segments

Conversion Architecture for High-Performing Pages

High-performing pages usually follow a stable decision sequence, even when visual style varies.

Step 1: high-specificity first screen

The first screen should make audience fit obvious. Visitors should quickly understand who the offer is for and what outcome to expect.

Step 2: mechanism clarity

Explain how value is delivered in concrete terms. Technical detail can be layered, but the core mechanism should be understandable without jargon.

Step 3: trust and proof

Show evidence near decision points. Proof should be concise, relevant, and connected to practical outcomes.

Step 4: objection resolution

Address predictable concerns before the final CTA. Common objections include effort, fit, timeline, and expected result quality.

Step 5: clear action path

Use one dominant CTA for each page objective. Too many competing actions reduce conversion confidence and measurement clarity.

This structure improves both reader comprehension and experiment reliability.

Small-Business Use Cases and Fit Rules

Small businesses often need lean execution with measurable outcomes, so builder selection should reflect operational reality.

Service businesses

Priority should be local relevance, clear offer framing, and straightforward lead capture. Simplicity and trust usually outperform design complexity.

E-commerce startups

Focus on product clarity, social proof quality, and checkout-path continuity. AI-generated descriptions can help speed output but require manual quality checks.

Agencies and consultants

Segment pages by vertical or service type. Reusable section blocks reduce production time while preserving positioning depth.

Creator-led businesses

Campaign pages should map to release cycles and audience intent. Fast publishing is useful only when narrative quality and CTA flow remain consistent.

A builder is the right fit when it supports these workflows without introducing avoidable friction.

Operating a Weekly Page Improvement System

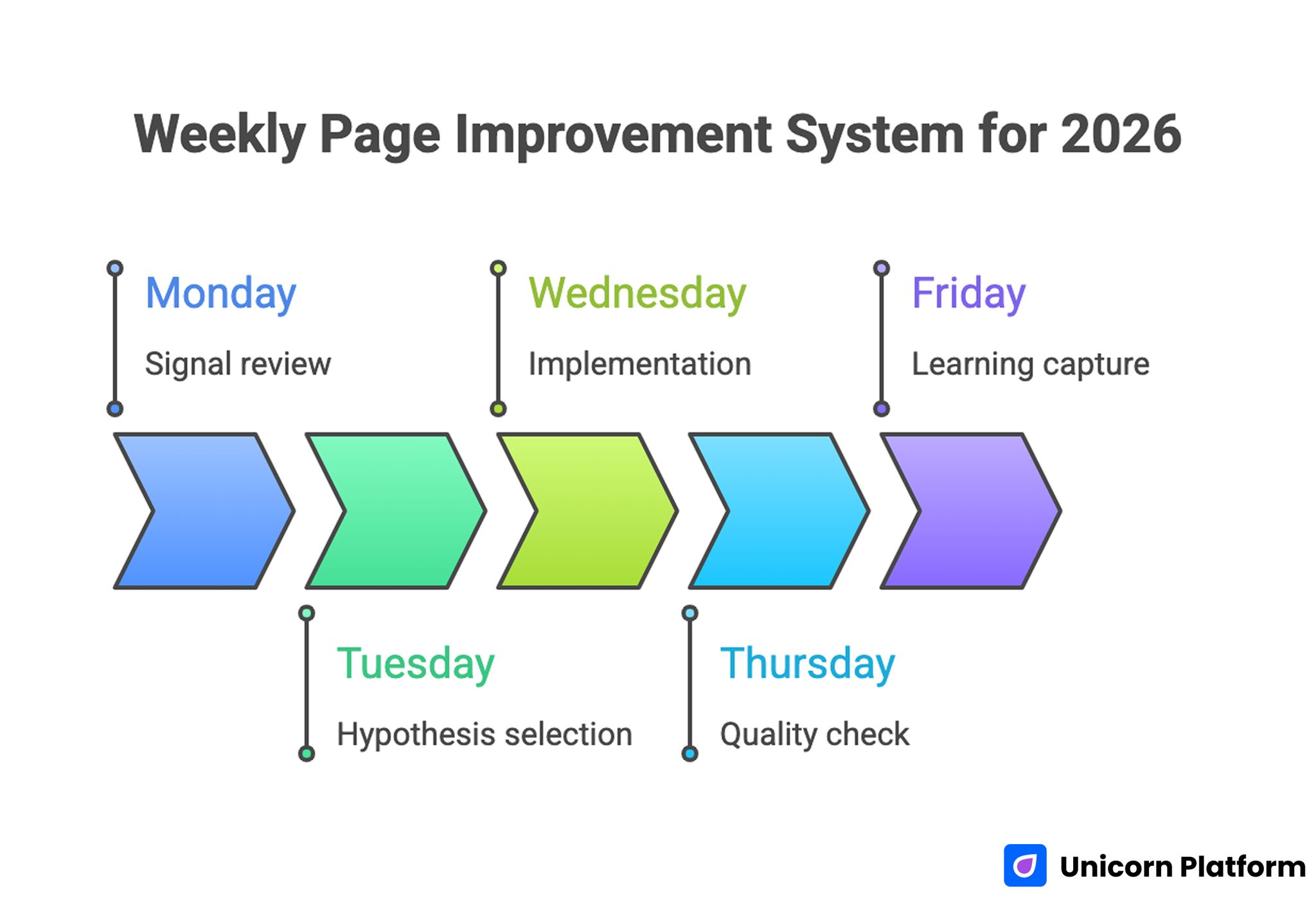

Weekly Page Improvement System for 2026

The biggest performance gains usually come after launch, not during initial build. A weekly operating rhythm keeps pages aligned with real audience behavior.

Structured experimentation is one of the most reliable ways to improve digital performance over time. As explained in Optimizely’s A/B testing guide, controlled experiments help teams isolate variables and understand which changes truly influence user behavior.

Monday: signal review

Review prior-week performance by section, source, and action stage. Focus on qualified conversion patterns, not raw clicks alone.

Tuesday: hypothesis selection

Choose one high-impact variable to test, such as hero framing, proof placement, or CTA language. Keep scope narrow for interpretable results.

Wednesday: implementation

Ship one controlled update and verify technical behavior. Avoid simultaneous structural changes that confound measurements.

Thursday: quality check

Validate readability, link behavior, form flow, and mobile rendering. Quality assurance should be systematic, not optional.

Friday: learning capture

Document results and decide whether to keep, revise, or archive the change. Cumulative learning is a core strategic advantage.

This cadence turns page programs into compounding assets rather than static web projects.
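One way to make the Friday keep, revise, or archive decision less subjective is a simple significance check on the week's single test. The sketch below uses a two-proportion z-test, which is an assumption on our part rather than a method prescribed by any particular testing tool. The function name and the sample counts are hypothetical.

```python
import math

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare conversion rates of control (a) and variant (b).

    Returns (z, two_sided_p_value) using a pooled standard error.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one visitor")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical week: 1,000 visitors per arm, 100 vs 150 conversions.
z, p = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z={z:.2f}, p={p:.4f}")
```

A small p-value suggests the difference is unlikely to be noise, but it says nothing about lead quality, so it works best alongside the qualified-conversion review from Monday.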

Message Quality Standards for AI-Generated Drafts

AI drafts are useful starting points, but they require strict editorial controls before publication.

Use this review checklist:

- Is the claim specific and audience-relevant?

- Is there evidence that supports each major promise?

- Is the page language aligned with actual offer scope?

- Is the next action clear and appropriate for user intent?

Reject drafts that rely on broad claims, repetitive phrasing, or unsupported outcomes. Fast publication without message quality discipline usually harms trust.

For teams scaling assistant-led production, this operational model for automated landing page workflows can help balance drafting speed with conversion accountability.

Trust Design for Conversion Stability

Trust is often treated as a visual layer, but it is a decision layer. Visitors evaluate whether promises are believable before they evaluate feature depth.

High-impact trust elements include:

- realistic outcome framing with scope context

- concise implementation transparency

- credible testimonials tied to specific use cases

- clear boundaries around what the product does not do

Trust language should appear near risk-heavy claims and high-commitment CTAs. Delayed trust information creates avoidable drop-off.

SEO + Conversion Alignment

Pages perform best when discoverability and conversion logic are planned together. SEO visibility without action clarity produces low-value traffic, while strong conversion without discoverability limits growth.

Use practical SEO fundamentals:

- precise title and heading hierarchy

- internal link routing by intent stage

- clean metadata and structured content blocks

- mobile performance and asset efficiency
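The title and heading checks in this list are easy to automate before each release. The following is a minimal sketch using only Python's standard library. The rules it enforces (exactly one title, exactly one h1, no skipped heading levels) are common conventions we are assuming here, not requirements from any specific builder, and `audit_headings` is a hypothetical helper name.

```python
from html.parser import HTMLParser

class HeadingAudit(HTMLParser):
    """Collects heading levels and counts <title> tags in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []
        self.title_count = 0

    def handle_starttag(self, tag, attrs):
        # HTMLParser passes tag names in lowercase.
        if tag == "title":
            self.title_count += 1
        elif len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def audit_headings(html: str) -> list[str]:
    """Return a list of human-readable issues; empty means the checks passed."""
    parser = HeadingAudit()
    parser.feed(html)
    issues = []
    if parser.title_count != 1:
        issues.append("expected exactly one <title>")
    if parser.levels.count(1) != 1:
        issues.append("expected exactly one <h1>")
    # Flag jumps such as h1 -> h3 that skip a level.
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            issues.append(f"heading jumps from h{prev} to h{cur}")
    return issues

page = "<html><head><title>Offer</title></head><body><h1>Offer</h1><h3>Proof</h3></body></html>"
print(audit_headings(page))
```

A check like this fits naturally into the Thursday quality pass, since it catches structural drift that visual review tends to miss.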

When teams need high-speed publishing with durable structure, this practical guide to building custom sites without coding helps connect rapid execution to long-term maintainability.

Site performance has a measurable impact on user behavior and conversion outcomes. According to Google research on Core Web Vitals, improvements in loading speed and visual stability often correlate with higher engagement and stronger conversion rates.

Metrics That Matter Beyond CTR

Top-of-funnel engagement is useful but incomplete. High-performing programs track both immediate and downstream quality.

Recommended metric set:

- CTA click-through by section

- form completion quality

- qualified lead share by source

- activation or onboarding progression

- retention-adjacent signal by segment

These metrics keep optimization grounded in business outcomes rather than vanity performance.
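As an illustration, qualified lead share by source can be computed from a plain export of lead records. This is a rough sketch that assumes each record carries a `source` label and a boolean `qualified` flag. Both field names are hypothetical and would need to match whatever your CRM actually exports.

```python
from collections import defaultdict

def qualified_share_by_source(leads: list[dict]) -> dict[str, float]:
    """Return the fraction of qualified leads for each traffic source."""
    totals: dict[str, int] = defaultdict(int)
    qualified: dict[str, int] = defaultdict(int)
    for lead in leads:
        totals[lead["source"]] += 1
        if lead["qualified"]:
            qualified[lead["source"]] += 1
    return {source: qualified[source] / totals[source] for source in totals}

# Hypothetical export: four leads from each of two sources.
sample = [
    {"source": "organic", "qualified": True},
    {"source": "organic", "qualified": True},
    {"source": "organic", "qualified": False},
    {"source": "organic", "qualified": False},
    {"source": "paid", "qualified": True},
    {"source": "paid", "qualified": False},
    {"source": "paid", "qualified": False},
    {"source": "paid", "qualified": False},
]
print(qualified_share_by_source(sample))
```

Comparing this share across sources is often more revealing than comparing raw lead counts, because a high-volume source with a low share may be costing more review time than it returns.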

Decision Matrix for Builder Selection Meetings

Tool discussions often stall because teams compare features without a shared weighting model. A simple decision matrix keeps selection meetings practical and prevents preference-driven deadlocks.

Score each shortlisted platform across weighted criteria that reflect your actual operating needs. Avoid equal weighting unless every factor truly has equal business impact.

Example weighting model:

- editing speed for non-technical contributors: 20%

- conversion controls and form flexibility: 20%

- integration reliability with analytics and CRM: 15%

- SEO and metadata control depth: 15%

- reusable block and template workflow: 10%

- collaboration and approval workflow support: 10%

- pricing predictability over 12 months: 10%
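The weighting model above translates directly into a small scoring helper. This sketch assumes each criterion is scored on a 1 to 5 scale, which is our assumption since the scale is not fixed by the model itself. The criterion keys, platform names, and scores are all hypothetical placeholders.

```python
# Weights taken from the example weighting model, expressed as fractions.
WEIGHTS = {
    "editing_speed": 0.20,
    "conversion_controls": 0.20,
    "integration_reliability": 0.15,
    "seo_control": 0.15,
    "reusable_blocks": 0.10,
    "collaboration": 0.10,
    "pricing_predictability": 0.10,
}

def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted total for one platform (scores assumed on a 1-5 scale)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)

# Hypothetical scores from one reviewer for two placeholder platforms.
platform_a = {
    "editing_speed": 4, "conversion_controls": 3, "integration_reliability": 5,
    "seo_control": 4, "reusable_blocks": 3, "collaboration": 4,
    "pricing_predictability": 2,
}
platform_b = {name: 3 for name in WEIGHTS}

for label, scores in (("Platform A", platform_a), ("Platform B", platform_b)):
    print(label, round(weighted_score(scores), 2))
```

Having each reviewer fill in their own score dictionary before the meeting makes it easy to compare rationale on the criteria where totals diverge most.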

Run the matrix with at least two use-case simulations, not a homepage-only test. Many tools look strong on first build and weaker when you test segmented campaign pages, repeated updates, and multi-contributor review.

A reliable meeting flow:

- agree on criteria and weights before testing

- run the same scenario in each tool

- score independently, then compare rationale

- resolve only high-impact scoring disagreements

- decide with owner accountability and timeline

This process reduces emotional debates and creates a documented decision trail that helps future re-evaluations.

Pricing, Scalability, and Hidden Cost Controls

Most teams underestimate long-term tool cost by focusing on entry pricing. Initial subscription cost is only one part of the operating model.

Look at pricing through four layers:

- platform subscription and renewal behavior

- seat growth and collaboration overhead

- integration and automation cost expansion

- rework cost from workflow limitations

The fourth layer is frequently missed. If a platform forces manual workarounds, hidden labor cost can exceed subscription differences quickly.

A practical 12-month cost projection should include:

- expected number of active contributors

- expected number of live page variants

- expected monthly experiment volume

- required integrations for reporting and lead routing

- support or migration contingencies

Use scenario bands for conservative, expected, and aggressive growth. This prevents budget surprises when campaigns scale faster than planned.
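A projection with scenario bands can be as simple as the sketch below. It covers the four pricing layers in a deliberately simplified form: a flat subscription, a per-seat price, a monthly integration cost, and rework hours priced at an hourly rate. Every figure and field name is a hypothetical placeholder, not real vendor pricing.

```python
from dataclasses import dataclass

@dataclass
class Scenario:
    name: str
    contributors: int                # expected active contributors (seats)
    monthly_integration_cost: float  # analytics, CRM, automation add-ons
    monthly_rework_hours: float      # manual workaround time caused by tool limits

def twelve_month_cost(
    scenario: Scenario,
    base_subscription: float,
    seat_price: float,
    hourly_rate: float,
    contingency: float = 0.0,
) -> float:
    """Estimate 12-month cost: recurring monthly layers plus a one-off contingency."""
    monthly = (
        base_subscription
        + scenario.contributors * seat_price
        + scenario.monthly_integration_cost
        + scenario.monthly_rework_hours * hourly_rate
    )
    return 12 * monthly + contingency

# Hypothetical bands; replace with your own planning numbers.
bands = [
    Scenario("conservative", contributors=2, monthly_integration_cost=20, monthly_rework_hours=2),
    Scenario("expected", contributors=3, monthly_integration_cost=50, monthly_rework_hours=4),
    Scenario("aggressive", contributors=6, monthly_integration_cost=120, monthly_rework_hours=10),
]
for band in bands:
    total = twelve_month_cost(band, base_subscription=30, seat_price=10, hourly_rate=40, contingency=500)
    print(f"{band.name}: {total:.0f}")
```

In this toy setup the rework line grows faster than the subscription line, which is the pattern the fourth pricing layer warns about.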

Scalability controls should also include content operations. As page count rises, quality drift becomes a larger risk than tool cost alone.

Core scalability safeguards:

- shared component library with approved section patterns

- fixed naming conventions for campaign and segment pages

- scheduled proof refresh intervals by page type

- clear archive rules for underperforming pages

- one release checklist for all contributors

These safeguards make scaling safer and faster because teams spend less time rebuilding decisions from scratch.

Content Operations for Multi-Page Programs

When page programs move from a few launches to dozens of active assets, content operations determine whether conversion quality improves or degrades.

Set up three operational layers:

- production layer: draft, review, publish

- optimization layer: test, evaluate, retain or remove

- governance layer: approve claims, refresh proof, retire stale sections

Each layer should have explicit ownership and turnaround expectations. Without this structure, changes pile up, experiments overlap, and result interpretation becomes unreliable.

A useful monthly operating rhythm:

- week 1: quality audit of top-performing pages

- week 2: controlled test rollout on priority segments

- week 3: trust and proof refresh cycle

- week 4: archive and template update decisions

This rhythm keeps programs lean while preserving conversion reliability as volume grows.

30-Day Execution Plan

Week 1: baseline and objective lock

Define page objective, audience, and success metric. Audit current narrative flow and remove low-value sections.

Week 2: trust and mechanism upgrades

Improve first-screen specificity, mechanism clarity, and proof placement. Validate mobile readability and form usability.

Week 3: controlled experiments

Run one major test and one minor test with clear hypotheses. Keep experiment logs concise and comparable.

Week 4: scale or prune

Scale winning patterns, archive weak variants, and update reusable section templates for future launches.

This plan is intentionally focused. Teams that limit simultaneous changes usually learn faster and reduce rework.

90-Day Scale Model

Days 1-30: stabilize baseline quality

Lock narrative spine, trust modules, and CTA hierarchy. Ensure measurement integrity before volume scaling.

Days 31-60: expand segment coverage

Create adjacent audience variants while preserving structural consistency. Adapt proof and objections for each segment.

Days 61-90: operationalize governance

Formalize ownership, review cadence, and release standards. Publish only changes that pass quality and evidence gates.

The goal is predictable growth behavior, not endless redesign cycles.

Common Mistakes and Fast Corrections

Mistake: publishing generic first drafts

Correction: enforce editorial review for specificity, evidence, and scope clarity before launch.

Mistake: running many tests at once

Correction: limit experiments to one major variable per cycle for clean attribution.

Mistake: relying on one broad homepage

Correction: build intent-specific pages for key audiences and traffic sources.

Mistake: overloading first screen with features

Correction: prioritize audience fit, outcome, and one clear next step.

Mistake: weak trust sequencing

Correction: move proof and risk-boundary language closer to major claims.

Mistake: no documentation discipline

Correction: keep weekly release notes with hypothesis, change, metric, and decision outcome.
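For teams that want the release notes to stay comparable week over week, a fixed record shape helps. The sketch below assumes four text fields matching the correction above, plus a decision limited to keep, revise, or archive. The class name, field names, and sample entry are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date

ALLOWED_DECISIONS = {"keep", "revise", "archive"}

@dataclass(frozen=True)
class ReleaseNote:
    week_of: date
    hypothesis: str
    change: str
    metric: str
    decision: str

    def __post_init__(self) -> None:
        # Reject free-form decisions so the log stays filterable.
        if self.decision not in ALLOWED_DECISIONS:
            raise ValueError(f"decision must be one of {sorted(ALLOWED_DECISIONS)}")

def to_json_line(note: ReleaseNote) -> str:
    """Serialize one note as a single JSON line for an append-only log file."""
    record = asdict(note)
    record["week_of"] = note.week_of.isoformat()
    return json.dumps(record)

# Hypothetical entry for one weekly cycle.
note = ReleaseNote(
    week_of=date(2026, 1, 5),
    hypothesis="Outcome-led hero copy raises qualified form starts",
    change="Replaced feature list in hero with one outcome statement",
    metric="qualified form starts per 100 visits",
    decision="keep",
)
print(to_json_line(note))
```

Appending one line per cycle to a shared file is usually enough. The point is a consistent shape, not a dedicated tool.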

Team Governance for Sustainable Output

Quality drift becomes inevitable when ownership is unclear. Assign clear roles for message approval, proof updates, analytics quality, and release QA.

Even in small teams, explicit ownership improves speed because decisions move through predictable paths. Governance is not bureaucracy when it prevents avoidable rework.

A practical ownership map:

- content owner: narrative clarity and scope accuracy

- growth owner: experiment design and KPI tracking

- proof owner: evidence freshness and claim validation

- QA owner: release checks and technical reliability

This structure supports fast execution with lower error rates.

FAQ: AI Website Builder Operations in 2026

What is the main benefit of AI-assisted site creation?

The main benefit is faster drafting and iteration. Results improve only when speed is paired with structured editing and measurement.

Are free builder tools enough for serious growth?

They are often enough for early validation. As complexity grows, limited control and integrations usually become bottlenecks.

How should small businesses choose between builders?

Choose based on editing speed, conversion controls, integration reliability, and cost behavior at your expected scale.

How many page variants should we run at once?

Run only as many variants as your team can measure and maintain responsibly. Quality and clarity are more valuable than raw variant volume.

What is the best way to improve conversion quickly?

Improve first-screen specificity, tighten proof relevance, and simplify CTA hierarchy. These changes often produce immediate gains.

Should we prioritize design polish or message clarity?

Message clarity should come first. Strong design cannot compensate for an unclear value proposition or weak trust signals.

How often should pages be updated?

A weekly update rhythm is practical for most teams, with monthly strategic reviews for deeper direction decisions.

What should we do when traffic is high but leads are weak?

Re-check intent match, qualification language, and proof alignment. High traffic with weak fit usually indicates message mismatch, not only channel issues.

Can no-code workflows support advanced operations?

Yes, if governance and measurement are mature. No-code speed becomes strategic when releases are disciplined and evidence-driven.

What is the biggest long-term mistake teams make?

Treating page launches as one-time milestones. Sustainable growth comes from operating pages as evolving systems with clear ownership.

Before scaling paid distribution, run a short stabilization window with fixed traffic sources and no major design changes. This gives cleaner diagnostic data on message clarity, trust placement, and form flow quality. Teams that stabilize first usually spend media budget more efficiently because they scale pages that already convert with consistent lead quality.

Final Takeaway

AI-assisted builders are valuable when they improve operational speed and decision quality together. Publishing quickly is useful only when each release strengthens clarity, trust, and conversion reliability.

With Unicorn Platform, teams can run that model effectively: fast drafts, structured refinement, intent-specific variants, and repeatable optimization cycles that compound over time.