Table of Contents

- The Real Opportunity: Build a Connected Marketing System

- 30-Day Execution Plan for Mid-Size Teams

- Common Mistakes and Fast Corrections

- FAQ

Marketing teams no longer fail because they lack channels or tools. They fail because execution is fragmented across teams, systems, and timelines. Paid media, SEO, content, and landing pages often move in parallel with limited coordination, so performance gains are slower than expected.

Artificial intelligence can improve this situation, but only when teams treat it as an operating layer rather than a shortcut for content generation. The most effective organizations use AI to improve decision quality, reduce cycle time, and tighten message-to-intent alignment across the full funnel.

That shift is why operational design matters more than tool count. A large stack with weak process usually creates noisy output and low-confidence decisions. A simpler stack with stronger workflow discipline usually drives better revenue efficiency.

Within Unicorn Platform, this model becomes easier to implement because teams can ship, test, and revise conversion pages quickly while preserving layout consistency and brand control. That speed matters because learning value drops when updates are delayed for weeks.

Quick Takeaways

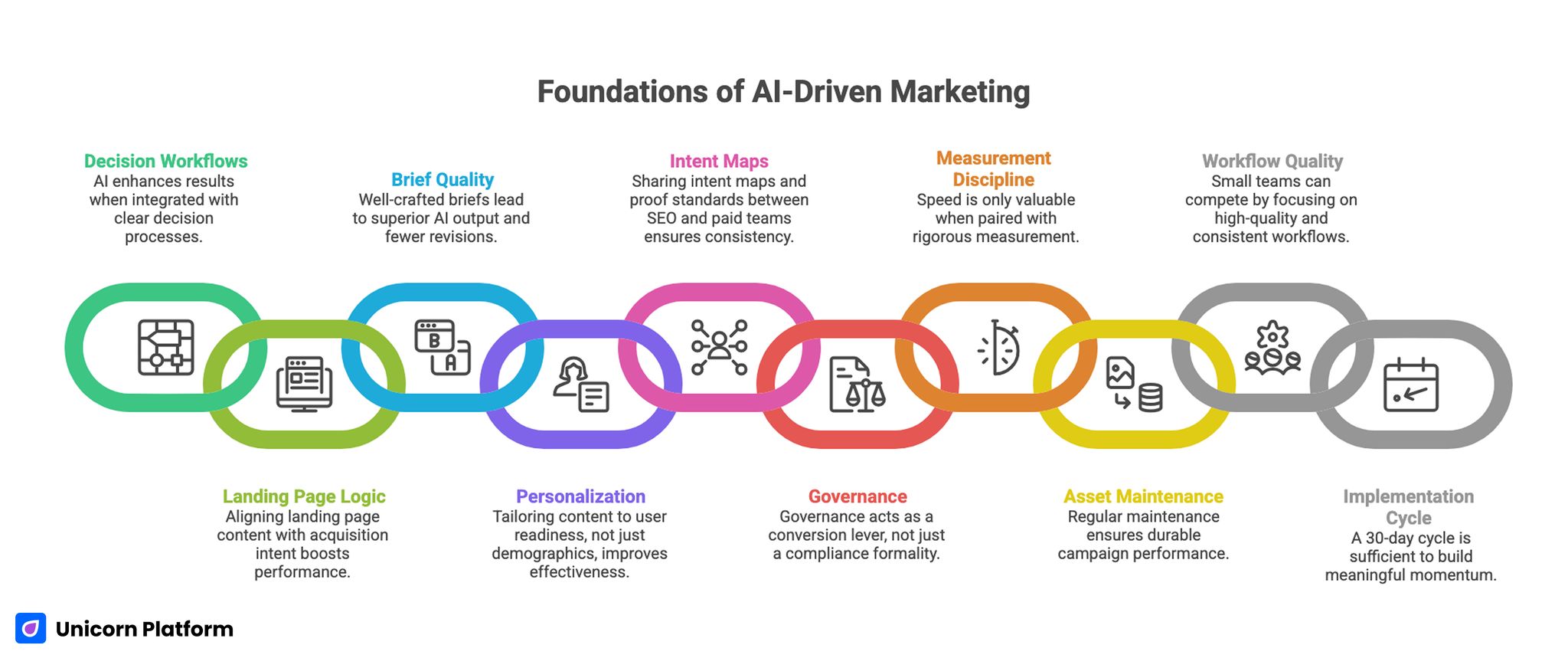

Foundation of AI-Driven Marketing

- AI improves results most when linked to clear decision workflows.

- Channel performance rises when landing page logic matches acquisition intent.

- Better briefs produce better AI output and reduce revision cycles.

- Personalization works best when it reflects readiness stage, not only demographics.

- SEO and paid teams should share intent maps and proof standards.

- Governance is a conversion lever, not a compliance formality.

- Speed is useful only when paired with measurement discipline.

- Asset maintenance drives durable performance beyond campaign spikes.

- Small teams can compete by increasing workflow quality and consistency.

- A 30-day implementation cycle is enough to create meaningful momentum.

Why Many AI Marketing Programs Underperform

The most common mistake is tool-first adoption. Teams deploy writing assistants, ad optimizers, analytics automation, and prompt workflows without redesigning how decisions move from insight to publication.

This creates a hidden tax on execution. More ideas are generated, but fewer high-quality decisions are shipped because priorities are unclear and ownership is distributed loosely.

A second issue is false productivity. Teams celebrate output metrics such as content volume, ad variation count, or experiment count, while business outcomes remain unchanged.

A third issue is weak message continuity. Channel promises and post-click experiences diverge, reducing trust and lowering conversion quality even when traffic is healthy.

The Real Opportunity: Build a Connected Marketing System

AI creates leverage when every channel contributes to one learning system. Search behavior should inform landing page framing. Landing page test outcomes should inform ad copy and audience targeting. Sales feedback should update content priorities.

In this model, every asset is both a conversion surface and a learning instrument. Teams stop treating campaigns as isolated launches and start treating them as iterative programs.

Unicorn Platform supports this approach by reducing the gap between strategy updates and live page changes. Fast implementation keeps learning loops active while the signal is still fresh.

Strategy Before Automation

Automation amplifies whatever strategy already exists. If positioning is unclear, automation scales confusion. If value hierarchy is clear, automation scales consistency.

A practical strategic baseline includes a short set of non-negotiables. Teams should align on them before campaign production starts:

- One primary audience segment per campaign theme.

- One high-priority problem statement.

- One measurable action outcome.

- One clear proof standard for claims.

This baseline should be approved before generation workflows begin. Doing so reduces low-value variations and improves editorial confidence.

Audience Intent Mapping as the Core Workflow

Keyword or audience lists are not enough. Teams need intent maps that connect user motivation to page purpose and CTA sequence.

Intent mapping can be structured around three stages. Each stage should map to one clear page objective:

- exploration intent: users compare options and seek orientation,

- evaluation intent: users validate fit and credibility,

- decision intent: users need clear next-step confidence.

Each stage requires different copy depth, proof placement, and conversion friction. AI can accelerate variant production, but teams must define these structural requirements first.
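One way to make these structural requirements explicit before generation starts is to record them as data. The sketch below is only an illustration: the `StagePlan` fields, the label values, and the helper `missing_stages` are hypothetical names chosen for this example, and the values in `INTENT_MAP` are placeholders that a team would replace with its own research.

```python
from dataclasses import dataclass
from enum import Enum


class IntentStage(Enum):
    EXPLORATION = "exploration"
    EVALUATION = "evaluation"
    DECISION = "decision"


@dataclass(frozen=True)
class StagePlan:
    page_objective: str   # the single objective the page serves
    copy_depth: str       # hypothetical labels: "light", "medium", "deep"
    proof_placement: str  # where evidence appears on the page
    cta_friction: str     # hypothetical labels: "low", "medium", "high"


# Placeholder values for illustration only.
INTENT_MAP = {
    IntentStage.EXPLORATION: StagePlan(
        page_objective="orient visitors and frame the problem",
        copy_depth="light",
        proof_placement="short social proof near the top",
        cta_friction="low",
    ),
    IntentStage.EVALUATION: StagePlan(
        page_objective="validate fit and credibility",
        copy_depth="deep",
        proof_placement="comparisons and case evidence mid-page",
        cta_friction="medium",
    ),
    IntentStage.DECISION: StagePlan(
        page_objective="give clear next-step confidence",
        copy_depth="medium",
        proof_placement="implementation detail next to the CTA",
        cta_friction="high",
    ),
}


def missing_stages(intent_map):
    """Return stages that have no plan yet, in declaration order."""
    return [stage for stage in IntentStage if stage not in intent_map]


def plan_for(stage_name: str) -> StagePlan:
    """Look up the plan for a stage given its string label."""
    return INTENT_MAP[IntentStage(stage_name)]
```

Calling `missing_stages(INTENT_MAP)` before a production cycle gives a quick check that every stage has one defined page objective.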

For teams improving opportunity prioritization across search and content, this framework on data-driven SEO strategies provides useful planning support. It is most effective when reviewed against conversion quality by intent segment.

Intent maps should be reviewed monthly because audience behavior shifts as products, pricing, and competition evolve. A static map usually becomes inaccurate faster than teams expect.

Personalization That Improves Decision Quality

Many personalization programs focus on superficial variables such as name insertion, industry labels, or generic dynamic blocks. Those tactics can help, but they rarely change conversion quality meaningfully on their own.

Higher-value personalization adapts the argument structure to user readiness. Early visitors need orientation and problem framing. Evaluators need mechanisms, comparisons, and proof depth. Decision-stage visitors need implementation detail and risk clarity.

AI can support this by producing controlled section variants for each stage while preserving one brand narrative backbone. This keeps relevance high without introducing inconsistent voice or fragmenting identity across channels.

It also simplifies QA because the core narrative remains stable.

Content Production: Speed With Editorial Control

AI is highly effective for topic clustering, draft scaffolding, and rewrite acceleration. It is weaker at strategic prioritization, source judgment, and nuanced positioning decisions.

Strong teams use AI for execution speed and humans for editorial direction. They define voice boundaries, evidence standards, and narrative objectives before generation begins.

A robust content workflow includes five linked steps. Each step should have an owner and a completion standard:

- strategic brief,

- guided generation,

- factual and narrative review,

- intent-aligned CTA integration,

- post-publish performance review.

This sequence protects quality while preserving high publication velocity. It also helps teams detect weak inputs before they become expensive rewrites.

For teams balancing article, guide, and campaign formats, this resource on content marketing formats helps align asset type to funnel stage. That alignment improves both engagement quality and production efficiency.

SEO Execution in an AI-Accelerated Environment

Search programs are becoming more competitive in both traditional results and AI-assisted answer surfaces. Ranking potential now depends not only on keyword targeting, but on clarity, trust signals, and structured usefulness.

Practical SEO improvements in AI-enabled workflows include several repeatable actions. These actions should be tracked as part of one editorial checklist:

- better query-intent alignment,

- stronger section hierarchy,

- clearer claim-to-proof structure,

- faster update cycles for aging pages,

- tighter internal navigation paths.

Teams should avoid publishing high-volume low-differentiation content. Near-duplicate outputs create internal competition and dilute authority.

A smaller number of high-clarity pages, updated consistently, usually delivers better long-term performance and avoids cannibalization between similar pages.

Paid Acquisition and Landing Page Synchronization

AI can optimize bidding and ad variation quickly, but paid performance degrades when landing pages do not reflect the same intent logic used in acquisition targeting. Channel efficiency depends on message continuity, not bid automation alone.

A practical synchronization model includes three rules. These rules keep post-click behavior aligned with pre-click expectations:

- Each ad theme maps to a dedicated page narrative.

- Offer framing and proof order stay consistent from ad to page.

- Post-click CTA friction matches traffic readiness.

This prevents the common pattern where ad relevance is high but conversion quality remains weak. It also improves sales follow-up quality because lead intent is clearer.

Within Unicorn Platform, teams can deploy and iterate intent-specific pages rapidly, which is essential for maintaining signal quality during active campaigns. Fast deployment preserves the context needed for accurate optimization decisions.

Brief Quality Is the Hidden Multiplier

Weak prompts are usually symptoms of weak briefs. If brief inputs are generic, outputs become generic regardless of model sophistication.

High-quality briefs include six fields that should be present every time. Missing fields are a strong predictor of low-quality outputs:

- target audience context,

- conversion objective,

- tone and positioning boundaries,

- evidence constraints,

- required CTA behavior,

- exclusion rules for unsupported claims.

When brief templates are standardized by page type, teams reduce revision churn and improve onboarding speed for new contributors. Standardization also improves forecasting because production effort becomes more predictable.
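A standardized brief can be checked mechanically before it reaches a generation workflow. The following is a minimal sketch, assuming a brief is stored as six free-text fields; the `Brief` class, its field names, and `missing_fields` are hypothetical and simply mirror the six fields listed above.

```python
from dataclasses import dataclass, fields


@dataclass
class Brief:
    # Field names are illustrative; they follow the six-field list above.
    target_audience_context: str = ""
    conversion_objective: str = ""
    tone_and_positioning_boundaries: str = ""
    evidence_constraints: str = ""
    required_cta_behavior: str = ""
    exclusion_rules: str = ""


def missing_fields(brief: Brief) -> list[str]:
    """Return names of fields that are empty or whitespace-only."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]


def is_ready(brief: Brief) -> bool:
    """A brief is ready for generation only when all six fields are filled."""
    return not missing_fields(brief)


# Hypothetical draft with only two fields filled in.
draft = Brief(
    target_audience_context="ops leads at mid-size SaaS teams",
    conversion_objective="book a demo",
)
print(missing_fields(draft))
```

A check like this is deliberately shallow: it confirms that each field exists, not that its content is good, so editorial review still applies.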

The same discipline should apply to ad copy, email variants, and on-page microcopy. Cross-channel consistency is easier to maintain when the input structure is shared.

Measurement: Shift From Activity Metrics to Value Metrics

AI increases throughput, which can obscure whether the program is actually improving commercial outcomes. According to PwC, many organizations still struggle to realize measurable value from AI, with a significant share of companies reporting little or no financial impact due to gaps in strategy and implementation. Measurement must remain tied to business value.

A useful metric stack includes five high-value measures. They should be reviewed at a steady cadence with clear ownership:

- conversion rate by intent stage,

- qualified lead ratio by source,

- assisted revenue by content cluster,

- time-to-iteration after signal detection,

- post-click engagement quality by page variant.

These metrics reveal whether faster execution is producing better decisions or only more output. This distinction is critical for budget allocation and team confidence.
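Several of these measures are simple grouped ratios, which makes them easy to compute consistently. The sketch below assumes session and lead records are available as dictionaries; the record keys, the sample data, and the function names `rate_by` and `time_to_iteration_days` are assumptions made for illustration, not part of any specific analytics tool.

```python
from collections import defaultdict
from datetime import date


def rate_by(records, group_key, flag_key):
    """Share of records where flag_key is truthy, grouped by group_key."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[flag_key]:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


def time_to_iteration_days(signal_detected: date, change_shipped: date) -> int:
    """Days between detecting a signal and shipping the resulting change."""
    return (change_shipped - signal_detected).days


# Hypothetical sample data.
sessions = [
    {"stage": "exploration", "converted": False},
    {"stage": "exploration", "converted": False},
    {"stage": "exploration", "converted": False},
    {"stage": "exploration", "converted": True},
    {"stage": "decision", "converted": True},
    {"stage": "decision", "converted": False},
]
leads = [
    {"source": "paid", "qualified": True},
    {"source": "paid", "qualified": False},
    {"source": "organic", "qualified": True},
]

conversion_rate_by_stage = rate_by(sessions, "stage", "converted")
qualified_ratio_by_source = rate_by(leads, "source", "qualified")
```

Using one helper for both ratios keeps the definitions identical across channels, which matters when paid and SEO owners compare numbers.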

Teams should review metric movement alongside specific page and message changes. That relationship is where optimization confidence is built.

Governance as a Growth Function

Governance in AI marketing is often framed as risk management only. In practice, governance is a growth function because it reduces reversals, protects credibility, and speeds confident publication.

A practical governance model includes five operating controls. Controls should be lightweight but mandatory for high-impact assets:

- source and claim standards,

- role-based approval gates,

- regulated-topic escalation rules,

- revision history and ownership clarity,

- recurring quality audits.

When governance is explicit, teams can move faster without sacrificing trust. Clear boundaries reduce backtracking and approval bottlenecks.

Creative Differentiation in High-Volume Pipelines

As output volume rises, creative sameness becomes a strategic risk. Content may be technically correct but narratively interchangeable, which weakens brand memory and reduces conversion lift.

Teams should plan angle diversity before generation. Examples include operator workflow angle, customer story angle, contrarian thesis angle, and benchmark interpretation angle.

Each angle should map to a specific page purpose and proof model. This prevents repetitive narratives and improves relevance for different audience segments.

Within Unicorn Platform, angle-to-page mapping is easier to operationalize because template consistency allows faster variant deployment without structural drift. This keeps creative experiments useful instead of chaotic.

From Campaign Peaks to Durable Assets

Short campaign wins are useful, but durable growth depends on compounding assets. These include evergreen guides, structured comparison pages, role-specific landing templates, and reusable conversion blocks.

AI can accelerate initial production, but durability comes from maintenance discipline. Teams should schedule quarterly refreshes for high-value assets and update claims when evidence changes.

A practical maintenance loop includes five recurring checks. Each check should be tied to a measurable quality signal:

- ranking and conversion review,

- claim freshness check,

- internal link relevance review,

- CTA intent revalidation,

- controlled variant testing.

This turns content and pages into long-term revenue infrastructure rather than one-time campaign outputs. Durable assets reduce paid dependency and improve planning stability.
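The quarterly refresh schedule can be tracked with a small overdue check. This is a sketch under stated assumptions: "quarterly" is approximated as 90 days, and the asset names, review dates, and the function `assets_due_for_refresh` are hypothetical.

```python
from datetime import date, timedelta

# Assumption: a quarterly cadence is approximated as 90 days.
REFRESH_INTERVAL = timedelta(days=90)


def assets_due_for_refresh(last_reviewed, today):
    """Return names of assets whose last review is at least one interval old."""
    return sorted(
        name
        for name, reviewed in last_reviewed.items()
        if today - reviewed >= REFRESH_INTERVAL
    )


# Hypothetical review log: asset name mapped to its last review date.
review_log = {
    "evergreen-guide": date(2025, 12, 1),
    "comparison-page": date(2026, 3, 1),
    "role-landing-template": date(2025, 11, 15),
}

due = assets_due_for_refresh(review_log, today=date(2026, 4, 1))
```

The returned list is only a trigger for the five checks above; it does not replace them.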

For teams building complementary attention loops beyond search, this video marketing strategy guide can support channel diversification and remarketing alignment. Combining search and video signals often improves audience qualification.

Cross-Functional Handshake With Product Teams

Marketing and product teams often operate on different clocks. Product ships features with technical detail, while marketing publishes narrative updates later, leaving a timing gap that wastes demand.

A stronger model uses a shared launch checklist. The checklist should be reviewed before release dates are finalized:

- validated benefit statement,

- proof artifacts and constraints,

- audience-specific use-case framing,

- page and campaign update deadlines,

- post-launch feedback capture plan.

This handshake reduces lag between product value and market communication. Faster alignment improves both campaign timing and product adoption clarity.

In Unicorn Platform workflows, linked launch checklists help teams turn release signals into conversion-ready updates quickly. This is especially important when release windows are short.

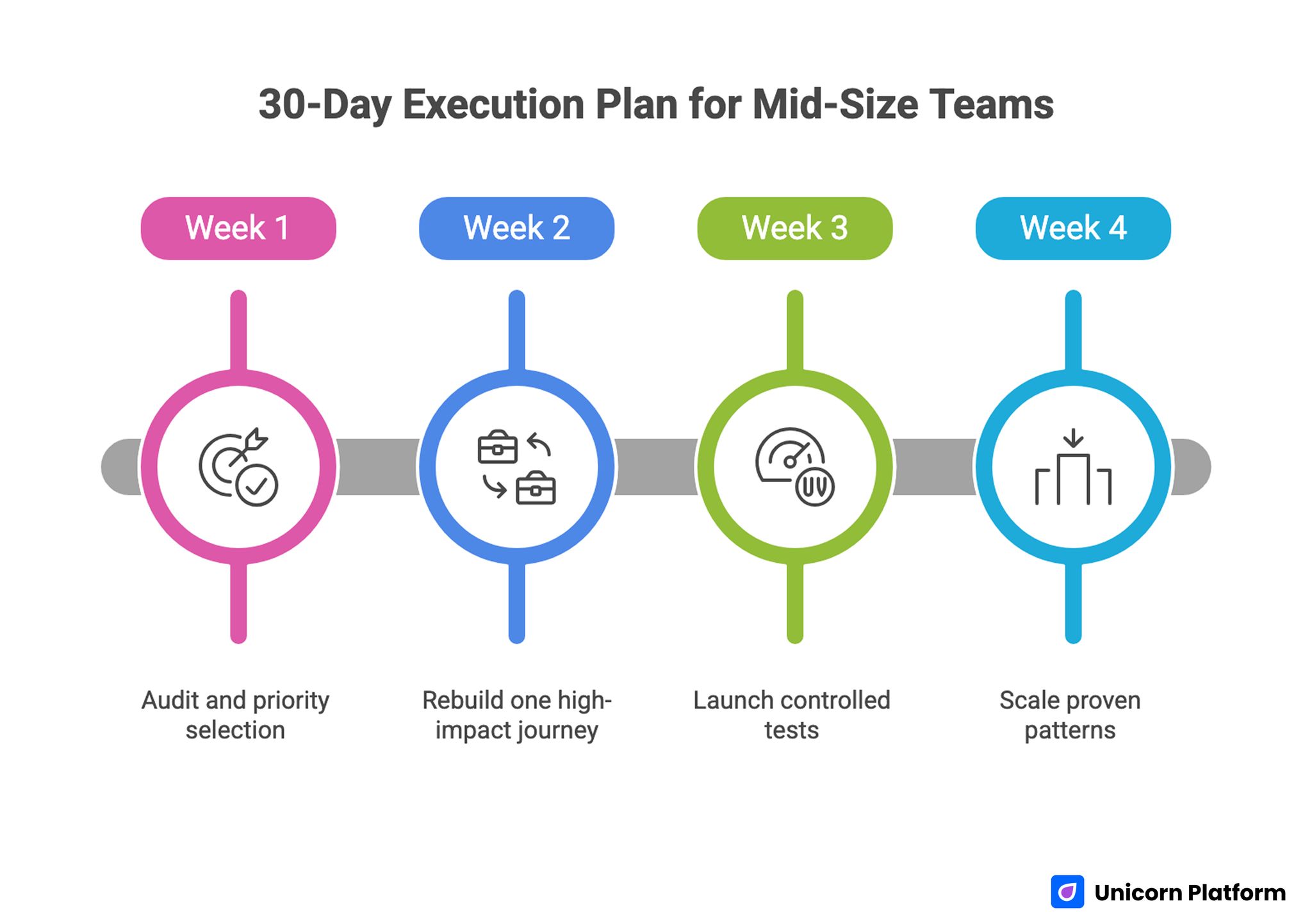

30-Day Execution Plan for Mid-Size Teams

30-Day Execution Roadmap for Mid-Size Marketing Teams Using AI-Driven Workflows

Week 1: Audit and priority selection

Review existing campaigns, landing pages, and content clusters to identify the highest message-fit gap. Select one funnel segment where improved alignment is most likely to impact revenue quality.

Week 2: Rebuild one high-impact journey

Create one intent-specific page set, one aligned ad message set, and one structured content support asset. Keep ownership and approval paths explicit.

Week 3: Launch controlled tests

Test one major variable per journey element at a time. Track conversion quality and engagement behavior by intent stage.

Week 4: Scale proven patterns

Promote winning structures into adjacent campaigns and archive low-signal variants. Update brief templates and governance notes based on observed outcomes.

This plan is intentionally narrow. Focused execution typically outperforms broad AI rollout attempts.

90-Day Compounding Plan

Month 1: Stabilize workflow quality

Normalize brief quality, approval standards, and intent mapping discipline across the team. Consistent foundations make later scaling far easier.

Month 2: Deepen performance learning

Expand measurement clarity and tighten the link between page edits and outcome movement. Teams should document why each change was made and what shift was expected.

Month 3: Expand asset durability

Scale winning templates into reusable growth assets with clear maintenance cadence. Archive weak formats so team capacity stays focused on high-return work.

At this stage, teams should see stronger consistency in both conversion efficiency and planning confidence. Operational stability should improve along with commercial results.

Common Mistakes and Fast Corrections

Mistake 1: Automating before strategy is clear

Correction: approve audience, problem, and outcome hierarchy before generation workflows begin. This step prevents high-volume output from drifting off strategy.

Mistake 2: Treating SEO and paid as separate systems

Correction: use shared intent maps and synchronized landing page logic. Shared maps reduce conflict between channel owners.

Mistake 3: Measuring volume instead of value

Correction: prioritize qualified conversion and assisted revenue metrics. Those metrics expose quality changes earlier than volume metrics.

Mistake 4: Publishing generic AI-assisted copy at scale

Correction: enforce angle diversity and proof standards in briefs. This improves differentiation and lowers rewrite burden.

Mistake 5: Ignoring maintenance after initial publication

Correction: run a recurring refresh loop for high-value assets. Fresh evidence and updated framing protect long-term performance.

Mistake 6: Weak role ownership in approvals

Correction: assign explicit decision rights for strategy, quality, and risk checks. Clear ownership shortens review cycles significantly.

FAQ: AI-Driven Digital Marketing

What is the first step for improving AI-enabled marketing performance?

Start by identifying one high-value decision workflow, then redesign process and ownership around that workflow before adding more tools. Focused redesign delivers faster improvement than broad deployment.

How can teams avoid generic AI-generated marketing output?

Use stronger briefs with clear audience context, evidence constraints, and angle direction. Editorial review should validate differentiation before publishing.

Should small teams adopt AI across every channel at once?

Usually not. Focused implementation in one funnel segment creates faster learning and lower execution risk.

Which metrics best show whether AI is helping marketing quality?

Qualified conversion, assisted revenue, and time-to-iteration are stronger indicators than content or ad volume alone. They connect activity to meaningful business movement.

How often should landing pages be updated in AI-assisted programs?

Update cadence depends on traffic and volatility, but high-impact pages should be reviewed on a recurring schedule with clear trigger thresholds. Scheduled reviews reduce the risk of stale messaging.

How can paid campaigns and SEO be aligned more effectively?

Map both channels to shared intent stages and connect them to stage-matched landing page narratives. This alignment usually improves efficiency in both channels.

Where does human judgment remain most critical?

Human leadership remains essential for positioning, trade-off decisions, evidence standards, and brand trust boundaries. AI should accelerate decisions, not replace strategic accountability.

What makes governance practical instead of bureaucratic?

Governance should be lightweight, role-based, and tied to real quality risks. The goal is faster confident publishing, not unnecessary delay.

Why do some teams see higher output but flat commercial results?

Because throughput without decision quality often creates noise. Performance improves when speed is linked to intent-fit and measurable learning loops.

What creates long-term advantage in AI-enabled marketing?

Long-term advantage comes from compounding systems: better briefs, better pages, better measurement, and consistent maintenance of high-value assets. Teams that protect these systems typically outperform tool-first competitors.

Final Takeaway

AI changes marketing performance most when teams redesign execution systems, not when they simply add generation tools. The strongest programs combine strategic clarity, fast iteration, clear governance, and value-focused measurement.

With Unicorn Platform, this model is practical to run at speed. Teams can publish intent-matched pages faster, connect channels through shared learning loops, and build durable growth assets that keep improving over time.
