Table of Contents

- Should You Choose a .ai Domain in 2026?

- Portfolio Strategy: Build Trust Beyond the Name

- 30-Day Domain and Launch Plan

- Common Mistakes and How to Avoid Them

- FAQ

Choosing a domain for an AI company looks simple on the surface, but a poor choice is expensive in practice. A weak name can reduce brand recall, create confusion in sales conversations, and force costly rework across content, ads, and email infrastructure.

A strong choice does the opposite. It makes the brand easier to remember, easier to trust, and easier to scale across channels. It also improves internal alignment because teams can communicate the same positioning without constantly explaining what the company does.

The most reliable path is not brainstorming in isolation but a structured naming system with clear criteria, legal checks, message testing, and launch validation.

This guide gives you that system. You will learn how to evaluate extension options, compare naming tools, score candidates, reduce legal and migration risk, and connect naming decisions to conversion performance in Unicorn Platform.

If your team is still building first-site foundations, this practical AI website maker workflow is a useful companion for turning naming decisions into clear launch pages quickly.


Quick Strategic Takeaways

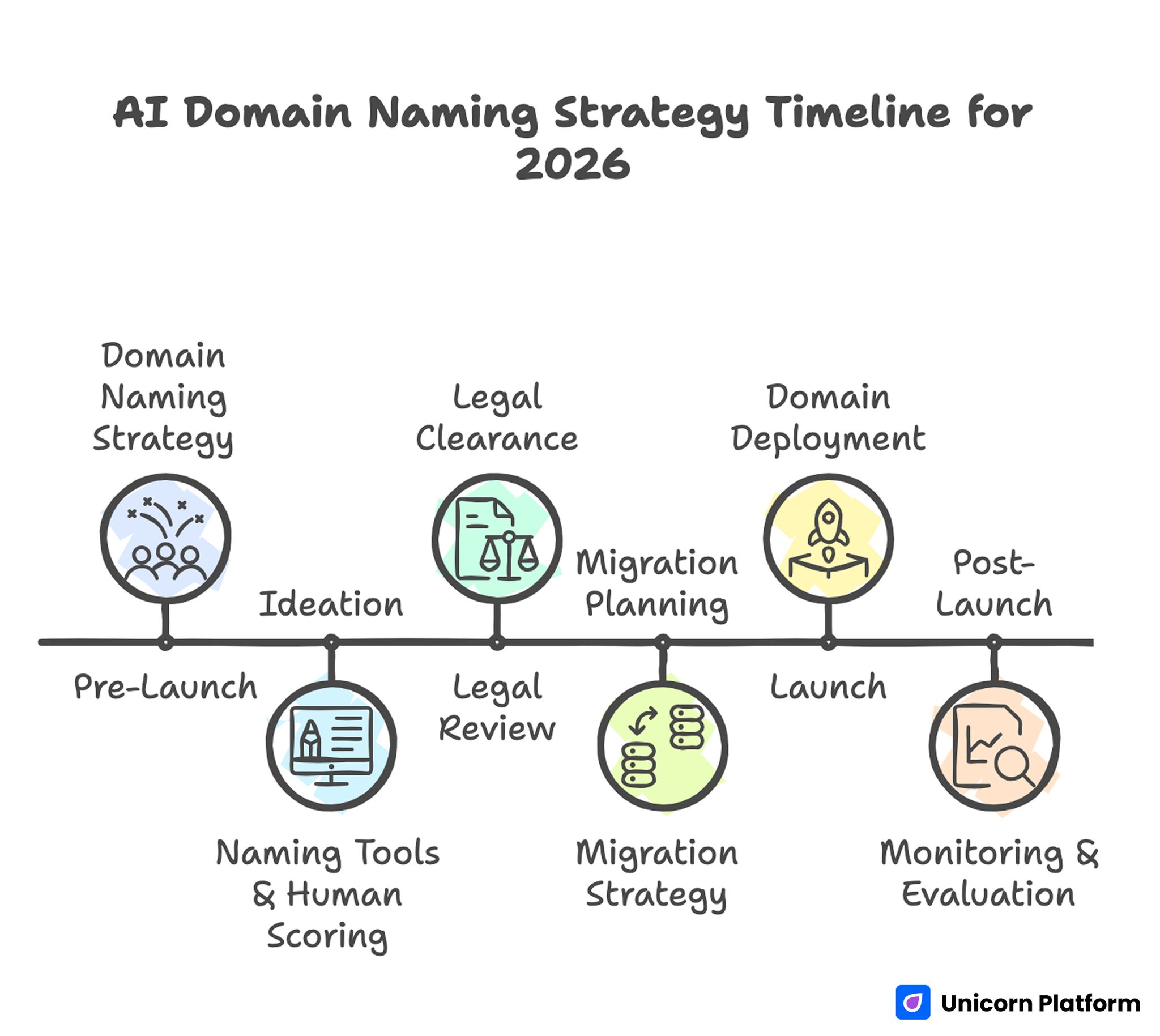

AI Domain Naming Strategy Timeline for 2026

- Domain naming is a growth decision, not a cosmetic one.

- Extension choice matters, but clarity and trust matter more.

- Short names are helpful only when they are also easy to say and spell.

- Naming tools accelerate ideation but still require human scoring.

- Legal review should happen before brand assets are widely deployed.

- Migration planning is mandatory if you rebrand to a new domain.

- Post-launch monitoring determines whether the name works in real usage.

Why Domain Decisions Break AI Launches

AI startups often move fast and treat naming as a final checkbox before launch. That creates a common failure pattern: the team selects a clever name internally, then discovers external confusion once traffic and conversations begin.

The confusion usually shows up in predictable places. Prospects mishear the name on calls, type the wrong address from memory, or fail to understand what category the product belongs to. Marketing performance suffers because the first impression is unclear.

Another common issue is naming drift. Product, sales, and marketing teams use different language for the same offer, and the domain no longer matches the message on the page. Trust drops because users see inconsistency before they see value.

Strong teams prevent this by treating naming as a structured decision with measurable criteria and explicit ownership.

Should You Choose a .ai Domain in 2026?

The .ai extension can be a strong strategic choice when your product is clearly AI-native and your audience expects modern technical positioning. It can signal relevance quickly in crowded categories where immediate context matters.

But extension choice alone does not create trust. If the name is hard to pronounce, easy to mistype, or disconnected from the core value proposition, the extension cannot compensate.

A practical way to decide is to compare .ai against alternatives on business criteria rather than personal preference. Ask how each option performs in memorability, credibility, legal risk, and long-term brand flexibility.

When .ai is often a good fit

- You are launching an AI-first product and want category clarity.

- Your primary audience is familiar with technical naming conventions.

- You can afford extension pricing and renewal costs without stress.

- Your messaging and product narrative are tightly aligned.

When another extension may be better

- Your audience is broad and non-technical.

- Your brand direction may expand beyond AI positioning soon.

- Budget pressure makes higher renewals a strategic risk.

- A strong alternative name exists with cleaner brand recall.

The extension decision should support the business model, not dictate it.

Extension Comparison Framework: .ai vs .com vs Alternatives

Founders often compare extensions with vague claims. A better method is to score each option against the same criteria, each with an agreed weight.

Criteria that matter most

- Category signal: does the extension communicate relevant context quickly?

- Trust profile: how does it feel to your target audience?

- Recall quality: how likely are users to type it correctly from memory?

- Cost profile: registration, renewal, and defensive-registration burden.

- Flexibility: can the brand still fit if product scope evolves?

Practical tradeoffs

- .ai often wins on category signaling for AI-native products.

- .com often wins on broad familiarity and long-term flexibility.

- .io, .co, and .tech can work in specific contexts but should be tested for audience trust and recall.

No extension is universally best. The right choice is the one that fits your audience behavior and operating constraints.

Naming Criteria That Predict Better Outcomes

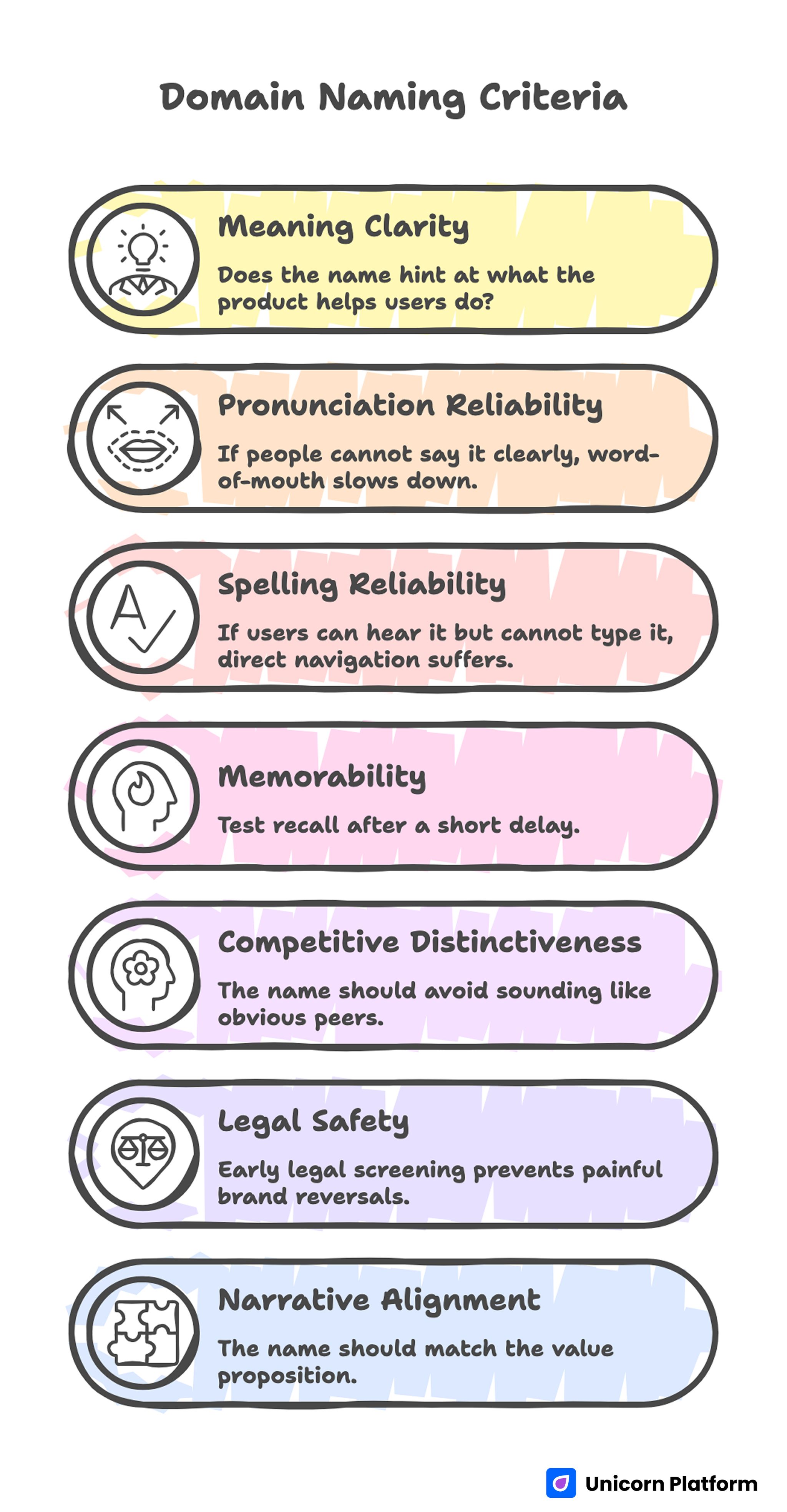

Domain Naming Criteria

Most domain mistakes come from weak criteria, not weak creativity. Good ideas fail when teams do not define what "good" means before discussion begins.

Use a weighted scorecard with the factors below.

1) Meaning clarity

Does the name hint at what the product helps users do? It does not need to describe everything, but it should reduce ambiguity.

2) Pronunciation reliability

If people cannot say it clearly, word-of-mouth slows down and call-based sales friction rises.

3) Spelling reliability

If users can hear it but cannot type it, direct navigation and referral quality suffer.

4) Memorability under time pressure

Test recall after a short delay. If users cannot remember the name after one interaction, brand efficiency is lower.

5) Competitive distinctiveness

The name should avoid sounding like obvious peers in your category. Similar naming reduces trust and raises legal risk.

6) Legal and trademark safety

Early legal screening prevents painful brand reversals after launch assets are already deployed.

7) Narrative alignment

The name should match the value proposition used in headline, proof, and CTA language.

A candidate that fails two or more high-priority criteria should usually be rejected.
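
The rejection rule above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the 1-5 rating scale, the failure threshold, the choice of which criteria count as high priority, and the sample ratings are all assumptions made for the example.

```python
# Assumed for illustration: ratings use a 1-5 scale, anything below 3 is a
# failure, and these four criteria are the team's "high-priority" set.
HIGH_PRIORITY = {"meaning_clarity", "pronunciation", "spelling", "legal_safety"}
FAIL_BELOW = 3


def failed_high_priority(ratings: dict[str, int]) -> list[str]:
    """Return the high-priority criteria this candidate fails, sorted by name.

    A criterion with no rating is treated as a failure.
    """
    return sorted(c for c in HIGH_PRIORITY if ratings.get(c, 0) < FAIL_BELOW)


def should_reject(ratings: dict[str, int]) -> bool:
    """Apply the rule of thumb: two or more high-priority failures means reject."""
    return len(failed_high_priority(ratings)) >= 2


# Hypothetical ratings for one candidate name.
candidate = {
    "meaning_clarity": 4,
    "pronunciation": 2,
    "spelling": 2,
    "memorability": 4,
    "distinctiveness": 3,
    "legal_safety": 5,
    "narrative_alignment": 4,
}

print(failed_high_priority(candidate))
print(should_reject(candidate))
```

Agreeing on the high-priority set and the threshold before anyone sees the names keeps the gate from being tuned to protect a favorite.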

How to Use Naming Tools Without Getting Generic Output

Naming tools are useful for speed, especially in early ideation. The risk is that many outputs converge on similar patterns, which can make your brand feel interchangeable.

Use tools as idea accelerators, not as final decision engines.

Better workflow for tool-assisted naming

- Start with your audience problem and value promise, not random keyword lists.

- Generate wide options across tones: technical, benefit-led, metaphorical, and hybrid.

- Filter obvious low-quality outputs quickly using hard constraints.

- Score surviving options with the same weighted rubric.

- Run short external comprehension tests before final selection.

This approach keeps speed while preserving strategic quality.
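
As a rough sketch of the hard-constraint filtering step in this workflow, the snippet below drops generated names that break simple rules before any scoring happens. The specific constraints (a 12-character cap, letters only) and the sample names are assumptions for illustration; substitute whatever rules your team has agreed on.

```python
import re

MAX_LENGTH = 12  # assumed cap on the name, excluding the extension
LETTERS_ONLY = re.compile(r"[a-z]+")  # assumed rule: no digits or hyphens


def passes_hard_constraints(label: str) -> bool:
    """Check one lowercase candidate against the assumed hard constraints."""
    return len(label) <= MAX_LENGTH and LETTERS_ONLY.fullmatch(label) is not None


def filter_candidates(labels: list[str]) -> list[str]:
    """Keep candidates that pass, preserving order and dropping duplicates."""
    seen = set()
    kept = []
    for raw in labels:
        label = raw.strip().lower()
        if label not in seen and passes_hard_constraints(label):
            seen.add(label)
            kept.append(label)
    return kept


# Hypothetical tool output.
generated = ["Lumen", "data-4-u", "lumen", "hyperintelligentautomation", "Northwind"]
print(filter_candidates(generated))
```

Only the survivors of this pass go on to the weighted rubric and external comprehension tests.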

What to check when comparing naming tools

- Do results include real availability checks?

- Are pricing and registrar options transparent?

- Is there support for trademark conflict screening?

- Can you control style and lexical constraints?

- Does the tool avoid repetitive, low-distinction patterns?

The best tool for your team is the one that supports better decisions, not the one that produces the most names.

Connect Domain Choice to Page Performance in Unicorn Platform

A domain decision is incomplete until it is tested in live page behavior. Users do not evaluate names in isolation. They evaluate the full first impression: URL, headline, trust cues, and action clarity.

In Unicorn Platform, you can create fast variant pages to test naming outcomes without long development cycles. That allows you to compare interpretation quality and conversion signals before committing fully.

For teams refining launch pages during naming validation, this guide on creating an AI landing page provides a practical build sequence that keeps tests controlled.

What to measure during domain validation

- First-screen comprehension: can users explain what you do?

- Category fit: do users map the name to the intended product space?

- CTA confidence: does the name help or hurt willingness to act?

- Inquiry quality: do leads match your target segment?

Domain quality becomes clear when measured through these behavioral signals.

Portfolio Strategy: Build Trust Beyond the Name

Even a strong domain cannot carry a weak credibility layer. AI products often face skepticism, so proof architecture matters from day one.

Create a portfolio section that demonstrates real capability, not generic claims. Show selected outcomes, implementation context, and practical use cases aligned to your audience.

If you are strengthening product proof and positioning together, this playbook on building a software company page can help structure trust sections without clutter.

Portfolio blocks that improve trust

- Problem context: what situation existed before your solution.

- Method summary: what was changed and how.

- Outcome signal: what improved and for whom.

- Scope clarity: where the result applies and where it does not.

This structure makes your brand promise credible and easier to evaluate.

Legal, Compliance, and Risk Controls Before Launch

Fast launches often skip legal checks until late, which creates avoidable risk. A naming conflict discovered after campaign launch can force expensive rework across pages, domains, email accounts, and partner assets.

Run legal screening as an early gate, then do deeper review before final commitment.

Minimum legal checklist

- Preliminary trademark search in relevant jurisdictions.

- Conflict scan for close phonetic and visual matches.

- Verification of social handle consistency where needed.

- Registrar policy review and renewal-risk awareness.

Also define who has approval authority. Naming decisions stall when governance is unclear.

Migration Planning for Domain Changes

Rebrands and domain transitions are common in AI startups, especially after positioning sharpens. Migration quality determines whether growth momentum is preserved or disrupted.

Handle migration as a cross-functional project, not a technical afterthought.

Core migration steps

- Map all existing URLs and destination equivalents.

- Set permanent redirects and verify behavior.

- Refresh internal links, canonicals, and sitemaps.

- Update analytics properties and attribution rules.

- Align campaigns, outbound links, and partner assets.

- Monitor traffic quality during stabilization windows.

Search and conversion losses usually come from missed details, not from the domain change itself.
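
The first two migration steps, mapping URLs and checking redirects, can be drafted offline before anything is deployed. The sketch below assumes a one-to-one move where every path stays the same on the new host; the hostnames are placeholders, and real migrations often need hand-edited entries for pages that were renamed or retired. It does not make network requests, so live redirect behavior still needs to be verified separately.

```python
from urllib.parse import urlsplit, urlunsplit


def build_redirect_map(old_urls: list[str], new_host: str) -> dict[str, str]:
    """Map each old URL to the same path and query on the new host over HTTPS."""
    mapping = {}
    for url in old_urls:
        parts = urlsplit(url)
        mapping[url] = urlunsplit(
            ("https", new_host, parts.path or "/", parts.query, "")
        )
    return mapping


def find_chains(redirects: dict[str, str]) -> list[str]:
    """Return sources whose destination is itself redirected (a chain or loop)."""
    return sorted(src for src, dst in redirects.items() if dst in redirects)


# Placeholder hostnames for illustration only.
old_urls = [
    "https://old-brand.example/",
    "https://old-brand.example/pricing?plan=pro",
]
redirect_map = build_redirect_map(old_urls, "new-brand.example")
for src, dst in redirect_map.items():
    print(src, "->", dst)

# An empty list means every redirect resolves in a single hop.
print(find_chains(redirect_map))
```

Checking for chains matters because each extra hop is one more place where a redirect can be misconfigured or dropped during later cleanup.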

Message Consistency Across Channels

A domain becomes stronger when the same narrative appears across site content, ads, partner messaging, and outbound communication.

Inconsistent language creates a trust penalty. Users may feel uncertain even when each channel looks polished individually.

Create a concise messaging map with three elements: audience, promise, and proof logic. Then apply it consistently across first-screen copy, comparison pages, and outreach.

Consistency is especially important in early stages where every interaction shapes brand perception.

30-Day Domain and Launch Plan

Week 1: Candidate generation and scoring

Generate domain options with clear constraints, then apply a weighted scorecard. Eliminate low-clarity and high-risk options quickly.

Week 2: Legal and messaging pre-checks

Run early legal screening and draft first-screen messaging aligned to top candidates. Prepare concise proof blocks for validation pages.

Week 3: Live-page validation in Unicorn Platform

Publish variant pages and test comprehension, CTA confidence, and lead quality. Keep variables tight so signal interpretation is clean.

Week 4: Final selection and rollout prep

Select the winning domain based on behavioral evidence, then prepare launch assets, redirects if needed, and communication sequencing.

This plan keeps speed high while protecting brand quality.

90-Day Growth Plan After Launch

Days 1-30: Stabilize perception and tracking

Monitor direct traffic quality, branded search behavior, and inquiry relevance. Fix message ambiguity quickly where needed.

Days 31-60: Expand trust assets

Add stronger proof modules and segment-specific examples for your best-fit audiences. Refine page flow where drop-off is concentrated.

Days 61-90: Scale with governance

Operationalize naming and content standards for future launches. Document what worked so new campaigns inherit proven decisions.

Growth becomes more predictable when naming, messaging, and page strategy are treated as one operating system.

Registrar and Renewal Strategy for AI Brands

Many startups focus on availability and ignore registrar quality. That creates long-term friction when renewal prices spike, DNS management is unreliable, or support response is too slow during incident windows.

Select a registrar using operational criteria, not only first-year discounts. Check renewal transparency, DNS stability features, account security controls, transfer policy clarity, and support reliability under time pressure. A low-cost entry price can become expensive if management quality is weak.

Also define renewal ownership internally. One missed renewal can disrupt paid campaigns, outbound email reliability, and brand trust at the same time. Teams that assign explicit domain ownership and renewal calendar controls reduce this risk significantly.

For defensive protection, register critical typo variants and near-confusion alternatives where practical. Redirect strategy should be documented so defensive domains support brand safety instead of creating maintenance overhead.
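
To build a shortlist of typo variants worth checking, a small generator can enumerate common one-edit mistakes. The sketch below is illustrative only: it covers dropped, doubled, and swapped characters, ignores keyboard-adjacency and sound-alike errors, and uses a made-up name. Which variants are worth registering remains a cost and risk judgment.

```python
def typo_variants(label: str) -> list[str]:
    """Generate simple one-edit typos of a name (without the extension)."""
    variants = set()
    for i in range(len(label)):
        # Dropped character, e.g. "acme" -> "ame".
        variants.add(label[:i] + label[i + 1:])
        # Doubled character, e.g. "acme" -> "accme".
        variants.add(label[:i] + label[i] + label[i:])
        # Swapped neighbours, e.g. "acme" -> "amce".
        if i + 1 < len(label):
            variants.add(label[:i] + label[i + 1] + label[i] + label[i + 2:])
    variants.discard(label)  # a swap of identical letters recreates the original
    variants.discard("")     # dropping the only character of a 1-letter name
    return sorted(variants)


# Hypothetical brand name.
for variant in typo_variants("acme"):
    print(variant)
```

Pair the output with an availability check and your documented redirect plan so each registered variant has a clear purpose.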

Practical Decision Matrix Example

A simple decision matrix can accelerate alignment when founders disagree on naming direction. Score three finalists against weighted criteria and review tradeoffs in one structured meeting instead of repeating open-ended debates.

Example weighting model:

- meaning clarity: 25%

- memorability: 20%

- legal safety: 20%

- audience trust profile: 15%

- extension fit: 10%

- cost and operational burden: 10%

After scoring, discuss only the top two options and identify one decisive risk per candidate. This keeps focus on material differences rather than subjective preference. A fast final decision is easier when the team agrees on criteria before reviewing any names.
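
As a minimal sketch of this matrix, the snippet below applies the example weights to three finalists and returns the top two for discussion. The weights come from the model above; the 1-5 rating scale, the candidate labels, and the ratings themselves are hypothetical.

```python
# Percentage weights from the example weighting model.
WEIGHTS = {
    "meaning_clarity": 25,
    "memorability": 20,
    "legal_safety": 20,
    "audience_trust": 15,
    "extension_fit": 10,
    "cost_burden": 10,  # assumed convention: a higher rating means a lower burden
}
assert sum(WEIGHTS.values()) == 100


def weighted_score(ratings: dict[str, int]) -> float:
    """Weighted average of 1-5 ratings using the percentage weights."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return sum(WEIGHTS[c] * ratings[c] for c in WEIGHTS) / 100


def top_two(finalists: dict[str, dict[str, int]]) -> list[str]:
    """Rank finalists by weighted score and keep the two highest."""
    ranked = sorted(finalists, key=lambda n: weighted_score(finalists[n]), reverse=True)
    return ranked[:2]


# Hypothetical ratings for three finalists.
finalists = {
    "candidate_a": {"meaning_clarity": 5, "memorability": 4, "legal_safety": 3,
                    "audience_trust": 3, "extension_fit": 5, "cost_burden": 2},
    "candidate_b": {"meaning_clarity": 4, "memorability": 4, "legal_safety": 5,
                    "audience_trust": 4, "extension_fit": 3, "cost_burden": 4},
    "candidate_c": {"meaning_clarity": 3, "memorability": 3, "legal_safety": 4,
                    "audience_trust": 3, "extension_fit": 3, "cost_burden": 5},
}

for name, ratings in finalists.items():
    print(name, weighted_score(ratings))
print(top_two(finalists))
```

The score narrows the field; the meeting still has to name the one decisive risk for each of the two remaining options.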

Common Mistakes and How to Avoid Them

Mistake 1: Choosing for novelty alone

Novel names can feel exciting internally and confusing externally. Prioritize audience clarity before creative uniqueness.

Mistake 2: Overvaluing extension signal

Extension choice can help positioning, but it cannot fix weak message architecture. Build trust through content and proof.

Mistake 3: Skipping legal review until late

Late legal discovery creates expensive rollback work. Move legal checks to the early evaluation stage.

Mistake 4: Treating naming tools as decision authority

Generated options are inputs, not final answers. Human scoring and external testing remain essential.

Mistake 5: Launching without migration readiness

If a transition is planned, migration hygiene must be complete before traffic scaling. Redirect and tracking errors can erase momentum.

Mistake 6: Ignoring post-launch signals

A domain should be re-evaluated with real behavior data, not defended by internal preference after launch.

FAQ: AI Domain Strategy in 2026

Is a .ai domain required for an AI startup?

No. It can be beneficial for category signaling, but strong positioning can also work on other extensions when the name and message are clear.

Does extension choice affect SEO rankings directly?

Extension alone is rarely decisive. Content quality, relevance, site structure, and technical reliability are far more influential. Google’s official SEO guidance emphasizes that domain extensions do not inherently improve rankings, while clarity, usability, and content value play a much larger role in search performance.

How many domain candidates should we test?

Testing five to ten serious candidates is usually enough for clear comparison. More candidates can create noise without better insight.

What makes a name easy to remember?

High recall usually comes from simple pronunciation, simple spelling, and clear semantic fit with your value proposition.

Should early-stage teams pay more for premium names?

Only when the expected brand and conversion benefit is clear. Premium pricing without strategic fit can strain resources without meaningful return.

How do we reduce naming bias inside the team?

Use a weighted scorecard, external comprehension checks, and a predefined decision owner. Process discipline reduces preference-driven deadlock.

When should legal checks happen?

Run preliminary checks before final shortlist lock, then complete deeper legal review before launch assets are widely published.

How do we test if a name actually works?

Test with live pages and measure comprehension, trust, and action quality. Real behavior data is more reliable than internal opinion.

What if our current name is already underperforming?

Use a structured rebrand approach: score alternatives, validate messaging, and execute migration with strict technical QA.

How often should we revisit naming strategy?

Review it quarterly or whenever positioning changes materially. Names should evolve only when evidence supports a better long-term outcome.

Final Takeaway

A strong AI domain decision is not a lucky brainstorm. It is the result of clear criteria, disciplined testing, legal diligence, and consistent execution across brand and site experience.

Unicorn Platform helps teams execute this process quickly by turning naming assumptions into measurable page behavior. That speed, paired with rigorous decision rules, is what turns a domain from a label into a durable growth asset.