Table of Contents

- Core Architecture for High-Performing Startup Pages

- 30-Day Startup Execution Plan

- Section-Level Implementation Library

- 12-Week Operating Calendar for Startup Page Teams

- Migration Playbook for Scaling Startup Teams

- Common Mistakes and Fast Fixes

- FAQ

Startups usually move fast by necessity, not by preference. Budgets are tight, teams are small, and every launch competes with product work, sales pressure, and investor expectations.

In that context, landing pages often become rushed deliverables. Pages go live quickly, but messaging is vague, proof is weak, and the main action is unclear. Traffic appears, but qualified conversion stays inconsistent.

The answer is not to slow down. It is to run a clear operating system in which launch speed and quality controls work together.

This guide explains that operating system in practical terms. It covers structure, copy, proof, UX, analytics, testing cadence, and team governance so startup pages can improve predictably over time.


Quick Strategic Takeaways

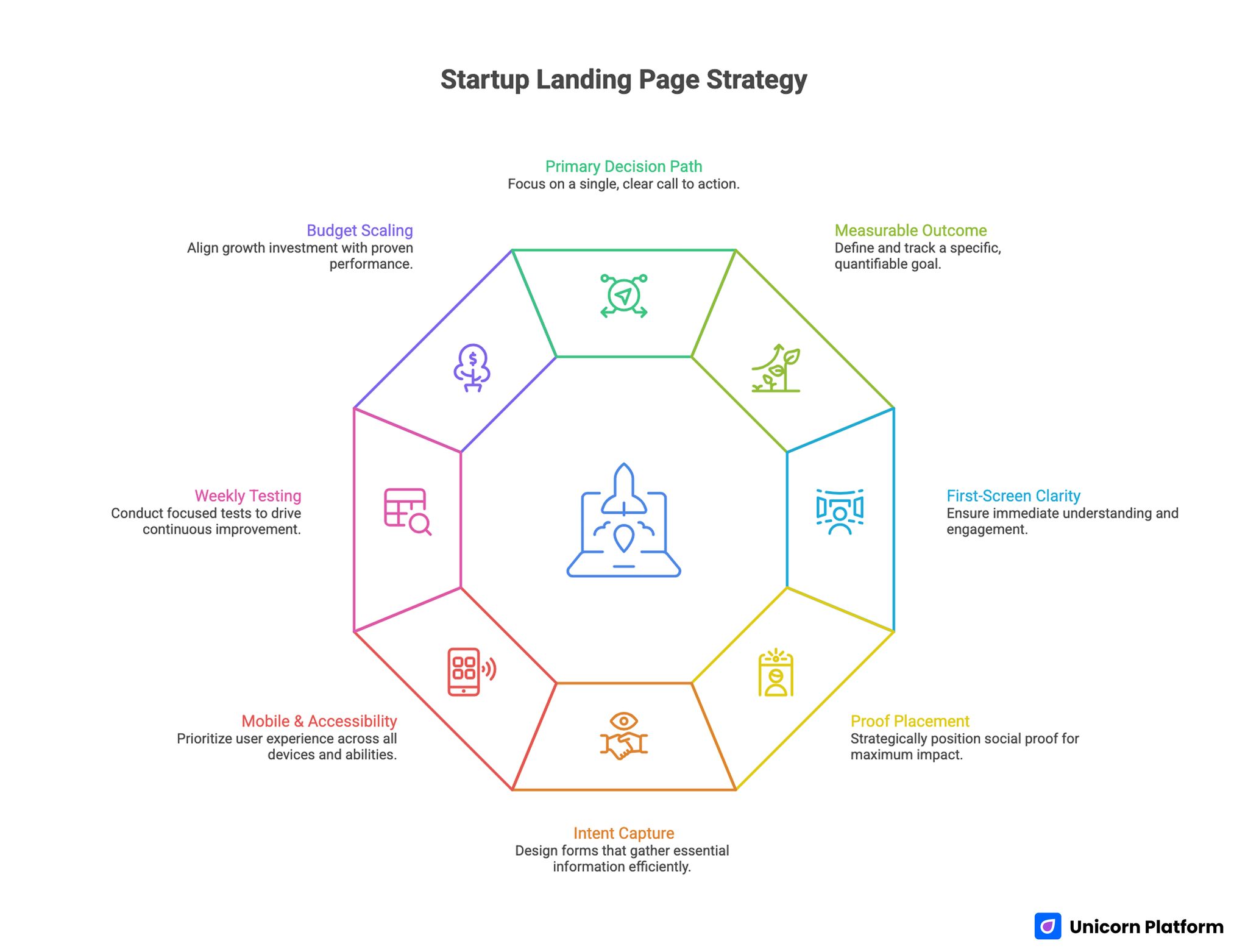

Startup Landing Page Strategy

- A startup page should drive one primary decision path and one measurable outcome.

- First-screen clarity typically drives faster gains than visual redesign cycles.

- Proof placement and relevance influence conversion quality more than proof volume.

- Forms should capture intent with minimal friction, then qualify deeper later.

- Mobile and accessibility checks should be treated as release gates.

- One major test per week produces clearer learning than random frequent changes.

- Growth budget should scale only after message and lead-quality signals stabilize.

Why Startup Landing Workflows Break

Most startup landing problems are operational, not creative. Teams publish pages without a shared decision framework, so each contributor optimizes for a different objective.

Marketing wants click-through growth, product wants feature coverage, founders want brand polish, and sales wants higher-intent leads. Without structure, the page becomes a compromise that satisfies no one.

Another issue is launch celebration bias. Teams treat publishing as completion, then move to other priorities without weekly optimization discipline. As a result, weak messaging and routing issues persist for months.

The fix is to define a repeatable workflow before production begins. Pages perform better when every section has a job, every job has a metric, and every metric has an owner.
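One way to make that rule checkable is to keep a small inventory of page sections and flag any section that is missing a job, a metric, or an owner. This is a minimal sketch. The `Section` fields and the example values are hypothetical and do not come from any specific tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Section:
    name: str
    job: Optional[str] = None     # the decision this section supports
    metric: Optional[str] = None  # how that job is measured
    owner: Optional[str] = None   # who is accountable for the metric


def missing_accountability(sections: List[Section]) -> List[str]:
    """Return the names of sections that lack a job, a metric, or an owner."""
    return [s.name for s in sections if not (s.job and s.metric and s.owner)]


page = [
    Section("hero", job="state the outcome", metric="primary CTA click rate", owner="message owner"),
    Section("proof", job="confirm credibility"),  # no metric or owner yet
]
print(missing_accountability(page))  # ['proof']
```

Running this check before each review keeps gaps visible instead of discovering them after a test fails to move anything.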

Define the Page Job Before Touching Design

Before writing copy or selecting blocks, define the exact job of the page. If the job is unclear, design decisions become subjective and tests become hard to interpret.

Common startup page jobs include trial starts, demo bookings, waitlist enrollments, partner inquiries, and qualified lead captures. Each page should optimize for one primary job, with at most one secondary fallback action.

Then define the audience stage of the dominant traffic source. Cold visitors need context and trust first, while warm visitors need faster action with less education.

This definition step prevents one of the most expensive startup mistakes: building one page for conflicting decision stages and trying to optimize all of them at once. It also reduces rewrite cost later because section priorities are clear from the start.

Core Architecture for High-Performing Startup Pages

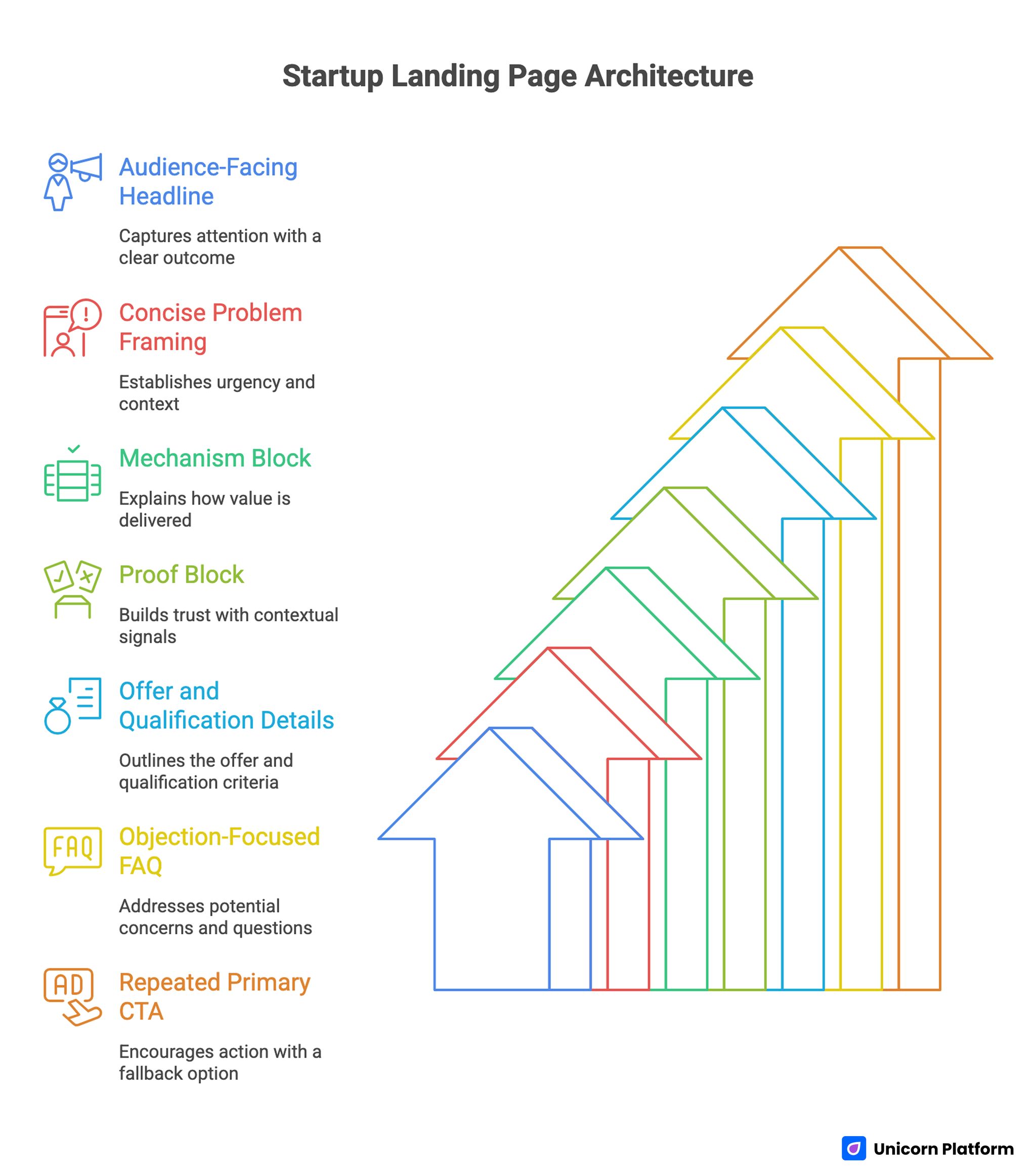

Startup Landing Page Architecture

A stable architecture creates the foundation for reliable testing. When layout changes every week, teams cannot tell which adjustments actually improved performance.

Use a conversion-first structure with a clear sequence, and keep this order stable for your first optimization cycle. A stable order produces cleaner baseline data for future tests.

- audience-facing headline with concrete outcome

- concise problem framing with urgency context

- mechanism block showing how value is delivered

- proof block with contextual trust signals

- offer and qualification details

- objection-focused FAQ

- repeated primary CTA with one low-friction fallback

This sequence mirrors how buyers reduce uncertainty. It also gives teams a clean map for analyzing drop-off and section-level improvements.
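If each section fires a visibility event when it scrolls into view, the fixed sequence makes drop-off straightforward to compute. A minimal sketch, assuming you already collect per-section reach counts; the section keys and numbers are illustrative only.

```python
from typing import Dict, List


def section_dropoff(reach: Dict[str, int], order: List[str]) -> Dict[str, float]:
    """Share of visitors lost between each section and the next one in `order`."""
    dropoff = {}
    for current, nxt in zip(order, order[1:]):
        reached = reach.get(current, 0)
        lost = 1 - reach.get(nxt, 0) / reached if reached else 0.0
        dropoff[f"{current} -> {nxt}"] = round(lost, 3)
    return dropoff


order = ["headline", "problem", "mechanism", "proof"]
reach = {"headline": 1000, "problem": 800, "mechanism": 600, "proof": 540}
print(section_dropoff(reach, order))
# {'headline -> problem': 0.2, 'problem -> mechanism': 0.25, 'mechanism -> proof': 0.1}
```

Because the order stays fixed, a jump in one transition points to a specific section rather than to the page as a whole.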

For teams that want a fast baseline before deep customization, this guide on simple startup landing templates is a practical reference point.

Balancing Content and Design Without Overloading Users

Landing page creation usually fails when teams choose one extreme. Some pages are visually polished but content-light, while others are content-heavy with weak hierarchy and poor readability.

The goal is balance: enough information to build confidence, enough design clarity to keep decisions easy. Users should feel guided, not overwhelmed.

A practical balance model uses layered depth. First screen provides relevance and action direction, mid-page provides mechanism and proof, and lower sections handle objections and qualification detail.

Design should support this flow through spacing, contrast, and section rhythm. Content should support it through explicit outcomes, concrete language, and predictable CTA continuity.

First-Screen Clarity as the Primary Conversion Lever

Most startup pages leak value in the first view because they open with broad promises and ambiguous actions. Visitors then scroll without confidence or leave quickly.

A strong first screen answers three questions immediately: who is this for, what changes for the user, and what is the next action?

Effective first screens usually include a focused audience cue, one concrete outcome, and one dominant CTA. Secondary actions can exist, but they should not compete visually or semantically with the main path.

When first-screen clarity improves, downstream performance often improves too, because users enter the rest of the page with clearer intent.

Messaging Standards That Raise Lead Quality

High-performing startup copy is not about clever wording. It is about reducing interpretation effort and aligning expectations before users submit.

Use three copy rules consistently across all major pages in the same funnel. Consistency makes review faster and outcomes easier to compare.

- claims include mechanism, not only aspiration

- CTA text describes a concrete next step

- high-impact claims have nearby evidence

Compare these versions to see how specificity changes clarity:

- Weak: "Grow faster with our platform."

- Stronger: "Launch campaign pages in one day, test one variable weekly, and improve qualified signup flow without engineering bottlenecks."

This change improves clarity and filters low-fit traffic earlier, which usually improves sales and onboarding efficiency.

Offer Framing and Qualification Design

Many startups hide all qualification context because they fear losing leads. In practice, full opacity often increases low-intent submissions and creates pipeline noise.

You do not need full pricing complexity on every landing page. You do need enough context to help users self-assess fit.

Useful qualification cues include the following, and each one reduces a different kind of uncertainty.

- entry pricing range or plan orientation

- what the first tier includes

- expected setup effort or timeline

- response-time expectations and support model

Qualification signals should feel helpful, not defensive. Their purpose is to reduce uncertainty and improve lead intent quality before form completion.

Proof Architecture for Early and Growth Stages

Startups may not have large enterprise case libraries, but they can still build strong trust through relevant proof. Proof quality depends on context, specificity, and placement.

Use a layered proof model tied to hesitation points. Early proof should confirm credibility quickly, middle proof should connect mechanism to outcomes, and late proof should reduce implementation risk.

Practical proof formats include contextual testimonials, short case snapshots, founder expertise markers, process transparency blocks, and realistic constraints. Constraints often increase trust because they signal honesty.

Place the strongest proof near the first major CTA. If trust content appears only near the bottom, many users will decide before seeing it.

When teams want to strengthen early traction behavior alongside proof quality, this startup promoter growth framework offers useful tactics.

CTA Strategy and Microcopy Consistency

Multiple CTA placements can help long pages, but one primary action type should dominate each page variant. Competing actions split attention and weaken conversion intent.

CTA wording should match buyer stage. Cold audiences often convert better with guided steps, while warm audiences can move through direct commitment actions.

Microcopy consistency matters across sections. If one CTA promises a walkthrough and another implies immediate signup for the same path, users interpret mismatch as risk.

Secondary actions should remain visibly secondary and function as momentum-preserving options, not alternative primary funnels. If secondary paths look equal to primary actions, completion rates often decline.
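A quick audit for microcopy mismatch is to list every CTA with the path it feeds and the action it promises, then flag any path that promises more than one thing. The path and action labels below are hypothetical examples.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def mismatched_paths(ctas: List[Tuple[str, str]]) -> Dict[str, Set[str]]:
    """ctas: (path, promised_action) pairs. Return paths whose CTAs promise different actions."""
    promises = defaultdict(set)
    for path, action in ctas:
        promises[path].add(action)
    return {path: actions for path, actions in promises.items() if len(actions) > 1}


ctas = [
    ("demo", "book a walkthrough"),  # hero button
    ("demo", "sign up now"),         # footer button on the same path
    ("trial", "start free trial"),
]
print(mismatched_paths(ctas))
```

Here the `demo` path is flagged because one button promises a walkthrough and another implies immediate signup.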

Form Friction Management for Lean Teams

First-touch forms should capture enough data for routing without increasing abandonment. Overly long forms at first interaction usually reduce both volume and quality.

Use progressive qualification. Keep initial forms short, then collect deeper details after confirmation through onboarding or follow-up flows.

A practical first-step form asks for name, work email, role, and one intent category. Additional fields should be justified by routing or qualification value.

Review form fields on a fixed cadence. Remove fields that do not influence decisions, and test friction changes with clear before-and-after metrics.
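The sketch below shows progressive qualification in miniature: the first-touch form carries the four fields named above, routing reads only the intent category, and a helper lists collected fields that no downstream step reads. The intent categories, queue names, and the extra `company_size` field are hypothetical.

```python
from typing import Dict, List, Set

FIRST_TOUCH_FIELDS = ["name", "work_email", "role", "intent"]

# Hypothetical intent categories mapped to the team that follows up.
ROUTES = {"demo": "sales", "trial": "onboarding", "partnership": "partnerships"}


def route_lead(lead: Dict[str, str]) -> str:
    """Route a first-touch submission by intent; unknown intents go to a shared inbox."""
    return ROUTES.get(lead.get("intent", ""), "general_inbox")


def unused_fields(form_fields: List[str], fields_read: Set[str]) -> List[str]:
    """Fields the form collects that no routing, follow-up, or qualification step reads."""
    return [f for f in form_fields if f not in fields_read]


lead = {"name": "Ada", "work_email": "ada@example.com", "role": "CTO", "intent": "demo"}
print(route_lead(lead))  # sales
print(unused_fields(FIRST_TOUCH_FIELDS + ["company_size"], {"name", "work_email", "role", "intent"}))
# ['company_size']
```

Any field that shows up in the `unused_fields` output is a candidate for removal or for a later onboarding step.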

Segment Variants Without Workflow Chaos

As channels diversify, one page rarely fits all intent patterns. Startup teams need source-aware variants, but they also need maintainability.

The best model is shared architecture with modular variation. Keep structure stable while adjusting headline framing, proof emphasis, and CTA language by source intent.

Document what changes by segment and what remains fixed. This prevents uncontrolled drift and preserves attribution quality across tests.
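One way to document what varies and what stays fixed is to encode it: a shared base page plus an allow-list of keys that a segment variant may override. The key names and copy are illustrative assumptions; the headline reuses the example from the messaging section.

```python
from typing import Dict

BASE_PAGE = {
    "sections": ("headline", "problem", "mechanism", "proof", "offer", "faq", "cta"),
    "headline": "Launch campaign pages in one day",
    "proof_emphasis": "speed",
    "cta_label": "Start free trial",
}

# Only these keys may change by traffic source; everything else stays fixed.
VARIABLE_KEYS = {"headline", "proof_emphasis", "cta_label"}


def build_variant(overrides: Dict[str, object]) -> Dict[str, object]:
    """Return a segment variant, refusing any override of fixed structure."""
    fixed = set(overrides) - VARIABLE_KEYS
    if fixed:
        raise ValueError(f"fixed keys cannot vary by segment: {sorted(fixed)}")
    return {**BASE_PAGE, **overrides}


community = build_variant({"cta_label": "Book a walkthrough", "proof_emphasis": "founder expertise"})
print(community["sections"] == BASE_PAGE["sections"])  # True
```

Raising an error on fixed keys turns "uncontrolled drift" into a visible review question instead of a silent change.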

Variant clarity also reduces internal conflict. Teams stop debating one universal page and start optimizing pages for defined contexts.

For teams shaping multi-path funnels around product complexity, this dashboard-focused startup page guide can help structure role-specific decision flows.

Collaboration and Governance for Growing Teams

Landing pages often degrade when ownership is ambiguous. Marketing edits copy, product reviews claims, founders change tone, and no one owns conversion integrity.

Define a lightweight ownership model before the page enters weekly iteration. Clear ownership reduces launch delays and decision conflicts.

- message owner for clarity and CTA continuity

- proof owner for trust relevance and freshness

- analytics owner for event quality and reporting consistency

- release owner for final QA and publishing decisions

Governance should be concise and operational. It should accelerate quality decisions, not create bureaucratic delay.

Require a measurable hypothesis for major changes. If a change has no testable purpose, it usually adds noise rather than learning.

Mobile and Accessibility as Release Gates

Startup traffic is often mobile-first, especially from founder social and community channels. Weak mobile readability, slow media, or awkward forms can erase strong strategy.

Accessibility issues produce similar losses. Poor keyboard flow, weak focus visibility, and confusing error states silently reduce conversion quality.

Use a weekly release gate and treat it as mandatory before any major traffic push. This makes quality control consistent even during high-pressure launch weeks.

- first-screen readability and CTA visibility on mobile

- form completion and error recovery behavior

- keyboard navigation order and focus states

- media load and rendering checks on common devices

- trust block visibility before deep scroll

These checks are fast to run and frequently have more impact than additional creative polish.
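A release gate can be as simple as a fixed checklist where any check that failed or was not run blocks the publish. This sketch mirrors the five checks above; the check identifiers are hypothetical names, and how each check is actually performed (manual QA, device lab, automated audit) is left open.

```python
from typing import Dict, List

RELEASE_GATE = [
    "mobile_first_screen_and_cta",
    "form_completion_and_error_recovery",
    "keyboard_order_and_focus_states",
    "media_load_on_common_devices",
    "trust_block_before_deep_scroll",
]


def gate_failures(results: Dict[str, bool]) -> List[str]:
    """Checks that failed or were never run; an empty list means the release may ship."""
    return [check for check in RELEASE_GATE if not results.get(check, False)]


results = {check: True for check in RELEASE_GATE}
results["keyboard_order_and_focus_states"] = False
print(gate_failures(results))  # ['keyboard_order_and_focus_states']
```

Treating a missing result as a failure is a deliberate choice: it keeps the gate mandatory even during rushed launch weeks.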

SEO Support Architecture for Startup Landing Programs

Conversion pages are strongest when supported by clear intent content, not when isolated from the rest of the site. Search performance and conversion quality usually improve together when content architecture is intentional.

Use a three-level content system and map each planned article to one intent stage. Intent mapping prevents overlapping pages that compete with each other.

- discovery content for broad problem exploration

- comparison content for solution evaluation

- conversion content for action and qualification
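Intent mapping can be checked mechanically: tag each planned page with a topic and one stage, then flag any topic and stage pair claimed by more than one page. The URLs and topics below are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

STAGES = {"discovery", "comparison", "conversion"}


def competing_pages(plan: List[Tuple[str, str, str]]) -> Dict[Tuple[str, str], List[str]]:
    """plan: (url, topic, stage) rows. Return (topic, stage) pairs claimed by more than one page."""
    claims = defaultdict(list)
    for url, topic, stage in plan:
        if stage not in STAGES:
            raise ValueError(f"{url}: unknown intent stage {stage!r}")
        claims[(topic, stage)].append(url)
    return {key: urls for key, urls in claims.items() if len(urls) > 1}


plan = [
    ("/blog/why-landing-pages-fail", "landing pages", "discovery"),
    ("/blog/landing-page-mistakes", "landing pages", "discovery"),
    ("/compare/page-builders", "landing pages", "comparison"),
]
print(competing_pages(plan))
```

The two discovery articles are flagged as competitors, while the comparison page covers the same topic at a different stage and passes.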

Internal links should be contextual and decision-driven. Each link should help users move to relevant depth exactly when they need it.

Distribute links naturally across sections instead of clustering them in one block. This improves reader flow and topical cohesion.

For teams building initial content operations and launch cadence, this practical guide on startup landing creation workflows is useful.

Analytics Framework for Reliable Optimization

Without measurement discipline, teams optimize for vanity outcomes. Click-through rises while lead quality or activation declines, and no one can explain why.

Create a lightweight analytics standard before scaling experiments, then keep it visible in weekly review docs. Visibility improves consistency when multiple contributors ship changes.

- one primary metric per page objective

- one secondary quality metric per test

- standardized event naming across variants

- fixed source tagging taxonomy

- pre-launch event validation checklist
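The naming, tagging, and validation items above can be combined into one pre-launch check per event. This is a minimal sketch; the snake_case naming rule, the source taxonomy, and the `variant` field are assumptions to replace with your own standard.

```python
import re
from typing import Dict, List

EVENT_NAME = re.compile(r"^[a-z]+(_[a-z]+)*$")  # hypothetical snake_case convention
SOURCES = {"founder_social", "community", "paid_search", "newsletter"}  # hypothetical taxonomy


def validate_event(event: Dict[str, str]) -> List[str]:
    """Pre-launch check for one tracked event; returns a list of problems (empty means valid)."""
    problems = []
    name = event.get("name", "")
    if not EVENT_NAME.match(name):
        problems.append(f"non-standard event name: {name!r}")
    if event.get("source") not in SOURCES:
        problems.append(f"unknown or missing source tag: {event.get('source')!r}")
    if not event.get("variant"):
        problems.append("missing variant id")
    return problems


print(validate_event({"name": "cta_click", "source": "community", "variant": "b"}))  # []
print(validate_event({"name": "CTA Click", "source": "tiktok"}))  # three problems
```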

Track downstream outcomes such as qualified lead rates, meeting quality, or activation behavior. If downstream quality drops, top-funnel improvements should be treated cautiously.
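A simple downstream signal is the qualified-lead rate per source. The sketch assumes each lead record carries its source tag and a `qualified` flag set later by sales or the CRM; both field names are hypothetical.

```python
from typing import Dict, List


def qualified_rate_by_source(leads: List[Dict]) -> Dict[str, float]:
    """Share of leads per source that passed qualification."""
    totals: Dict[str, int] = {}
    qualified: Dict[str, int] = {}
    for lead in leads:
        source = lead["source"]
        totals[source] = totals.get(source, 0) + 1
        qualified[source] = qualified.get(source, 0) + int(bool(lead["qualified"]))
    return {source: qualified[source] / totals[source] for source in totals}


leads = [
    {"source": "community", "qualified": True},
    {"source": "community", "qualified": False},
    {"source": "paid_search", "qualified": False},
    {"source": "paid_search", "qualified": False},
]
print(qualified_rate_by_source(leads))  # {'community': 0.5, 'paid_search': 0.0}
```

Comparing this rate before and after a test shows whether a conversion lift came with a quality drop.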

Reliable analytics is what turns weekly experiments into a compounding advantage.

Experiment System That Produces Clear Learning

Many startup teams test too many things at once and lose attribution clarity. Controlled testing produces better decisions with less effort.

Run one major variable per weekly cycle. Keep other high-impact elements stable so results are interpretable.

High-value early variables include the following, and teams should rotate through them systematically. Random variable selection often produces repetitive tests and weak learning.

- headline specificity

- primary CTA wording

- proof placement around first action

- form friction level

- qualification cue placement
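To keep each weekly cycle to one major variable, compare the control and variant on these elements before launch. A minimal sketch; the element keys and page values are hypothetical.

```python
from typing import Dict, List

HIGH_IMPACT = ["headline", "cta_label", "proof_position", "form_fields", "qualification_position"]


def changed_variables(control: Dict, variant: Dict) -> List[str]:
    """High-impact elements that differ between control and variant."""
    return [key for key in HIGH_IMPACT if control.get(key) != variant.get(key)]


control = {"headline": "Grow faster with our platform.", "cta_label": "Start free trial", "proof_position": "after_hero"}
variant = dict(control, headline="Launch campaign pages in one day")
print(changed_variables(control, variant))  # ['headline']
```

A test is interpretable under this rule only when the list has exactly one entry.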

Document every test with hypothesis, change, primary metric, secondary metric, and decision. The log becomes a reusable growth asset over time.
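A test log entry can carry the five fields above plus a simple keep-or-revert rule. The rule here (keep only if the primary metric rose and the quality metric fell by no more than 5%) is an assumed example, not a standard, and it ignores statistical significance, which a real decision should also weigh.

```python
from dataclasses import dataclass


@dataclass
class ExperimentRecord:
    hypothesis: str
    change: str
    primary_metric: str
    secondary_metric: str
    primary_lift: float      # relative change vs. control, e.g. 0.08 means +8%
    secondary_change: float  # relative change in the quality metric
    decision: str = ""

    def decide(self, max_quality_drop: float = 0.05) -> str:
        """Keep only if the primary metric rose and quality fell no more than the tolerance."""
        keep = self.primary_lift > 0 and self.secondary_change >= -max_quality_drop
        self.decision = "keep" if keep else "revert"
        return self.decision


record = ExperimentRecord(
    hypothesis="A concrete headline raises demo bookings from cold traffic",
    change="Headline: generic promise replaced with a specific outcome",
    primary_metric="demo booking rate",
    secondary_metric="qualified lead rate",
    primary_lift=0.08,
    secondary_change=-0.02,
)
print(record.decide())  # keep
```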

30-Day Startup Execution Plan

Week 1: baseline and instrumentation

Publish one focused page with one primary action path, one trust block near the first decision point, and validated tracking events. Confirm mobile and accessibility baseline before campaign launch.

Week 2: first-screen and CTA refinement

Test one headline variant and one CTA variant while keeping architecture stable. Evaluate using conversion rate plus lead-quality checks.

Week 3: proof and form improvements

Refresh proof with contextual outcomes, simplify first-touch form flow, and update FAQ based on live objections. Remove decorative sections that do not improve decision confidence.

Week 4: source-specific variant rollout

Launch one channel-specific variant and compare performance against baseline by source and device. Record keep-or-revert outcomes and update template standards.

This plan keeps execution realistic for lean teams and preserves measurement integrity.

60-Day Compounding Program

Days 1-20 should stabilize architecture and fix usability friction. Days 21-40 should run controlled experiments with consistent tracking and documented decisions.

Days 41-60 should consolidate winning components into reusable modules, formalize ownership, and archive weak variants that create reporting noise. Consolidation is what turns short-term wins into repeatable operating standards.

At the end of 60 days, the goal is a repeatable operating system, not a collection of disconnected edits. Repeatability is what allows teams to scale without rebuilding every month.

90-Day Scale Readiness Model

Before increasing acquisition spend, validate stability across message, trust, routing, and measurement. Scaling unstable pages usually amplifies inefficiency faster than growth.

Run a scale readiness check across these categories and document outcomes in one shared report. Shared visibility keeps scaling decisions grounded in evidence.

- message-source match and first-screen clarity

- proof freshness and relevance by segment

- form reliability and response expectations

- lead-quality stability by source

- tracking integrity and taxonomy consistency

If multiple categories are unstable, delay scaling and fix foundations first. This discipline protects both budget and team confidence.
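The readiness rule can be written down so every review applies it the same way. In this sketch the category keys are hypothetical labels for the five checks above; "delay" for two or more unstable categories follows the rule in the text, while returning "review" for a single unstable category is an added assumption.

```python
from typing import Dict

READINESS = [
    "message_source_match",
    "proof_freshness",
    "form_reliability",
    "lead_quality_stability",
    "tracking_integrity",
]


def scale_decision(stable: Dict[str, bool]) -> str:
    """'delay' if two or more categories are unstable, 'review' for one, else 'scale'."""
    unstable = [c for c in READINESS if not stable.get(c, False)]
    if len(unstable) >= 2:
        return "delay: fix " + ", ".join(unstable)
    if unstable:
        return "review: " + unstable[0]
    return "scale"


status = {c: True for c in READINESS}
status["tracking_integrity"] = False
print(scale_decision(status))  # review: tracking_integrity
```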

For teams still using lean budget stacks, this startup launch model with free website makers gives practical context for low-cost execution planning.

Section-Level Implementation Library

A section library helps teams improve performance without rewriting full pages each cycle. Controlled section updates preserve stability while enabling fast learning.

Hero section framework

A reliable hero combines audience cue, outcome statement, and clear action language. Keep wording concrete and avoid broad slogans that require interpretation.

Test hero changes carefully. Compare one framing change at a time while keeping CTA and proof constant.

Mechanism section framework

Mechanism sections should explain input, process, and expected result in simple language. If complexity is high, add one lightweight visual or structured list.

Do not overload this section with full product documentation. Its role is confidence-building, not exhaustive explanation.

Proof section framework

Use proof that matches audience and stage. Early-stage audiences need credibility confirmation, while decision-stage audiences need implementation confidence.

Refresh proof on a fixed schedule and remove stale references. Fresh, relevant proof usually outperforms a large legacy proof library.

FAQ framework

Build FAQ from real objections in sales, support, and onboarding conversations. Group questions by fit, effort, timeline, risk, and support expectations.

Keep answers short, specific, and action-oriented. If deeper detail is needed, link in context to the relevant supporting pages.

Follow-up continuity framework

Landing page success depends on what happens after form submission. Confirmation and follow-up communication should reinforce on-page promise language.

A simple follow-up sequence with timing clarity often improves activation quality even without increasing traffic. Continuity between page promise and follow-up messages is often more important than adding new page sections.

12-Week Operating Calendar for Startup Page Teams

A 12-week calendar gives enough time to stabilize baseline quality, run meaningful tests, and consolidate winning standards. Shorter cycles often end before compounding effects become visible.

Weeks 1-2: baseline stabilization

Focus on structure clarity, event integrity, mobile readability, and form reliability. Avoid large design changes during this phase.

Weeks 3-4: message and CTA testing

Run controlled tests on headline and CTA framing. Use both conversion and quality metrics to pick winners.

Weeks 5-6: proof and qualification upgrades

Improve proof specificity and refine qualification cues near core actions. Keep architecture stable to preserve attribution clarity.

Weeks 7-8: channel variant expansion

Deploy one additional source-specific variant. Document hypotheses, outcomes, and section-level insights in shared logs.

Weeks 9-10: form and follow-up optimization

Reduce first-touch friction and improve post-submit continuity. Track downstream quality changes, not only top-funnel movement.

Weeks 11-12: consolidation and scale gating

Archive underperforming variants, standardize winning modules, and run a formal readiness review before budget expansion. This keeps reporting clean and prevents weak patterns from returning.

This calendar keeps teams focused on compounding improvement rather than constant redesign churn. It also improves onboarding because new contributors can follow an established cycle.

Upgrade Triggers Beyond Free Plans

Free-first is often the right starting point, but teams should define upgrade triggers before friction becomes costly. Trigger-based decisions are usually better than reactive migrations.

Useful triggers include recurring publish delays, tracking limits that harm decision quality, collaboration bottlenecks, and repeated QA failures during launch windows. If these signals appear together, operating cost is usually rising quietly.

A practical rule is to review upgrades when three or more friction signals persist through two operating cycles. This threshold prevents premature changes while still protecting growth momentum.
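The threshold rule above translates directly into a check over recent operating cycles. The signal names are hypothetical labels for the triggers listed in the previous paragraph.

```python
from typing import List, Set


def should_review_upgrade(cycles: List[Set[str]], min_signals: int = 3, min_cycles: int = 2) -> bool:
    """cycles: friction signals seen in each operating cycle, oldest first.
    True when at least `min_signals` signals persisted through the last `min_cycles` cycles."""
    if len(cycles) < min_cycles:
        return False
    persistent = set.intersection(*cycles[-min_cycles:])
    return len(persistent) >= min_signals


cycles = [
    {"publish_delays", "tracking_limits", "collaboration_bottlenecks"},
    {"publish_delays", "tracking_limits", "collaboration_bottlenecks", "qa_failures"},
]
print(should_review_upgrade(cycles))  # True
```

Only signals present in every recent cycle count, so a one-off QA failure does not trigger a premature migration.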

Migration Playbook for Scaling Startup Teams

When a startup outgrows its initial workflow, migration risk becomes a major concern. Poorly planned migrations can erase attribution history and interrupt active campaign performance.

The safest path is phased transition with metric continuity. Keep structure stable while moving systems in controlled steps so decision quality remains intact.

Phase 1: baseline and dependency audit

Before migrating anything, map your active pages, traffic sources, event taxonomy, and follow-up flows. This audit gives teams a clear baseline for validating parity after each move.

Include both conversion and quality metrics in the audit snapshot. Teams that track only top-funnel numbers often miss quality regressions during migration windows.

Phase 2: template parity and tracking parity

Recreate one high-impact template first and verify that key conversion paths behave identically. Keep old and new versions in parallel for a short validation window.

Tracking parity should be validated separately from visual parity. Event consistency is the foundation for trustworthy post-migration decisions.
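A basic tracking-parity check compares event counts from the old and new templates over the same validation window. This sketch assumes both versions receive comparable traffic during that window; the event names and the 10% tolerance are illustrative assumptions.

```python
from typing import Dict


def parity_gaps(old: Dict[str, int], new: Dict[str, int], tolerance: float = 0.1) -> Dict[str, float]:
    """Events whose counts differ by more than `tolerance`, relative to the larger count.
    An event missing on one side shows up as a gap of 1.0."""
    gaps = {}
    for event in sorted(set(old) | set(new)):
        a, b = old.get(event, 0), new.get(event, 0)
        base = max(a, b)
        gap = abs(a - b) / base if base else 0.0
        if gap > tolerance:
            gaps[event] = round(gap, 3)
    return gaps


old = {"cta_click": 100, "form_submit": 40}
new = {"cta_click": 95}  # form_submit never fires on the new template
print(parity_gaps(old, new))  # {'form_submit': 1.0}
```

A silently missing event like `form_submit` is exactly the kind of regression that visual parity checks miss.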

Phase 3: staged traffic rollout

Shift traffic in controlled increments by source rather than moving all channels at once. This makes diagnosis easier if conversion or lead quality declines.

Start with the source that has the clearest historical baseline. Once parity is confirmed, expand gradually to additional channels.

Phase 4: quality stabilization and deprecation

After rollout, compare new and old performance at both conversion and qualification levels. If quality is stable, deprecate legacy pages and remove redundant variants.

Deprecation discipline matters because duplicate legacy pages create reporting noise. Clean environments improve future testing speed and reduce operational confusion.

Phase 5: documentation and team enablement

Finalize migration with updated playbooks, ownership maps, and QA checklists. Documentation should explain not only what changed, but why each standard now exists.

A well-documented migration turns a risky transition into a long-term operational upgrade. It also shortens onboarding time for new contributors joining growth workflows.

When upgrading, migrate in phases. Preserve architecture and tracking parity first, then expand functionality once performance stability is confirmed.

Founder Priority Framework for Weekly Decisions

Founders face competing demands every week. A simple priority model keeps landing-page work tied to measurable outcomes.

Use this sequence as a weekly default and adjust only when a clear blocker appears. A default order reduces decision fatigue for lean teams.

- fix reliability issues that block conversion

- improve first-screen clarity for highest-volume source

- strengthen proof near first major action

- run one controlled high-impact test

- document outcomes and update reusable standards

This order protects focus and prevents low-impact design changes from consuming limited team bandwidth. Over time, this discipline creates more predictable gains from the same effort.

Common Mistakes and Fast Fixes

Mistake 1: Multiple equal-priority actions

Competing CTAs reduce decision confidence. Keep one primary action and demote secondary paths.

Mistake 2: Abstract claims without mechanism

Vague messaging increases interpretation burden. Rewrite core claims with concrete workflow context.

Mistake 3: Proof placed late in the page

Users may decide before they see trust signals. Move high-relevance proof near first major actions.

Mistake 4: Long first-touch forms

Early friction reduces completions and can lower quality. Keep initial forms concise and qualify deeper later.

Mistake 5: No release gate for mobile and accessibility

Silent interaction failures erode conversion quality. Add fixed QA checks before every major publish.

Mistake 6: Unstructured testing

Without clear hypotheses and logs, teams repeat weak experiments. Use one-variable cycles with documented decisions.

Mistake 7: Scaling before quality stability

More spend amplifies weak systems. Scale only after lead-quality and reliability signals stabilize.

FAQ: Startup Landing Page Creation

How long should a startup landing page be?

It should be long enough to resolve decision risk for your target audience. Structure and relevance matter more than arbitrary length targets.

What should we optimize first on a new page?

Start with first-screen clarity and primary CTA alignment. These typically deliver the fastest reliable improvements.

Can no-code workflows support serious startup growth?

Yes, when teams enforce consistent structure, measurement discipline, and weekly iteration standards. Tool cost has less impact than process quality in early growth stages.

How many variants should early teams run?

Start with one baseline and one source-specific variant. Expand only after tracking and QA are stable.

Should startup pages include pricing information?

Include enough context for self-qualification even if full pricing detail is not appropriate for the page. Minimal transparency usually outperforms complete uncertainty.

How often should trust content be refreshed?

At least monthly, and sooner when product, offer, or segment focus changes. Proof relevance should follow campaign shifts, not fixed calendars alone.

Why are accessibility checks important for conversion?

They reduce friction for all users and prevent silent drop-off in critical interaction points. Accessibility fixes often improve mobile interaction quality as well.

Which metric matters most after conversion rate?

Lead quality by source is usually the most useful secondary indicator. It helps teams distinguish real funnel improvement from vanity movement.

How often should lean teams run experiments?

One major experiment per week is a practical cadence for most startups. This pace balances learning speed with implementation stability.

When is traffic scaling justified?

When message consistency, form reliability, and downstream lead-quality indicators remain stable through at least two review cycles. Stability gates reduce the risk of scaling short-lived improvements.

Final Takeaway

Landing page creation for startups is not a one-time design task. It is an operating discipline built on clear structure, relevant proof, controlled testing, and measurable decision quality.

With Unicorn Platform, lean teams can run this discipline quickly through reusable sections and rapid iteration cycles. When process quality is strong, startup pages become dependable growth assets instead of fragile campaign artifacts.

For teams that want an additional rapid execution reference, this launch-your-site-in-3-steps workflow is a useful baseline.