Table of Contents

- The Workflow-Fit Model

- Migration Architecture: Move in Controlled Phases

- Common Failure Modes and Fixes

- 30-Day Adoption Sprint

- FAQ

Most teams do not switch platforms because they want new software. They switch because their current setup creates operational drag: page launches take too long, experiments stall in handoffs, and conversion improvements get buried under maintenance work. The stack may still function technically, but it stops matching how the team needs to publish and learn.

A stronger decision process starts with workflow fit, not feature lists. You need a platform that fits who edits pages, how often campaigns launch, how QA is enforced, and how updates are measured after release. If those operational requirements are ignored, migration only relocates the same bottlenecks.

This guide gives a practical model for selecting and migrating to a new platform while protecting conversion quality. It is built for lean growth teams, founders, and operators who need faster execution with clearer governance.


Quick Decision Snapshot

Use this short filter before deeper evaluation. If two or more points below are true, your current stack is likely constraining growth. That usually means operational friction is already affecting outcomes:

- Publishing a new campaign page takes longer than one sprint.

- Non-technical editors cannot safely update high-impact sections.

- Mobile QA is inconsistent across releases.

- Form behavior and analytics break after routine edits.

- Message tests are delayed by design or development bottlenecks.

- Core page quality varies based on who publishes.

Teams that fail this filter should move into structured evaluation rather than incremental patching. Small tactical fixes rarely resolve a systemic workflow mismatch.
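The two-or-more rule above is simple enough to encode in a shared checklist. The sketch below assumes each bullet becomes a yes/no answer stored under a hypothetical signal name; the names and the function are illustrative, not part of any tool.

```python
# Hypothetical signal names, one per bullet in the Quick Decision Snapshot.
SIGNALS = (
    "launch_takes_longer_than_one_sprint",
    "editors_cannot_safely_update",
    "mobile_qa_inconsistent",
    "forms_or_analytics_break_after_edits",
    "tests_delayed_by_design_or_dev",
    "quality_varies_by_publisher",
)


def needs_structured_evaluation(answers: dict[str, bool], threshold: int = 2) -> bool:
    """Return True when at least `threshold` snapshot signals are true."""
    unknown = set(answers) - set(SIGNALS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    # Missing answers count as False, so a partially filled checklist still works.
    return sum(answers.get(name, False) for name in SIGNALS) >= threshold
```

For example, answering True only for `mobile_qa_inconsistent` and `tests_delayed_by_design_or_dev` already meets the threshold and points toward structured evaluation.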

Why Platform Changes Usually Happen Late

Most organizations tolerate friction until it becomes visible in revenue. Small inefficiencies accumulate quietly: a delayed launch here, a missed experiment there, one more temporary workaround in the page flow. By the time leadership asks for a platform review, performance debt is already high.

Late switching also happens because teams evaluate tools by demos instead of operating reality. Demos show polished capabilities, but they rarely reveal what daily publishing feels like under deadline pressure. The key question is not whether a platform can do something once. The key question is whether your team can execute reliably every week with predictable quality.

A useful way to avoid late reactions is to run quarterly platform health checks. Those checks should focus on cycle time, QA stability, and conversion movement from recent releases. If progress slows while effort rises, the stack is likely the limiting factor.
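A quarterly health check can be reduced to a quarter-over-quarter comparison. This is a minimal sketch, assuming your team already records median brief-to-live time, publishing effort hours, release counts, post-publish fixes, and a conversion rate for recent releases; the field and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class QuarterSnapshot:
    median_cycle_days: float  # brief-to-live time for pages shipped in the quarter
    effort_hours: float       # team hours spent on publishing and fixes
    releases: int             # number of page releases in the quarter
    qa_regressions: int       # releases that needed a post-publish fix
    conversion_rate: float    # conversion rate across recently released pages


def health_check(prev: QuarterSnapshot, curr: QuarterSnapshot) -> dict[str, bool]:
    """Compare two quarters on cycle time, effort, QA stability, and conversion movement."""
    def regression_rate(q: QuarterSnapshot) -> float:
        return q.qa_regressions / q.releases if q.releases else 0.0

    return {
        "cycle_time_up": curr.median_cycle_days > prev.median_cycle_days,
        "effort_up": curr.effort_hours > prev.effort_hours,
        "qa_less_stable": regression_rate(curr) > regression_rate(prev),
        "conversion_down": curr.conversion_rate < prev.conversion_rate,
    }


def stack_likely_limiting(report: dict[str, bool]) -> bool:
    # The rule from the text: progress slows while effort rises.
    return report["cycle_time_up"] and report["effort_up"]
```

The QA and conversion flags are reported separately rather than folded into the verdict, so the review can discuss them without overriding the simple slowdown-versus-effort rule.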

The Workflow-Fit Model

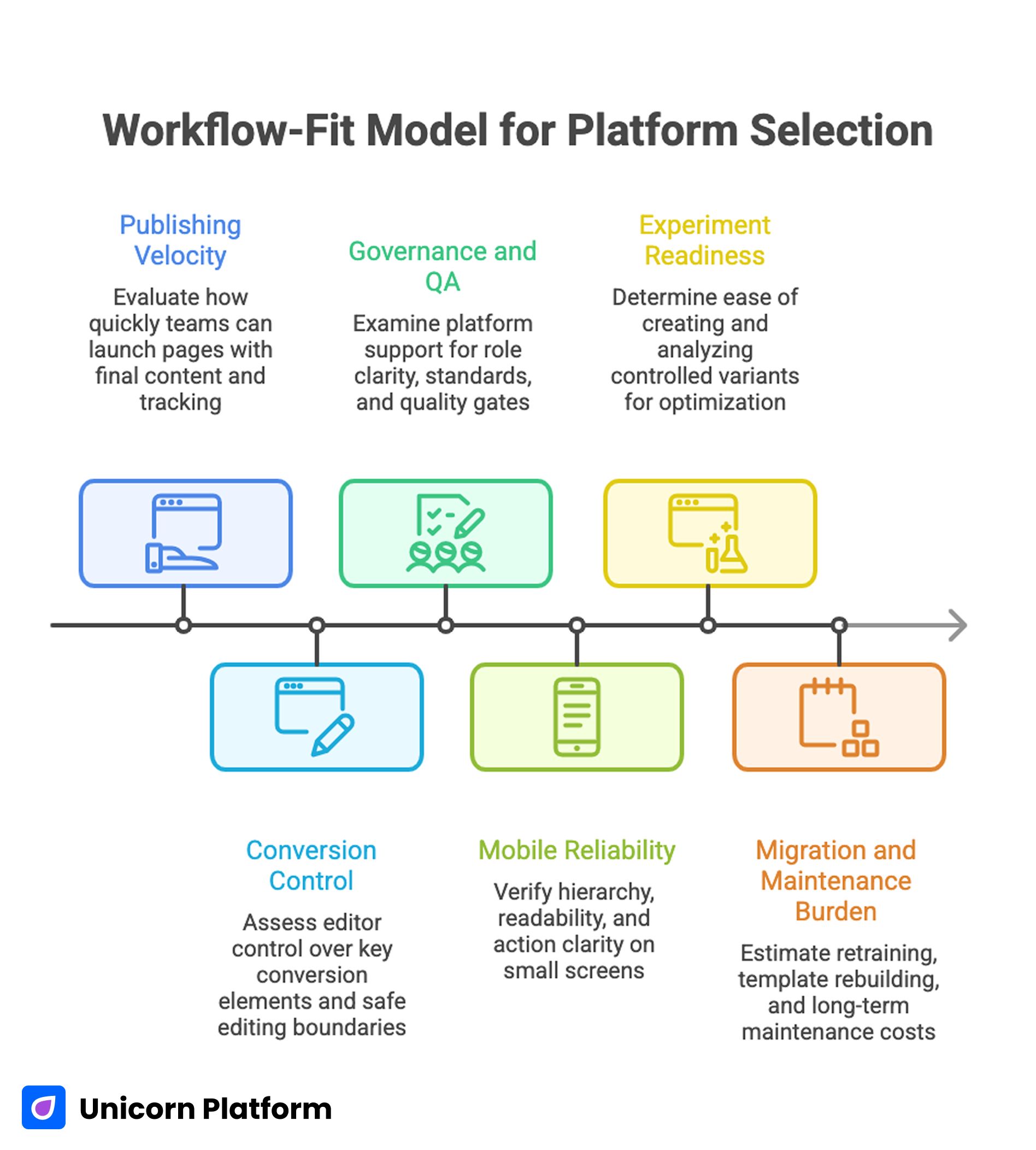

Workflow-Fit Model for Platform Selection

Evaluate each platform through six lenses. This creates a decision based on operating requirements rather than preference.

1) Publishing Velocity

Measure real launch speed, not setup speed. Ask how quickly your team can go from brief to live page with final copy, design consistency, and validated tracking.

Velocity should include revision rounds because most high-impact pages are not finished in one draft. If each revision still requires heavy technical intervention, velocity gains are superficial.

2) Conversion Control

Editors need direct control over high-leverage conversion components: headline hierarchy, trust block placement, CTA sequencing, and form depth. If those components are locked behind developer cycles, growth experiments become expensive.

Conversion control also means safe editing boundaries. Teams should be able to improve message clarity without risking structural breakage across the page.

3) Governance and QA

Publishing speed without governance creates inconsistency. A good platform supports role clarity, reusable section standards, and repeatable pre-publish checks.

Governance should prevent two extremes: uncontrolled edits and bottlenecked approvals. The right middle ground is fast collaboration with explicit quality gates.

4) Mobile Reliability

Desktop performance is not enough. Mobile first impressions often decide whether visitors continue. Your platform must preserve hierarchy, readability, and action clarity under small-screen constraints.

Mobile reliability includes form ergonomics, tap-target comfort, and predictable rendering after content edits. It also requires consistent checks after each revision, not only before major launches.

5) Experiment Readiness

Teams need to test message and flow assumptions continuously. If launching controlled variants is slow or risky, optimization stalls.

Experiment readiness means variant creation is simple, analytics are stable, and outcomes can be interpreted without ambiguity. Teams should be able to launch and read tests without ad-hoc engineering intervention.

6) Migration and Maintenance Burden

Switching cost is not only data transfer. Real cost includes retraining, template rebuilding, QA labor, and temporary performance volatility.

A good target platform reduces long-term maintenance burden so teams spend less time fixing pages and more time improving results. Long-term efficiency is usually the largest source of migration ROI.

Scoring Matrix You Can Use Immediately

Assign weights to each lens based on your business model. For example, a campaign-heavy SaaS team may weight publishing velocity and experiment readiness higher than a content-led media business would.

A practical scoring setup works best when the process is documented in one shared sheet. Use the sequence below:

- Set lens weights that total 100 points.

- Score each platform from 1 to 5 on each lens.

- Multiply each score by that lens's weight.

- Compare weighted totals.

- Validate top option with a live pilot page.

Do not skip the pilot. Spreadsheet scoring reduces bias, but real workflow testing reveals hidden friction that scoring alone cannot capture.
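The scoring sequence above translates directly into a few lines of code. The sketch below uses hypothetical weights for a campaign-heavy team (they total 100, so the maximum weighted total is 500); the lens keys, weights, and platform names are illustrative assumptions, and the top result still needs a pilot page.

```python
# Hypothetical weights for a campaign-heavy SaaS team; they must total 100.
WEIGHTS = {
    "publishing_velocity": 25,
    "conversion_control": 20,
    "governance_qa": 15,
    "mobile_reliability": 10,
    "experiment_readiness": 20,
    "migration_maintenance": 10,
}


def weighted_total(scores: dict[str, int], weights: dict[str, int] = WEIGHTS) -> int:
    """Multiply each 1-5 lens score by its weight and sum the results."""
    if sum(weights.values()) != 100:
        raise ValueError("lens weights must total 100")
    if set(scores) != set(weights):
        raise ValueError("score every lens exactly once")
    for lens, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{lens}: score must be 1-5, got {score}")
    return sum(scores[lens] * weights[lens] for lens in weights)


def rank(platforms: dict[str, dict[str, int]]) -> list[tuple[str, int]]:
    """Return (platform, weighted total) pairs, highest total first."""
    totals = [(name, weighted_total(scores)) for name, scores in platforms.items()]
    return sorted(totals, key=lambda item: item[1], reverse=True)
```

Validating inputs inside `weighted_total` keeps a mistyped lens or an out-of-range score from silently distorting the comparison in the shared sheet.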

When a Wix Replacement Path Makes Sense

A Wix replacement path is usually worth evaluating when teams outgrow template-first workflows and need tighter conversion control with faster iteration loops. The most common trigger is not design limitation by itself. It is the combination of editing constraints, handoff friction, and uneven quality across releases.

In that situation, this Wix replacement path is a practical reference for teams that want faster page cycles while keeping governance intact. It is especially relevant when growth and content teams need to work directly in the same publishing flow.

Before switching, validate three conditions. These checks reduce rollout volatility in the first migration phase:

- You have a defined section system, not ad-hoc page assembly.

- QA ownership is explicit for mobile, forms, and tracking.

- Measurement priorities are agreed before migration starts.

If these conditions are missing, migration will still happen, but performance stability will be harder to protect. Addressing them early prevents expensive rework in later batches.

When an Unbounce Replacement Path Is the Better Move

An Unbounce replacement path becomes relevant when campaign teams need tighter integration between acquisition pages and the broader site journey. The challenge is often architectural fragmentation: campaign pages perform in isolation, but continuity breaks after the first conversion step.

This Unbounce replacement framework is useful when teams need unified standards across campaign landing pages, service pages, and nurture destinations. The goal is not to remove campaign agility. The goal is to keep campaign agility while reducing cross-system friction.

Use this trigger list as a fast qualification step. Multiple matches usually indicate structural fragmentation:

- Campaign pages launch fast, but follow-up pages lag.

- Design language diverges between paid and organic journeys.

- Tracking and attribution become inconsistent across systems.

- Post-conversion routing requires manual workarounds.

If these conditions persist, a more unified page stack usually improves both execution speed and lifecycle conversion quality. It also simplifies ownership between acquisition and lifecycle teams.

Speed Is Valuable Only With Standards

“Build quickly” messaging is attractive, but speed alone does not produce better outcomes. Fast publishing without structure usually creates cleanup work: weak message hierarchy, misplaced trust blocks, inconsistent CTA behavior, and missed QA checks.

This is why teams should pair production speed with a documented release standard. A short standard can still be strict if it covers section logic, conversion flow, mobile QA, and analytics verification.

For teams building this discipline from scratch, this guide on creating a free site quickly with practical builders is useful as a starting reference. Treat it as a baseline for execution speed, then add your own QA rules for production quality.

Migration Architecture: Move in Controlled Phases

Large “all-at-once” migrations increase risk and reduce confidence. A phased architecture gives better control over quality, measurement, and stakeholder alignment.

Phase 1: Inventory and Intent Mapping

Audit existing pages by business role, intent stage, and performance contribution. Separate critical assets from low-impact assets so migration order reflects business risk.

For each critical page, document the core decision context. Keep this brief and practical so teams can maintain it across sprints:

- primary audience segment.

- page job and desired action.

- top objections that must be resolved.

- tracking events that cannot break.

This inventory becomes your migration source of truth. It prevents batch sequencing from being driven by guesswork.
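One way to keep the inventory machine-readable is a record per page plus a simple ordering rule. This sketch assumes monthly conversions are an acceptable proxy for performance contribution and uses a hypothetical 80% cumulative-share cutoff to separate critical from low-impact pages; both are illustrative choices, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class PageRecord:
    url: str
    audience_segment: str
    page_job: str                # the desired action, e.g. "book a demo"
    top_objections: list[str]
    protected_events: list[str]  # tracking events that cannot break
    monthly_conversions: int     # proxy for performance contribution (assumption)


def migration_order(pages: list[PageRecord], critical_share: float = 0.8):
    """Split pages into (critical, low_impact), each sorted by contribution.

    A page is critical if the pages ranked above it cover less than
    `critical_share` of total conversions.
    """
    ranked = sorted(pages, key=lambda p: p.monthly_conversions, reverse=True)
    total = sum(p.monthly_conversions for p in ranked) or 1
    critical, low_impact, running = [], [], 0
    for page in ranked:
        (critical if running / total < critical_share else low_impact).append(page)
        running += page.monthly_conversions
    return critical, low_impact
```

Critical pages migrate first; their `protected_events` lists become the tracking checks for the pilot and every later batch.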

Phase 2: Pilot Rebuild

Select one high-impact page for pilot rebuild. Recreate flow logic, not just visual layout. Keep conversion events and form behavior traceable from day one.

Run side-by-side validation for a limited period. Compare message clarity signals, form quality, and post-conversion progression. Do not treat the pilot as a design showcase; treat it as an operational stress test.
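For rate-style signals such as form completion or step-two progression, a two-proportion z-test is one common way to check whether the pilot differs from the legacy page by more than noise. This is a minimal sketch under the assumption that visitors in each version are independent and comparable; a side-by-side run that is not randomized can still be biased, so treat the p-value as a guide rather than proof.

```python
import math


def compare_rates(legacy_conv: int, legacy_n: int, pilot_conv: int, pilot_n: int):
    """Two-proportion z-test.

    Returns (legacy_rate, pilot_rate, z, two_sided_p_value).
    """
    p1 = legacy_conv / legacy_n
    p2 = pilot_conv / pilot_n
    pooled = (legacy_conv + pilot_conv) / (legacy_n + pilot_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / legacy_n + 1 / pilot_n))
    if se == 0:
        # Both versions converted at 0% or 100%: no measurable difference.
        return p1, p2, 0.0, 1.0
    z = (p2 - p1) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p1, p2, z, p_value
```

Running it once per signal (for example, completions out of form starts, then step-two entries out of completions) keeps the pilot review focused on flow quality rather than visual impressions.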

Phase 3: Standardize Components

After pilot stability is confirmed, define reusable page components with clear usage rules. Component standards reduce quality drift and simplify onboarding for new contributors.

Useful component categories include the modules below. Standard naming makes reuse and QA faster:

- first-screen outcome blocks.

- social proof modules.

- process explanation sections.

- pricing or package context blocks.

- action sections by intent stage.

Standardization should increase speed and reduce review overhead. It also lowers variance when multiple editors publish in parallel.
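Component usage rules can be enforced with a small validator that runs before review. The registry names below mirror the categories listed above, but the specific rules (open with an outcome block, include at least one action section, no unregistered sections) are hypothetical examples of what a team might adopt.

```python
# Hypothetical standard names for the component categories above.
COMPONENTS = {
    "first_screen_outcome",
    "social_proof",
    "process_explanation",
    "pricing_context",
    "action_section",
}


def validate_layout(sections: list[str]) -> list[str]:
    """Return rule violations for an ordered list of section names (empty = valid)."""
    problems = []
    unknown = [s for s in sections if s not in COMPONENTS]
    if unknown:
        problems.append(f"non-standard sections: {unknown}")
    if not sections or sections[0] != "first_screen_outcome":
        problems.append("page must open with a first_screen_outcome block")
    if "action_section" not in sections:
        problems.append("page needs at least one action_section")
    return problems
```

Returning a list of problems instead of raising on the first one lets an editor fix every issue in a single pass.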

Phase 4: Rollout With Weekly Governance

Roll out high-priority pages in weekly batches. Assign explicit owners for copy clarity, mobile QA, and analytics verification.

Batch reviews should produce three outputs each week: what improved, what broke, and what standards need refinement. That loop helps the migration learn as it scales.
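The weekly cadence can be planned up front from a priority-ordered page list. This sketch assumes fixed-size batches and three owner roles named after the text; the role keys, `Batch` fields, and `plan_batches` function are hypothetical, and the three review lists start empty so they can be filled in at each weekly review.

```python
from dataclasses import dataclass, field

ROLES = ("copy_clarity", "mobile_qa", "analytics")


@dataclass
class Batch:
    week: int
    pages: list[str]
    owners: dict[str, str]
    # The three weekly review outputs, filled in after each batch ships.
    improved: list[str] = field(default_factory=list)
    broke: list[str] = field(default_factory=list)
    refine_standards: list[str] = field(default_factory=list)


def plan_batches(pages: list[str], batch_size: int, owners: dict[str, str]) -> list[Batch]:
    """Split priority-ordered pages into weekly batches with explicit owners."""
    missing = [role for role in ROLES if not owners.get(role)]
    if missing:
        raise ValueError(f"assign owners before rollout: {missing}")
    return [
        Batch(week=i // batch_size + 1, pages=pages[i:i + batch_size], owners=dict(owners))
        for i in range(0, len(pages), batch_size)
    ]
```

Refusing to plan when a role has no owner makes accountability a precondition of rollout rather than something sorted out at publish time.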

Conversion System Design for Platform Transitions

A platform switch is an opportunity to improve conversion architecture, not merely replicate existing pages. If teams clone weak page logic into the new system, migration cost rises without meaningful performance gain.

Use transition time to refine these areas before broad rollout. Early architecture improvements compound over every later page:

- First-screen relevance by audience segment.

- Objection-specific proof placement.

- CTA expectation clarity from click to next step.

- Form depth matched to intent stage.

- Post-submit messaging and routing.

This approach turns migration from technical overhead into measurable growth work. It ensures the new stack improves outcomes, not only tooling.

Content Governance for Multi-Editor Teams

As soon as multiple contributors edit pages, governance becomes critical. Without guardrails, style and conversion quality drift quickly.

A practical governance model includes three clear role tracks. Each role should own specific release decisions:

- one owner for message clarity and hierarchy.

- one owner for proof freshness and trust quality.

- one owner for QA and release checks.

Ownership should be explicit, not assumed. “Shared responsibility” often means missing accountability at publish time.

Documentation should stay lightweight. A concise standards file plus change log is usually enough if it is reviewed every sprint.

QA Framework That Protects Speed

Quality gates should be strict enough to prevent regressions and short enough to run quickly. Long checklists get skipped under deadlines. Tiny checklists miss critical issues.

A balanced pre-publish QA stack should fit inside one focused review pass. Keep it short enough to run every release:

- section logic check: does each block support a user decision?

- copy clarity check: are outcomes concrete and understandable?

- proof check: is evidence specific and positioned near friction?

- interaction check: do CTAs and forms behave as expected?

- mobile check: are hierarchy and readability preserved?

- analytics check: are critical events firing correctly?

The key is consistency. QA quality improves when every release uses the same gates.
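Running the same gates in the same order every release is easier when the gates are data. In the sketch below each gate is a named check over a page description; the page fields (`copy_reviewed`, `events_seen`, and so on) are hypothetical stand-ins for whatever your review actually records.

```python
from typing import Callable

# A gate is a (name, check) pair; checks read hypothetical page fields.
Gate = tuple[str, Callable[[dict], bool]]

GATES: list[Gate] = [
    ("section logic", lambda p: bool(p.get("sections"))
        and all(s.get("supports_decision") for s in p["sections"])),
    ("copy clarity", lambda p: p.get("copy_reviewed", False)),
    ("proof", lambda p: p.get("proof_near_friction", False)),
    ("interaction", lambda p: p.get("cta_and_forms_tested", False)),
    ("mobile", lambda p: p.get("mobile_checked_on_device", False)),
    # Every required event must have been observed firing.
    ("analytics", lambda p: set(p.get("required_events", []))
        <= set(p.get("events_seen", []))),
]


def run_gates(page: dict, gates: list[Gate] = GATES) -> list[str]:
    """Run every gate in order and return the names of the gates that failed."""
    return [name for name, check in gates if not check(page)]
```

A release goes out only when `run_gates` returns an empty list; any non-empty result names exactly which gate blocked it.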

Measurement: What to Track After the Switch

Post-migration evaluation should focus on business-relevant signals, not only vanity metrics. Raw traffic growth can hide weak conversion quality.

Track these metrics together during each rollout wave:

- qualified lead rate.

- form completion quality.

- step-two progression after initial conversion.

- response lag where sales follow-up exists.

- source-level conversion consistency.

Reviewing these as a set prevents false optimism. One metric alone rarely shows real page health.
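Computing the set in one place makes it harder to report a single flattering number. This sketch rests on assumed definitions: qualified lead rate is qualified leads over completed forms, "form completion quality" is approximated by completions over starts, and source consistency is the spread between the best and worst source conversion rates. Adjust these to match your own tracking.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class WaveData:
    form_starts: int
    form_completions: int
    qualified_leads: int
    step_two_entries: int            # visitors who took the next step after converting
    response_lag_hours: list[float]  # sales follow-up delay per lead, where it exists
    conversions_by_source: dict[str, tuple[int, int]]  # source -> (conversions, visits)


def _ratio(num: float, den: float) -> float:
    return num / den if den else 0.0


def wave_metrics(w: WaveData) -> dict[str, float]:
    """Return the rollout metric set for one wave (assumed definitions, see above)."""
    source_rates = [_ratio(c, v) for c, v in w.conversions_by_source.values()]
    return {
        "qualified_lead_rate": _ratio(w.qualified_leads, w.form_completions),
        "form_completion_rate": _ratio(w.form_completions, w.form_starts),
        "step_two_progression": _ratio(w.step_two_entries, w.form_completions),
        "median_response_lag_h": median(w.response_lag_hours) if w.response_lag_hours else 0.0,
        # Smaller spread between sources means more consistent conversion.
        "source_rate_spread": max(source_rates) - min(source_rates) if source_rates else 0.0,
    }
```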

Cost Model: Where Hidden Expense Usually Lives

Many teams underestimate migration cost because they focus on license pricing and ignore operating overhead. Over time, operational burden usually outweighs the price difference between licenses.

Hidden costs often include the following categories. Quantifying each one early gives stakeholders a more realistic rollout plan:

- rebuilding templates without standardized components.

- repeated QA cycles due to unclear ownership.

- analytics fixes after delayed instrumentation planning.

- retraining time for editors and reviewers.

- temporary performance dips during rollout.

A clear migration plan reduces these costs by making work predictable and reusable. Repeatable process is the main defense against hidden migration overhead.
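Putting the hidden categories next to license spend makes the comparison explicit for stakeholders. This is a rough first-year sketch, assuming labor is costed at a single blended hourly rate and the rollout dip is entered as an estimated revenue figure; all field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    monthly_license: float
    template_rebuild_hours: float
    extra_qa_hours: float
    analytics_fix_hours: float
    retraining_hours: float
    expected_dip_revenue: float  # estimated revenue lost to temporary performance dips
    hourly_rate: float           # one blended rate for all labor (simplifying assumption)


def first_year_cost(c: CostInputs) -> dict[str, float]:
    """Split first-year migration cost into license spend and hidden overhead."""
    labor_hours = (
        c.template_rebuild_hours + c.extra_qa_hours
        + c.analytics_fix_hours + c.retraining_hours
    )
    hidden = labor_hours * c.hourly_rate + c.expected_dip_revenue
    license_total = c.monthly_license * 12
    return {"license": license_total, "hidden": hidden, "total": license_total + hidden}
```

Running it for each shortlisted platform, with hour estimates informed by the pilot, shows whether a cheaper license is offset by heavier rebuild and QA work.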

Common Failure Modes and Fixes

Failure: Switching tools without changing workflow

Symptoms include similar launch delays and similar quality drift after migration. Fix by redesigning ownership, QA gates, and iteration cadence before rollout expands.

Failure: Overweighting visual parity

Symptoms include high effort spent matching old designs while conversion logic remains weak. Fix by prioritizing decision flow, trust timing, and action clarity before visual fidelity details.

Failure: No pilot-stage learning loop

Symptoms include repeated issues across multiple migrated pages. Fix by running a strict pilot review and converting findings into mandatory component standards.

Failure: Inconsistent mobile behavior

Symptoms include acceptable desktop results with weak mobile action quality. Fix by adding real-device checks as a hard release gate for every high-impact page.

Failure: Measurement fragmentation

Symptoms include conflicting reports and low confidence in optimization decisions. Fix by defining non-negotiable events before migration and validating tracking during each batch.

30-Day Adoption Sprint

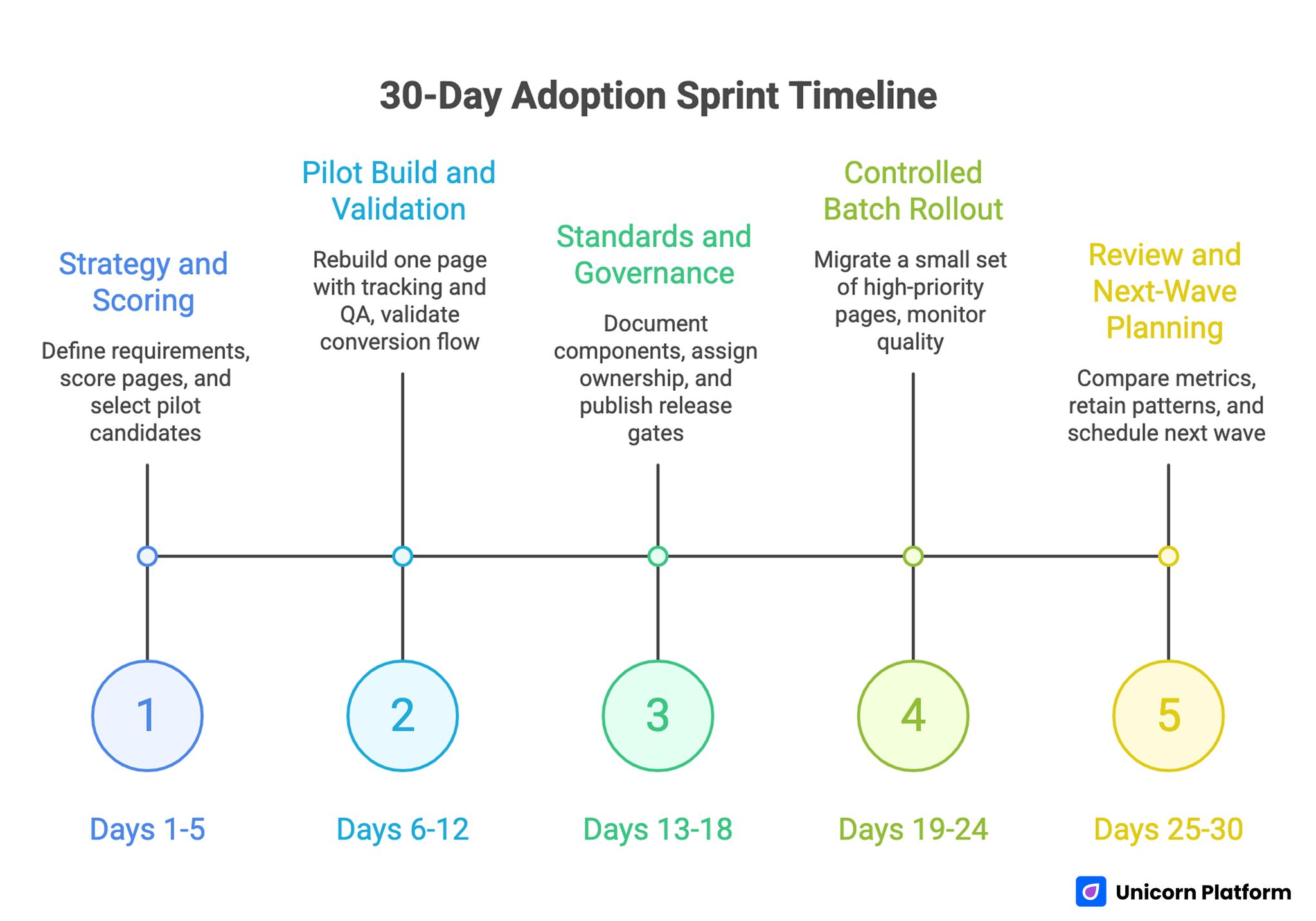

30-Day Adoption Sprint Timeline

Days 1-5: Strategy and scoring

Define platform requirements, apply weighted scoring, and choose pilot page candidates. Confirm migration goals in operational terms, not abstract feature goals.

Days 6-12: Pilot build and validation

Rebuild one high-impact page with full tracking and mobile QA. Validate copy hierarchy, conversion flow, and event integrity before broad rollout.

Days 13-18: Standards and governance

Document reusable components, finalize ownership roles, and publish release gates. Run one additional page migration to test repeatability.

Days 19-24: Controlled batch rollout

Migrate a small set of high-priority pages, monitor conversion quality, and record issues in a shared change log. Keep batch size intentionally small so fixes can be absorbed quickly.

Days 25-30: Review and next-wave planning

Compare pre-switch and post-switch quality metrics, retain winning patterns, and schedule the next migration wave with updated standards. This closes the first adoption loop with evidence instead of assumptions.

Minimal Artifact Set for Ongoing Execution

After the first 30-day sprint, teams need a small artifact set to keep quality stable. Without these artifacts, each new batch starts from memory and debate instead of a shared operating baseline. That slows down publishing and increases avoidable QA regressions.

Keep five artifacts active and current:

- A one-page platform decision memo with scoring logic and assumptions.

- A component usage guide with examples of valid and invalid section patterns.

- A release checklist used before every publish, including analytics verification.

- A migration issue log with owner, impact, and resolution date.

- A monthly review template that links page changes to business outcomes.

None of these documents need to be long. The value comes from consistency, ownership, and reuse. When this artifact set is maintained, onboarding becomes faster and experimentation quality improves because every editor works from the same decision rules.
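The migration issue log is the artifact that benefits most from a fixed structure. This sketch assumes a three-level impact scale and tracks owner, impact, and resolution date as described above; the `Issue` fields and helper functions are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical impact scale; unknown labels sort last.
IMPACT_ORDER = {"high": 0, "medium": 1, "low": 2}


@dataclass
class Issue:
    title: str
    owner: str
    impact: str
    opened: date
    resolved: Optional[date] = None


def open_issues(log: list[Issue]) -> list[Issue]:
    """Unresolved issues, highest impact first, then oldest first."""
    pending = [i for i in log if i.resolved is None]
    return sorted(pending, key=lambda i: (IMPACT_ORDER.get(i.impact, 3), i.opened))


def days_to_resolve(issue: Issue) -> Optional[int]:
    """Days from opening to resolution, or None while the issue is still open."""
    return (issue.resolved - issue.opened).days if issue.resolved else None
```

Sorting open issues by impact and age gives each weekly batch review a ready-made agenda.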

FAQ: Platform Alternatives for Website Teams in 2026

What is the first signal that a platform change is needed?

The earliest reliable signal is when cycle time keeps increasing even though team effort stays high. If publishing gets harder each quarter, the workflow is likely misaligned with the stack.

Should we migrate everything at once?

No. A staged rollout protects conversion stability and gives your team room to learn from real usage before scaling the move.

How do we compare tools without bias?

Use weighted scoring tied to operational requirements, then validate top options with a live pilot page. Demo impressions alone are not sufficient for final selection.

What matters more: features or workflow fit?

Workflow fit matters more because unused features do not improve outcomes. Teams win when daily execution is faster, safer, and easier to measure.

How do we keep no-code speed from hurting quality?

Use fixed release gates and clear ownership for message clarity, proof integrity, mobile QA, and analytics checks. Speed works best when the process is consistent.

What should we optimize first after migration?

Start with first-screen relevance, proof placement, and CTA expectation matching. Those changes usually generate the clearest early gains.

How many experiments should run at once?

For most teams, one high-impact variable per cycle is enough. Too many simultaneous tests make results harder to interpret and slow down decisions.

How do we avoid content drift with multiple editors?

Maintain reusable section standards and a short change log reviewed every sprint. Drift drops when contributors work from shared structural rules.

Can platform switching improve lead quality, not just volume?

Yes, if the migration includes clearer qualification flow and intent-matched forms. Better architecture often improves downstream conversion quality.

What is the main reason migrations fail?

Migrations fail when teams change software but keep old operating habits. The platform changes, but ownership and QA logic remain broken.

Final Takeaway

Choosing an alternative platform is not a branding decision. It is an operating decision about execution speed, conversion control, and governance quality.

Teams that migrate in phases, enforce release standards, and measure real business signals usually achieve faster launches with steadier performance. With the right process, a platform transition becomes a durable growth improvement rather than a short-term rebuild project.