Table of Contents

- What Startup Teams Actually Need From an AI Website Builder

- 30-Day Startup Execution Plan

- Common Failure Patterns and Fast Fixes

- FAQ

Launching a startup site is easier than it used to be. Launching one that consistently produces qualified demand is still difficult.

Most teams can create a homepage quickly with AI assistance. The real challenge appears after launch, when the page needs to explain value clearly, build trust fast, and move visitors into the right next action without confusion.

That is why selecting a builder is only the first step. The core advantage comes from a repeatable operating model that combines drafting speed, editorial quality, and disciplined iteration.

This guide explains how to build that model. You will learn how to choose the right stack, set up practical OpenAI-enabled workflows, create better page structure, run useful tests, and keep quality stable as output volume grows.

If your team is comparing broad approaches before choosing a workflow, this practical AI website maker guide is a useful baseline for evaluating speed versus control.


Quick Strategic Takeaways

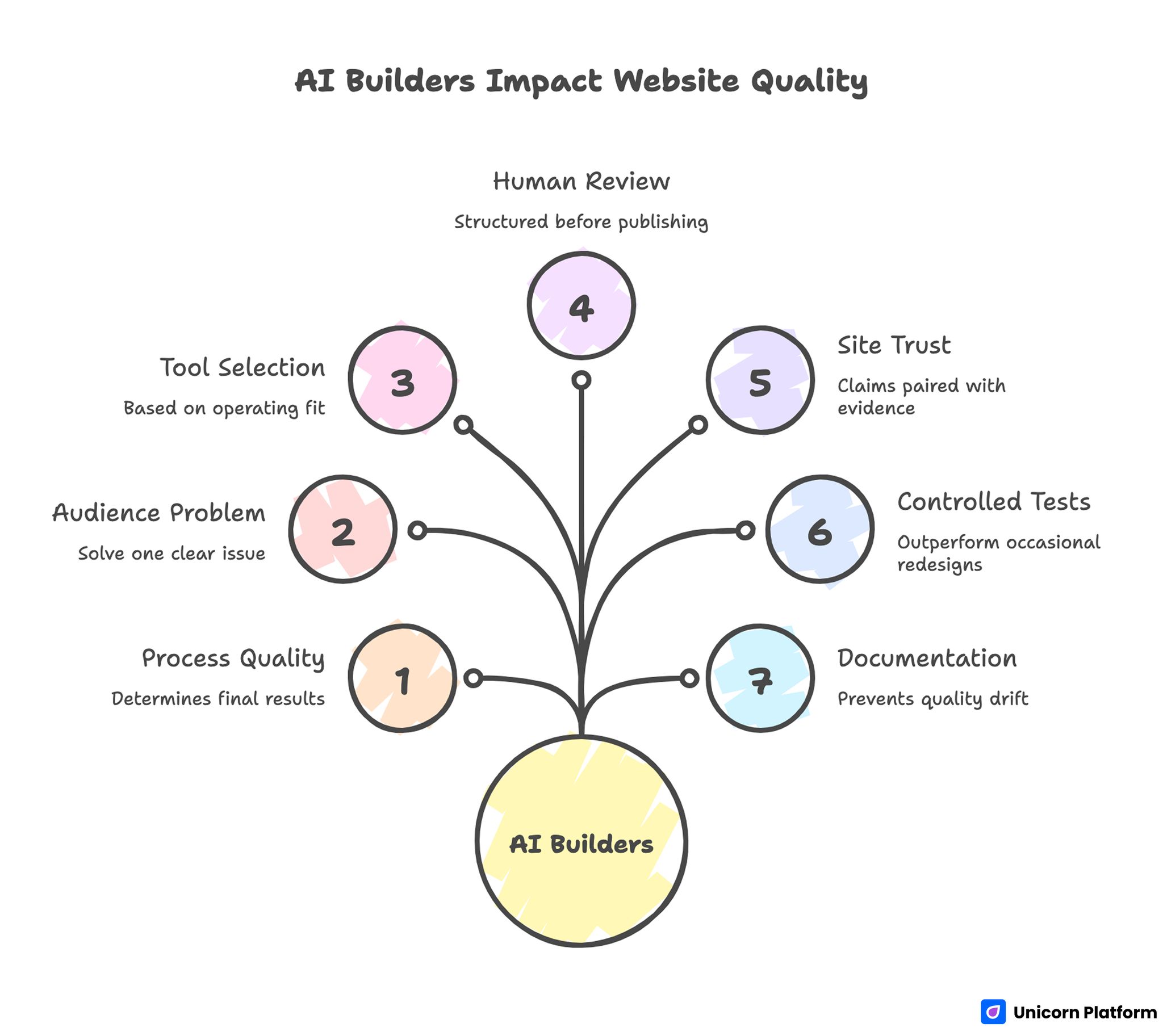

AI Builders Impact Website Quality

- AI builders shorten production time, but process quality determines results.

- The first page should solve one clear audience problem, not everything at once.

- Tool selection should be based on operating fit, not trend popularity.

- Prompt output needs structured human review before publishing.

- Site trust improves when claims are paired with practical evidence.

- Weekly controlled tests usually outperform occasional full redesigns.

- Documentation and ownership prevent quality drift as teams scale.

Why "Open the Builder" Is Not a Strategy

Many startup teams assume the hard part is getting a site live. In practice, the hard part is maintaining conversion quality after the first version is published.

The common failure pattern is fast launch followed by random edits. Teams change headlines, sections, and CTAs simultaneously, then cannot tell which change helped and which hurt.

Another pattern is generic messaging. AI-generated text may sound polished but still fail to answer practical visitor questions: Who is this for? What happens first? Why should I trust this now?

Without a defined operating model, speed creates volume but not reliable outcomes. Teams need a clear process for what gets published, what gets tested, and what gets retired.

What Startup Teams Actually Need From an AI Website Builder

A startup-friendly builder should reduce execution friction while preserving decision quality. Fast output is useful only when decision quality keeps pace with it.

1) Fast editing for non-technical contributors

Founders, growth leads, and marketers should be able to update key sections without engineering bottlenecks. If every change needs developer intervention, launch speed and experimentation quality both decline.

2) Reusable section systems

You should be able to reuse proven blocks across campaigns while adapting the message for each segment. Reusable structures reduce QA overhead and keep the narrative consistent across pages.

3) Conversion-focused controls

The platform should support clear CTA hierarchy, form flexibility, and trust-block placement without complex workarounds. Conversion controls that feel native are usually easier for lean teams to maintain reliably.

4) Practical SEO and performance controls

Basic discoverability and speed settings should be simple enough for lean teams to maintain. Complicated technical workflows create silent maintenance debt and slower iteration cycles.

5) Integration reliability

Analytics, CRM, and automation connections must be stable, or optimization decisions become unreliable. Weak integrations often produce noisy data and false conclusions.

6) Cost behavior that scales predictably

A low entry price is less important than long-term operating cost under real page volume and contributor growth. Budget planning should reflect real workflow usage rather than first-month assumptions.

This selection framework prevents many expensive tool switches later. It also shortens decision cycles because teams compare tools against shared criteria instead of personal preference.

Getting Started With OpenAI-Enabled Website Workflows

A lot of teams jump directly into generation prompts without setup discipline. Better results come from preparing the workflow before writing copy.

Step 1: define one audience and one target outcome

Start with one clear audience profile and one measurable goal for the page. Broad goals create broad copy and weak action flow.

Step 2: map user questions by intent stage

List what visitors ask when they are exploring, evaluating, and deciding. Use these questions to structure page sections and assistant prompt trees.

Step 3: choose a constrained prompt framework

Prompt templates should include audience, problem, mechanism, proof requirements, and CTA intention. Constrained prompts reduce generic output.

Step 4: set editorial guardrails before drafting

Define unacceptable patterns, such as vague claims, unsupported outcomes, and unclear next steps. Guardrails make review faster and more consistent.
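As a minimal sketch, guardrails like these can be encoded as a small pattern list that flags drafts before human review. The phrase lists below are hypothetical examples, not a standard, and a regex check only catches wording, so it supplements editorial review rather than replacing it.

```python
import re

# Hypothetical guardrails: each rule name maps to a case-insensitive regex.
# The phrases are illustrative; teams should maintain their own lists.
GUARDRAILS = {
    "vague claim": r"\b(world-class|best-in-class|revolutionary|cutting-edge)\b",
    "unsupported outcome": r"\b(guaranteed|\d+x (more|faster))\b",
    "unclear next step": r"\b(learn more|click here)\b",
}


def check_draft(text: str) -> list[tuple[str, str]]:
    """Return (rule, matched phrase) pairs for every guardrail violation."""
    violations = []
    for rule, pattern in GUARDRAILS.items():
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            violations.append((rule, match.group(0)))
    return violations


draft = "Our revolutionary tool delivers guaranteed results. Click here to start."
for rule, phrase in check_draft(draft):
    print(f"{rule}: {phrase!r}")
```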

Step 5: publish one controlled variant first

Avoid launching many uncontrolled versions at once. A focused first variant provides cleaner learning and faster iteration.

This setup mirrors practical OpenAI adoption principles: experiment quickly, but measure clearly.

Tool Stack Choices for Lean Startup Teams

Tool choices should reflect startup constraints, not enterprise wish lists. A smaller, reliable stack usually creates better learning speed than a large, partially adopted stack.

A practical baseline stack includes:

- one builder for publishing and structure control

- one analytics layer with clear event naming

- one CRM destination for lead routing

- one collaboration workflow for approvals and QA

Adding more tools too early often increases complexity without improving outcomes. Teams usually gain more from cleaner process than from larger stacks.
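Clear event naming is easier to keep when the convention is checked automatically. The sketch below assumes a lowercase snake_case `object_action` scheme (for example `demo_requested`); that scheme is an assumption, and any single consistent rule would serve the same purpose.

```python
import re

# Assumed convention (hypothetical): lowercase words joined by underscores,
# at least two parts, e.g. "demo_requested" or "pricing_viewed".
EVENT_NAME = re.compile(r"[a-z]+(?:_[a-z]+)+")


def invalid_events(event_names: list[str]) -> list[str]:
    """Return the event names that break the naming convention."""
    return [name for name in event_names if not EVENT_NAME.fullmatch(name)]


tracked = ["demo_requested", "pricing_viewed", "SignupClick", "cta-hero"]
print(invalid_events(tracked))  # → ['SignupClick', 'cta-hero']
```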

For teams deciding between custom and no-code paths, this guide on building custom sites without coding can help compare capability against maintenance burden.

Message Architecture That Improves Conversion Quality

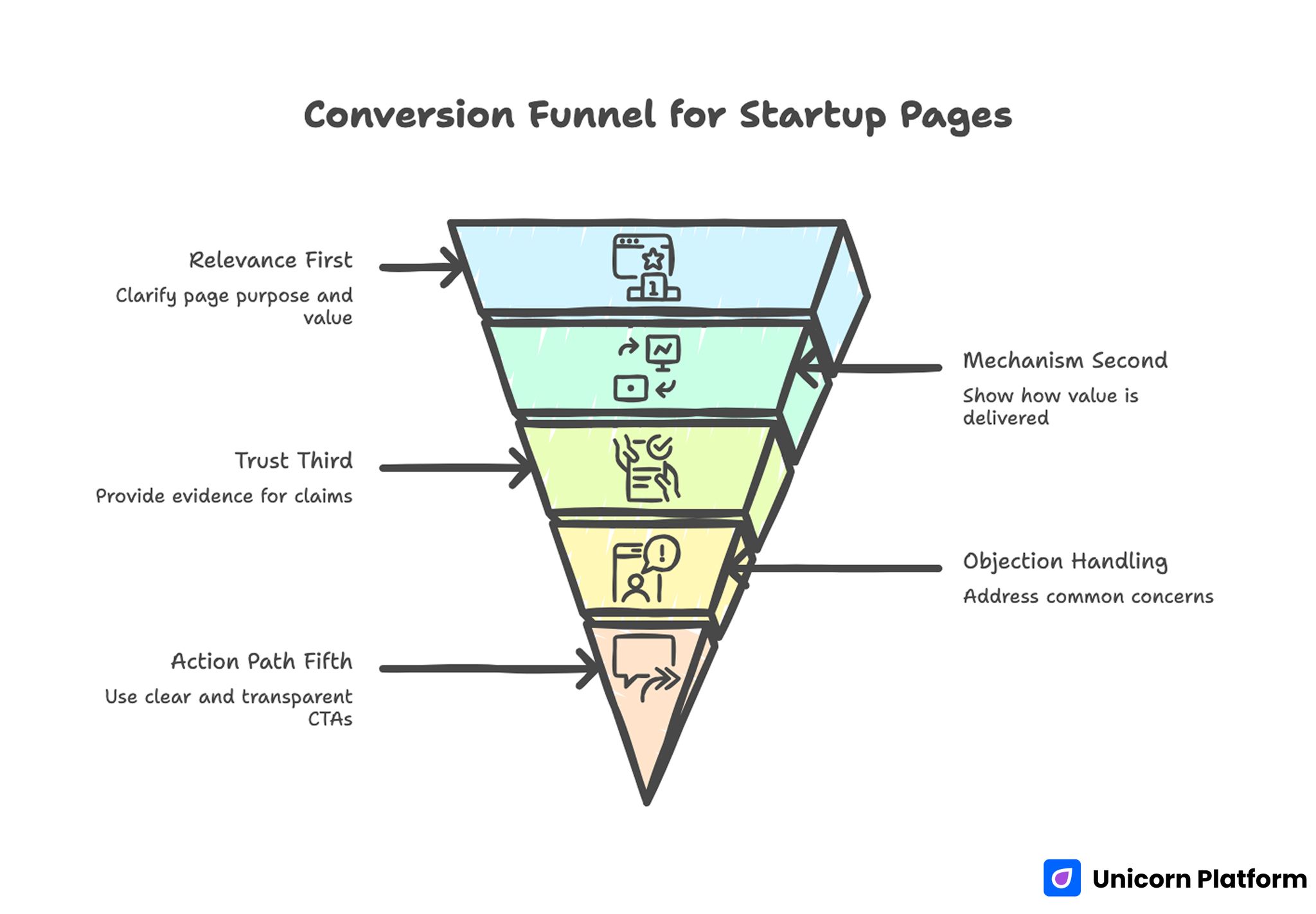

Conversion Funnel for Startup Pages

High-performing startup pages usually follow a stable decision sequence. When that sequence is broken, users spend more effort interpreting the page and less effort deciding.

According to HubSpot's landing page best practices, clear, single-focused calls to action and low-friction user paths significantly improve conversion behavior. Aligning CTA design with user intent reduces hesitation and increases the likelihood of qualified interaction.

Relevance first

The first screen should clarify who the page is for and what practical result is offered. If this is unclear, bounce risk rises immediately.

Mechanism second

Show how value is delivered in concrete terms. Avoid feature dumping before visitors understand workflow impact.

Trust third

Place proof near claims. Trust improves when users see evidence where uncertainty appears, not only at the page bottom.

Objection handling fourth

Address common concerns before the final CTA. Typical concerns include setup effort, timeline, reliability, and expected output quality.

Action path fifth

Use one dominant CTA per variant and state plainly what the visitor is committing to. Hidden commitment language harms trust quickly.

This architecture works across SaaS, services, and early-stage product launches.

Website performance has a direct impact on user engagement and business outcomes. Research from Portent shows that faster page load times and smoother interaction patterns significantly improve conversion rates.

Practical OpenAI Prompting Patterns for Web Teams

Prompt quality has a direct effect on editing workload. Better prompts produce drafts that are easier to validate and less expensive to refine.

A useful startup prompt format:

- audience: who the page targets

- problem: the concrete pain point

- outcome: what changes after adoption

- mechanism: how the product creates value

- trust requirement: what proof must appear

- CTA intent: action expected at this stage

- tone constraints: what to avoid in wording

Because every field must be filled in, this format leaves the model less room for generic filler.
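One way to enforce this format is to make every field required before a prompt can be produced. In the sketch below, `PromptBrief` and `render` are hypothetical names. The rendered text could then be sent as the user message to a chat model, for example through the OpenAI Python SDK's `client.chat.completions.create`; that call is left out so the example runs offline.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptBrief:
    """One field per element of the startup prompt format."""

    audience: str
    problem: str
    outcome: str
    mechanism: str
    trust_requirement: str
    cta_intent: str
    tone_constraints: str

    def render(self) -> str:
        # Refuse to render an incomplete brief: a missing constraint is
        # exactly where generic copy tends to slip in.
        missing = [f.name for f in fields(self) if not getattr(self, f.name).strip()]
        if missing:
            raise ValueError(f"brief is missing: {', '.join(missing)}")
        lines = [f"{f.name.replace('_', ' ')}: {getattr(self, f.name)}" for f in fields(self)]
        return "Write landing-page copy under these constraints.\n" + "\n".join(lines)


brief = PromptBrief(
    audience="Seed-stage B2B SaaS founders",
    problem="Demo requests from poorly qualified leads",
    outcome="Fewer, better-fit demo requests",
    mechanism="Qualifying questions before the booking step",
    trust_requirement="One named customer result with a date",
    cta_intent="Book a 20-minute fit call",
    tone_constraints="No superlatives, no unverifiable numbers",
)
print(brief.render())
```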

A second useful pattern is section-level prompting. Generate each major section independently, then integrate them into one coherent narrative. This usually improves specificity compared to generating the entire page in one call.
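A minimal sketch of section-level prompting, assuming a `generate` callable that wraps whatever model call the team uses. The section names follow the relevance, mechanism, trust, objection handling, and action order described in this guide. `build_page` and `fake_generate` are hypothetical helpers, and the integration step here is only an ordered join that an editor (or a further model pass) would still smooth into one narrative.

```python
from typing import Callable

# Section order follows the page architecture in this guide.
SECTION_ORDER = ["relevance", "mechanism", "trust", "objections", "action"]


def build_page(brief: str, generate: Callable[[str], str]) -> str:
    """Draft each section with its own prompt, then join them in page order."""
    drafts = {}
    for section in SECTION_ORDER:
        # Each call shares the brief but asks for a single section,
        # which keeps the request narrow and the output easier to review.
        prompt = f"{brief}\n\nWrite only the '{section}' section of the page."
        drafts[section] = generate(prompt).strip()
    # Integration pass: a simple ordered join with section markers so editors
    # can review and revise each block independently.
    return "\n\n".join(f"[{name}]\n{drafts[name]}" for name in SECTION_ORDER)


def fake_generate(prompt: str) -> str:
    # Offline stand-in for a model call: echoes the requested section name,
    # taken from between the last pair of single quotes in the prompt.
    section = prompt.rsplit("'", 2)[1]
    return f"Draft copy for {section}."


print(build_page("Audience: seed-stage SaaS founders", fake_generate))
```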

Embeddings and On-Site Search: When It Makes Sense

Some startups can improve user experience by adding smarter on-site search powered by embeddings. This is especially useful when support questions cluster around findability rather than product complexity.

The payoff grows with content depth: when users struggle to find relevant answers quickly, a simple semantic search layer can reduce support burden and increase content utility.

Start with a constrained use case, such as searching help docs, case summaries, or implementation guides. Keep result quality monitoring explicit and avoid overselling search accuracy early.
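At its core, semantic search ranks documents by the similarity between a query embedding and precomputed document embeddings. The sketch below uses cosine similarity over hand-written 3-dimensional toy vectors; in practice the vectors would come from an embedding model (for example an OpenAI embeddings endpoint) and have many more dimensions. The `min_score` cutoff of 0.75 is an arbitrary assumption: returning nothing for weak matches is one simple way to avoid overselling accuracy, and the threshold should be tuned against monitored results.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(
    query_vec: list[float],
    docs: dict[str, list[float]],
    top_k: int = 3,
    min_score: float = 0.75,
) -> list[tuple[str, float]]:
    """Rank docs by similarity to the query and drop matches below min_score."""
    scored = sorted(
        ((cosine(query_vec, vec), title) for title, vec in docs.items()),
        reverse=True,
    )
    return [(title, round(score, 3)) for score, title in scored[:top_k] if score >= min_score]


# Toy 3-dimensional "embeddings" for three help articles (hypothetical).
docs = {
    "Connect your CRM": [0.9, 0.1, 0.0],
    "Set up analytics events": [0.7, 0.7, 0.0],
    "Pricing and billing FAQ": [0.0, 0.2, 0.98],
}
query = [1.0, 0.2, 0.0]  # stands in for the embedding of "how do I sync leads?"
print(search(query, docs))
```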

For most early-stage teams, semantic search is a phase-two enhancement, not a launch requirement.

Responsible Use and Trust Boundaries

AI-assisted websites require trust clarity. Visitors need to understand what is automated, what is reviewed, and where confidence limits exist. Clear boundaries reduce skepticism and improve action confidence.

A practical trust model includes:

- clear claim boundaries

- plain-language summary of how outputs are generated

- explicit escalation path for uncertain cases

- fresh proof elements with update ownership

Responsible use is not only a policy issue. It is also a conversion issue because uncertainty without context lowers action confidence.
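Keeping proof elements fresh, with a named owner for each, can be checked mechanically. This sketch assumes a 30-day refresh window (approximating the monthly cadence suggested in the FAQ) and a hypothetical `ProofAsset` record; it does not model the separate rule of refreshing immediately after major product or pricing changes.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed refresh window, approximating "at least monthly".
REFRESH_WINDOW = timedelta(days=30)


@dataclass
class ProofAsset:
    name: str
    owner: str
    last_verified: date


def stale_assets(assets: list[ProofAsset], today: date) -> list[ProofAsset]:
    """Return assets whose last verification is older than the refresh window."""
    return [a for a in assets if today - a.last_verified > REFRESH_WINDOW]


# Hypothetical proof assets on a landing page.
assets = [
    ProofAsset("Customer quote: Acme", "proof owner", date(2024, 5, 2)),
    ProofAsset("Uptime badge", "QA owner", date(2024, 6, 20)),
]
for asset in stale_assets(assets, today=date(2024, 6, 28)):
    print(f"Refresh '{asset.name}' (owner: {asset.owner})")
```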

Pricing and Resource Planning for Website Operations

Budget planning should account for more than software subscriptions. Teams should model contributor time, maintenance burden, and rework risk alongside platform fees.

Real operating cost includes:

- platform and seat pricing

- integration and tracking maintenance

- editorial and proof refresh labor

- testing and QA cycles

- migration risk if tools change

A useful model is quarterly planning with three scenarios: conservative, expected, and aggressive growth. This keeps cost decisions aligned with actual release volume and team capacity.
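The three-scenario model can start as a few lines of arithmetic. Every rate and volume below is a placeholder assumption, not a benchmark; the structure mirrors the cost list above, with editorial, proof refresh, and QA work folded into per-page hours and migration risk treated as a percentage reserve.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    seats: int
    pages_shipped: int  # pages or major page updates per quarter


# Placeholder assumptions: replace with your own numbers.
SEAT_PRICE_PER_MONTH = 20.0
HOURLY_RATE = 60.0
HOURS_PER_PAGE = {"editorial_and_proof": 3.0, "testing_and_qa": 1.0}
INTEGRATION_HOURS_PER_MONTH = 4.0
MIGRATION_RESERVE = 0.10  # share added on top for tool-change risk


def quarterly_cost(s: Scenario) -> float:
    """Estimated cost for one quarter (three months) under a scenario."""
    platform = s.seats * SEAT_PRICE_PER_MONTH * 3
    integrations = INTEGRATION_HOURS_PER_MONTH * 3 * HOURLY_RATE
    labor = s.pages_shipped * sum(HOURS_PER_PAGE.values()) * HOURLY_RATE
    subtotal = platform + integrations + labor
    return round(subtotal * (1 + MIGRATION_RESERVE), 2)


scenarios = [
    Scenario("conservative", seats=2, pages_shipped=6),
    Scenario("expected", seats=3, pages_shipped=12),
    Scenario("aggressive", seats=5, pages_shipped=24),
]
for s in scenarios:
    print(f"{s.name:>12}: ${quarterly_cost(s):,.2f}")
```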

For early launch phases where budget is limited, this practical free startup site launch model can help teams structure low-risk validation before scaling spend.

Weekly Optimization Cadence That Compounds

The best results usually come from small, controlled weekly changes. Structured iteration produces cleaner signal quality than irregular broad redesigns.

A reliable cadence:

- Monday: review prior signals by section and source

- Tuesday: select one hypothesis and one metric target

- Wednesday: ship one major test change

- Thursday: run QA and mobile verification

- Friday: document keep/revise/archive decision

This routine improves learning quality and reduces random experimentation.
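The Friday decision is easier to keep consistent when the rule is written down before the test runs. The function below is a hypothetical rule that compares one week's relative lift against the Tuesday target and returns keep, revise, or archive. It ignores statistical noise, so at low traffic a lift of this size can still be chance.

```python
def weekly_decision(baseline_rate: float, variant_rate: float, target_lift: float) -> str:
    """Map one week's test result to a keep/revise/archive decision.

    Hypothetical rule: keep if the relative lift reaches the target,
    revise if it improved but fell short, archive if it did not improve.
    """
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    lift = (variant_rate - baseline_rate) / baseline_rate
    if lift >= target_lift:
        return "keep"
    if lift > 0:
        return "revise"
    return "archive"


# Baseline converts at 4.0%; the hypothesis targets a 10% relative lift.
for variant in (0.046, 0.042, 0.038):
    print(variant, weekly_decision(0.040, variant, target_lift=0.10))
```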

30-Day Startup Execution Plan

Week 1: foundation and clarity

Define the page objective, audience, and primary action. Rework first-screen copy to be specific and practical.

Week 2: trust and mechanism

Improve mechanism explanation and add proof near major claims. Remove sections that do not help decisions.

Week 3: controlled variant testing

Launch one comparison variant and test one meaningful variable, such as hero framing or CTA language.
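To judge whether the variant's conversion difference is likely more than noise, one common technique is a two-proportion z-test. This sketch uses the normal approximation, which assumes reasonably large samples; the visitor and conversion counts in the usage line are hypothetical. Fixing the sample size before looking at results avoids stopping the test on a lucky streak.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) comparing conversion rates of A and B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all (e.g. zero conversions on both sides)
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: control A vs. hero-framing variant B, 3,000 visitors each.
z, p = two_proportion_z_test(conv_a=120, n_a=3000, conv_b=156, n_b=3000)
print(f"z = {z:.2f}, p = {p:.3f}")
```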

Week 4: retention of winners

Keep winning sections, archive weak versions, and update templates for reuse in the next cycle.

This plan is realistic for lean startup teams and provides enough structure for reliable improvement. It is intentionally narrow so teams can learn quickly without overextending resources.

90-Day Scale Roadmap

Month 1: stabilize baseline

Focus on consistent narrative flow, clean tracking, and trustworthy proof placement.

Month 2: expand by segment

Create one adjacent segment variant while preserving core structure and trust modules.

Month 3: operationalize governance

Formalize review ownership, refresh cadence, and quality gates across all active pages.

Scale should follow repeatability, not just traffic growth. Expanding before quality is stable usually increases noise and slows meaningful progress.

Common Failure Patterns and Fast Fixes

Failure pattern: high engagement, weak qualified leads

Fix: tighten audience specificity and reduce broad claims that attract low-fit traffic.

Failure pattern: fast publishing, low learning

Fix: run one major test variable per cycle and standardize experiment documentation.

Failure pattern: polished design, unclear value

Fix: move mechanism explanation and proof closer to first-screen claims.

Failure pattern: message mismatch across channels

Fix: map acquisition promise to exact confirming section on the landing page.

Failure pattern: stale proof assets

Fix: assign proof ownership and run scheduled refresh cycles.

Failure pattern: contributor inconsistency

Fix: lock reusable templates and enforce a shared editorial checklist before release.

These fixes are operational, not cosmetic. They usually produce measurable gains faster than full redesigns.

Governance Model for Startup Web Teams

Quality declines quickly when ownership is implicit. Explicit role boundaries improve speed because decisions move through predictable review paths.

A practical role map:

- positioning owner: message clarity and audience fit

- proof owner: evidence freshness and claim validation

- growth owner: experiment design and interpretation

- QA owner: release checks and technical reliability

Even in small teams, where one person may hold several roles, naming an owner for each responsibility reduces release errors caused by unclear accountability.
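A release gate can make these boundaries explicit: nothing ships until each role has signed off. The role keys and the approval dictionary below are hypothetical; when one person holds several roles, each responsibility is still checked separately.

```python
# Roles from the role map; the approval record format is hypothetical.
REQUIRED_SIGNOFFS = {"positioning", "proof", "growth", "qa"}


def release_blockers(approvals: dict[str, bool]) -> list[str]:
    """Return roles that have not approved; an empty list means ready to ship."""
    return sorted(role for role in REQUIRED_SIGNOFFS if not approvals.get(role, False))


print(release_blockers({"positioning": True, "proof": True, "growth": False}))
# → ['growth', 'qa']
```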

Documentation Standard That Prevents Repeated Mistakes

Keep one short release note for every major update:

- what changed

- why it changed

- target metric

- result after 7 days

- keep/revise/archive decision

This note turns experimentation into reusable knowledge. Without it, teams often repeat low-value edits and lose compounding progress. Documentation is one of the simplest ways to increase learning velocity.
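The release note maps naturally onto a small record type. This is a sketch with hypothetical names: the `shipped_on` field is an added assumption so the 7-day review date can be computed, and `close` only accepts the three decisions the standard allows.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

DECISIONS = {"keep", "revise", "archive"}


@dataclass
class ReleaseNote:
    what_changed: str
    why_it_changed: str
    target_metric: str
    shipped_on: date  # assumption: needed to schedule the 7-day review
    result_after_7_days: Optional[str] = None
    decision: Optional[str] = None

    def review_due(self) -> date:
        """Date on which the 7-day result should be recorded."""
        return self.shipped_on + timedelta(days=7)

    def close(self, result: str, decision: str) -> None:
        """Record the outcome; only keep/revise/archive are accepted."""
        if decision not in DECISIONS:
            raise ValueError(f"decision must be one of {sorted(DECISIONS)}")
        self.result_after_7_days = result
        self.decision = decision


note = ReleaseNote(
    what_changed="Hero headline now names the target audience",
    why_it_changed="Paid traffic bounced before reaching the mechanism section",
    target_metric="qualified demo requests",
    shipped_on=date(2024, 6, 3),
)
print(note.review_due())  # → 2024-06-10
note.close(result="met target", decision="keep")
```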

FAQ: AI Website Builder Workflows for Startups

What should a startup optimize first, speed or clarity?

Clarity first. Speed is useful only when users can quickly understand value and next steps.

Are free AI builders enough for launch?

They can be enough for early validation. Growth-stage operations usually need stronger controls and integrations.

How many page variants should we run at once?

Run only as many variants as your team can measure and maintain responsibly. More variants without process create noise.

How do we avoid generic AI copy?

Use constrained prompts and strict editorial review. Generic claims should never pass final QA.

Do we need custom code to get good conversion results?

Not always. Many teams achieve strong results with no-code workflows when structure and governance are disciplined.

What metrics matter beyond click-through rate?

Qualified conversion, activation quality, and segment-level outcome metrics are usually more useful than raw engagement signals.

How often should we update trust elements?

At least monthly, and immediately after major product or pricing changes.

What is the biggest operational mistake startup teams make?

Treating each release as isolated work instead of part of a repeatable system with ownership and documentation.

Should we build advanced features like semantic search early?

Only if it supports a clear user need and you can maintain quality reliably. Keep early scope focused on core conversion flow.

How do we know when to switch tools?

Switch when workflow limits clearly reduce conversion quality or iteration speed, not just because another tool looks newer.

Final Takeaway

AI builders can give startups a real execution edge, but only when speed is paired with structure. The teams that win use clear message architecture, trustworthy proof, controlled experiments, and explicit operating ownership. Consistent process turns fast publishing into durable growth.

With Unicorn Platform, startup teams can run this model effectively: publish quickly, learn reliably, and improve conversion quality in steady, measurable cycles. That combination is what separates one-time launch activity from repeatable performance.