Table of Contents

- The Editorial Operating System AI Teams Need

- Build a Coverage Model for Major Lab Announcements

- Common Mistakes and Fast Fixes

- 30-Day Implementation Plan

- FAQ

AI reporting now moves at infrastructure speed. Major model updates, policy signals, product launches, funding announcements, and benchmark claims can appear in clusters, sometimes several times in one day. Readers expect fast coverage, but they also expect reliability, context, and clear implications.

That combination is where many teams break. Newsrooms that optimize only for speed tend to publish surface summaries with weak verification. Newsrooms that optimize only for depth often miss the first attention window and lose momentum to faster outlets. Strong AI journalism requires both, supported by repeatable workflows. According to Pew Research Center, audiences increasingly expect news organizations to balance speed with accuracy, and are more likely to disengage when content appears rushed or insufficiently verified.

The core question is no longer whether a newsroom can write quickly. The real question is whether the newsroom can maintain quality while operating under permanent volatility.

Unicorn Platform is useful in this environment because it supports structured publishing workflows, clean section reuse, and clear content hierarchy across devices. Those foundations reduce operational friction and help editors focus on reporting quality instead of page-building overhead.


Quick Takeaways

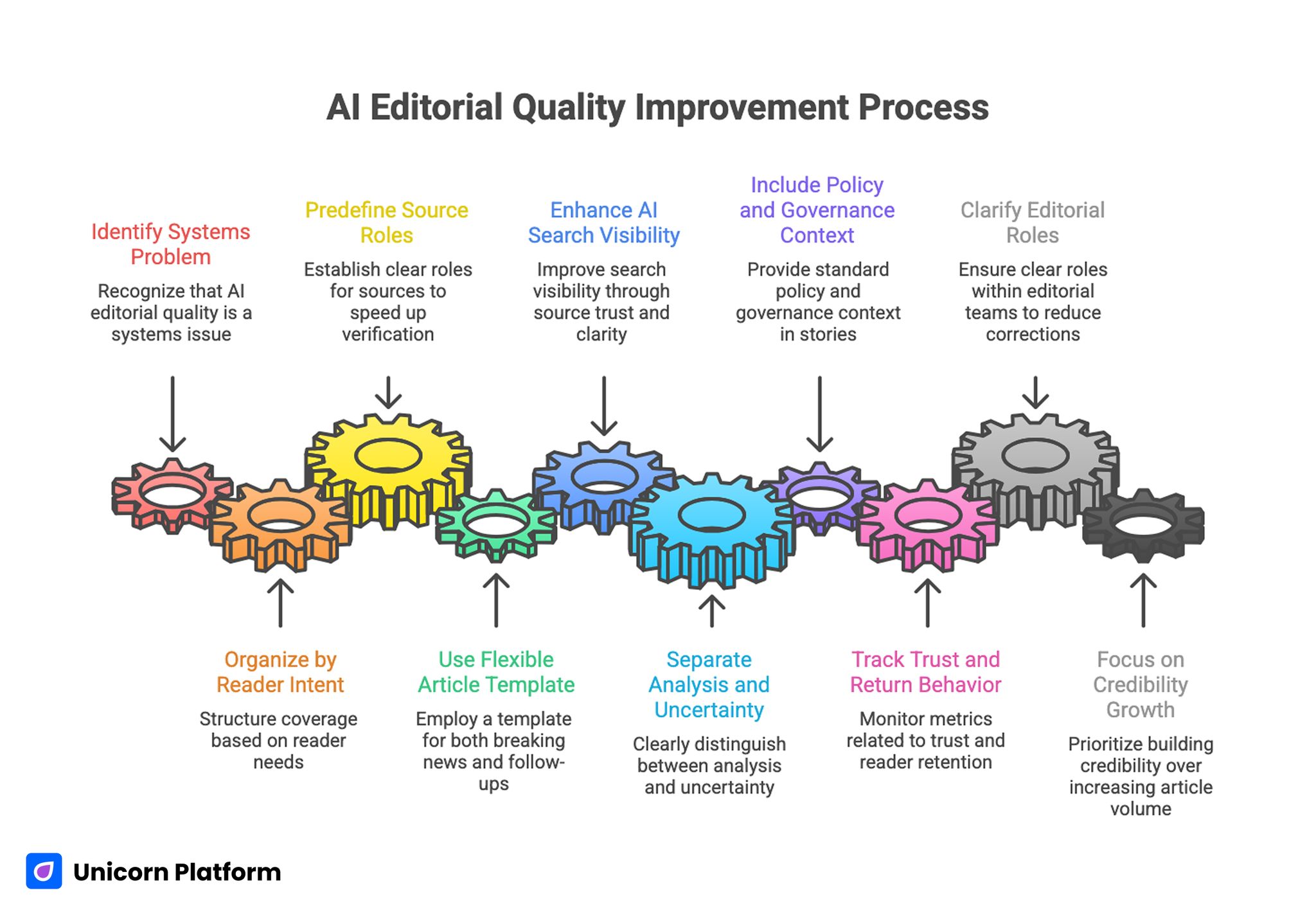

AI Editorial Quality Improvement Process

- AI editorial quality is mostly a systems problem, not a writing talent problem.

- Coverage should be organized by reader intent, not only by topic category.

- Verification speed improves when source roles are predefined.

- One article template can support both breaking updates and deep follow-ups.

- AI search visibility increasingly depends on source trust and contextual clarity.

- Reader retention improves when analysis and uncertainty are separated clearly.

- Policy and governance context should be standard in major AI stories.

- Metrics should track trust and return behavior, not just pageview spikes.

- Role clarity in editorial teams reduces correction cycles.

- Sustainable growth comes from compounding credibility, not headline volume.

Why AI Reporting Fails Under Volume

Most failures come from process gaps that appear only at scale. When story volume rises, teams often compress verification into a single editorial pass and skip independent confirmation. This may produce short-term traffic, but it increases correction risk and weakens audience confidence.

A second failure mode is interpretive overreach. Reporters may present experimental claims as settled outcomes because the pressure to publish is high. In AI coverage, where benchmarks and demos can be context-sensitive, this creates confusion and accelerates trust erosion.

A third issue is audience blending. One article tries to serve engineers, founders, casual readers, policymakers, and marketers at the same time. The result is usually shallow for advanced readers and too dense for general readers.

A stronger model starts with explicit intent segmentation before drafting begins. That early decision removes most of the confusion that appears later in editing.

The Editorial Operating System AI Teams Need

High-trust AI coverage works best with a simple operating sequence. Each step keeps the newsroom aligned when pressure is high:

- Confirm what changed.

- Confirm what did not change.

- Classify certainty level.

- Identify affected audiences.

- Explain implications by time horizon.

This sequence protects quality because it separates facts from interpretation early in the workflow. It also reduces newsroom disagreement by creating a shared editorial frame before headlines are written.

Teams can run this sequence quickly with templated checklists and section-level ownership. The goal is not bureaucracy. The goal is predictable quality under pressure.

Organize Coverage by Reader Intent, Not by Hype Cycle

AI media is often planned around trending terms, but readers return when they can find reliable decision support. Intent-based architecture solves this by mapping coverage to why someone reads, not only what happened.

A practical structure includes three lanes. Each lane serves a different decision need for the reader:

- breaking updates for immediate orientation,

- weekly synthesis for pattern recognition,

- strategic analysis for operational decisions.

Breaking updates should prioritize verified facts and bounded interpretation. Weekly synthesis should compare signals across events and identify trend consistency. Strategic analysis should answer what teams should change in product, operations, or policy response.

This structure gives readers a clear expectation for each format and improves editorial consistency over time. It also makes team planning calmer because publication rhythm becomes predictable.

Source Verification Stack for Fast Newsrooms

AI reporting quality depends on source hierarchy. Fast teams should avoid single-source publication for consequential claims.

Use a three-layer stack. This keeps verification practical without slowing every story to a full investigation:

- primary source: official release, paper, filing, or direct statement,

- independent layer: credible external confirmation or expert review,

- contextual layer: historical, market, or policy comparison.

If one layer is missing, the article should label uncertainty explicitly rather than implying full confidence. Transparent uncertainty is usually better for trust than artificial certainty.

Editorial speed improves when this stack is encoded into the template itself. Reporters then know what evidence must exist before approval.
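One way to encode the stack into a template is a small check that reports which layers are still empty for a claim. The sketch below is illustrative only: the `Claim` class, the layer keys, and the label wording are assumptions made for this example, not part of any specific publishing tool.

```python
from dataclasses import dataclass, field

# Layer names follow the three-layer stack described above.
LAYERS = ("primary", "independent", "contextual")


@dataclass
class Claim:
    """Hypothetical record for one consequential claim in a draft."""
    text: str
    # Maps a layer name to the sources gathered for that layer.
    sources: dict[str, list[str]] = field(default_factory=dict)


def missing_layers(claim: Claim) -> list[str]:
    """Return the stack layers that have no source attached."""
    return [layer for layer in LAYERS if not claim.sources.get(layer)]


def uncertainty_label(claim: Claim) -> str:
    """Build a reader-facing label; the wording here is an assumption."""
    missing = missing_layers(claim)
    if not missing:
        return "All three source layers present."
    return "Uncertainty: no " + ", ".join(missing) + " source yet."


claim = Claim(
    text="Vendor says the new model halves inference cost.",
    sources={"primary": ["official release notes"]},
)
print(uncertainty_label(claim))
# prints: Uncertainty: no independent, contextual source yet.
```

A check like this does not judge source quality. It only makes a missing layer visible before approval, which is the point at which the article should label uncertainty explicitly.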

Build a Coverage Model for Major Lab Announcements

OpenAI, Google, Anthropic, and other major labs can generate intense news cycles with mixed technical and commercial signals. Teams need a repeatable structure for these stories so coverage quality does not depend on individual writer style.

A useful major-lab format includes specific checkpoints. The structure should be stable across stories so readers can compare updates quickly:

- what is officially announced,

- what evidence supports performance claims,

- who benefits immediately,

- where uncertainty remains,

- what to monitor over the next 30 days.

This framework helps readers distinguish launch messaging from validated impact. It also creates a consistent archive pattern, making follow-up reporting easier and more coherent.

AI Search Visibility and Media Discovery

AI-answer interfaces increasingly shape how users discover technology reporting. Publications that are repeatedly cited usually combine clear structure, explicit sourcing, and high topical coherence over time.

For editorial teams, this means visibility is not only a keyword game. It is a reliability game. Content that explains claims clearly, cites strong sources, and resolves ambiguity tends to earn more machine-mediated exposure.

When shaping long-term content planning for discoverability, this guide on data-driven SEO strategies for content opportunities can help connect editorial priorities with durable search demand. It is most useful when tied to your recurring analysis cadence.

Even with strong discovery performance, retention still depends on whether each article gives actionable clarity, not just retrieval-friendly formatting. Discoverability starts the relationship, but trust quality keeps it.

Story Template Design for Repeatable Quality

A reliable AI news template should guide reader decisions quickly. One effective sequence is listed below, and each step should be visible at a glance:

- headline and one-sentence framing,

- verified facts block,

- impact by audience segment,

- uncertainty and open questions,

- next watchpoints and timeline.

This pattern works because it mirrors reader behavior. Most readers scan for orientation first, then decide whether to invest attention in deeper interpretation.

Templates should also standardize certainty language. Phrases that imply proof should be reserved for verified outcomes. Experimental indicators should be labeled as preliminary.
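A standardized certainty vocabulary can be partly checked by machine. The following is a minimal sketch, assuming a hypothetical list of proof-implying words and the three status labels used later in this article (preliminary, confirmed, disputed). A real style guide would define its own word list, and an editor would still review every flag.

```python
import re

# Hypothetical word list; a newsroom style guide would maintain its own.
PROOF_WORDS = ("proves", "confirms", "demonstrates", "definitively")


def flag_overclaims(sentence: str, status: str) -> list[str]:
    """Return proof-implying words used in a sentence that is not confirmed.

    `status` is expected to be "preliminary", "confirmed", or "disputed".
    """
    if status == "confirmed":
        return []
    # Compare whole lowercase words so "demo" does not match "demonstrates".
    words = set(re.findall(r"[a-z]+", sentence.lower()))
    return [word for word in PROOF_WORDS if word in words]


flags = flag_overclaims("The demo proves the model reasons better.", "preliminary")
print(flags)  # prints: ['proves']
```

Simple word matching will miss paraphrased overclaims, so this kind of check supports the narrative editor rather than replacing the review.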

Different Depth for Different Readers

Not every audience needs the same level of technical detail. The fastest way to lose relevance is to publish one layer of explanation for all readers.

A practical approach is layered depth. This allows one article to serve multiple audiences without becoming incoherent:

- surface layer for general orientation,

- applied layer for operators and managers,

- technical appendix for advanced readers.

Layered depth improves both readability and authority. General readers can follow the story without jargon overload, while advanced readers can still evaluate substance.

This model also reduces rewriting effort because core facts remain stable while depth layers can be adjusted by section. The newsroom spends less time duplicating work across similar stories.

Calendar Strategy: Daily Speed and Weekly Intelligence

AI editorial calendars should separate reactive publication from reflective publication. If all energy goes to breaking coverage, trust decays because context is thin.

A balanced schedule can allocate clear publication lanes. The point is to protect both speed and depth in the same calendar:

- daily short updates for confirmed changes,

- weekly synthesis for cross-story signals,

- monthly explainers for structural trends.

This rhythm helps teams avoid burnout while still meeting audience demand for timely reporting. It also improves planning quality for deeper pieces that need expert input.

For teams refining format balance across news, explainers, and evergreen content, this content marketing format framework offers practical planning guidance. Use it to keep publishing mix aligned with audience intent changes.

A calendar should also include scheduled post-publication reviews so insight quality improves with each cycle. Review windows are where durable editorial gains usually appear.

Metrics That Predict Trust and Retention

Pageviews are easy to track and easy to misread. High traffic on a controversial headline does not prove editorial strength.

More reliable indicators include several behavior signals. These reveal whether readers treat the publication as a trusted reference:

- repeat visits to analysis pieces,

- source-link click-through behavior,

- scroll completion on context sections,

- newsletter conversion from article pages,

- time-to-second-session after first visit.

These metrics show whether readers view your publication as decision support or disposable feed content. That distinction is essential for long-term growth planning.

Editorial teams should tie metric movement to section-level changes. That creates a measurable improvement loop rather than intuition-driven adjustments.
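Two of the signals above, repeat visits and time-to-second-session, can be derived from a plain log of session start times per reader. The sketch below assumes a hypothetical in-memory mapping from reader ID to session timestamps. Real analytics exports will have a different shape, and the sample data is invented for illustration.

```python
from datetime import datetime, timedelta


def time_to_second_session(
    sessions: dict[str, list[datetime]],
) -> dict[str, timedelta]:
    """Gap between each reader's first and second session.

    Readers with only one session are omitted from the result.
    """
    gaps = {}
    for reader, starts in sessions.items():
        ordered = sorted(starts)
        if len(ordered) >= 2:
            gaps[reader] = ordered[1] - ordered[0]
    return gaps


def repeat_visit_rate(sessions: dict[str, list[datetime]]) -> float:
    """Share of readers who came back at least once."""
    if not sessions:
        return 0.0
    returning = sum(1 for starts in sessions.values() if len(starts) >= 2)
    return returning / len(sessions)


# Invented sample log: reader ID -> session start times.
sample = {
    "reader-1": [datetime(2026, 1, 5, 9), datetime(2026, 1, 7, 9)],
    "reader-2": [datetime(2026, 1, 5, 10)],
    "reader-3": [datetime(2026, 1, 6, 8), datetime(2026, 1, 6, 20)],
}
print(time_to_second_session(sample))
print(repeat_visit_rate(sample))
```

Running the same calculation per section type or per template version is one way to connect metric movement to a specific editorial change.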

Policy, Safety, and Governance as Standard Context

In 2026, AI stories rarely exist in a purely product context. Regulatory direction, safety testing, data governance, and labor implications often shape real-world impact as much as the model update itself.

A compact governance section in major stories improves decision value for business readers and policy-focused audiences. It also reduces over-rotation on feature novelty.

The governance section does not need to be legalistic. It should answer practical questions: what obligations might change, which risks are material, and where uncertainty remains.

This discipline strengthens credibility because it signals that the publication covers outcomes, not just announcements. Readers reward that framing with higher return intent.

Mobile Reading Experience for Fast News Consumption

A large share of AI news discovery happens on mobile devices. If first-screen clarity is weak, readers exit before reaching the section where nuance appears.

Mobile-friendly reporting should prioritize a few structural choices. These choices improve scan behavior during high-volume news periods:

- concise first-screen framing,

- short evidence blocks,

- clear subheads for scan behavior,

- one primary CTA path,

- minimal visual noise around key claims.

User behavior patterns matter here. Small improvements in layout and interaction flow can produce large gains in comprehension and return intent.

For teams working on mobile-first experience design, this guide on user behavior tips to optimize landing pages can inform section hierarchy and interaction decisions. Apply the same principles to long-form analysis pages, not only landing pages.

Team Roles That Protect Speed and Accuracy

Coverage quality improves when role boundaries are explicit. A compact role model is usually enough for most teams:

- source verifier,

- narrative editor,

- final risk approver,

- performance reviewer.

The source verifier owns evidence integrity. The narrative editor owns clarity and certainty language. The final approver owns publication risk on consequential stories. The performance reviewer links editorial decisions to outcome metrics.

One person can cover multiple roles in smaller teams, but responsibility boundaries should still be clear. Ambiguous ownership is a direct path to repeated mistakes.

Pre-Publish Gates That Prevent Correction Cycles

Fast publication does not require heavy process, but it does require non-negotiable quality gates. The goal is consistency, not bureaucracy.

A practical gate can include a short set of checks. These checks should be completed before every high-impact publication:

- claim-to-source alignment check,

- certainty language check,

- audience-level clarity check,

- mobile scan check,

- policy-context check for major stories.

These gates reduce post-publication edits, improve reader confidence, and save total editorial time. They also make escalation decisions easier during breaking cycles.

The key is consistency. Gates only work when they are applied to every high-impact story, not selectively during high-pressure cycles.
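Gates are easier to apply to every story when they run as a fixed list rather than from memory. The sketch below is a minimal illustration under stated assumptions: the draft is a hypothetical dictionary with `major`, `claims`, and `governance_section` keys, and only two of the five gates are automated. The certainty language, audience-level clarity, and mobile scan checks are left to human review here.

```python
from typing import Callable


def claims_have_sources(draft: dict) -> bool:
    """Claim-to-source alignment: every claim lists at least one source."""
    return all(claim.get("sources") for claim in draft.get("claims", []))


def has_policy_context(draft: dict) -> bool:
    """Policy-context check: required only for stories marked as major."""
    return not draft.get("major", False) or bool(draft.get("governance_section"))


# Gate names mirror the checklist above; the functions are illustrative.
GATES: dict[str, Callable[[dict], bool]] = {
    "claim-to-source alignment": claims_have_sources,
    "policy context": has_policy_context,
}


def failed_gates(draft: dict) -> list[str]:
    """Return the names of gates the draft does not pass yet."""
    return [name for name, check in GATES.items() if not check(draft)]


draft = {
    "major": True,
    "claims": [
        {"text": "Model is available today.", "sources": ["official release"]},
        {"text": "Model beats rivals on coding.", "sources": []},
    ],
    "governance_section": "",
}
print(failed_gates(draft))
# prints: ['claim-to-source alignment', 'policy context']
```

An empty result means the automated gates passed, not that the story is ready. The final risk approver still owns the publication decision.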

Case Pattern: From Traffic Spikes to Reliable Readership

Consider a small AI publication that publishes quickly and attracts periodic traffic spikes but struggles with repeat sessions. An audit reveals three issues: thin sourcing, inconsistent story structure, and weak follow-up analysis.

The team introduces an intent-based calendar, a fixed source stack, and a two-step publication model: a fast verified brief, followed by structured analysis within 24 to 48 hours. This adjustment changes both output quality and editorial confidence.

After several cycles, repeat readership rises because users know what to expect and where to find deeper context. Editorial confidence improves as correction rates decline. Commercial performance becomes more predictable because qualified audience behavior stabilizes.

The lesson is simple: repeatability compounds faster than improvisation. Newsrooms that standardize quality loops usually outperform louder but inconsistent competitors.

Common Mistakes and Fast Fixes

Mistake 1: Publishing interpretation before verification

Fix: require at least two evidence layers before consequential claims are framed as outcomes. This rule catches weak assumptions before publication.

Mistake 2: Using inflated certainty language

Fix: standardize wording for preliminary, confirmed, and disputed signals. Shared language reduces interpretive drift across writers.

Mistake 3: Mixing audience levels in one undifferentiated narrative

Fix: add layered depth with clear section labels for general, operational, and technical readers. Readers should immediately know where their level of detail starts.

Mistake 4: Treating every update as equal priority

Fix: rank stories by reader impact and decision relevance, not social velocity. Prioritization improves quickly when this rule is explicit.

Mistake 5: Ignoring policy context in major stories

Fix: add a concise governance section for high-impact releases. This prevents feature-centric reporting from hiding real-world constraints.

Mistake 6: Over-optimizing for headline CTR

Fix: track return behavior and source engagement, then optimize for quality retention metrics. Quality-led optimization usually improves both trust and monetization outcomes.

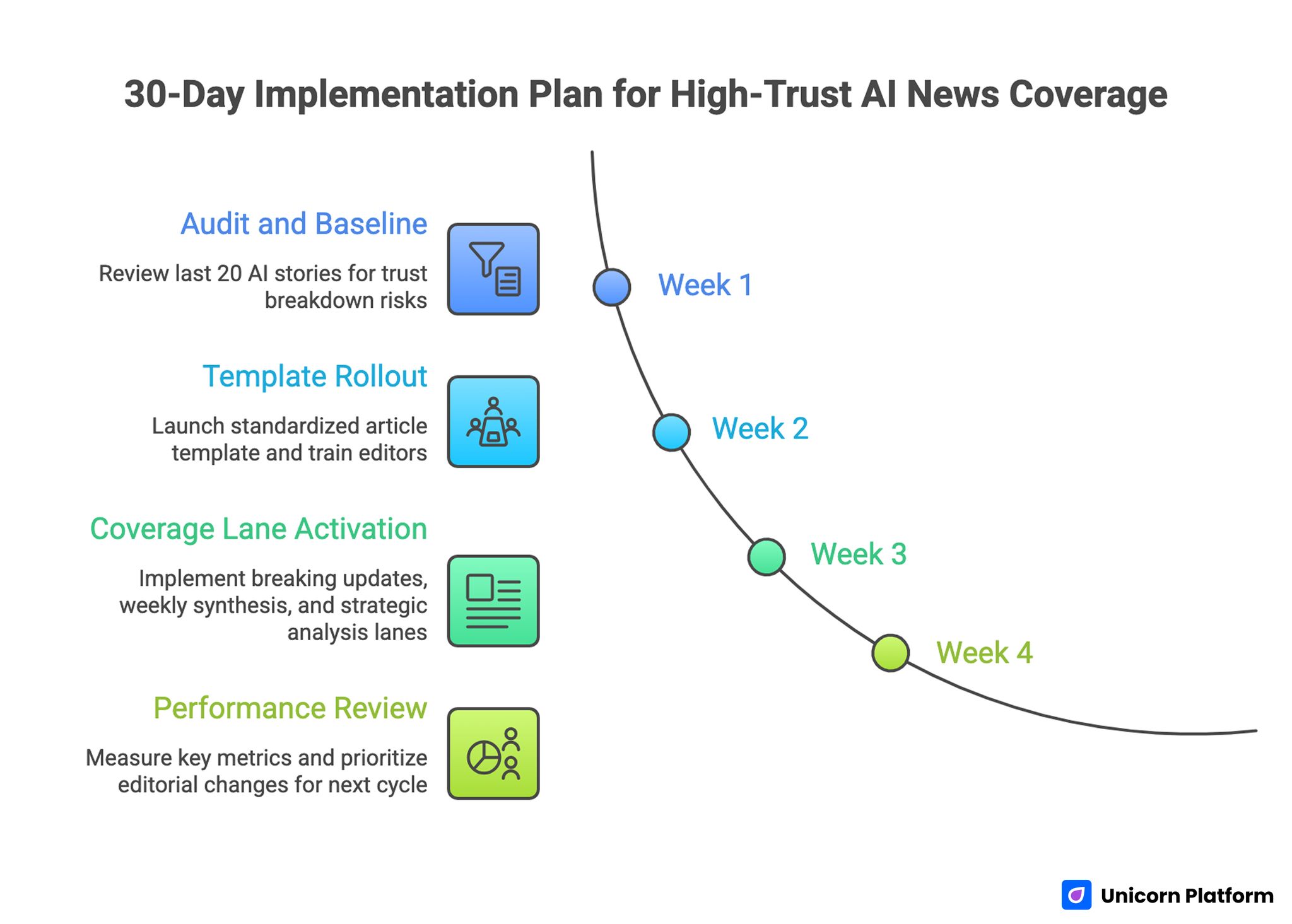

30-Day Implementation Plan

30-Day Implementation Plan for High-Trust AI News Coverage

Week 1: Audit and baseline

Review the last 20 AI stories for source depth, certainty language, and section consistency. Document where trust breakdown risk is highest.

Week 2: Template rollout

Launch a standardized article template with source stack requirements, uncertainty labeling, and audience-intent sections. Train editors on the template before full rollout.

Week 3: Coverage lane activation

Implement breaking updates, weekly synthesis, and strategic analysis lanes. Assign role ownership for each lane.

Week 4: Performance review

Measure return sessions, source clicks, completion rates, and newsletter conversion by section type. Prioritize the highest-leverage editorial changes for the next cycle.

This plan is lightweight enough for small teams but strong enough to improve quality rapidly. It can be executed without major tooling changes.

90-Day Compounding Plan

Month 1: Stabilize operations

Focus on consistency, source integrity, and template adherence. Reduce correction volume and normalize role ownership.

Month 2: Improve analytical depth

Expand follow-up pieces, strengthen cross-story synthesis, and publish clearer uncertainty updates. Use monthly reviews to identify which formats create the strongest return behavior.

Month 3: Scale what works

Double down on formats with strong return behavior and high trust signals. Retire low-value formats that consume capacity without improving audience quality.

At this stage, editorial quality and business metrics should begin reinforcing each other. Better trust signals should translate into steadier audience value.

FAQ: AI News Coverage

How can AI news teams stay fast without sacrificing accuracy?

Use a structured pre-publish sequence with explicit source roles and certainty checks. Speed improves when workflow is predictable, not when verification is skipped.

What should be included in every high-impact AI story?

At minimum: verified facts, affected audiences, uncertainty boundaries, and near-term watchpoints. These elements reduce confusion and improve practical value.

Is breaking news still worth publishing if depth is limited?

Yes, if the piece is clearly framed as a verified brief and followed by a deeper analysis once more evidence appears. Clarity of labeling is what protects trust in this model.

How should teams handle uncertain benchmark claims?

Present them as provisional signals, cite methodology where available, and explain what evidence is still missing. This gives readers realistic confidence boundaries.

Which metrics matter most for editorial trust?

Repeat visits, source-link engagement, and completion of context-heavy sections are stronger trust indicators than raw pageviews. These metrics reflect usefulness, not just curiosity.

How often should AI news templates be updated?

Review monthly or after major coverage cycles. Template changes should be driven by observed reader behavior and correction patterns.

Should every AI story include policy context?

Not every story needs deep policy analysis, but major releases should include a concise governance lens to support informed interpretation. This context helps operational readers make better decisions.

How can smaller teams compete with larger AI outlets?

Smaller teams can win by being clearer, more consistent, and more transparent with sources. Reliability can outperform volume.

What is the best way to cover recurring topics like major AI labs?

Use a repeatable format that tracks what changed, what stayed the same, and what implications are credible right now. Consistency makes multi-story comparison far easier for readers.

How do you avoid hype-driven editorial drift?

Tie story priority to reader decision value, enforce certainty language standards, and evaluate output using retention-focused metrics. This combination reduces drift toward performative coverage.

Final Takeaway

AI journalism quality in 2026 depends less on writing speed and more on editorial system design. Newsrooms that combine fast verification, intent-based structure, transparent uncertainty, and disciplined follow-up can scale coverage without losing trust.

Unicorn Platform supports this model by making structure reusable and publication operations cleaner. The advantage is not only faster output. The real advantage is compounding credibility that readers return to when decisions matter.
