Table of Contents

- Budget Bands by Product Type

- The Core Cost Buckets You Should Always Track

- Hidden Cost Drivers That Break Forecasts

- 30-Day Rollout Plan for First Cost Validation

- FAQ

Most teams start with one question: how much budget is enough to ship an AI product that actually works in production? That is the right business question, but it is usually answered with the wrong method.

Many estimates are built from feature wishlists and rough engineering hours. The result looks precise, yet teams still miss timelines, overspend in later phases, and launch products that cannot support reliable user outcomes. The issue is not only pricing accuracy; it is also scope design and operational planning.

A stronger approach treats budgeting as a system decision. You define the user workflow first, then map build cost, validation cost, and optimization cost across staged milestones. This model gives better financial visibility and creates room for learning after launch.

Within Unicorn Platform, this planning style also improves go-to-market quality because messaging can reflect realistic implementation boundaries instead of overpromising from day one. It also keeps stakeholder expectations aligned during pricing conversations.


Quick Takeaways

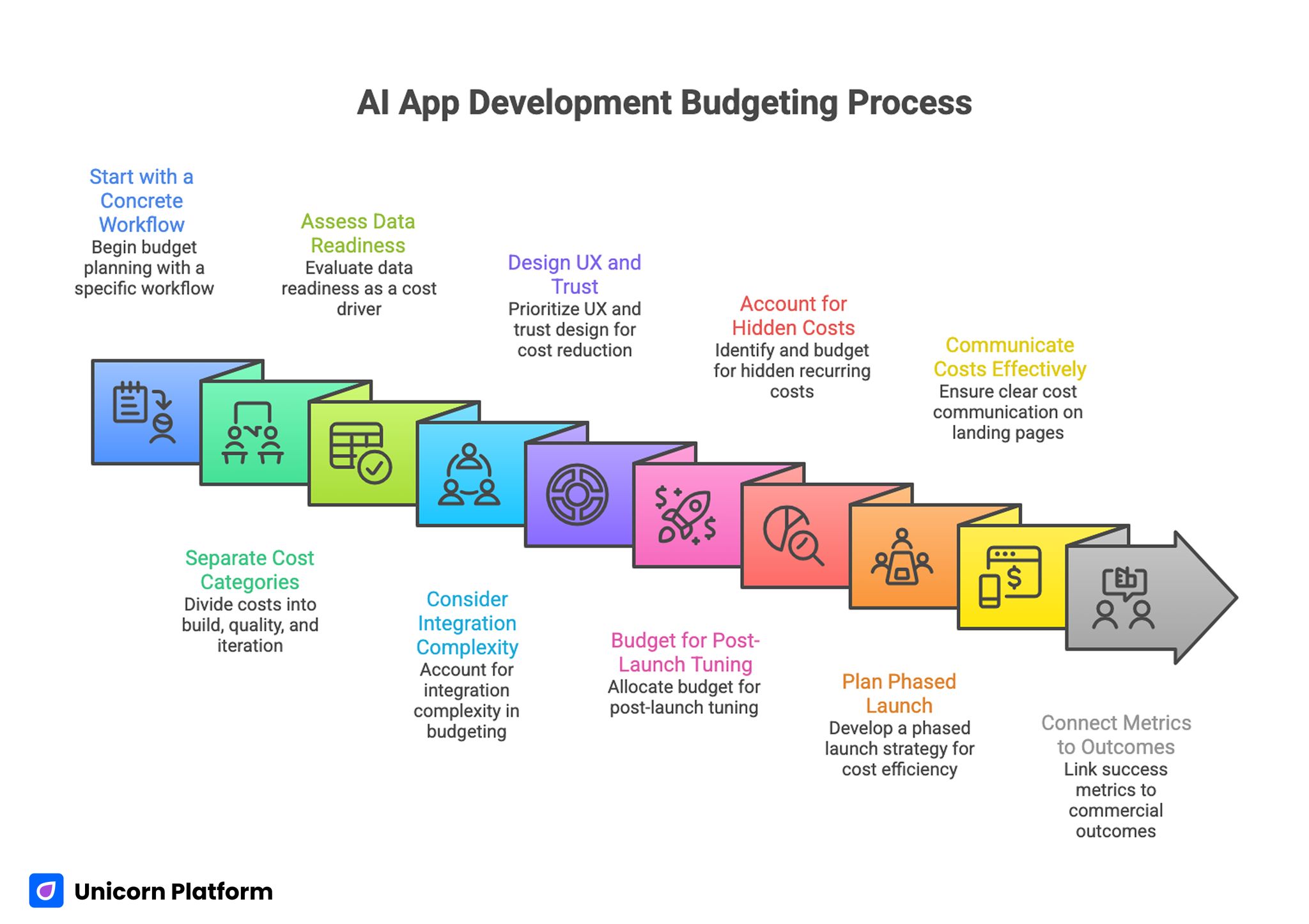

AI App Development Budgeting Process

- Budget planning should begin with one concrete workflow, not a broad AI feature list.

- Teams that separate build, quality, and iteration costs make better decisions under pressure.

- Data readiness is often a larger cost driver than model selection.

- Integration complexity can exceed core model work in many real projects.

- Early UX and trust design reduce expensive rework later.

- Post-launch tuning should be budgeted before development starts.

- Hidden recurring costs can outweigh initial delivery costs over time.

- A phased launch path usually outperforms a full-feature first release.

- Cost communication on landing pages affects lead quality as much as product pricing.

- Success metrics should connect product usage to commercial outcomes, not only release velocity.

Why Budget Conversations Break Down

Cost discussions fail when teams confuse an estimate with a strategy. An estimate gives a number. A strategy gives a path for reaching business value with controlled risk.

A common failure mode is scope inflation at planning time. Teams include assistant features, automation layers, analytics dashboards, custom integrations, and enterprise controls in one first release. This inflates complexity before anyone validates whether users will adopt the core workflow.

Another failure mode is separating technical scope from commercial reality. Engineering may estimate implementation effort correctly, but marketing and sales may still attract low-fit traffic because pricing logic and capability boundaries are not clear in public messaging.

The third issue is missing lifecycle budgeting. Teams often fund build and launch but underfund monitoring, retraining, QA improvements, and conversion optimization. Product quality then declines or plateaus when real usage grows.

Cost Planning Should Start With Product Scope

The most reliable method is to design one primary workflow first. Define who uses it, what task it completes, and what measurable outcome should improve when that task is done correctly.

Once that workflow is clear, map the required system blocks. This mapping reduces ambiguity before implementation begins.

- Input layer: data ingestion, validation, permissions.

- Intelligence layer: model routing, prompt logic, fallback behavior.

- Experience layer: interface flows, error states, trust prompts.

- Operations layer: monitoring, logging, governance, support.

This structure prevents underestimation because it exposes non-model work early. It also makes ownership and sequencing easier to review across teams.

When teams need a practical path for sequencing product scope before heavy budgeting, this AI app development guide is a useful planning reference. It helps teams validate whether the first release scope is realistic.

Budget Bands by Product Type

No single budget number works for every AI product. Cost depends on complexity, reliability requirements, and operational surface area.

Assistant or support workflow products

These projects often start with chat-based interactions, retrieval over internal knowledge, and ticket routing actions. They can launch quickly when knowledge quality is strong and integration requirements are light.

Cost risk rises when teams need strict permissions, multi-language support, or heavy domain-specific accuracy controls. Those constraints should be reflected in both timeline and QA planning.

Transcription and meeting intelligence products

These workflows include audio ingestion, speaker separation, summarization, structured action extraction, and export into existing systems. Complexity grows with noisy input quality and enterprise privacy requirements.

Recurring processing costs are a major factor, so planning should include realistic usage growth assumptions. Teams that skip this step often underestimate their run-rate by a wide margin.

Recommendation and personalization products

These systems require behavior data pipelines, ranking logic, and experimentation layers. They often need stronger analytics infrastructure than teams expect in early planning.

The product can look simple to end users while backend complexity remains high. Budget models should treat this as a normal pattern, not an exception.

Vision-enabled or document automation products

Computer vision and document intelligence work adds data quality constraints, annotation overhead, and reliability testing burdens. Budget pressure usually appears in QA and false-positive control, not only in model training.

The Core Cost Buckets You Should Always Track

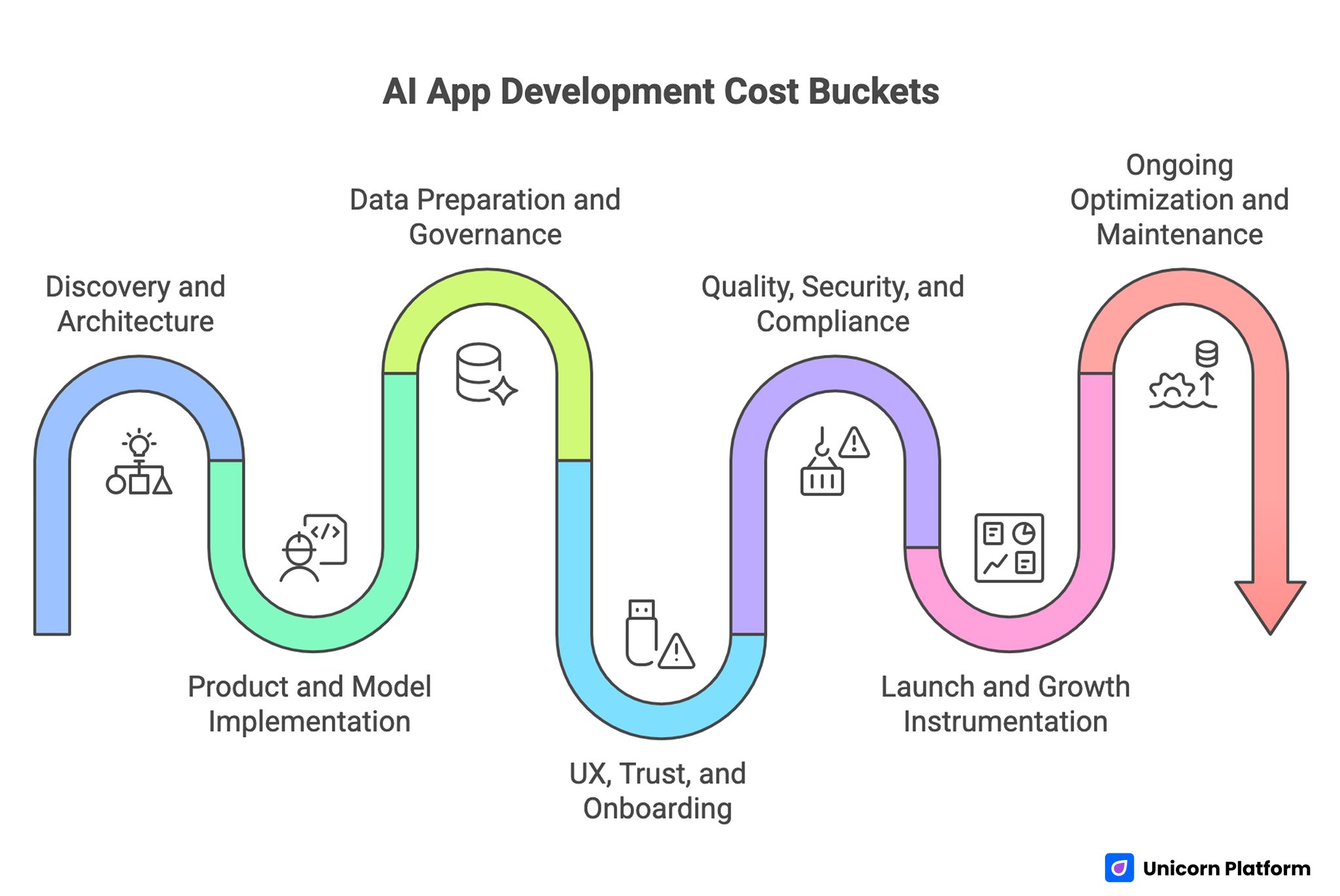

AI App Development Cost Buckets

Strong forecasts track distinct cost buckets instead of one blended total. This makes tradeoffs visible and helps finance decisions stay tied to product outcomes.

1. Discovery and architecture

This includes problem framing, user workflow definition, technical design, data audit, and risk mapping. Skipping this phase reduces short-term spend but increases total rework later.

2. Product and model implementation

This bucket covers application engineering, model integration, orchestration logic, and API infrastructure. Teams often focus only on this line item and miss the rest.

3. Data preparation and governance

Data cleaning, labeling, permissions, lifecycle controls, and policy design usually require more time than expected. This is where many projects quietly exceed early estimates.

4. UX, trust, and onboarding

User confidence depends on understandable outputs, clear boundaries, and error recovery paths. These elements are often treated as polish, but they are central to adoption and retention.

5. Quality, security, and compliance

Load testing, accuracy evaluation, permission controls, and compliance checks should be planned as first-class workstreams. Deferred quality work usually returns as expensive incident response.

6. Launch and growth instrumentation

Event tracking, funnel definitions, analytics dashboards, and experiment setup are required for post-launch learning. Without this layer, cost and performance decisions become guesswork.

7. Ongoing optimization and maintenance

Model tuning, prompt updates, UX refinements, and infrastructure cost controls continue long after release. Teams should budget this phase from the beginning instead of requesting emergency funds later.

Team Model Choices and Their Cost Impact

The delivery model changes both financial profile and execution speed. Choosing the wrong model can create avoidable cost drag for months.

In-house model

Internal teams offer deeper product context and long-term continuity. This model works well when the company expects ongoing AI investment and can support hiring timelines.

The tradeoff is higher fixed cost and slower ramp in specialized areas. This model is strongest when long-term internal capability is a strategic priority.

Agency or development partner model

Partner teams can accelerate delivery and provide packaged experience across similar projects. This is useful for teams that need speed and do not yet have complete internal AI staffing.

Risk appears when knowledge transfer and ownership handoff are weak. Budget should include explicit documentation and transition milestones.

Hybrid model

A hybrid setup uses partner expertise for early acceleration while internal teams own long-term operations. This approach can balance speed and control when responsibilities are clear.

Cost performance depends on clean governance and decision authority. Without that structure, hybrid teams can become expensive and slow.

Timeline Design: Budget by Milestone, Not Calendar

Calendar-based budgets create pressure to ship incomplete or unstable releases. Milestone-based budgets connect spending to evidence.

Use a staged model. Each stage should have a clear go or no-go decision gate.

- Milestone A: validated workflow and architecture baseline.

- Milestone B: functional prototype with measurable task success.

- Milestone C: MVP with instrumentation and support readiness.

- Milestone D: reliability hardening and compliance checks.

- Milestone E: launch plus first optimization loop.

Funding against milestones improves accountability and reduces sunk-cost escalation. It also prevents overbuilding before adoption signals are visible.
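The staged funding idea above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `Milestone` class, the `committed_budget` function, and all budget figures are hypothetical placeholders. The assumption is that each stage is funded only after the previous stage's go or no-go gate has passed.

```python
from dataclasses import dataclass


@dataclass
class Milestone:
    name: str
    budget: float        # placeholder amount, any single currency
    gate_passed: bool    # outcome of the go or no-go review for this stage


def committed_budget(milestones: list[Milestone]) -> float:
    """Sum budgets in order, stopping at the first stage whose gate has not passed.

    The stage with the open or failed gate is still counted, because its
    budget was released when the previous gate passed.
    """
    total = 0.0
    for milestone in milestones:
        total += milestone.budget
        if not milestone.gate_passed:
            break  # later stages stay unfunded until this gate is passed
    return total


# Illustrative plan using the five milestones named above (amounts are made up).
plan = [
    Milestone("A: validated workflow and architecture", 10_000, True),
    Milestone("B: functional prototype", 20_000, True),
    Milestone("C: MVP with instrumentation", 40_000, False),
    Milestone("D: reliability hardening", 15_000, False),
    Milestone("E: launch and first optimization loop", 15_000, False),
]

print(committed_budget(plan))  # 70000.0
```

In this example the budgets for D and E are not committed until the gate for C passes, which is the mechanism that limits sunk-cost escalation.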

Hidden Cost Drivers That Break Forecasts

Most overruns come from factors not included in first estimates. Teams that surface these drivers early stay in control.

Data quality and structure debt

Poor source data causes repeated model failures and manual cleanup cycles. This problem can dominate budget if not corrected at the beginning.

Integration complexity

Connecting AI outputs to existing tools, permissions, and workflows often takes longer than initial model integration. This integration burden should be estimated explicitly, not hidden in engineering buffer time.

Reliability requirements

Enterprise contexts need auditability, role-based access, and incident recovery processes. These requirements add significant engineering and QA effort.

Recurring model and infrastructure usage

As usage scales, inference and storage expenses can rise quickly. Teams should model usage scenarios before launch and review monthly after release.

Cross-functional coordination costs

Product, engineering, legal, marketing, and support alignment takes time. Weak governance creates delay and duplicated work.

How to Control Spend Without Reducing Value

Cost control should protect outcome quality, not merely cut scope at random. Disciplined prioritization works better than broad cost-cutting.

Start with one high-value workflow

Launch one workflow that has clear business importance and measurable user impact. This creates faster learning and lowers waste.

Reuse proven components

Adopt reusable interface patterns, prompt templates, and monitoring standards where possible. Reinventing each component for every release increases cost without improving user value.

Set quality thresholds early

Define accuracy, latency, and trust requirements before heavy build work begins. Thresholds reduce late-stage redesign and conflict.

Budget for iteration as a fixed line item

Plan optimization as scheduled work, not optional improvement. Teams that skip this budget usually spend more in crisis mode later.

Enforce experiment discipline

Change one major variable per test cycle and keep clear release notes. Controlled experiments produce clearer gains and lower analytical confusion.

Building a Cost-to-ROI Model That Decision Makers Trust

Executive decisions should connect investment to commercial outcomes over time. Short-term delivery metrics are not enough on their own.

A practical model includes the following views. Keeping them separate improves decision clarity.

- investment view: discovery, build, launch, optimization.

- adoption view: activation, repeat usage, retention.

- value view: time saved, conversion lift, operational efficiency.

- risk view: quality incidents, compliance exposure, churn impact.

With this structure, teams can evaluate not only whether costs are acceptable, but whether value growth is tracking as expected. This also makes stakeholder reporting much easier.

Scenario planning helps here. Use conservative, expected, and aggressive adoption assumptions, then update monthly with observed performance.

Pricing Communication and Conversion Quality

Public pricing communication affects cost efficiency because it shapes lead quality. If messaging is vague, sales teams spend time on low-fit opportunities.

A strong pricing narrative should clarify key decision points for buyers. Clarity here reduces low-fit conversations.

- what drives implementation effort,

- what is included at each scope level,

- what outcomes are realistic in early phases,

- what support model is available after launch.

Landing pages should guide users through effort expectations, not only headline value claims. This approach improves qualification quality before sales calls begin.

When refining section flow for pricing pages, this guide to high-converting landing page structure helps align explanation order with user decision behavior. Apply that sequencing logic before writing detailed copy.

Go-to-Market Flow for AI Cost-Sensitive Offers

AI product buyers often move through awareness, comparison, and decision stages with different concerns at each step. Content and CTA sequencing should match that progression.

Early-stage visitors usually need scope education and feasibility clarity. Mid-stage evaluators need side-by-side implementation logic and risk framing. Decision-stage buyers need clear qualification paths and next-step confidence.

A staged CTA model works well in practice. It keeps user intent aligned with the right next step.

- early stage: explore implementation options,

- comparison stage: review scope and delivery paths,

- decision stage: request a tailored estimate.

This structure improves pipeline quality because users self-select according to readiness. It also reduces avoidable handoffs in sales and support.

Mobile and UX Constraints in Cost-Focused Journeys

Many users first encounter budget-related pages on mobile devices. Dense content and unclear hierarchy can cause early drop-off before users reach meaningful details.

Prioritize first-screen clarity, short visual blocks, and immediate access to scope explanation. Keep form steps simple and progressive.

Mobile behavior patterns often shape conversion more than teams expect, especially when decisions involve budget risk. Strong mobile readability protects early-stage intent.

If your team is improving small-screen conversion flow, this mobile app landing page guide offers implementation-level direction. Use it as a checklist during release reviews.

Use Case Focus: Meeting Intelligence and Transcription Products

Transcription and meeting intelligence solutions are useful budgeting examples because they combine model usage with clear workflow economics. They also reveal recurring cost behavior quickly.

A robust version of this product class includes audio ingestion, diarization, summary generation, action extraction, and integration into project or CRM workflows. Each module has different reliability and cost implications.

Budget discipline here depends on usage modeling. Frequent meetings, long recordings, and multi-language needs can change recurring costs substantially. Teams should validate expected usage patterns before aggressive rollout.
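A rough usage model makes these sensitivities visible. The sketch below assumes per-audio-minute pricing and an optional surcharge for multi-language processing. Both are assumptions made for illustration, and the function name, parameters, and figures are hypothetical rather than taken from any vendor's price list.

```python
def monthly_transcription_cost(
    active_users: int,
    meetings_per_user: float,
    avg_minutes: float,
    price_per_minute: float,
    multilingual_share: float = 0.0,      # fraction of minutes needing multi-language handling
    multilingual_surcharge: float = 0.0,  # extra cost as a fraction of the base price
) -> float:
    """Estimate one month of recurring processing cost from usage assumptions."""
    minutes = active_users * meetings_per_user * avg_minutes
    base = minutes * price_per_minute
    extra = minutes * multilingual_share * price_per_minute * multilingual_surcharge
    return base + extra


# Same team, two usage patterns: short weekly syncs versus long, frequent calls.
light = monthly_transcription_cost(200, 4, 30, 0.01)
heavy = monthly_transcription_cost(200, 12, 60, 0.01,
                                   multilingual_share=0.25,
                                   multilingual_surcharge=0.5)

print(f"light usage: {light:,.2f}")
print(f"heavy usage: {heavy:,.2f}")
print(f"ratio: {heavy / light:.2f}x")
```

The user count is identical in both calls, yet the recurring cost differs several times over. That gap is why usage patterns should be validated before an aggressive rollout.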

Quality controls are equally important. Users need transparent confidence cues, easy correction paths, and clear handling of uncertain outputs. These UX and trust elements should be budgeted as required work, not optional enhancements.

Operational Governance: The Cost Multiplier Few Teams Plan For

Governance quality determines whether cost scales linearly or chaotically. It is one of the most important long-term budget variables.

Define clear owners for the following areas. Explicit ownership prevents budget drift.

- product scope and release sequencing,

- model and data governance,

- analytics and experiment integrity,

- security and compliance controls,

- customer support and escalation flows.

Without role clarity, teams duplicate work, delay decisions, and increase delivery risk. Governance is often the hidden multiplier behind budget variance.

Within Unicorn Platform, this governance model also improves content consistency because product boundaries, proof standards, and CTA logic remain aligned across touchpoints. Consistency reduces rework in later campaign cycles.

30-Day Rollout Plan for First Cost Validation

Week 1: Scope lock and baseline assumptions

Define one core workflow, one target user segment, and one primary success metric. Document cost assumptions for data, integration, and post-launch support.

Week 2: Prototype plus trust and UX foundations

Ship a functional prototype with explicit error handling and boundary messaging. Validate whether users understand outputs and can complete the intended task.

Week 3: Instrumentation and qualification flow

Add event tracking, funnel checkpoints, and staged qualification paths. Align landing page messaging with the actual implementation model.

When product flows include conversational support or guided interactions, this resource on AI assistants for websites can help connect feature design to operational value. It is most useful during instrumentation and qualification planning.

Week 4: Review and decision gate

Compare observed behavior with initial assumptions. Approve targeted expansion only if quality, adoption, and operational metrics meet predefined thresholds.
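One way to keep this gate objective is to write the thresholds down before Week 1 ends and check them mechanically in Week 4. The sketch below is illustrative only: the metric names, the threshold values, and the `gate_decision` function are hypothetical and should be replaced with whatever the team actually agreed on.

```python
# Each threshold is (kind, limit): "min" means observed must be at least the
# limit, "max" means observed must not exceed it. Values are placeholders.
THRESHOLDS = {
    "task_success_rate": ("min", 0.80),
    "weekly_repeat_usage": ("min", 0.40),
    "p95_latency_seconds": ("max", 5.0),
    "support_tickets_per_100_runs": ("max", 3.0),
}


def gate_decision(observed: dict[str, float],
                  thresholds: dict[str, tuple[str, float]] = THRESHOLDS):
    """Return (approved, failed_metrics) for a go or no-go review."""
    failures = []
    for metric, (kind, limit) in thresholds.items():
        value = observed.get(metric)
        if value is None:
            failures.append(metric)  # missing data counts as a failed check
        elif kind == "min" and value < limit:
            failures.append(metric)
        elif kind == "max" and value > limit:
            failures.append(metric)
    return (not failures, failures)


week4 = {
    "task_success_rate": 0.86,
    "weekly_repeat_usage": 0.35,
    "p95_latency_seconds": 3.2,
    "support_tickets_per_100_runs": 2.1,
}

approved, failed = gate_decision(week4)
print(approved, failed)  # False ['weekly_repeat_usage']
```

Treating a missing metric as a failure is a deliberate choice in this sketch: expansion should not be approved on the basis of data that was never collected.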

90-Day Optimization Plan for Cost Efficiency

Month 1: Stabilize

Reduce workflow friction, improve output consistency, and validate integration reliability. Remove low-value features that increase support burden.

Month 2: Optimize

Run controlled tests on onboarding, trust cues, and qualification logic. Focus on one major variable per cycle to protect signal quality.

Month 3: Scale

Expand only proven patterns to adjacent use cases. Standardize documentation, update assumptions, and tighten recurring cost monitoring.

This sequence protects budget by linking expansion to evidence instead of optimism. It also keeps team confidence high during scale decisions.

Practical Budget Checklist Before Greenlighting Build

Use this checklist to confirm readiness before funding the build. Teams should review each item explicitly.

- Primary workflow is explicit and measurable.

- Data sources and quality constraints are documented.

- Integration dependencies are mapped with owners.

- Quality and compliance requirements are defined.

- Post-launch iteration budget is approved.

- Landing page cost narrative matches real delivery scope.

- Analytics events and success metrics are finalized.

- Support and escalation model is in place.

Teams that pass these checks usually move faster with fewer late surprises. They also make post-launch decisions with better data.

Example Cost Worksheet for Planning Sessions

Teams often ask for a simple way to convert strategy into a working budget model. A lightweight worksheet solves this by forcing assumptions into visible numbers before implementation starts.

Begin with five input groups:

- Expected active users by month for the first two quarters.

- Average workflow runs per active user.

- Core integration points required for launch.

- Reliability and compliance requirements by user tier.

- Planned release cadence for optimization cycles.

After inputs are captured, run the worksheet in sequence. First estimate implementation effort by milestone, then estimate recurring platform and model usage, and finally add an optimization reserve tied to expected iteration frequency.

A practical formula for early planning is:

- total quarter cost = delivery milestone budget + recurring usage budget + optimization reserve.

- delivery milestone budget = discovery + prototype + MVP + reliability hardening.

- recurring usage budget = projected workflow volume × average processing cost per workflow.

- optimization reserve = 15-30% of delivery milestone budget, depending on uncertainty level.

This formula is not a universal truth. It is a decision tool for testing assumptions and comparing scope options before committing engineering capacity.
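The formula can be turned into a small script so that assumptions are easy to change during a planning session. The following is a minimal sketch: the `QuarterInputs` class, the `quarter_cost` function, and every number in the example are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class QuarterInputs:
    # Delivery milestone components (same currency unit throughout).
    discovery: float
    prototype: float
    mvp: float
    reliability_hardening: float
    # Recurring usage assumptions for the quarter.
    workflow_runs: int      # projected workflow volume
    cost_per_run: float     # average processing cost per workflow
    # Optimization reserve as a fraction of the delivery budget (0.15 to 0.30).
    reserve_rate: float


def quarter_cost(inputs: QuarterInputs) -> dict[str, float]:
    """Apply the worksheet formula and return each component plus the total."""
    if not 0.15 <= inputs.reserve_rate <= 0.30:
        raise ValueError("reserve_rate should fall between 0.15 and 0.30")
    delivery = (inputs.discovery + inputs.prototype
                + inputs.mvp + inputs.reliability_hardening)
    recurring = inputs.workflow_runs * inputs.cost_per_run
    reserve = inputs.reserve_rate * delivery
    return {
        "delivery": delivery,
        "recurring": recurring,
        "reserve": reserve,
        "total": delivery + recurring + reserve,
    }


example = QuarterInputs(
    discovery=10_000, prototype=20_000, mvp=40_000, reliability_hardening=10_000,
    workflow_runs=20_000, cost_per_run=0.25, reserve_rate=0.25,
)
print(quarter_cost(example)["total"])  # 105000.0
```

Returning the components alongside the total keeps the three budget lines visible, so a change in scope or usage shows up in the line it actually affects.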

How to Read the Worksheet Without False Confidence

A model can look clean and still be wrong if assumptions are weak. The best teams review assumption quality with the same rigor they apply to technical architecture.

Run three scenarios every time:

- conservative case with slower adoption and higher support burden,

- expected case with balanced growth and stable workflow behavior,

- aggressive case with faster adoption and tighter reliability margins.

Then evaluate where breakpoints appear. If small changes in usage create large cost swings, focus on control levers such as routing logic, caching, and workflow constraints before scale campaigns begin.
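A short script can make the three scenarios and their cost swings explicit. The sketch below assumes a simple monthly run-rate of usage cost plus support cost. The scenario figures, the `COST_PER_RUN` constant, the `SWING_TOLERANCE` value, and the function names are all hypothetical.

```python
COST_PER_RUN = 0.25      # placeholder average processing cost per workflow run
SWING_TOLERANCE = 0.50   # flag scenarios more than 50% away from the expected case

# Placeholder assumptions for the three planning cases.
SCENARIOS = {
    "conservative": {"monthly_runs": 8_000, "support_cost": 3_000.0},
    "expected": {"monthly_runs": 20_000, "support_cost": 2_000.0},
    "aggressive": {"monthly_runs": 50_000, "support_cost": 2_500.0},
}


def monthly_run_rate(monthly_runs: int, support_cost: float,
                     cost_per_run: float = COST_PER_RUN) -> float:
    """Recurring monthly cost: usage-driven processing plus support."""
    return monthly_runs * cost_per_run + support_cost


def swing_vs_expected(scenarios: dict[str, dict]) -> dict[str, float]:
    """Relative cost change of each scenario against the expected case."""
    base = monthly_run_rate(**scenarios["expected"])
    return {name: monthly_run_rate(**params) / base - 1.0
            for name, params in scenarios.items()}


swings = swing_vs_expected(SCENARIOS)
for name, swing in swings.items():
    flag = "review control levers" if abs(swing) > SWING_TOLERANCE else "ok"
    print(f"{name:>12}: {monthly_run_rate(**SCENARIOS[name]):>9,.0f}  {swing:+.0%}  {flag}")
```

With these placeholder inputs the aggressive case more than doubles the expected run-rate, which is the kind of breakpoint that should trigger a look at routing, caching, or workflow constraints before a scale campaign. As production data arrives, the scenario dictionaries are the natural place to swap planning assumptions for observed values.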

Review confidence levels monthly. Inputs based on real usage should replace planning assumptions as soon as production data is available.

Vendor Estimate Review: Questions That Prevent Rework

Vendor proposals can look comparable while hiding major differences in scope boundaries. A structured review set helps teams compare like-for-like and avoid later contract disputes.

Use these questions in every estimate review:

- Which delivery artifacts are included at each milestone?

- What is explicitly excluded from first release scope?

- How are model and infrastructure usage assumptions defined?

- Which QA and compliance checks are included before launch?

- What handoff materials are delivered for internal ownership?

- How is post-launch support scoped and priced?

Answers should be specific enough to map into your worksheet categories. If an estimate cannot be mapped cleanly, the scope is probably ambiguous.

Ask for risk notes in writing. A high-quality proposal explains where uncertainty is highest and what mitigation actions are recommended.

Documentation Habits That Keep Budgets Accurate

Budget accuracy improves when documentation is treated as an operating asset rather than an administrative task. Short, consistent logs create high leverage over time.

For each release cycle, document:

- assumption updates and why they changed,

- observed usage versus expected usage,

- quality incidents and resulting fixes,

- conversion impact by major workflow change,

- spend movement by cost bucket.

This history prevents repeated estimation errors and speeds executive decision-making. It also makes future forecasting more realistic because the team can reference actual operating behavior.
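The last item in the list, spend movement by cost bucket, is simple to compute once planned and actual figures are logged per bucket. The sketch below is one possible shape for that log. The bucket names, amounts, and the `spend_movement` function are hypothetical.

```python
def spend_movement(planned: dict[str, float],
                   actual: dict[str, float]) -> dict[str, dict]:
    """Compare actual spend with plan for every bucket seen in either record.

    Buckets that appear only in `actual` are unplanned spend; their
    percentage change is reported as None because there is no baseline.
    """
    rows = {}
    for bucket in sorted(set(planned) | set(actual)):
        plan_amount = planned.get(bucket, 0.0)
        delta = actual.get(bucket, 0.0) - plan_amount
        pct = delta / plan_amount if plan_amount else None
        rows[bucket] = {"delta": delta, "pct": pct}
    return rows


planned = {"data_preparation": 20_000.0, "implementation": 50_000.0}
actual = {"data_preparation": 26_000.0, "implementation": 48_000.0,
          "incident_response": 3_000.0}

for bucket, row in spend_movement(planned, actual).items():
    pct = "n/a" if row["pct"] is None else f"{row['pct']:+.0%}"
    print(f"{bucket:<18} {row['delta']:>+10,.0f}  {pct}")
```

Keeping unplanned buckets in the output matters because overruns often come from work that was missing from the original estimate rather than from growth in a planned line.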

FAQ: How Much Does It Cost to Build an AI App

What is the best way to estimate the budget for an AI product?

Start with a workflow-first model. Define one user task, required system blocks, and milestone-based delivery phases. Then allocate budget across discovery, implementation, quality, and optimization.

Why do AI project estimates often increase after kickoff?

Initial estimates usually miss data readiness work, integration complexity, and post-launch reliability requirements. These are major cost drivers that appear during execution.

Should startups build full-feature AI products from the start?

Usually no. A focused initial workflow gives better learning and lower risk. Full-feature first releases tend to inflate complexity before product-market evidence exists.

How much should be reserved for post-launch iteration?

The exact amount depends on product complexity and usage growth, but iteration should always be a planned line item. Treating optimization as optional leads to expensive reactive fixes.

Which team model is usually most efficient?

It depends on internal capabilities and timeline pressure. Hybrid models are often effective when responsibilities and handoff criteria are clearly defined.

How can teams reduce hidden recurring costs?

Model usage scenarios early, monitor monthly, and align product behavior with cost controls. Also prioritize data quality and clean integration design to avoid repeated rework.

Does better pricing communication really affect cost efficiency?

Yes. Clear scope and effort messaging improves lead qualification, shortens sales cycles, and reduces time spent on low-fit opportunities.

What should be measured after launch to validate budget decisions?

Track activation, repeat usage, output quality, support burden, and commercial outcomes. A budget is validated only when product behavior and business impact align.

How do transcription-style AI products differ in budgeting?

They combine clear user value with potentially high recurring processing costs. Usage growth, quality controls, and privacy requirements must be modeled carefully.

What is the biggest budgeting mistake teams make in AI projects?

Treating cost as a one-time build decision. Durable success comes from lifecycle planning that includes quality, monitoring, and iterative improvement.

Final Takeaway

Budgeting AI products in 2026 is less about finding one perfect number and more about designing a repeatable path from scope to outcome. Teams that align workflow definition, milestone funding, quality gates, and post-launch learning can control cost while improving product reliability.

The strongest programs use financial discipline and editorial clarity together. When scope decisions, pricing communication, and optimization loops are connected, investment turns into measurable growth rather than expensive uncertainty.