Table of Contents

- Conversion Architecture for AI-Generated Pages

- Human Editing Framework That Improves AI Output

- 30-Day Execution Plan for an AI Landing Page Program

- Common Failure Modes and How to Fix Them

- FAQ

AI landing page generators are no longer side tools for experimentation. They are now part of core go-to-market workflows for startups, agencies, and product teams that need to launch quickly without sacrificing quality.

Speed alone does not produce revenue. Many teams can publish an AI-generated page in one hour, but conversion stays weak because the message is vague, trust is underdeveloped, and the call to action does not match visitor intent.

A better approach treats AI as a production accelerator and treats editorial review as a quality control system. The winning workflow combines prompt quality, conversion structure, and ongoing optimization rather than relying on one generated draft.

This guide shows exactly how to do that in 2026. You will get practical frameworks for choosing a tool, creating stronger prompts, editing drafts with intent, and running a repeatable QA process that protects performance.

If you need a baseline build flow before optimization, this detailed walkthrough on creating an AI landing page is a strong starting point for setup and initial structure.


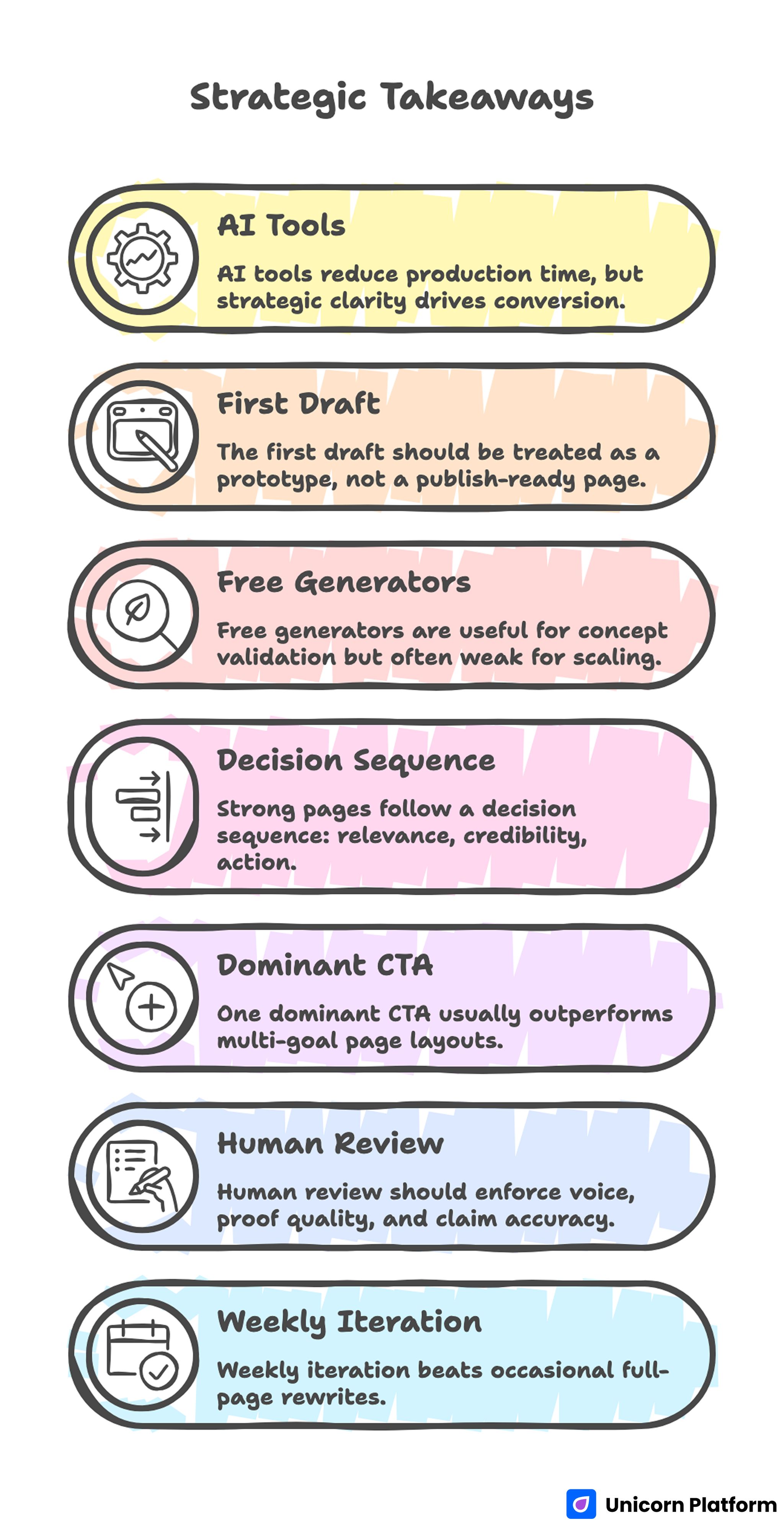

Quick Strategic Takeaways

- AI tools reduce production time, but strategic clarity drives conversion.

- The first draft should be treated as a prototype, not a publish-ready page.

- Free generators are useful for concept validation but often weak for scaling.

- Strong pages follow a decision sequence: relevance, credibility, action.

- One dominant CTA usually outperforms multi-goal page layouts.

- Human review should enforce voice, proof quality, and claim accuracy.

- Weekly iteration beats occasional full-page rewrites.

Why AI Landing Page Generators Matter Now

The main market shift is operational speed. Campaign windows are shorter, offers evolve faster, and growth teams need copy and layout updates in hours, not weeks. AI generation helps teams ship quickly when product positioning changes or when a new acquisition channel opens.

Another shift is volume pressure. Teams now run more segmented campaigns, which means more landing pages, more message variants, and more analytics feedback loops. Manual page production for each segment is slow and expensive without a structured generation workflow.

AI tools solve part of this problem by shortening the path from idea to draft. The part they do not solve is business alignment, because the model cannot decide your highest-value audience, your proof quality threshold, or your conversion tradeoffs.

That is why high-performing teams pair AI generation with strict editorial and conversion QA. They publish fast, but they do not publish blindly.

Current industry trends show that AI is reshaping how digital marketing teams operate, increasing speed without sacrificing quality, as discussed in “How AI Is Changing Digital Marketing” by Forbes.

What an AI Landing Page Generator Should Actually Deliver

A useful generator should produce more than surface-level copy. It should help with structure, message variation, and faster experimentation while keeping the page easy to edit after generation.

Core capabilities to expect

- Intent-aware hero and subhead options for different audience angles.

- Section-level layout suggestions that support narrative flow.

- Fast generation of CTA variants aligned to funnel stage.

- Editable output that can be tuned without developer intervention.

- Basic SEO scaffolding for title, headings, and semantic structure.

Capabilities that are often overrated

- One-click "high-converting" claims without proof or context.

- Fully autonomous optimization promises with no human review.

- Visual polish that hides weak message clarity.

A practical evaluation starts by asking one question: can this tool help my team move from draft to reliable conversion performance faster than the current workflow?

Free AI Landing Page Generator vs Paid Tools: Practical Tradeoffs

Free tools are attractive for early-stage teams because they reduce upfront cost and make experimentation easy. They can be a smart entry point when you need to test direction quickly and have limited resources.

The downside appears when teams try to scale. Free outputs are often more generic, customization is limited, integration depth is weaker, and conversion controls are frequently thin. Those limits are manageable for testing, but they become expensive when paid traffic and lead quality are involved.

Where free tools can work well

- Message exploration during early positioning discovery.

- Quick campaign prototypes before committing budget.

- Internal alignment drafts for sales or marketing review.

- Side experiments where conversion risk is low.

Where free tools usually create bottlenecks

- Consistent brand voice across multiple campaigns.

- Advanced form logic and lead-routing workflows.

- Reliable SEO and structured metadata control.

- Deeper performance experiments across segments.

Decision rule for upgrades

Upgrade when the opportunity cost of weak control exceeds tool cost. If low-fit leads, slow edits, or weak testing capacity are already hurting outcomes, the paid workflow is usually cheaper than staying limited.

For teams designing automated creation workflows with stronger control, this guide on an OpenAI website builder model explains how to operationalize speed without losing quality.

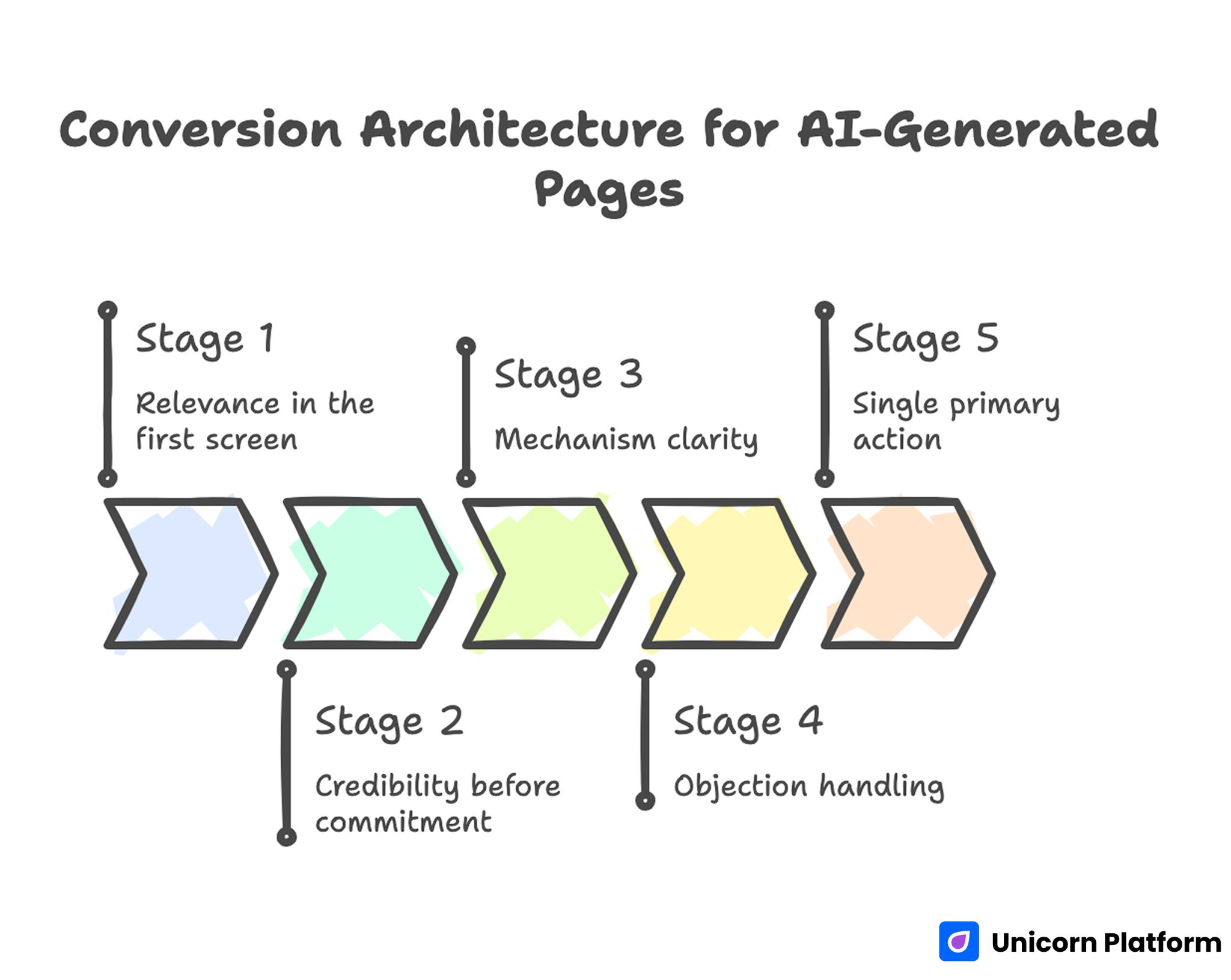

Conversion Architecture for AI-Generated Pages

Most successful landing pages still follow a predictable decision path. AI can accelerate the writing, but it does not replace the sequence visitors need to feel confident. Design and UX principles that improve landing page conversion are well documented by Smashing Magazine in “Landing Pages: Conversion, UX, and Best Practices”.

Stage 1: Relevance in the first screen

The first screen should answer three questions quickly: who this is for, what result it creates, and why now. Broad claims reduce trust because they sound like template copy.

Specificity is the fastest conversion lever at this stage. Replace abstract wording with outcome language linked to a clear audience.

Stage 2: Credibility before commitment

Trust must appear before major commitment requests. A short credibility block near the first CTA can reduce hesitation better than a long testimonial section hidden near the footer.

Useful proof formats include role-specific testimonials, concise outcomes, and selected references that support your core claim.

Stage 3: Mechanism clarity

Visitors need to understand how the offer works, not only what it promises. A compact process section reduces uncertainty and improves qualified action.

Keep process language practical. Explain inputs, steps, timeline, and expected output.

Stage 4: Objection handling

Strong pages surface objections early and answer them directly. Typical objections include fit, effort, price, integration difficulty, and expected results.

A focused FAQ block is often enough when answers are specific and concise.

Stage 5: Single primary action

The page should guide visitors toward one dominant next step. Multiple equal-priority CTAs often lower conversion confidence by creating choice friction.

Clear post-submit expectations also matter. Visitors should know response time, next interaction format, and required preparation.

How to Prompt for Better AI Landing Page Drafts

Prompt quality is usually the biggest predictor of draft quality. Generic prompts produce generic pages, even with advanced models.

A high-performance prompt template

Use this structure when generating first drafts:

- Audience: exact role, maturity stage, and primary pain.

- Offer: what is included and what is excluded.

- Desired outcome: measurable change after conversion.

- Differentiation: why this offer is not interchangeable.

- Proof placeholders: real signals that will be added manually.

- CTA: one primary action and one secondary fallback.

- Tone constraints: vocabulary, brand voice, and banned phrasing.

- Section constraints: target length per section and reading level.

Practical prompt example

"Create a landing page for a B2B SaaS onboarding audit. Audience: product-led growth teams at seed to Series B companies. Pain: trial users do not activate in week one. Outcome: improved activation through onboarding friction fixes. CTA: book a diagnostic call. Tone: direct, practical, non-hype. Include proof placeholders and a concise FAQ."

This structure produces stronger drafts because it removes ambiguity from intent, offer scope, and conversion action.
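Teams that reuse the template often keep it as a structured brief so that no field is skipped. The sketch below is one hedged way to do that in Python. The `PageBrief` class, the `build_prompt` function, and the bracketed "fill in" values are illustrative assumptions, not part of any generator's API; the filled values come from the example prompt above.

```python
from dataclasses import dataclass, fields


@dataclass
class PageBrief:
    """One field per line of the prompt template (names are illustrative)."""
    audience: str
    offer: str
    outcome: str
    differentiation: str
    proof_placeholders: str
    cta: str
    tone: str
    section_constraints: str


# Human-readable labels used when the brief is rendered as a prompt.
LABELS = {
    "audience": "Audience",
    "offer": "Offer",
    "outcome": "Desired outcome",
    "differentiation": "Differentiation",
    "proof_placeholders": "Proof placeholders",
    "cta": "CTA",
    "tone": "Tone constraints",
    "section_constraints": "Section constraints",
}


def build_prompt(brief: PageBrief) -> str:
    """Render the brief as one labeled line per field; reject empty fields."""
    lines = ["Create a landing page draft from the brief below."]
    for f in fields(brief):
        value = getattr(brief, f.name).strip()
        if not value:
            raise ValueError(f"brief field '{f.name}' is empty")
        lines.append(f"{LABELS[f.name]}: {value}")
    return "\n".join(lines)


# Values taken from the example prompt; bracketed items must be filled by the team.
example_brief = PageBrief(
    audience="Product-led growth teams at seed to Series B companies "
             "whose trial users do not activate in week one.",
    offer="B2B SaaS onboarding audit. [fill in: what is included and excluded]",
    outcome="Improved activation through onboarding friction fixes.",
    differentiation="[fill in: why this offer is not interchangeable]",
    proof_placeholders="Mark every testimonial or metric as [PROOF TO ADD].",
    cta="Primary: book a diagnostic call. [fill in: secondary fallback]",
    tone="Direct, practical, non-hype.",
    section_constraints="[fill in: target length per section and reading level]",
)

if __name__ == "__main__":
    print(build_prompt(example_brief))
```

Raising an error on an empty field is a deliberate choice here: a missing audience or CTA is easier to catch before generation than after a generic draft comes back.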

Human Editing Framework That Improves AI Output

Generated drafts should move through a structured edit, not ad hoc line changes. A simple five-pass model helps teams improve quality quickly and consistently.

Pass 1: Strategy alignment

Check that the page matches your audience, offer stage, and campaign objective. Remove sections that do not support the primary conversion path.

Pass 2: Message specificity

Replace generic benefit language with concrete outcomes and use-case clarity. Keep claims realistic and decision-oriented.

Pass 3: Proof and trust

Insert real proof elements and remove placeholder claims. Every trust signal should support a specific decision concern.

Pass 4: CTA and friction

Validate that one primary CTA leads the page and that form fields match funnel stage. Shorten unnecessary fields that reduce completion.

Pass 5: Readability and brand voice

Tighten sentence flow, simplify jargon, and align tone with brand standards. The final page should read like professional editorial copy, not generated text.

When teams need fast iterative edits after launch, this workflow for updating a landing page with Ask AI prompts is useful for controlled revisions between experiments.

Tool Selection Scorecard for AI Landing Page Generators

Choosing a tool by demo quality alone is risky. Use a weighted scorecard that reflects operational and conversion priorities.

Recommended criteria

- Draft quality consistency across multiple prompts.

- Section structure control and editing flexibility.

- Voice and tone control features.

- Conversion-oriented components such as form and CTA options.

- SEO controls for metadata and heading structure.

- Integration depth with analytics and marketing stack.

- Collaboration workflow for non-technical teams.

- Export portability and ownership clarity.

- Pricing predictability at your expected output volume.

Simple weighting model

- Conversion control: 25%

- Editing flexibility: 20%

- Integration quality: 15%

- Draft quality consistency: 15%

- SEO controls: 10%

- Team workflow: 10%

- Cost predictability: 5%

Scoring with clear criteria prevents feature-driven decisions that fail in real production conditions.
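The weighting model reduces to a weighted average. The sketch below uses the percentages listed above and assumes each criterion is rated on a 1 to 5 scale; the rating scale, the function name, and the sample ratings for a hypothetical tool are illustrative assumptions.

```python
# Weights from the scorecard, stored as whole percentages to avoid rounding drift.
WEIGHTS_PERCENT = {
    "conversion_control": 25,
    "editing_flexibility": 20,
    "integration_quality": 15,
    "draft_quality_consistency": 15,
    "seo_controls": 10,
    "team_workflow": 10,
    "cost_predictability": 5,
}
assert sum(WEIGHTS_PERCENT.values()) == 100


def weighted_score(ratings: dict) -> float:
    """Return the weighted average of 1-5 ratings, one rating per criterion."""
    missing = WEIGHTS_PERCENT.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for name in WEIGHTS_PERCENT:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"rating for '{name}' must be between 1 and 5")
    total = sum(WEIGHTS_PERCENT[name] * ratings[name] for name in WEIGHTS_PERCENT)
    return total / 100


# Hypothetical ratings for one candidate tool.
tool_a = {
    "conversion_control": 4,
    "editing_flexibility": 5,
    "integration_quality": 3,
    "draft_quality_consistency": 4,
    "seo_controls": 3,
    "team_workflow": 4,
    "cost_predictability": 2,
}

if __name__ == "__main__":
    print(weighted_score(tool_a))  # 3.85
```

Scoring every candidate against the same ratings sheet makes the comparison repeatable, and a tool with a strong demo but weak conversion control loses the most points because that criterion carries the largest weight.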

QA Checklist Before Publishing Any AI-Generated Page

A strict QA gate reduces preventable performance loss. Run this checklist before every launch and after every major update.

Content QA

- Headline states audience and outcome clearly.

- Value proposition is specific and non-generic.

- Claims are accurate and verifiable.

- Proof is concrete, current, and relevant.

UX and conversion QA

- Primary CTA is obvious and consistent across sections.

- Forms are short enough for the intended funnel stage.

- Important objections are addressed before final CTA.

- Mobile rendering preserves hierarchy and readability.

Technical QA

- Tracking events fire correctly for key actions.

- Page speed is acceptable on common mobile devices.

- Metadata and heading structure are complete.

- Redirects, thank-you flows, and notifications work.

Teams that treat QA as mandatory rather than optional usually recover tool cost faster through improved conversion reliability.

Scenario Playbooks: How Different Teams Should Use AI Generators

Different business models need different page strategies. The generator can stay the same while workflow priorities change.

Startup founder playbook

Focus on speed to validated message. Generate several positioning variants, test one audience at a time, and optimize for qualified conversations instead of broad lead volume.

Use short test cycles with one major variable per iteration. Preserve a simple change log so each update has a measurable reason.
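A change log can be as simple as a list that refuses to open a second test while one is still running. The sketch below is one possible shape; the `Experiment` and `ChangeLog` names, their fields, and the sample entries are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Experiment:
    variable: str            # the single thing that changed, e.g. "headline"
    hypothesis: str          # why the change should help
    started: date
    outcome: Optional[str] = None  # filled in when the test is closed


class ChangeLog:
    """Keeps experiments in order and allows only one open test at a time."""

    def __init__(self) -> None:
        self.entries: List[Experiment] = []

    def open_experiment(self) -> Optional[Experiment]:
        """Return the experiment that has no recorded outcome yet, if any."""
        return next((e for e in self.entries if e.outcome is None), None)

    def start(self, variable: str, hypothesis: str, started: date) -> Experiment:
        running = self.open_experiment()
        if running is not None:
            raise RuntimeError(
                f"close the '{running.variable}' test before starting another"
            )
        experiment = Experiment(variable, hypothesis, started)
        self.entries.append(experiment)
        return experiment

    def close(self, outcome: str) -> Experiment:
        running = self.open_experiment()
        if running is None:
            raise RuntimeError("no open experiment to close")
        running.outcome = outcome
        return running


if __name__ == "__main__":
    log = ChangeLog()
    log.start("headline", "Naming the audience will raise qualified calls", date(2026, 1, 5))
    log.close("Qualified calls rose; keep the new headline")
    log.start("proof placement", "Proof near the first CTA reduces hesitation", date(2026, 1, 12))
    for entry in log.entries:
        print(entry.variable, "->", entry.outcome or "running")
```

The same structure works in a spreadsheet. The rule that matters is that every entry has a hypothesis before launch and an outcome before the next test begins.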

SaaS growth team playbook

Prioritize trial activation and downstream pipeline quality. Connect landing page messaging directly to onboarding outcomes, and avoid generic acquisition copy that attracts low-intent users.

Build segment variants only when base messaging is stable. Scaling weak baseline copy across segments multiplies inefficiency.

Agency playbook

Standardize prompt templates, QA rules, and reporting formats. Agencies gain leverage by making quality repeatable across accounts rather than reinventing each workflow.

Create clear client review checkpoints for claims, proof assets, and compliance requirements before publication.

SEO and AI Search Readiness for Landing Pages

SEO for AI-generated pages should be practical and intent-driven. Search visibility improves when page language reflects actual user questions and when structure is clean enough for fast interpretation.

Start with fundamentals: precise title tags, clear H1-H3 hierarchy, concise meta descriptions, and purposeful internal links. Avoid keyword stuffing and avoid forcing repeated exact matches that make copy harder to read.
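Some of these fundamentals can be checked automatically before publishing. The sketch below uses only Python's standard `html.parser` module to flag a missing title, a missing meta description, and heading problems. The specific rules it enforces (exactly one H1, no skipped heading levels) are common conventions assumed here for illustration, not requirements stated by any search engine, and the `audit_structure` name is hypothetical.

```python
from html.parser import HTMLParser

HEADING_TAGS = ("h1", "h2", "h3", "h4", "h5", "h6")


class StructureAudit(HTMLParser):
    """Collects the title text, meta description, and heading levels of a page."""

    def __init__(self) -> None:
        super().__init__()
        self.heading_levels = []
        self.title_parts = []
        self.meta_description = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self.heading_levels.append(int(tag[1]))
        elif tag == "title":
            self._in_title = True
        elif tag == "meta":
            attr = dict(attrs)
            if (attr.get("name") or "").lower() == "description":
                self.meta_description = attr.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title_parts.append(data)


def audit_structure(html: str) -> list:
    """Return a list of human-readable problems; an empty list means none found."""
    parser = StructureAudit()
    parser.feed(html)
    parser.close()

    problems = []
    if not "".join(parser.title_parts).strip():
        problems.append("missing <title>")
    if not (parser.meta_description or "").strip():
        problems.append("missing meta description")

    levels = parser.heading_levels
    h1_count = levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    # A heading may go at most one level deeper than the heading before it.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"heading jumps from h{previous} to h{current}")
    return problems


if __name__ == "__main__":
    page = "<html><head></head><body><h1>Audit</h1><h3>FAQ</h3></body></html>"
    for problem in audit_structure(page):
        print(problem)
```

A check like this only covers structure. Whether the title and headings actually match visitor intent still needs a human read.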

Support discoverability with section-level clarity. A page that answers specific use-case questions often performs better than a broad page trying to rank for every related phrase.

Also prepare for AI-assisted discovery patterns by writing direct answer blocks for key objections and implementation questions. Clarity helps both readers and retrieval systems understand relevance quickly.

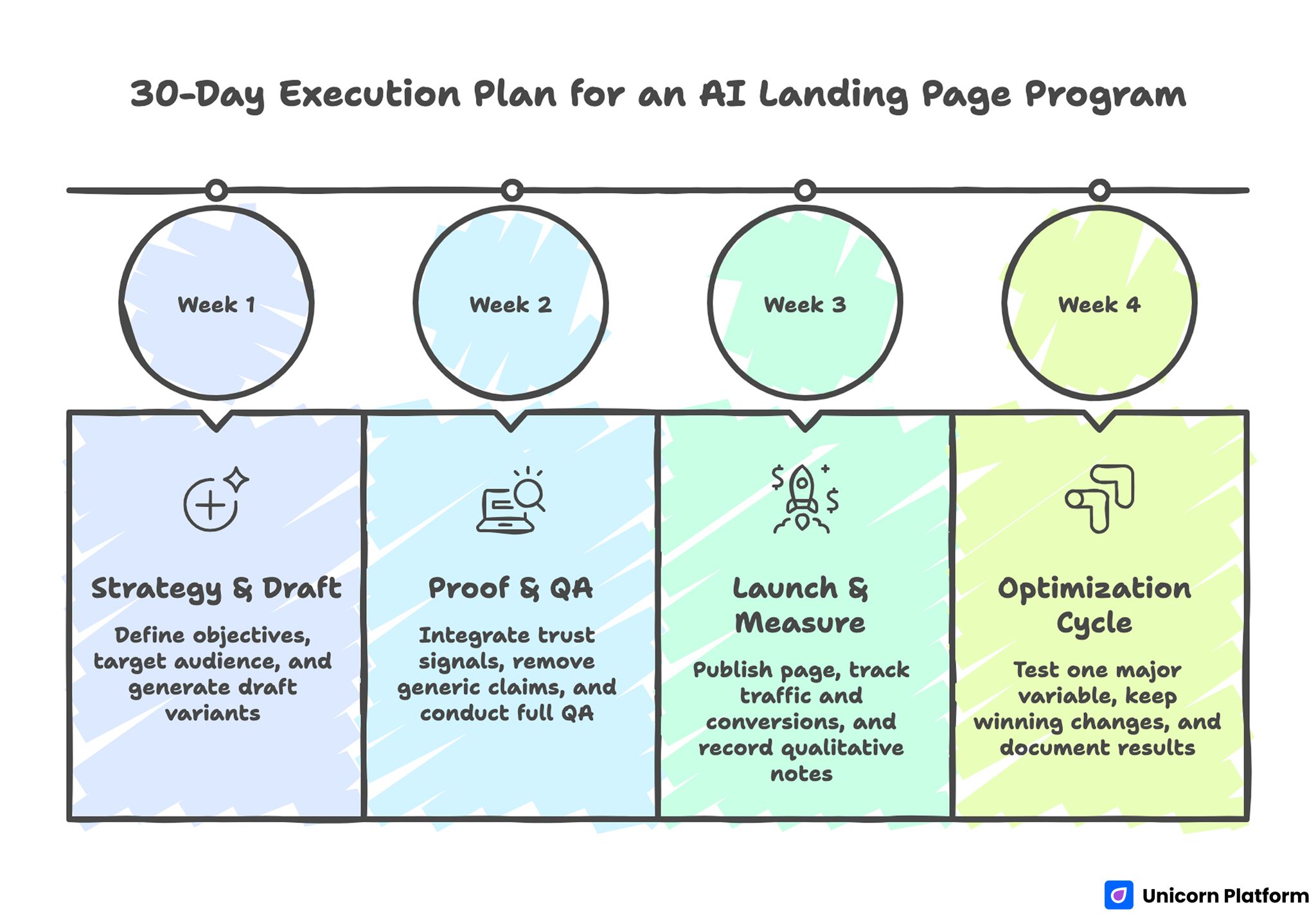

30-Day Execution Plan for an AI Landing Page Program

Week 1: Define strategy and draft set

Set objective, target audience, and one primary conversion action. Generate three to five draft variants and select one baseline using relevance and clarity, not just style.

Week 2: Proof integration and QA hardening

Add real trust signals, remove generic claims, and run full QA on content, UX, and technical behavior. Confirm that analytics tracking reflects your core funnel actions.

Week 3: Launch and baseline measurement

Publish the page, run stable traffic for measurement, and track conversion quality by source. Record qualitative lead notes, not only quantitative metrics.

Week 4: Controlled optimization cycle

Test one major variable such as headline specificity, proof placement, or CTA wording. Keep winning changes and document why they performed better.

90-Day Growth Model for Compounding Results

Days 1-30: Stabilize fundamentals

Focus on message clarity, trust credibility, and conversion flow integrity. Avoid over-expanding into multiple audiences before baseline performance is reliable.

Days 31-60: Expand into segmented variants

Launch one segment-specific version for a high-value audience. Keep core structure consistent while adapting examples, objections, and CTA framing.

Days 61-90: Scale and systematize

Promote the best-performing variant, archive weak experiments, and strengthen your prompt library with proven patterns. At this stage, operational consistency becomes a major growth advantage.

Common Failure Modes and How to Fix Them

Failure mode: publishing raw first drafts

Raw drafts often look polished but carry generic claims and weak decision logic. Introduce a mandatory editing and QA gate before any page goes live.

Failure mode: overpromising without proof

Unverified claims may increase clicks, but they erode trust once visitors start evaluating the offer. Replace broad statements with realistic outcomes supported by concrete evidence.

Failure mode: excessive CTA options

Too many next steps create friction and lower conversion confidence. Keep one dominant CTA and treat secondary actions as supportive paths.

Failure mode: weak mobile execution

If mobile readability is poor, visitors lose interest before they reach your proof or CTA. Test real devices, compress media, and simplify first-screen layout.

Failure mode: no experiment discipline

Multiple simultaneous changes produce noisy results and unclear learning. Run one meaningful test at a time with documented hypotheses and outcomes.

Failure mode: metric tunnel vision

Page views and click-through rates can look healthy while lead quality declines. Add qualified inquiry share and downstream conversion metrics to evaluation.

Advanced Operational Controls for Multi-Page AI Programs

Teams that run many landing pages in parallel need stronger controls than one-off builders. Without operational standards, quality drifts between campaigns and debugging becomes expensive because every page behaves differently.

Start with ownership and portability rules. Each page should have a clear owner, a documented source of truth for copy assets, and an export policy so critical campaign pages are not trapped in disconnected tools or undocumented workflows.

Security and reliability checks should be explicit in the launch process. Validate script load order, form endpoint behavior, lead routing, and error handling before traffic scales. Operational failures can erase gains from strong messaging because missed leads are rarely recovered.

Approval workflow design matters too. Define who signs off on claims, proof accuracy, and compliance-sensitive language. A short pre-launch approval chain is faster than emergency fixes after the page has already collected low-quality or risky traffic.

Performance Dashboard Design That Connects to Revenue

A useful dashboard should reflect business outcomes, not vanity metrics. AI generation increases output volume, so teams need a way to see which pages create qualified opportunities and which pages only generate surface engagement.

Build reporting in three layers. The first layer measures attention signals such as page sessions and CTA click-through. The second layer measures behavior quality such as form completion quality and disqualification rate. The third layer tracks downstream impact such as pipeline contribution, close relevance, and time to sales response.

Review this dashboard weekly during active campaigns and monthly for baseline pages. Weekly reviews support fast iteration, while monthly reviews identify strategic drift and help teams retire underperforming variants before they consume additional budget.

Operational reporting becomes most useful when paired with decision rules. For example, if a page has strong CTR but weak qualified inquiry share, prioritize message specificity and proof quality before spending more on distribution.
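A decision rule like this can be written down so that reviews apply it the same way every week. In the sketch below, only the first rule (strong CTR with weak qualified inquiry share) comes from the text above. The threshold values, the `PageMetrics` fields, and the other two branches are illustrative assumptions that each team would replace with its own baselines.

```python
from dataclasses import dataclass

ACTION_WAIT = "collect more traffic before judging the page"
ACTION_FIX_MESSAGE = "improve message specificity and proof before adding spend"
ACTION_FIX_ATTENTION = "revisit first-screen relevance and CTA clarity"
ACTION_HOLD = "keep the page stable and consider scaling distribution"


@dataclass
class PageMetrics:
    sessions: int
    cta_clicks: int
    inquiries: int
    qualified_inquiries: int


def next_action(
    metrics: PageMetrics,
    ctr_floor: float = 0.05,        # assumed threshold, not a benchmark
    qualified_floor: float = 0.40,  # assumed threshold, not a benchmark
) -> str:
    """Map layer-one and layer-two metrics to one recommended next step."""
    if metrics.sessions == 0:
        return ACTION_WAIT

    ctr = metrics.cta_clicks / metrics.sessions
    qualified_share = (
        metrics.qualified_inquiries / metrics.inquiries if metrics.inquiries else 0.0
    )

    # Rule from the text: attention is fine, but lead quality is weak.
    if ctr >= ctr_floor and qualified_share < qualified_floor:
        return ACTION_FIX_MESSAGE
    # Assumed rule: the page is not earning clicks in the first place.
    if ctr < ctr_floor:
        return ACTION_FIX_ATTENTION
    # Assumed rule: both layers look healthy.
    return ACTION_HOLD


if __name__ == "__main__":
    page = PageMetrics(sessions=1000, cta_clicks=80, inquiries=20, qualified_inquiries=4)
    print(next_action(page))
```

Keeping the thresholds as parameters makes it easy to tighten them per campaign without rewriting the rule itself.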

FAQ: AI Landing Page Generator Strategy

What is an AI landing page generator best used for?

It is best used for speeding up draft creation and experimentation. The strongest results come when teams combine generation speed with human strategy, proof validation, and conversion QA.

Can free AI landing page generators be enough for a startup?

They can be enough for early concept testing and initial message exploration. Once paid traffic and lead quality become priorities, limited customization and weaker controls often become costly.

How many drafts should I generate before choosing one?

Three to five structured variants are usually enough for a first pass. More variants can help later, but only if each draft tests a clear strategic angle rather than random copy differences.

Do AI-generated landing pages rank in search?

They can rank when content is original, intent-matched, and technically sound. Strong structure, accurate metadata, and clear answers to user questions are more important than generation method.

How do I prevent generic AI copy?

Use constrained prompts with audience, outcome, and tone requirements, then run a focused human edit for specificity and brand voice. Generic wording usually survives only when review is rushed.

What proof should I include on an AI-generated page?

Use evidence that reduces real decision risk: role-relevant testimonials, concise outcomes, and process transparency. Proof should support your main claim and appear before major commitment requests.

How often should I update an AI landing page?

Weekly light updates and monthly strategic reviews are a practical cadence. Frequent small improvements generally outperform occasional large rewrites.

What is the biggest conversion mistake with AI pages?

The biggest mistake is treating generation as optimization. Draft creation is only the first step, and performance usually depends on the quality of editing, proof, and testing discipline.

Should I personalize landing pages for each segment?

Personalization helps when your baseline message is already stable. Segment variants should adapt examples and objections while preserving core structure and brand consistency.

Which metrics matter most after launch?

Track qualified inquiry share, form completion quality, and downstream conversion by source. These indicators usually reveal business impact better than top-of-funnel metrics alone.

Final Takeaway

An AI landing page generator is most valuable when used inside a disciplined system: clear strategy, high-quality prompts, human editorial review, and continuous optimization.

Teams that combine speed with rigor ship faster and learn faster, which is the real advantage in competitive markets. Unicorn Platform works well in this model because it supports rapid publishing while keeping structure, editing control, and iterative testing practical for non-technical and cross-functional teams.